-

When training on Irish, English and possibly other languages, Chung et al. (2020) ["Improving Multilingual Models with Language-Clustered Vocabularies"](https://www.aclweb.org/anthology/2020.emnlp-main…

-

This is a Chinese NL2SQL dataset (https://github.com/ZhuiyiTechnology/TableQA). It has the same format as WikiSQL, with one small difference:

"sql": [2]. It uses a list to wrap the value.

When I loa…
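One way to handle the list-wrapped value described above is to unwrap one-item lists before passing records to code that expects the WikiSQL layout. The following is only a minimal sketch: the helper names `unwrap_singleton` and `normalize_record` and the example record are hypothetical, and the real TableQA schema may nest this field differently.

```python
from typing import Any


def unwrap_singleton(value: Any) -> Any:
    """Return the sole element of a one-item list; leave anything else unchanged."""
    if isinstance(value, list) and len(value) == 1:
        return value[0]
    return value


def normalize_record(record: dict, keys: tuple = ("sql",)) -> dict:
    """Return a shallow copy of `record` with one-item lists unwrapped under `keys`."""
    out = dict(record)  # copy so the original record is not modified
    for key in keys:
        if key in out:
            out[key] = unwrap_singleton(out[key])
    return out


# Hypothetical record that mirrors only the '"sql": [2]' shape quoted in the issue.
example = {"question": "placeholder question", "sql": [2]}
normalized = normalize_record(example)
```

Lists with more than one element are left untouched, so the helper does not silently drop values when a field really holds several entries.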

-

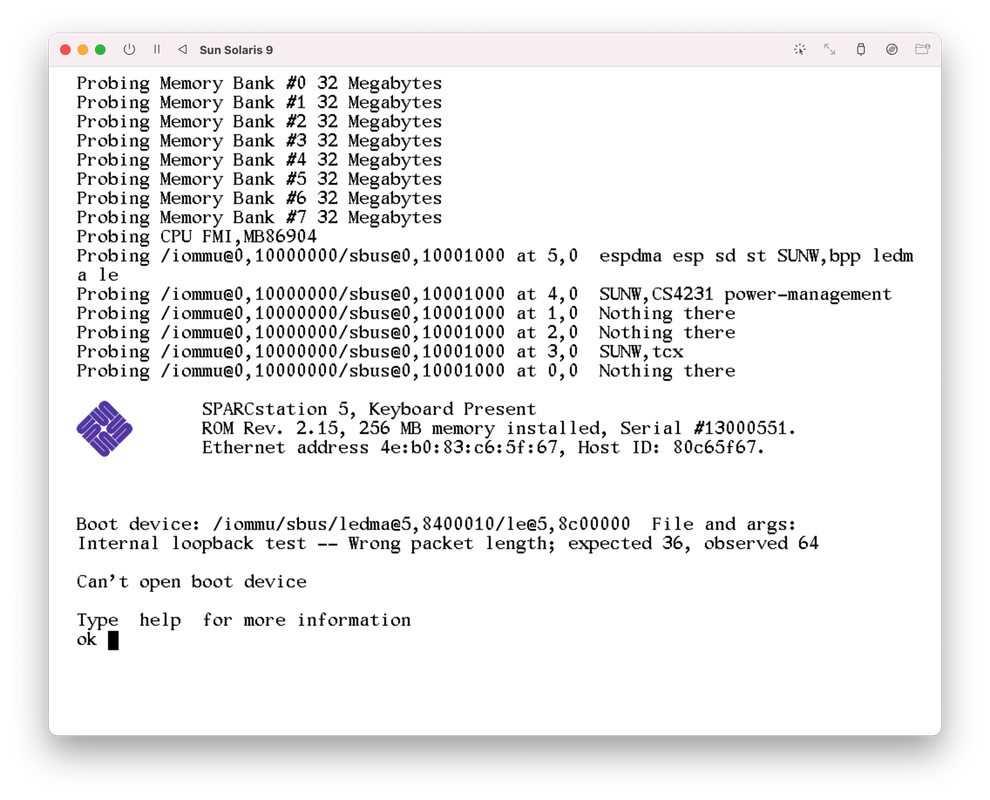

An error shows up right after downloading it. I don't really know what's wrong.

[](https…

-

#### Description

We aim to simplify the deployment process of our FastAPI application, which serves as an interface to our BERT and FastText models for SMS classification. The current setup process…

-

I have been trying to run the mBERT extraction script for the dataset ca/head_first with bert-base-multilingual-cased. I am faced with the following error trace:

Using bert-base-multilingual-ca…

-

Is it possible to train ALBERT from scratch in another language using a TPU v3 (128 GB)?

Could you give an estimated training time? Days, weeks, months?

What is a reasonable corpus size? 1B words…

-

Thank you for your upload.

I fine-tuned v11 and v14, and it went great. However, when I did the same thing for v1, v2, v3 and v4, I got different error messages, as follows:

$ python finetune_demo…

-

Expand the Arabic experiments to AraBERT (https://github.com/aub-mind/arabert). Test their different models with and without tokenisation.

-

I couldn't find any pointers on how to get the datasets, so I am asking here. This is not an issue related to the implementation.

-

- uid: unsupervised_cross_lingual_representation_learning_at_scale

- type: processed

- description:

- name: Unsupervised Cross-lingual Representation Learning at Scale

- description: This pap…