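Hello, I have recently been looking into third-party jar dependencies in Spark. For some reason the spark-submit `--jars` and `--packages` options have no effect: after the job is submitted it throws a ClassNotFoundException because the MySQL driver class cannot be found. My Spark is not a CDH installation. The environment is Spark on YARN, with test versions spark-2.4.1 + hadoop-2.9.2.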

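```scala
import org.apache.spark.sql.SparkSession

val spark = SparkSession
  .builder()
  .appName("Spark SQL basic example")
  .getOrCreate()

// Needed for the 'id / 'salary column syntax and for the encoder used by map
import spark.implicits._

// Read the MySQL table over JDBC (this is where com.mysql.jdbc.Driver must be loadable)
val jdbcDF = spark.read
  .format("jdbc")
  .option("url", "jdbc:mysql://CentOS:3306/test")
  .option("dbtable", "t_user")
  .option("user", "root")
  .option("password", "root")
  .load()

jdbcDF.select('id, 'salary)
  .groupBy('id)
  .sum("salary")
  .map(row => row.getInt(0) + "\t" + row.getDouble(1))
  .write.text("hdfs:///aa")
```

The job is submitted as follows: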

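```sh
./bin/spark-submit --master yarn --num-executors 2 --executor-cores 2 \
  --class <main class> \
  --packages mysql:mysql-connector-java:5.1.47 \
  /root/spark-rdd-1.0-SNAPSHOT.jar
```

The MySQL dependency is not bundled into spark-rdd-1.0-SNAPSHOT.jar, because the point was to test `--packages`, and that apparently failed. I then tried the `--jars` option with the path to the jar and got the same result. The log shows the MySQL driver jar being uploaded to Spark, but when the program reaches DriverWrapper it throws a ClassNotFoundException saying com.mysql.jdbc.Driver cannot be loaded.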

I ran the same script with spark-1.6.0 on CDH5 and it worked. Any pointers would be much appreciated, thanks!

Rough execution flow of Spark

Relationship between tasks and partitions

lineage

dependencies between RDDs

stage

fault-tolerant

Difference between lineage and the DAG

RDD (Resilient Distributed Dataset)

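In-memory computation: with the MEMORY_AND_DISK storage level, least recently used (LRU) blocks are evicted to disk when partitions do not fit in memory. Under MEMORY_ONLY, partitions that do not fit are not cached and are recomputed when needed.

Lazy evaluation: an RDD is not computed until an action is executed.

Fault tolerance

Immutability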
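The laziness, immutability and lineage-based fault tolerance listed above can be shown with a toy, single-machine stand-in. `ToyRDD`, `parallelize`, `lineage` and `collect` are hypothetical names chosen to echo Spark's; this is not Spark's implementation. A transformation only records a function and returns a new object. An action replays the whole recorded chain from the source, which is also how a result that was never cached can be rebuilt.

```scala
// Hypothetical toy, NOT Spark's RDD class: everything runs in one JVM.
final class ToyRDD[A] private (val lineage: List[String], compute: () => Vector[A]) {
  // Transformations return a new ToyRDD and never touch the parent (immutability).
  // They only wrap the parent's compute function (laziness).
  def map[B](f: A => B): ToyRDD[B] =
    new ToyRDD[B]("map" :: lineage, () => compute().map(f))

  def filter(p: A => Boolean): ToyRDD[A] =
    new ToyRDD[A]("filter" :: lineage, () => compute().filter(p))

  // Action: the recorded chain is evaluated only here. Each call replays the
  // chain from the source, so an uncached result can always be recomputed.
  def collect(): Vector[A] = compute()
}

object ToyRDD {
  def parallelize[A](data: Seq[A]): ToyRDD[A] =
    new ToyRDD[A](List("parallelize"), () => data.toVector)
}
```

Counting how often the `map` function runs makes the laziness visible. The counter stays at 0 until `collect()` is called.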

spark persist

Adapted from https://github.com/JerryLead/SparkInternals/blob/master/markdown/6-CacheAndCheckpoint.md

cache
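The LRU eviction of cached blocks mentioned under RDD can be sketched with an access-ordered `java.util.LinkedHashMap`, the structure Spark's MemoryStore uses for its entries. `ToyBlockStore` is a hypothetical, greatly simplified store. It counts blocks rather than bytes and spills the least recently used block to a map standing in for disk, roughly like the MEMORY_AND_DISK level.

```scala
import java.util.{LinkedHashMap => JLinkedHashMap}
import scala.collection.mutable

// Hypothetical, greatly simplified block store: capacity is counted in blocks, not bytes.
final class ToyBlockStore(maxBlocksInMemory: Int) {
  // accessOrder = true: iteration goes from least to most recently used entry
  private val memory = new JLinkedHashMap[String, Vector[Int]](16, 0.75f, true)
  // Stand-in for disk storage of blocks evicted from memory
  val disk: mutable.Map[String, Vector[Int]] = mutable.Map.empty

  def put(id: String, block: Vector[Int]): Unit = {
    memory.put(id, block)
    // Evict least recently used blocks until the memory budget is respected
    while (memory.size() > maxBlocksInMemory) {
      val eldest = memory.entrySet().iterator().next()
      disk(eldest.getKey()) = eldest.getValue()
      memory.remove(eldest.getKey())
    }
  }

  // A memory hit also refreshes the block's recency (access-ordered map)
  def get(id: String): Option[Vector[Int]] =
    Option(memory.get(id)).orElse(disk.get(id))

  // Block ids currently in memory, least recently used first
  def inMemory: List[String] = {
    val it = memory.keySet().iterator()
    val ids = List.newBuilder[String]
    while (it.hasNext()) ids += it.next()
    ids.result()
  }
}
```

With room for two blocks, reading `rdd_0_0` before inserting a third block makes `rdd_0_1` the LRU victim, so it moves to the "disk" map.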

Difference between cache and checkpoint

Difference from checkpointing in MapReduce

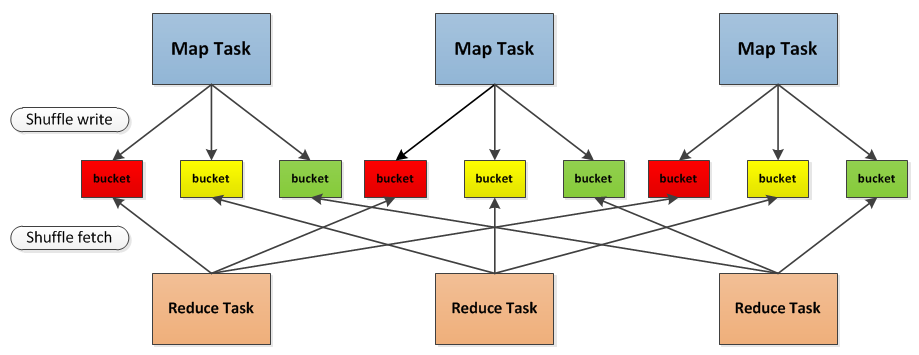

spark shuffle

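Adapted from SparkInternals-shuffleDetails

In short, a shuffle is the process of redistributing data across partitions. For example, reduceByKey() has to find all the values of every key across all partitions and bring them together to aggregate them.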
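The reduceByKey() example above can be sketched on plain Scala collections in three steps:

1. Combine the values per key inside each map-side partition.
2. Route each key to a reducer by hash.
3. Let every reducer merge what it fetched from all map outputs.

`ShuffleSketch` is a hypothetical name. The routing function follows the same non-negative hashCode-modulo rule as Spark's HashPartitioner, but the disk writes and network fetches of a real shuffle are left out.

```scala
object ShuffleSketch {
  // Non-negative key.hashCode modulo the number of reducers (HashPartitioner's rule)
  def reducerFor(key: Any, numReducers: Int): Int = {
    val m = key.hashCode % numReducers
    if (m < 0) m + numReducers else m
  }

  // Merge all values of each key with f
  private def combine[K, V](pairs: Seq[(K, V)], f: (V, V) => V): Map[K, V] =
    pairs.groupBy(_._1).map { case (k, kvs) => k -> kvs.map(_._2).reduce(f) }

  // input: one Seq of (key, value) per map-side partition
  // returns: one Map per reducer (= one output partition)
  def reduceByKey[K, V](input: Seq[Seq[(K, V)]], numReducers: Int)(f: (V, V) => V): Seq[Map[K, V]] = {
    // Map side: pre-aggregate inside each partition (map-side combine)
    val mapOutputs: Seq[Map[K, V]] = input.map(part => combine(part, f))
    // Reduce side: reducer r fetches its bucket from every map output, then merges
    (0 until numReducers).map { r =>
      val fetched = mapOutputs.flatMap(_.filter { case (k, _) => reducerFor(k, numReducers) == r })
      combine(fetched, f)
    }
  }
}
```

Each key ends up in exactly one reducer's output. Because of the map-side combine, at most one pre-aggregated value per key per map partition crosses the "network".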

The detailed process is shown in the figure below.

How the reducer side fetches data

Performance tuning

This blog post needs another read: link

Spark resource allocation

executor cores: set with --executor-cores on the command line (or spark.executor.cores in the configuration). A value of 5 means each executor can run at most five tasks at the same time.

executor memory: the executor heap size is controlled by the --executor-memory flag or the spark.executor.memory property.

spark.yarn.executor.memoryOverhead is the amount of off-heap memory per executor. It is used for VM overheads, interned strings and other native overheads. Its default is max(384 MB, 10% of spark.executor.memory), so the physical memory of each executor container must cover both spark.yarn.executor.memoryOverhead and the executor memory. Since Spark 2.3 the property is named spark.executor.memoryOverhead, and the spark.yarn.* name is deprecated.

spark memory model link

number of executors: the --num-executors command-line flag or the spark.executor.instances configuration property controls the number of executors requested.

yarn.nodemanager.resource.memory-mb controls the maximum sum of memory used by the containers on each node.

yarn.nodemanager.resource.cpu-vcores controls the maximum sum of cores used by the containers on each node.
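The settings above reduce to simple arithmetic. This sketch uses hypothetical object and method names. It computes:

- the default overhead, max(384 MB, 10% of executor memory);
- the container size YARN has to grant;
- how many such containers fit on one node under yarn.nodemanager.resource.memory-mb and yarn.nodemanager.resource.cpu-vcores;
- how many waves of tasks run when each core executes one task at a time.

It assumes one core per task and ignores YARN's rounding of requests up to yarn.scheduler.minimum-allocation-mb, as well as the ApplicationMaster's own container.

```scala
object ExecutorSizing {
  // Default overhead: max(384 MB, 10% of executor memory)
  def overheadMb(executorMemoryMb: Long): Long =
    math.max((0.10 * executorMemoryMb).toLong, 384L)

  // Memory YARN must grant per executor container: heap + overhead
  // (rounding up to yarn.scheduler.minimum-allocation-mb is not modeled)
  def containerMb(executorMemoryMb: Long): Long =
    executorMemoryMb + overheadMb(executorMemoryMb)

  // Executor containers that fit on one NodeManager, bounded by both
  // yarn.nodemanager.resource.memory-mb and yarn.nodemanager.resource.cpu-vcores
  def executorsPerNode(nodeMemoryMb: Long, nodeVcores: Int,
                       executorMemoryMb: Long, executorCores: Int): Long =
    math.min(nodeMemoryMb / containerMb(executorMemoryMb), (nodeVcores / executorCores).toLong)

  // Each core runs one task at a time, so tasks run in ceil(tasks / slots) waves
  def taskWaves(numTasks: Int, numExecutors: Int, executorCores: Int): Int = {
    val slots = numExecutors * executorCores
    (numTasks + slots - 1) / slots
  }
}
```

The spark-submit script later in these notes sets spark.executor.memory=450m, 3 cores and 4 instances. With those values, `containerMb(450)` is 834 MB, there are 12 task slots, and `taskWaves(200, 4, 3)` gives 17 waves.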

Resource allocation in batch jobs (Hive on Spark, Hive on MR)

Spark parallelism

How to set the parallelism
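The Spark tuning guide's rule of thumb is 2-3 tasks per CPU core in the cluster. The minimal sketch below covers that arithmetic and builds the two `--conf` flags that carry the chosen value:

- spark.default.parallelism, for RDD shuffles such as reduceByKey without an explicit partition count;
- spark.sql.shuffle.partitions, for DataFrame/SQL shuffles.

The object `Parallelism` and its methods are hypothetical helpers.

```scala
object Parallelism {
  // Rule of thumb from the Spark tuning guide: 2-3 tasks per CPU core.
  // Returns the (low, high) suggested number of partitions.
  def suggestedRange(numExecutors: Int, executorCores: Int): (Int, Int) = {
    val totalCores = numExecutors * executorCores
    (2 * totalCores, 3 * totalCores)
  }

  // spark-submit arguments that pass values to both RDD and SQL shuffles
  def submitConfs(rddParallelism: Int, sqlShufflePartitions: Int): Seq[String] =
    Seq(
      "--conf", s"spark.default.parallelism=$rddParallelism",
      "--conf", s"spark.sql.shuffle.partitions=$sqlShufflePartitions"
    )
}
```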

Spark performance tuning

Meituan-Dianping's Spark tuning guide (basics)

Data skew

One partition is much larger than the others. A stage takes as long as its slowest task (one task per partition), so the oversized partition makes the whole stage slow.
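The effect can be reproduced with a small simulation. It hash-partitions a key set in which one hot key dominates and compares the partition sizes. The model assumes every record costs the same and all partitions run concurrently, so the stage time is set by the largest partition. `SkewDemo` and its methods are hypothetical. `partitionOf` uses the same non-negative hashCode-modulo rule as Spark's HashPartitioner.

```scala
object SkewDemo {
  // Non-negative key.hashCode modulo numPartitions, as in Spark's HashPartitioner
  def partitionOf(key: Any, numPartitions: Int): Int = {
    val m = key.hashCode % numPartitions
    if (m < 0) m + numPartitions else m
  }

  // Number of records that land in each partition
  def partitionSizes(keys: Seq[Any], numPartitions: Int): Array[Int] = {
    val sizes = Array.fill(numPartitions)(0)
    keys.foreach(k => sizes(partitionOf(k, numPartitions)) += 1)
    sizes
  }

  // Simplified model: equal cost per record and all tasks running concurrently,
  // so the stage finishes when its largest partition does
  def stageTime(sizes: Array[Int], costPerRecord: Double): Double =
    sizes.max * costPerRecord
}
```

With 900 records for one key and 100 spread over distinct keys, every "hot" record goes to the same partition. That partition holds at least 900 of the 1000 records and dominates the stage time.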

Role of the driver

Differences between Spark SQL and Presto

Differences between Hive on Spark, Hive on MR and Spark SQL

On the differences between Spark and MR

spark-submit

```sh
#!/bin/sh

set -o nounset
# Abort the script on the first error
set -o errexit

export SPARK_HOME=
lib_path=
tempView=mblTempView
outputDir=/user/wutong/mblOutput
mblFile=mbl.txt

hdfs dfs -rm -r -f ${outputDir}

${SPARK_HOME}"/bin/spark-submit" \
  --class com.mucfc.cms.spark.job.mainClass.PushMblToMerchantShellMain \
  --master yarn \
  --deploy-mode client \
  --queue root \
  --driver-class-path ${lib_path}"" \
  --jars ${lib_path}"" \
  --conf spark.default.parallelism=200 \
  --conf spark.sql.shuffle.partitions=400 \
  --conf spark.executor.cores=3 \
  --conf spark.executor.memory=450m \
  --conf spark.executor.instances=4 \
  ${lib_path}"cms-sparkintegration.jar" "$@" \
  -file_name \
  -insertSql "insert overwrite table crm_appl.mbl_filter select ${tempView}" \
  -tempView ${tempView} \
  -outputSql "select * from crm_app.mbl_filter" \
  -showSql "select * from ${tempView}" \
  -outputDir ${outputDir} \
  -inputPartitionNum 200 \
  -outputPartitionNum 200 \
  -inputFlag true \
  -appNme PushMblToMerchantShellMain \
  -local_file_path ${lib_path}${mblFile} \
  -remote_file_path /user/wutong/mblFile \
  -countForShortMbl 3000 \
  -encrypt_type md5
```

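```scala
// Inspect how Spark plans a SQL query ("xxxsql" is a placeholder)
spark.sql("xxxsql").queryExecution  // a val (no parentheses): parsed, analyzed, optimized and physical plans
spark.sql("xxxsql").explain()       // prints the physical plan
spark.sql("xxxsql").explain(true)   // prints all plans (extended)
```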