Thanks for the detailed write-up @dhalbert! I'm not sure why it varies between Notepad and Notepad++, but I do have some general ideas.

First a little background. With mass storage devices the host OS is responsible for maintaining the on-disk file system metadata. This is super common and well supported across OSes. The alternative is the Media Transfer Protocol which is more complex and relies on the device maintaining the underlying file system. Android phones do this so that they can read and write files at the same time as things are changed over USB. However, MacOS in particular doesn't have built-in support for MTP. So, CircuitPython is a mass storage device instead. Relying on the OS has its downsides though.

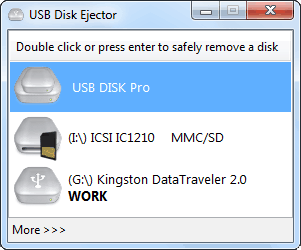

I suspect what you are running into is caching that's done on the OS side. There's no requirement that the OS write immediately to the disk upon save (though it's common to). All it has to do is give the appearance that it's written from within Windows. "Safely removing" is Windows' way of saying "Force me to flush the cache." So, try doing that next time it's inconsistent.

You are right that 512 bytes is important. That's the size of each filesystem block. So, I think Windows is writing the first block on save but waiting on the second. If that gap is large enough, then the auto-reset will trigger and show an error. The OS can also do this for the metadata parts of the file system, which may have led to your OSError.
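To make the block arithmetic concrete, here is a minimal sketch. It assumes only the 512-byte block size stated above and the 556-byte file size from the report; the helper names are made up for illustration, and it ignores any larger allocation unit the filesystem might use.

```python
BLOCK_SIZE = 512  # filesystem block size discussed in this thread


def blocks_needed(file_size, block_size=BLOCK_SIZE):
    """Number of blocks needed to hold file_size bytes (ceiling division)."""
    return (file_size + block_size - 1) // block_size


def block_index(offset, block_size=BLOCK_SIZE):
    """Zero-based index of the block that contains the given byte offset."""
    return offset // block_size


size = 556  # size of the main.py from the report
print(blocks_needed(size))        # blocks the file spans
print(size - BLOCK_SIZE)          # bytes that spill into the second block
print(block_index(BLOCK_SIZE))    # first byte past the boundary lands in block 1
```

If the second block is written late, everything after byte 511 would be missing or stale when the auto-reset runs, which would fit an error reported near the 512-byte boundary.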

Neither of these things should be fatal to the filesystem though. You should be able to resave or "safely remove" to cause Windows to flush its cache and make the actual stored file system consistent.
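If you want to test the caching theory from a script on the host rather than from an editor, something like the following might help. This is only a sketch: `write_and_flush` is a hypothetical helper, not part of CircuitPython or Notepad++. `os.fsync` asks the OS to commit the file's cached data to the device, but whether that also flushes all of the filesystem metadata on a removable drive is up to the OS, so "safely remove" is still the more thorough option.

```python
import os


def write_and_flush(path, text):
    """Write text to path, then ask the OS to push it out to the device."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(text)
        f.flush()             # empty Python's own buffer into the OS
        os.fsync(f.fileno())  # ask the OS to commit its cached data for this file
```

For example, calling `write_and_flush(r"D:\CIRCUITPY\main.py", source)` with the file contents in `source` would write the file in one go and request a flush before the function returns.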

MicroPython has had similar reports because they allow writing to the file system from Python even while the OS is writing to it as well, which can definitely lead to corruption. They chose to allow this for user flexibility. In CircuitPython the file system is never writeable from a user's Python code, which should reduce the chance of FS corruption. (It's still possible by disconnecting the device before the OS's cache has been written.) We hope to change this to be toggleable (writeable over USB or from CircuitPython but not both) but haven't yet. It'll probably be done when we finish the SD card support.

So, next time it happens, try doing a "safe remove" on the device and see if that fixes the file for CircuitPython. If that works, then it's the best we can do on the CircuitPython side.

I have had problems writing to the onboard filesystem from Windows. I sometimes see complete filesystem corruption, and sometimes just problems with one file. See https://forums.adafruit.com/viewtopic.php?f=60&t=109687 for background.

Specific scenario, showing a file that CPy has trouble reading:

The file in question is here: main.py.txt. (Renamed from `main.py` to `main.py.txt` so that GitHub will take it as an attachment.) I wrote this Python code while doing some CPy I/O testing, and the specific code probably doesn't have anything to do with this problem. However, this file is 556 bytes long, so it's more than one 512-byte block, which does seem to be important.

If I write this file to `D:\CIRCUITPY\main.py` using `NOTEPAD.EXE`, it runs just fine. It prints "5" and the button-reading loop works.

If I write this exact same file using Notepad++ (a very common lightweight editor used on Windows) it does not work. The serial port shows:

Line 26 is right around the 512-byte boundary in the file.

In the REPL, I can read the file I wrote with `NOTEPAD.EXE`:

But if I write the same file again with Notepad++, I get an OSError when trying to read it in the REPL:

I can `TYPE` either file from `CMD.EXE`, and if I use `od` (from GnuWin32) to look at the characters in the file, they are identical.

I have repeated the cycle of writing with `NOTEPAD.EXE` and then Notepad++ and I consistently have the error only with Notepad++. Once or twice `TYPE` complained it did not have access to the bad version of the file, but I cannot reproduce that problem consistently. If I look at the file properties in Windows Explorer, the two versions have identical properties and sizes.

I've looked at the Notepad++ source code where it writes files. It looks innocuous: it uses `::fwrite()`. It does support UTF-8, but the file in question is all ASCII, and I set up Notepad++ to write it as ANSI.

This appears to be some oddity or corruption about how Windows is writing to the CPy filesystem. I've inquired on the Notepad++ forum about whether it does anything unusual when writing files, and will report back if I hear anything.
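For anyone who wants to repeat the byte-for-byte comparison I did with `od` without installing GnuWin32, here is a rough host-side Python sketch. `first_difference` is a name I made up for illustration. Note that reads on the host may be served from the OS's cache, so identical results here do not prove the bytes on the device are identical.

```python
def first_difference(path_a, path_b):
    """Return the offset of the first differing byte, or None if identical."""
    with open(path_a, "rb") as fa, open(path_b, "rb") as fb:
        a, b = fa.read(), fb.read()
    for offset, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return offset
    if len(a) != len(b):
        # One file is a prefix of the other; they differ where the shorter ends.
        return min(len(a), len(b))
    return None
```

Save one copy with each editor under different names, then pass both paths to `first_difference`. A result of `None` means the host sees the same bytes in both files.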

The workaround is not to use Notepad++, but it seems important to figure out what's going wrong so other users will not have the same problem. I did some websearching and haven't turned up any similar reports about Notepad++, FatFs, or MicroPython.