As commented by @xiaoxiang781216:

> can we reduce the board on Linux host to keep macOS/Windows? it's very easy to break these host if without these basic coverage.

I suggest that we monitor the GitHub Cost after disabling macOS and Windows Jobs. It's possible that macOS and Windows Jobs are contributing a huge part of the cost. We could re-enable and simplify them after monitoring.

Hi All: We have an ultimatum to drastically reduce our usage of GitHub Actions, or our Continuous Integration will halt totally in Two Weeks. Here's what I'll implement within 24 hours for the `nuttx` and `nuttx-apps` repos:

1. When we submit or update a Complex PR that affects All Architectures (Arm, RISC-V, Xtensa, etc): CI Workflow shall run only half the jobs. Previously CI Workflow would run `arm-01` to `arm-14`, now we will run only `arm-01` to `arm-07`. (This will reduce GitHub Cost by 32%)

2. When the Complex PR is Merged: CI Workflow will still run all jobs `arm-01` to `arm-14`.

   (Simple PRs with One Single Arch / Board will build the same way as before: `arm-01` to `arm-14`)

3. For NuttX Admins: Our Merge Jobs are now at github.com/nuttxpr/nuttx. We shall have only Two Scheduled Merge Jobs per day.

   I shall quickly Cancel any Merge Jobs that appear in the `nuttx` and `nuttx-apps` repos. Then at 00:00 UTC and 12:00 UTC: I shall start the Latest Merge Job at `nuttxpr`. (This will reduce GitHub Cost by 17%)

4. macOS and Windows Jobs (msys2 / msvc): They shall be totally disabled until we find a way to manage their costs. (GitHub charges 10x premium for macOS runners, 2x premium for Windows runners!)

Let's monitor the GitHub Cost after disabling macOS and Windows Jobs. It's possible that macOS and Windows Jobs are contributing a huge part of the cost. We could re-enable and simplify them after monitoring.

(This must be done for BOTH `nuttx` and `nuttx-apps` repos. Sadly the ASF Report for GitHub Runners doesn't break down the usage by repo, so we'll never know how much macOS and Windows Jobs are contributing to the cost. That's why we need https://github.com/apache/nuttx/pull/14377)

(Wish I could run NuttX CI Jobs on my M2 Mac Mini. But the CI Script only supports Intel Macs, sigh. Buy a Refurbished Intel Mac Mini?)
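To make the job-halving rule from the plan above concrete, here's a rough Python sketch. It is only an illustration, not the actual CI Workflow: `select_jobs` and its parameters are hypothetical names, and it assumes that Simple PRs and Merges simply receive the same job list as before.

```python
def select_jobs(all_jobs, is_complex_pr, is_merge):
    """Pick the CI jobs to run for one architecture group.

    Hypothetical helper that mirrors the rule described in the post;
    it is not the real NuttX CI Workflow.
    """
    if is_merge or not is_complex_pr:
        # Merges and Simple PRs are unchanged: run every job passed in.
        return list(all_jobs)
    # Complex PR that is not merged yet: run only the first half.
    return list(all_jobs[: len(all_jobs) // 2])


# arm-01 .. arm-14, as named in the post
arm_jobs = [f"arm-{n:02d}" for n in range(1, 15)]

# Complex PR, not merged: expect arm-01 .. arm-07
print(select_jobs(arm_jobs, is_complex_pr=True, is_merge=False))

# Complex PR, merged: expect the full list arm-01 .. arm-14
print(select_jobs(arm_jobs, is_complex_pr=True, is_merge=True))
```

The same rule would apply to the other architecture groups, assuming their jobs are numbered in a similar way.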

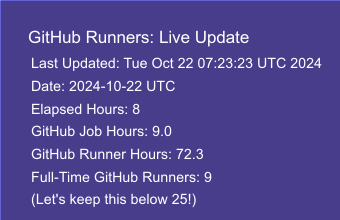

We have done an Analysis of CI Jobs over the past 24 hours:

https://docs.google.com/spreadsheets/d/1ujGKmUyy-cGY-l1pDBfle_Y6LKMsNp7o3rbfT1UkiZE/edit?gid=0#gid=0

Many CI Jobs are Incomplete: We waste GitHub Runners on jobs that eventually get superseded and cancelled

When we Halve the CI Jobs: We reduce the wastage of GitHub Runners

Scheduled Merge Jobs will also reduce wastage of GitHub Runners, since most Merge Jobs don't complete (only 1 completed yesterday)
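One way to put a number on this wastage is to weight each job's runner minutes by the runner premium and see what share went to jobs that never completed. The sketch below is only an illustration: the `JobRun` record, the function names and the sample figures are all hypothetical (they are not taken from the spreadsheet), and the Linux baseline of 1x is an assumption. Only the 10x macOS and 2x Windows premiums come from the post above.

```python
from dataclasses import dataclass

# Premiums quoted in the post; the Linux baseline of 1 is an assumption.
MULTIPLIER = {"linux": 1, "windows": 2, "macos": 10}


@dataclass
class JobRun:
    name: str
    runner_os: str   # "linux", "windows" or "macos"
    minutes: float   # runner minutes consumed before the job ended
    completed: bool  # False if the job was superseded and cancelled


def weighted_minutes(run: JobRun) -> float:
    """Runner minutes scaled by the premium for that runner type."""
    return run.minutes * MULTIPLIER[run.runner_os]


def wasted_fraction(runs: list[JobRun]) -> float:
    """Share of weighted runner minutes spent on jobs that did not complete."""
    total = sum(weighted_minutes(r) for r in runs)
    if total == 0:
        return 0.0
    wasted = sum(weighted_minutes(r) for r in runs if not r.completed)
    return wasted / total


# Hypothetical sample data, for illustration only.
runs = [
    JobRun("arm-01", "linux", 60, True),
    JobRun("arm-02", "linux", 40, False),
    JobRun("macos", "macos", 10, False),
    JobRun("msys2", "windows", 50, True),
]

print(f"{wasted_fraction(runs):.0%} of weighted runner minutes were wasted")
```

With real data, the records could be filled in from the GitHub Actions job history for each repo.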

See the ASF Policy for GitHub Actions