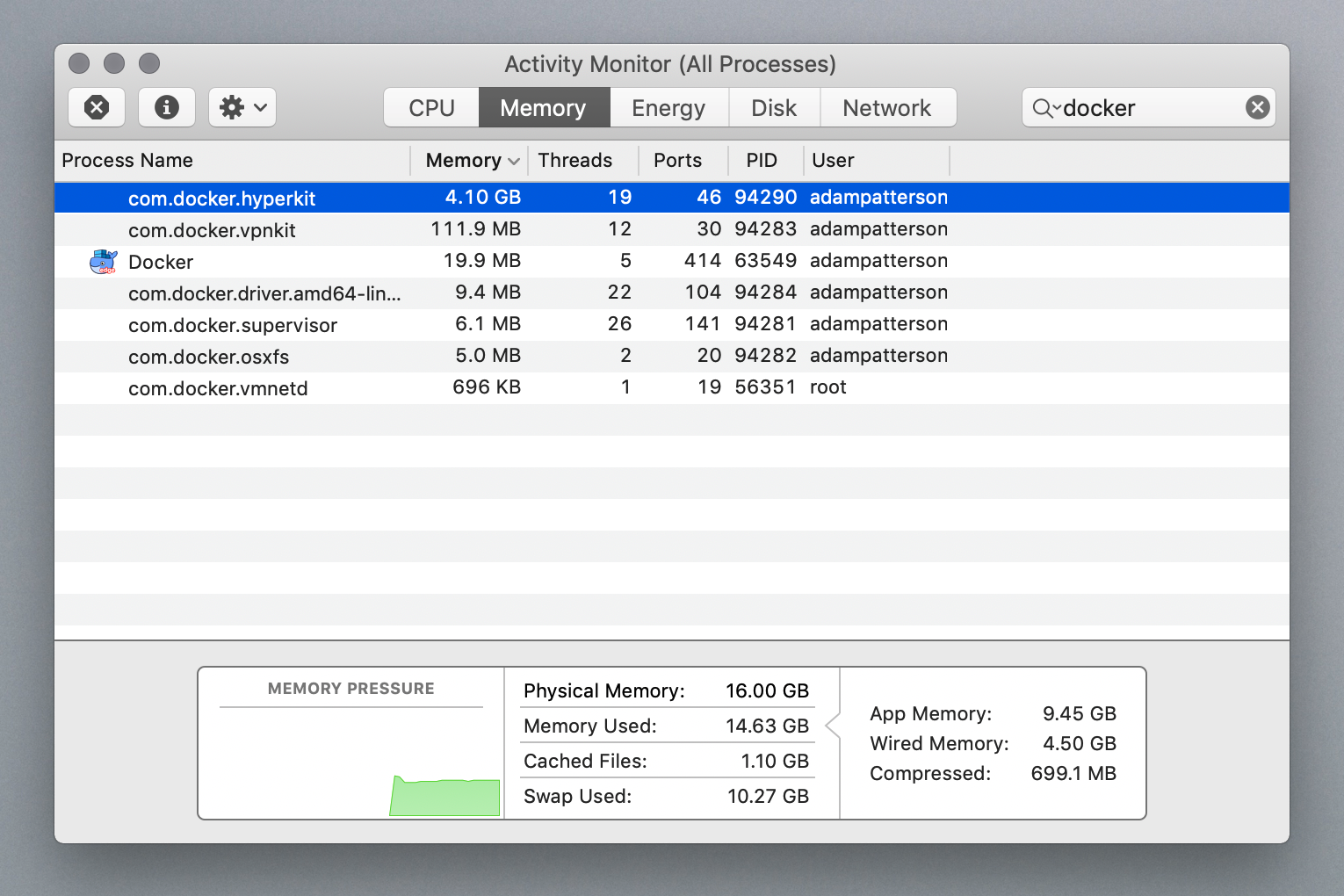

Thanks for your report. Unfortunately, once the Linux kernel inside the VM has touched a region of memory, that region becomes populated RAM in the hyperkit process (through the usual OS page-faulting mechanism). There is no way for the guest kernel to tell the hypervisor, and through it the host, when that RAM is free again, so those regions cannot be turned back into unallocated holes.

However, since that RAM is unused in the guest, nothing in the VM should touch it, and therefore neither should the hyperkit process. I would expect it to eventually be swapped out to disk in favour of keeping actively used data for other processes in RAM, just as it would be for any large but idle process.

It sounds like you are checking the virtual memory size (`vsz` in `ps` output) of the hyperkit process rather than its resident set size (`rss`). The latter should shrink for an idle hyperkit process as other processes request memory and hyperkit's pages are swapped out, whereas the former essentially only grows and does not necessarily represent use of actual RAM.
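
A minimal Python sketch for reading both numbers for a single process, assuming a `ps` that accepts `-o rss= -o vsz= -p PID` (both the macOS and the Linux procps versions do, and both report these columns in KiB). The helper names `parse_rss_vsz` and `rss_and_vsz_kib` are hypothetical, and the hyperkit PID is assumed to be looked up separately and passed on the command line.

```python
import subprocess
import sys


def parse_rss_vsz(ps_output: str) -> tuple[int, int]:
    """Parse the single data line printed by `ps -o rss= -o vsz=` into (rss, vsz)."""
    rss, vsz = (int(field) for field in ps_output.split())
    return rss, vsz


def rss_and_vsz_kib(pid: int) -> tuple[int, int]:
    """Return (rss, vsz) in KiB for `pid`.

    The empty '=' headers make ps omit its header row, so the output
    is just the two numbers.
    """
    out = subprocess.run(
        ["ps", "-o", "rss=", "-o", "vsz=", "-p", str(pid)],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_rss_vsz(out)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Hypothetical usage: python hyperkit_mem.py <hyperkit-pid>
    rss, vsz = rss_and_vsz_kib(int(sys.argv[1]))
    print(f"rss={rss / 1024:.1f} MiB  vsz={vsz / 1024:.1f} MiB")
```

For an idle VM, a large `vsz` paired with an `rss` that falls under memory pressure would match the expected behaviour described in this reply.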

I'm afraid that compacting the vsz is basically a won't-fix issue, so I'm going to close this ticket on that basis. If, however, you observe that the rss does not shrink even while other processes are creating memory pressure (i.e. the memory appears to be somehow locked into RAM and not swappable), please update this ticket with details of the rss patterns you are seeing, and we can reopen it and investigate that angle further.
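
One way to collect the kind of rss pattern requested here is to sample rss at a fixed interval while some other workload creates memory pressure. The sketch below is hypothetical: the function names, the sampling interval, and the `min_drop_kib` threshold are illustrative choices, not anything Docker for Mac provides, and it assumes `ps -o rss= -p PID` reports rss in KiB.

```python
import subprocess
import sys
import time
from typing import Callable


def ps_rss_kib(pid: int) -> int:
    """Read the resident set size (KiB) of `pid` via `ps -o rss= -p PID`."""
    out = subprocess.run(
        ["ps", "-o", "rss=", "-p", str(pid)],
        capture_output=True, text=True, check=True,
    ).stdout
    return int(out.strip())


def sample_rss(read_rss_kib: Callable[[], int], samples: int,
               interval_s: float) -> list[tuple[float, int]]:
    """Collect (elapsed seconds, rss KiB) pairs, sleeping interval_s between reads."""
    start = time.monotonic()
    series = []
    for i in range(samples):
        series.append((time.monotonic() - start, read_rss_kib()))
        if i < samples - 1:
            time.sleep(interval_s)
    return series


def rss_dropped(series: list[tuple[float, int]], min_drop_kib: int) -> bool:
    """True if rss fell by at least min_drop_kib from its peak to the last sample."""
    if not series:
        return False
    peak = max(rss for _, rss in series)
    return peak - series[-1][1] >= min_drop_kib


if __name__ == "__main__" and len(sys.argv) > 1:
    # Hypothetical usage: python sample_rss.py <hyperkit-pid>
    pid = int(sys.argv[1])
    series = sample_rss(lambda: ps_rss_kib(pid), samples=60, interval_s=10.0)
    for elapsed, rss in series:
        print(f"{elapsed:7.1f}s  rss={rss / 1024:.1f} MiB")
    print("rss dropped by >= 512 MiB:", rss_dropped(series, 512 * 1024))
```

Passing the reader in as a callable keeps the sampling logic independent of `ps`, so the same loop could be fed from another source of rss readings.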

Expected behavior

After all the Docker containers have been killed, the com.docker.hyperkit process should release the memory it used and return to its initial state (50 MB).

Actual behavior

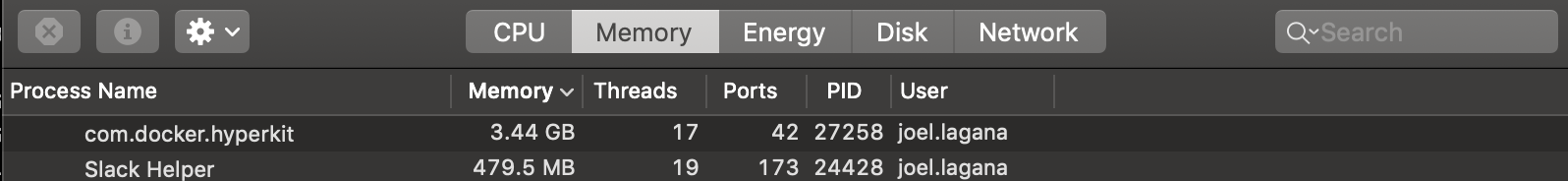

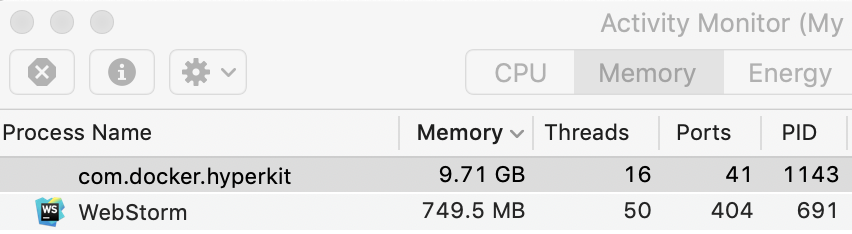

After killing all the Docker containers, the com.docker.hyperkit process is still using 3.49 GB.

Information

- Diagnostic ID: EB6AFE2E-34AA-4617-B849-D79863CDC40C
- Docker for Mac: 1.12.0 (Build 10871)
- macOS: Version 10.11.6 (Build 15G31)
- [OK] docker-cli
- [OK] app
- [OK] moby-syslog
- [OK] disk
- [OK] virtualization
- [OK] system
- [OK] menubar
- [OK] osxfs
- [OK] db
- [OK] slirp
- [OK] moby-console
- [OK] logs
- [OK] vmnetd
- [OK] env
- [OK] moby
- [OK] driver.amd64-linux

Steps to reproduce

1. Use a `docker-compose.yml` like the following:

       version: '2'
       services:
         student:
           image: docker:dind
           ports:

2. Run `docker-compose scale student=10`.

3. Run `docker-compose down`.

4. `docker ps` shows no containers running, but com.docker.hyperkit is still consuming a lot of memory (3.49 GB).

Only restarting the Docker engine's virtual machine (which runs on hyperkit) frees the memory.