I couldn't figure out the best area label to add to this issue. Please help me learn by adding exactly one area label.

Closed Ashuaidehao closed 3 years ago

I couldn't figure out the best area label to add to this issue. Please help me learn by adding exactly one area label.

cc @bartonjs for area.

Tagging subscribers to this area: @bartonjs, @vcsjones, @krwq Notify danmosemsft if you want to be subscribed.

I took a look at this and I don't see any easy wins for performance.

In .NET Core, we don't implement any of the underlying cryptographic algorithms. It defers to functionality built in to the OS. In macOS, this is called SecurityTransforms.

The performance issue seems to be that macOS's implementation is simply not that fast. The bulk of the verification time is spent in SecTransformExecute, which is Apple's own API. I'm seeing anywhere from 9 to 10 milliseconds of execution time to verify 1 P256 signature (hashing time is mostly negligible).

I made a Swift Playground that uses your key, signature, and message and used the same Cryptographic APIs that .NET is using. I did a little pre-processing of the data that .NET uses, like pre-computing the hash of the message and formatting the signature, but otherwise it's the same.

https://gist.github.com/vcsjones/8b17709cdd23d7e86cd2f65cd8591723#file-cryptoperf-transforms-swift

Example output:

Execution: 10.333497999999999 milliseconds.

Signature is valid.This is on macOS 10.15.4. Since your original program did 1,000 iterations, we can multiply that by 1000 and then divide by 1000 again to go back to seconds, so the total time for 1,000 invocations is approximately 10 seconds, so the Swift code demonstrates comparable time as the .NET code.

Just for fun, I looked at the new CryptoKit API introduced in macOS 10.15, and I didn't see any improvement.

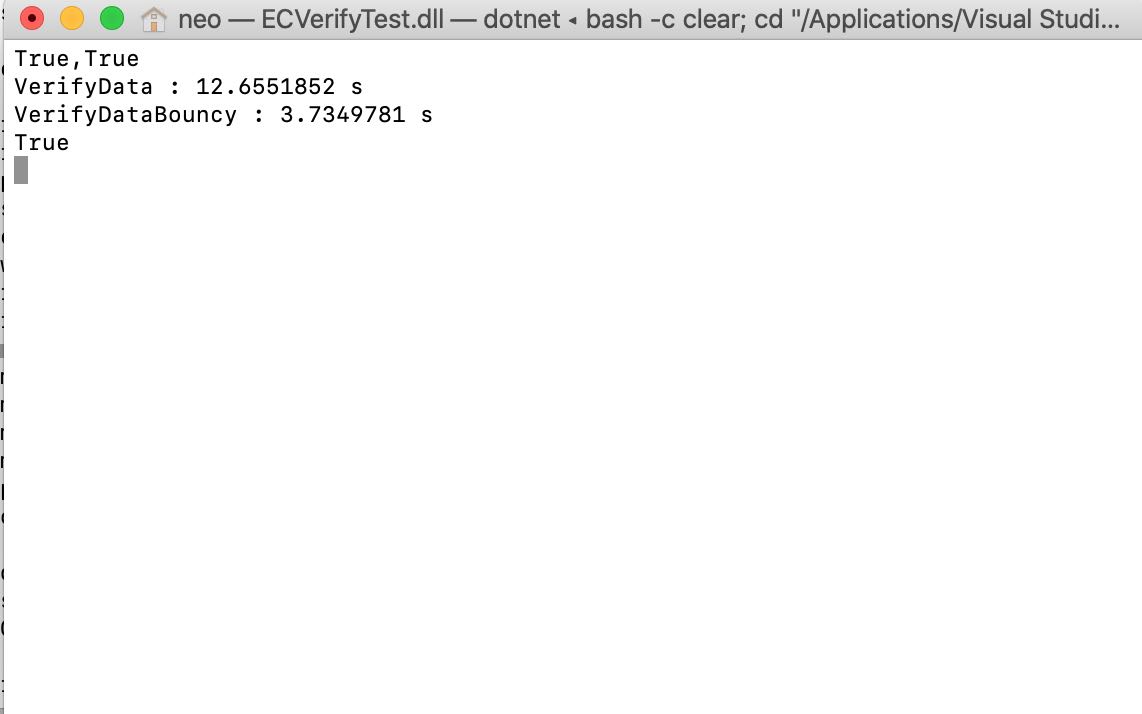

@vcsjones Thanks for explaining. I run your code on my Mac, and find something interesting. I run CryptoKit API version first then SecTransformExecute version. Here are my results:

The SecTransformExecute was really time-consuming but CryptoKit API was quite efficient. Maybe using new CryptoKit API could fix this issue.

@Ashuaidehao

Interesting, it appears that CryptoKit has a bit of a "start up" time. If I run SecurityTransforms and CryptoKit in a loop 1000 times, the performance difference is very noticeable.

CryptoKit is quite fast.

I don't know how feasible it is to use CryptoKit for this off the top of my head. There are a number of barriers with using CryptoKit.

The biggest one is the lack of a C-like API that .NET can call. .NET can work with SecurityTransforms because it does offer a C API. Since the native parts of .NET are written in C, thus SecurityTransforms is easy enough to use.

CrytpoKit does not have a C API - it offers a Swift-only API/ABI that .NET doesn't understand. In order to use it, there would need to be a Swift -> Objective-C -> C indirection. This was explored while researching AES-GCM but it's only been mildly explored so far.

The other issue is that CryptoKit is 10.15+ on macOS. I can't say with any certainty but I suspect .NET 5 will continue to support at least 10.14 as well.

The CryptoKit times are encouraging though that CoreCrypto (Apple's truly low-level implementation of cryptographic functions that powers CommonCrypto and CryptoKit) is fast, and that something in SecurityTransforms is holding it back. I will continue to investigate other possibilities of SecurityTransforms - perhaps there is a way to get it closer to CryptoKit's performance.

It looks like SecKeyVerifySignature has the same performance as CryptoKit. It has some limitations, mainly that DSA and MD5 digests are not supported.

@bartonjs given the significant performance improvement with SecKeyVerifySignature (10s vs 265ms for 1000 verifications), do you think it is acceptable to use it in places where it supports a given algorithm?

Does it require the original data (VerifyData) or does it also work with the hash (VerifyHash)? It'd be weird if VerifyHash ended up being slower.

@bartonjs both. Can use kSecKeyAlgorithmECDSASignatureDigestX962SHA256 for digests and kSecKeyAlgorithmECDSASignatureMessageX962SHA256 for messages.

There are similar options for RSA, (sign and encrypt).

Cool, I'm up for the experiment, deferring to the existing function when we need to (DSA, RSA+MD5)

I spent a little time on this to plumb this in to ECDSA verification and the results were not as good as I had hoped they would be.

The branch for the changes is here: https://github.com/vcsjones/runtime/tree/ecdsa-perf

I put together a benchmark project here: https://github.com/vcsjones/DotNetCryptoBenches

The gist is that there was no to small improvement depending on the curve and data length. Small being at most a millisecond, usually half a millisecond.

This is not the order of magnitude improvement I was hoping for. However there is one more avenue left for me to explore, which is how the key gets imported. The current approach uses SecItemImport whereas the small spike I did used SecKeyCreateWithData. That makes sense to me on the surface as a source of performance differences, so I will explore that next.

@bartonjs Out of curiosity, what is the history behind keygen on macOS roundtripping through import/export?

It looks like keys are generated in a temporary keychain, then shuffled off in to the ephemeral keychain. Why generate them in a temporary keychain at all, then export them to the ephemeral one?

Something obviously didn't work :). I think it's that SecGenerateKeyPair won't (or wouldn't in 10.12) generate to the ephemeral keychain; or something broke when it did so. So a temporary keychain gets created to make it happy, but then when we delete said keychain it gets angry, so we then export/import to the ephemeral keychain so we can delete the temporary keychain.

All so that ECDsa.Create() doesn't pollute login.keychain.

Okay, after switching key generating to use Key Services instead of Security Transforms, I see much more substantial results. I updated the branch and the benchmark's results directory, but to give an idea:

| Method | DataLength | CurveName | HashAlgorithm | Mean | Error | StdDev |

|---|---|---|---|---|---|---|

| SignHash | 32 | ECDSA_P256 | SHA256 | 5.218 ms | 0.0213 ms | 0.0200 ms |

| VerifyHash | 32 | ECDSA_P256 | SHA256 | 10.147 ms | 0.0360 ms | 0.0319 ms |

| SignHash | 32 | ECDSA_P521 | SHA512 | 17.683 ms | 0.0538 ms | 0.0477 ms |

| VerifyHash | 32 | ECDSA_P521 | SHA512 | 34.861 ms | 0.1689 ms | 0.1497 ms |

| Method | DataLength | CurveName | HashAlgorithm | Mean | Error | StdDev | |

|---|---|---|---|---|---|---|---|

| SignHash | 32 | ECDSA_P256 | SHA256 | 279.0 μs | 2.57 μs | 2.28 μs | 278.2 μs |

| VerifyHash | 32 | ECDSA_P256 | SHA256 | 231.2 μs | 1.65 μs | 1.38 μs | 231.3 μs |

| SignHash | 32 | ECDSA_P521 | SHA512 | 1,108.3 μs | 15.10 μs | 14.13 μs | 1,102.6 μs |

| VerifyHash | 32 | ECDSA_P521 | SHA512 | 848.5 μs | 14.84 μs | 15.24 μs | 841.3 μs |

@bartonjs There is a performance win here I think, but it is a fairly substantial amount of work and changes. I made enough changes to get keygen, sign, verify on key services. Import / export is broken in the branch for ECDSA.

There is some compatibility between key services and keychain / transforms, but not enough. For example, SecItemExport can't export a key created by key services since the key is not in a keychain at all, not even a temporary one.

I'm also not sure right now what this means up the stack, like CopyWithPrivateKey / GetECDsaPrivateKey or can these keys work with SslStream.

Since there is little interop between key services and keychain, I don't see a great piecemeal approach, either.

This is on the order of "rewrite the macOS crypto PAL". More annoyingly, I don't see full DSA support here, which means in order to keep supporting DSA we would need to keep all of the existing code as well.

All that said, if it works, it's a performance improvement of 10x to 50x.

My gut tells me this will also improve RSA performance, but I don't have numbers to back that feeling up, yet.

If import/export work then the semi-piecemeal approach would be to move ephemeral keys to key services while leaving cert+key keys on the current stack (import/export would be required so that CopyWithPrivateKey can succeed... in addition to all the other obvious needs of import/export)

@bartonjs i think that is reasonable to try.

Here are some numbers for RSA. Signing improves, however verify stays the same, (All tests are using PKCS1 padding).

| Method | DataLength | Config | Mean | Error | StdDev |

|---|---|---|---|---|---|

| SignData | 32 | RSA1024, SHA1 | 1,713.4 μs | 2.96 μs | 2.77 μs |

| VerifyData | 32 | RSA1024, SHA1 | 387.4 μs | 2.72 μs | 2.27 μs |

| SignHash | 32 | RSA1024, SHA1 | 1,715.0 μs | 3.38 μs | 2.99 μs |

| VerifyHash | 32 | RSA1024, SHA1 | 383.1 μs | 0.73 μs | 0.65 μs |

| SignData | 32 | RSA2048, SHA256 | 9,160.4 μs | 5.40 μs | 4.51 μs |

| VerifyData | 32 | RSA2048, SHA256 | 563.1 μs | 2.08 μs | 1.95 μs |

| SignHash | 32 | RSA2048, SHA256 | 9,150.6 μs | 4.21 μs | 3.93 μs |

| VerifyHash | 32 | RSA2048, SHA256 | 567.1 μs | 1.16 μs | 1.09 μs |

| SignData | 32 | RSA4096, SHA512 | 58,754.8 μs | 419.73 μs | 392.62 μs |

| VerifyData | 32 | RSA4096, SHA512 | 1,203.5 μs | 1.59 μs | 1.48 μs |

| SignHash | 32 | RSA4096, SHA512 | 58,782.1 μs | 219.42 μs | 194.51 μs |

| VerifyHash | 32 | RSA4096, SHA512 | 1,199.5 μs | 1.03 μs | 0.80 μs |

| Method | DataLength | Config | Mean | Error | StdDev |

|---|---|---|---|---|---|

| SignData | 32 | RSA1024, SHA1 | 343.6 μs | 4.46 μs | 4.18 μs |

| VerifyData | 32 | RSA1024, SHA1 | 379.3 μs | 3.96 μs | 3.51 μs |

| SignHash | 32 | RSA1024, SHA1 | 343.5 μs | 3.89 μs | 3.64 μs |

| VerifyHash | 32 | RSA1024, SHA1 | 384.0 μs | 0.96 μs | 0.89 μs |

| SignData | 32 | RSA2048, SHA256 | 1,805.6 μs | 22.26 μs | 19.73 μs |

| VerifyData | 32 | RSA2048, SHA256 | 569.6 μs | 1.49 μs | 1.25 μs |

| SignHash | 32 | RSA2048, SHA256 | 1,795.7 μs | 15.25 μs | 14.26 μs |

| VerifyHash | 32 | RSA2048, SHA256 | 559.5 μs | 0.81 μs | 0.75 μs |

| SignData | 32 | RSA4096, SHA512 | 12,348.3 μs | 166.94 μs | 156.16 μs |

| VerifyData | 32 | RSA4096, SHA512 | 1,206.7 μs | 12.11 μs | 9.45 μs |

| SignHash | 32 | RSA4096, SHA512 | 12,482.0 μs | 206.82 μs | 193.46 μs |

| VerifyHash | 32 | RSA4096, SHA512 | 1,199.2 μs | 1.33 μs | 1.18 μs |

@bartonjs continuing to play with this, I think I figured out that if this were to be done the best solution mabe to carry a duplicate set of keys... the original keys in SecKeyPair with another pair of data keys, and to use the data keys for sign / verify on primitives, and the original keys for import / export / "I need a handle for things like SslStream". So our pair becomes a key... quartet.

This makes import and key gen slightly more expensive since any time the key mutates we need to shove the key through a few places to get a data key, with the benefit of substantially improved perf for sign / verify.

I put together a rough idea of what it would look like here: https://github.com/dotnet/runtime/compare/dotnet:7ef49af...vcsjones:58e5a11. There's some missing error checking and TODOs in there, but it should illustrate what a complete solution would look like.

As to "why both"? It's for a few various reasons, mainly that data keys can't be pushed through SecItemExport and data key exports in an entirely different format, and also with this, keys loaded from PFX / attached to X509Certificates should also get fast primitive operations, they just need to be taught to populate the data keys.

The latter will keep performance consistent regardless of where the key is coming from without needing to tell people to export / import it again to get better performance.

Is this sensible?

It seems like a reasonable approach. I'd try to feed the SecKeyPair methods a delegate and the key type, rather than have so much repeated-looking code, but that's just an initial reaction to seeing very similar blocks multiple times.

I believe this may be worth revisiting with benchmarks on main branch. It's on my backlog for the moment.

Most of the work done by @vcsjones has been now merged in slightly modified form. There may still be a scenario or two where generating the keys and directly using them falls back to the legacy CSSM EC implementation. That would be easy to solve with carefully injecting kSecUseDataProtectionKeychain attribute during the key generation.

believe this may be worth revisiting with benchmarks on main branch

I can do that much at least, I still have the benchmarks. Thanks for getting all that code in to a mergeable state.

Benchmarks taken from: b8dbea7c618bf346e7d4a5f78ddb1f6e8abf67ea

There may still be a scenario or two where generating the keys and directly using them falls back to the legacy CSSM EC implementation

This seems to be the case. Fresh keys note no performance improvement, round tripping the keys through export/import gets good performance.

Ignore the allocated column.

New keys (no improvement):

| Method | Config | Mean | Error | StdDev | Ratio | Gen 0 | Gen 1 | Gen 2 | Allocated |

|---|---|---|---|---|---|---|---|---|---|

| SignHash | nistP256, SHA256 | 4.439 ms | 0.0625 ms | 0.0554 ms | 1.00 | - | - | - | 252 B |

| VerifyHash | nistP256, SHA256 | 8.727 ms | 0.1647 ms | 0.1691 ms | 1.00 | - | - | - | 1,344 B |

| SignHash | nistP384, SHA384 | 8.516 ms | 0.1655 ms | 0.1700 ms | 1.00 | - | - | - | 320 B |

| VerifyHash | nistP384, SHA384 | 17.228 ms | 0.2357 ms | 0.2089 ms | 1.00 | - | - | - | 1,383 B |

| SignHash | nistP521, SHA512 | 16.262 ms | 0.3093 ms | 0.3037 ms | 1.00 | - | - | - | 408 B |

| VerifyHash | nistP521, SHA512 | 32.303 ms | 0.3816 ms | 0.3383 ms | 1.00 | - | - | - | 1,438 B |

Imported keys (improvement)

Same benchmark, just did ECDsa.ImportParameters(ECDsa.ExportParameters(true)); in setup.

| Method | Config | Mean | Error | StdDev | Ratio | Gen 0 | Gen 1 | Gen 2 | Allocated |

|---|---|---|---|---|---|---|---|---|---|

| SignHash | nistP256, SHA256 | 153.8 us | 0.95 us | 0.85 us | 1.00 | - | - | - | 248 B |

| VerifyHash | nistP256, SHA256 | 147.8 us | 0.78 us | 0.73 us | 1.00 | - | - | - | 1,336 B |

| SignHash | nistP384, SHA384 | 695.6 us | 1.81 us | 1.60 us | 1.00 | - | - | - | 312 B |

| VerifyHash | nistP384, SHA384 | 640.0 us | 1.11 us | 0.93 us | 1.00 | - | - | - | 1,368 B |

| SignHash | nistP521, SHA512 | 908.9 us | 4.00 us | 3.55 us | 1.00 | - | - | - | 392 B |

| VerifyHash | nistP521, SHA512 | 872.6 us | 2.40 us | 2.00 us | 1.00 | - | - | - | 1,408 B |

That would be easy to solve with carefully injecting kSecUseDataProtectionKeychain attribute during the key generation.

I can take a look at this since you are likely busy with iOS things. Unfortunately this attribute is new in macOS 10.15, but we can continue to use the CSSM path for 10.14.

I believe the kSecUseDataProtectionKeychain attribute existed prior to macOS 10.15. It just was not public and had a different name (kSecAttrNoLegacy). The actual string value of the dictionary key was identical though (nleg).

@vcsjones I have the patch ready, just waiting for other stuff to be merged first: https://github.com/dotnet/runtime/compare/d74211c...filipnavara:sscan-avail?expand=1

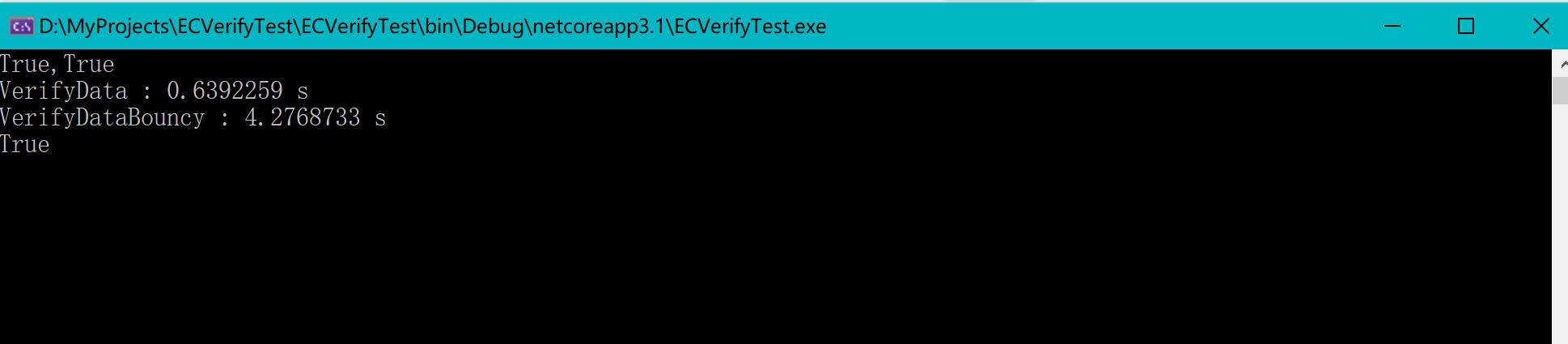

Hi, I have got performance issue when use ECDsa.VerifyData() on Mac, it's almost 10 times slower than on windows, which cause my app running very slow on Mac.

The runtime is dotnet core 3.1. Here my test code:

on Win10:

on Mac: