Thanks for your request!

Heads up, you’re requesting more than the typical weekly onboarding rate of DataCap!

Thanks for your request! Everything looks good. :ok_hand:

A Governance Team member will review the information provided and get back to you soon.

Total DataCap requested

5PiB

Expected weekly DataCap usage rate

200TiB

Client address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

f01858410

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

100TiB

143a1788-010b-4a91-bf06-73dac6d6a459

@simonkim0515 @galen-mcandrew @raghavrmadya @Kevin-FF-USA

Since the company name changed recently, the organization information in the application has been updated accordingly. Is it necessary to correct the status of the issue?

Copied from issue #1276:

Our company's external corporate email address is mipai-media@qq.com, which you can verify on our official website, https://www.todaysuccess.cn/. We sent a KYC email from this mailbox; please check it.

Due to the nature of the company's business, our existing data volume is about 3-5 PB, and about 500-800 GB of new media data is generated every day.

Total DataCap requested

5PiB

Expected weekly DataCap usage rate

200TiB

Client address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

f01858410

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

100TiB

ea7513fa-ba39-46cd-8ff8-ba2711ee35a0

Your Datacap Allocation Request has been proposed by the Notary

bafy2bzacebpcnuzhllqj2abipgjtg2gef2zci76wd2n6rrwy7d2hsp4yt5w7u

Address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Datacap Allocated

100.00TiB

Signer Address

f1qdko4jg25vo35qmyvcrw4ak4fmuu3f5rif2kc7i

Id

ea7513fa-ba39-46cd-8ff8-ba2711ee35a0

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacebpcnuzhllqj2abipgjtg2gef2zci76wd2n6rrwy7d2hsp4yt5w7u

1. Can you send an email to filplus-app-review@fil.org?
2. Which nodes do you want to store the data with?

@Tom-OriginStorage

I would like to support storing real video data on the Filecoin network, especially if there is a way (for example, a tool) for clients to retrieve and play the data. But, @huahuabuer, I did not see an approval for #1276. Would you please finish that KYC process (I think you may need a response from filplus-app-review to confirm it) and get it approved first? Thanks.

There are three identical applications (#1276, #1277, #1278) for a total of 15 PiB of DataCap. I think it would be better to apply for another 5 PiB only after the previous 5 PiB is almost used up.

@steven004 It was sent on the Slack channel last week in the hope of getting official confirmation, but we have not received a reply yet, probably because of the recent holiday.

Since he couldn't find #1276 in the system the last time the notary signed, he simply started with #1278. Of course, we will not work on three issues at the same time; we will open the next one after #1278 has been sealed. @IreneYoung

Your Datacap Allocation Request has been approved by the Notary

bafy2bzacedbti3ir4ibax2us7ungovm3me34qg7ikhu2phjtrmtlsfbos5qe4

Address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Datacap Allocated

100.00TiB

Signer Address

f1hhippi64yiyhpjdtbidfyzma6irc2nuav7mrwmi

Id

ea7513fa-ba39-46cd-8ff8-ba2711ee35a0

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacedbti3ir4ibax2us7ungovm3me34qg7ikhu2phjtrmtlsfbos5qe4

@huahuabuer Hi! Great to see that you have been approved for DataCap! BDE is a verified-deals auction house that helps you get paid for storing your data with reliable storage providers. If you need any help, please get in touch.

checker:manualTrigger

There is no previous allocation for this issue.

[^1]: To manually trigger this report, add a comment with text checker:manualTrigger

checker:manualTrigger

Beijing Jincheng Mingyuan Culture Media Co., Ltd. (北京觅拍文化传媒有限公司) f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

1Alex118011psh0691

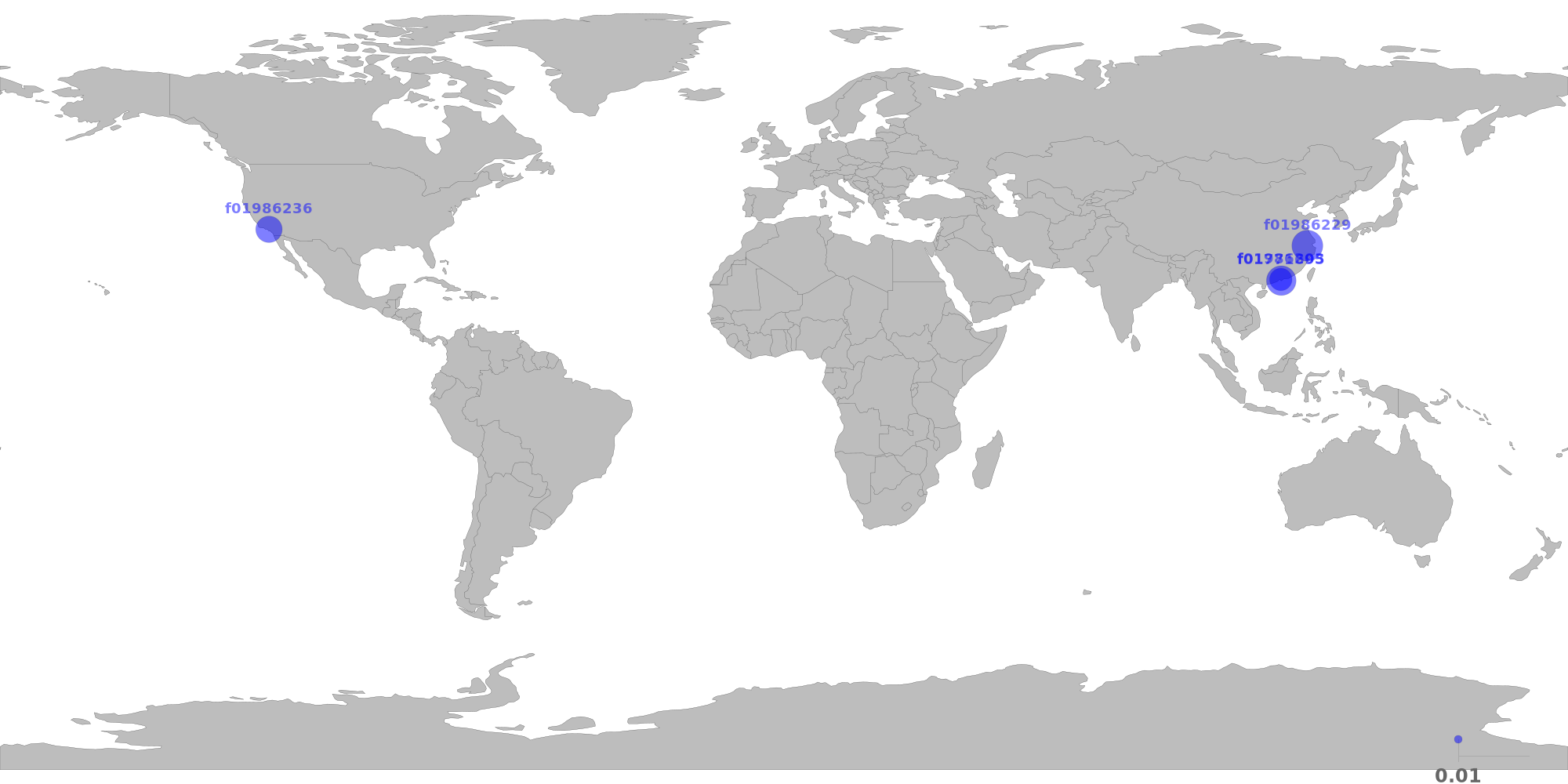

The table below shows the distribution of storage providers that have stored data for this client.

If this is the first time a provider has taken a verified deal, it is marked as new.

For most DataCap applications, the following restrictions should apply.

Since this is the 3rd allocation, the following restrictions have been relaxed:

⚠️ 20.26% of the total deals sealed by f01986229 are duplicate data.

| Provider | Location | Total Deals Sealed | Percentage | Unique Data | Duplicate Deals |
|---|---|---|---|---|---|
| f01986229 (new) | Hangzhou, Zhejiang, CN (CHINANET-BACKBONE) | 39.19 TiB | 45.92% | 31.25 TiB | 20.26% |
| f01986203 | Shenzhen, Guangdong, CN (CHINANET-BACKBONE) | 5.63 TiB | 6.59% | 5.63 TiB | 0.00% |
| f01986236 | Los Angeles, California, US (Cogent Communications) | 12.94 TiB | 15.16% | 12.94 TiB | 0.00% |
| f01771695 | Hong Kong, Central and Western, HK (HONG KONG BRIDGE INFO-TECH LIMITED) | 27.59 TiB | 32.33% | 27.59 TiB | 0.00% |
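The Percentage and Duplicate Deals columns in this table appear to be derivable from the other columns. The sketch below assumes Percentage is a provider's sealed volume divided by the total sealed across all listed providers, and Duplicate Deals is (Total Deals Sealed minus Unique Data) divided by Total Deals Sealed. Both formulas are inferred from the numbers, not taken from the checker bot's source, and because the inputs are the rounded TiB values shown, results can differ from the report in the last digit.

```python
# Values copied from the provider table above (TiB, as rounded in the report).
providers = {
    "f01986229": {"sealed": 39.19, "unique": 31.25},
    "f01986203": {"sealed": 5.63, "unique": 5.63},
    "f01986236": {"sealed": 12.94, "unique": 12.94},
    "f01771695": {"sealed": 27.59, "unique": 27.59},
}

total_sealed = sum(p["sealed"] for p in providers.values())


def share_pct(sp: str) -> float:
    """Assumed Percentage column: provider's sealed TiB over all sealed TiB."""
    return 100 * providers[sp]["sealed"] / total_sealed


def duplicate_pct(sp: str) -> float:
    """Assumed Duplicate Deals column: non-unique sealed TiB over sealed TiB."""
    p = providers[sp]
    return 100 * (p["sealed"] - p["unique"]) / p["sealed"]


for sp in providers:
    print(f"{sp}: share {share_pct(sp):.2f}%, duplicates {duplicate_pct(sp):.2f}%")
```

For f01986229 this gives a 45.92% share and 20.26% duplicates, matching the report; f01986203 comes out as 6.60% rather than 6.59% because the inputs are already rounded.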

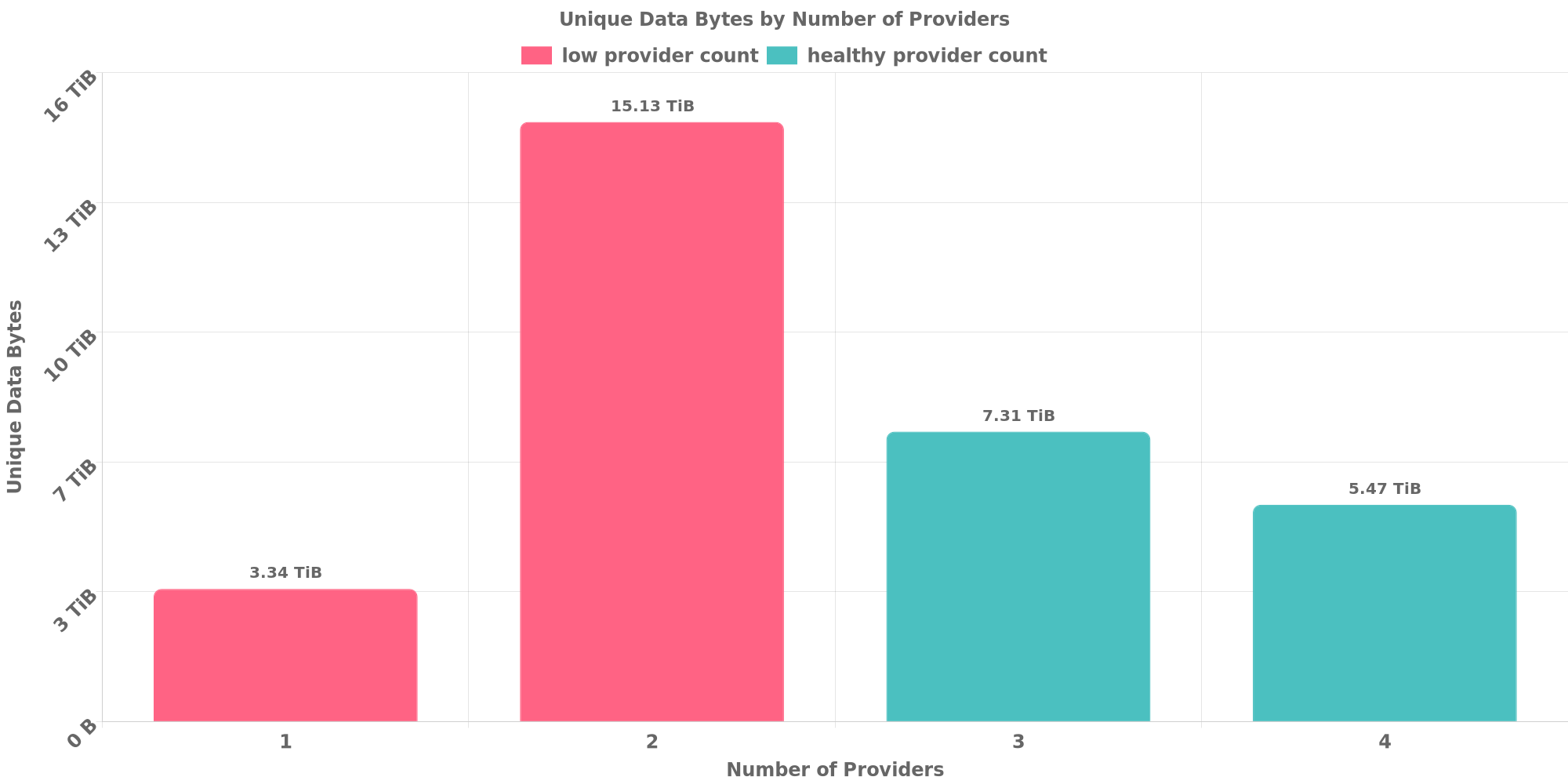

The table below shows how many storage providers each piece of unique data is replicated across.

Since this is the 3rd allocation, the following restrictions have been relaxed:

✔️ Data replication looks healthy.

| Unique Data Size | Total Deals Made | Number of Providers | Deal Percentage |
|---|---|---|---|
| 3.34 TiB | 4.03 TiB | 1 | 4.72% |
| 15.13 TiB | 32.28 TiB | 2 | 37.82% |
| 7.31 TiB | 25.66 TiB | 3 | 30.06% |
| 5.47 TiB | 23.38 TiB | 4 | 27.39% |

The table below shows how much unique data is shared with other clients. Usually, different applications own different data, which should not resolve to the same CID.

However, this is possible if all the clients below used the same software to prepare the exact same dataset, or if they belong to a series of LDN applications for the same dataset.

✔️ No CID sharing has been observed.


@huahuabuer I saw your (or your colleagues') message on Slack asking why the bot had not been triggered yet. This prompted me to test retrieval of your first allocations. I am sad to report that I could only retrieve data from SP f01771695. Two of the other three miners refused the retrieval, and the third is simply not responding.

# lotus client retrieve --provider f01986203 uAXASIOdMHt_TvCY4ZnbgAaymgsEnIldK5eQvlFQ83KMH0r0M tmp

Recv 0 B, Paid 0 FIL, Open (New), 0s [1676022719749763514|0]

Recv 0 B, Paid 0 FIL, DealProposed (WaitForAcceptance), 1ms [1676022719749763514|0]

Recv 0 B, Paid 0 FIL, DealAccepted (Accepted), 1.707s [1676022719749763514|0]

Recv 0 B, Paid 0 FIL, PaymentChannelSkip (Ongoing), 1.707s [1676022719749763514|0]

Recv 0 B, Paid 0 FIL, ProviderCancelled (Cancelling), 1.92s [1676022719749763514|0]

Recv 0 B, Paid 0 FIL, CancelComplete (Cancelled), 1.92s [1676022719749763514|0]

ERROR: Retrieval Proposal Cancelled: Provider cancelled retrieval

# lotus client retrieve --provider f01986236 uAXASIEKlnBAS_-YWEibDiYqU-CEejNVSfsrzG-aUjKb6OeXF tmp

Recv 0 B, Paid 0 FIL, Open (New), 1ms [1676022719749763513|0]

Recv 0 B, Paid 0 FIL, DealProposed (WaitForAcceptance), 4ms [1676022719749763513|0]

Recv 0 B, Paid 0 FIL, DealAccepted (Accepted), 382ms [1676022719749763513|0]

Recv 0 B, Paid 0 FIL, PaymentChannelSkip (Ongoing), 382ms [1676022719749763513|0]

Recv 0 B, Paid 0 FIL, ProviderCancelled (Cancelling), 384ms [1676022719749763513|0]

Recv 0 B, Paid 0 FIL, CancelComplete (Cancelled), 384ms [1676022719749763513|0]

ERROR: Retrieval Proposal Cancelled: Provider cancelled retrieval

# lotus client retrieve --provider f01986229 uAXASILkWZbs_5zt6A9Qljy4nIelJMsrJdfJnXwbbIx_S7WLz tmp

2023-02-13T09:16:32.827+0100 WARN rpc go-jsonrpc@v0.1.8/client.go:548 unmarshaling failed {"message": "{\"Err\":\"exhausted 5 attempts but failed to open stream, err: failed to dial 12D3KooWBu7REndvDbxjWXaBGpSytoe21c8akFVfFZX5s9Gpjzuj:\\n * [/ip4/219.138.23.133/tcp/7005] dial tcp4 0.0.0.0:63452-\\u003e219.138.23.133:7005: i/o timeout\",\"Root\":null,\"Piece\":null,\"Size\":0,\"MinPrice\":\"\\u003cnil\\u003e\",\"UnsealPrice\":\"\\u003cnil\\u003e\",\"PricePerByte\":\"\\u003cnil\\u003e\",\"PaymentInterval\":0,\"PaymentIntervalIncrease\":0,\"Miner\":\"f01986229\",\"MinerPeer\":{\"Address\":\"f01986229\",\"ID\":\"12D3KooWBu7REndvDbxjWXaBGpSytoe21c8akFVfFZX5s9Gpjzuj\",\"PieceCID\":null}}"}

2023-02-13T09:16:32.827+0100 INFO retry retry/retry.go:17 Retrying after error:RPC client error: unmarshaling result: failed to parse big string: '"\u003cnil\u003e"'

2023-02-13T09:17:08.909+0100 WARN rpc go-jsonrpc@v0.1.8/client.go:548 unmarshaling failed {"message": "{\"Err\":\"exhausted 5 attempts but failed to open stream, err: failed to dial 12D3KooWBu7REndvDbxjWXaBGpSytoe21c8akFVfFZX5s9Gpjzuj:\\n * [/ip4/219.138.23.133/tcp/7005] dial tcp4 0.0.0.0:63452-\\u003e219.138.23.133:7005: i/o timeout\",\"Root\":null,\"Piece\":null,\"Size\":0,\"MinPrice\":\"\\u003cnil\\u003e\",\"UnsealPrice\":\"\\u003cnil\\u003e\",\"PricePerByte\":\"\\u003cnil\\u003e\",\"PaymentInterval\":0,\"PaymentIntervalIncrease\":0,\"Miner\":\"f01986229\",\"MinerPeer\":{\"Address\":\"f01986229\",\"ID\":\"12D3KooWBu7REndvDbxjWXaBGpSytoe21c8akFVfFZX5s9Gpjzuj\",\"PieceCID\":null}}"}

2023-02-13T09:17:08.909+0100 INFO retry retry/retry.go:17 Retrying after error:RPC client error: unmarshaling result: failed to parse big string: '"\u003cnil\u003e"'

Can you please work with the SPs you have sealed with to enable retrieval, so we can check the content of what is stored?
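The retrieval transcripts above share a fixed status-line format (`Recv <bytes>, Paid <amount> FIL, <Event> (<Status>), <elapsed>`), so a short script can tell a cancelled retrieval from one that is still receiving blocks. The sketch below is a hypothetical helper written only against the lines shown in this thread; the exact output format may differ between Lotus versions.

```python
import re

# Matches Lotus retrieval status lines as they appear in this thread, e.g.
# "Recv 0 B, Paid 0 FIL, ProviderCancelled (Cancelling), 1.92s [1676022719749763514|0]"
STATUS_RE = re.compile(
    r"^Recv (?P<received>[\d.]+ \w+), Paid (?P<paid>[\d.]+) FIL, "
    r"(?P<event>\w+) \((?P<status>[^)]+)\), (?P<elapsed>\S+)"
)


def last_status(lines):
    """Return (event, status, received) for the last status line, or None."""
    last = None
    for line in lines:
        match = STATUS_RE.match(line.strip())
        if match:
            last = (match["event"], match["status"], match["received"])
    return last


def outcome(lines):
    """Hypothetical classification that looks only at the final status line."""
    result = last_status(lines)
    if result is None:
        return "no status lines"
    event, status, received = result
    if status == "Cancelled":
        return "cancelled"
    if event == "BlocksReceived":
        return f"receiving ({received} so far)"
    return f"pending ({event})"


# Tail of the f01986203 transcript quoted above.
f01986203_log = [
    "Recv 0 B, Paid 0 FIL, DealAccepted (Accepted), 1.707s [1676022719749763514|0]",
    "Recv 0 B, Paid 0 FIL, ProviderCancelled (Cancelling), 1.92s [1676022719749763514|0]",
    "Recv 0 B, Paid 0 FIL, CancelComplete (Cancelled), 1.92s [1676022719749763514|0]",
    "ERROR: Retrieval Proposal Cancelled: Provider cancelled retrieval",
]
print(outcome(f01986203_log))
```

The f01986229 attempt never produces a status line at all (only RPC warnings about a dial timeout), which this helper reports as "no status lines".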

@lvschouwen You can try retrieving older deals. The SPs told me they need to merge metadata in batches, so the latest batch of deals can only be retrieved after the next merge, which will happen soon.

@huahuabuer As you suggested, I tried the last deals to f01986203 and f01986236. Both failed with the same error.

Let's work the other way around: tell me which deals should work, and I'll give those a try.

OK, I'll ask the SPs to merge the metadata now.

f01858410

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

400TiB

28a19aeb-90bd-4f8a-b8d9-971ae5c97d26

f01858410

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Alex11801 & psh0691

200% of weekly dc amount requested

400TiB

100TiB

4.90PiB

| Number of deals | Number of storage providers | Previous DC Allocated | Top provider | Remaining DC |
|---|---|---|---|---|
| 3332 | 4 | 100TiB | 41.3 | 128GiB |
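The tranche figures in this request are simple ratios against the 200TiB expected weekly rate: 400TiB is 200% of it, and the later 800TiB request is 400%. The sketch below also checks two readings of the table that are assumptions, not stated in the thread: that 4.90PiB is the 5PiB total minus the 100TiB already allocated, and that 128GiB remaining out of the 100TiB previous allocation means that tranche was almost fully used. Binary units (1 PiB = 1024 TiB, 1 TiB = 1024 GiB) are assumed.

```python
TIB_PER_PIB = 1024
GIB_PER_TIB = 1024

TOTAL_REQUEST_TIB = 5 * TIB_PER_PIB  # "Total DataCap requested: 5PiB"
WEEKLY_RATE_TIB = 200                # "Expected weekly DataCap usage rate: 200TiB"


def pct_of_weekly(tranche_tib: float) -> float:
    """Tranche size as a percentage of the expected weekly rate."""
    return 100 * tranche_tib / WEEKLY_RATE_TIB


def remaining_total_pib(allocated_tib: float) -> float:
    """Assumed meaning of the 4.90PiB figure: total request minus DataCap allocated."""
    return (TOTAL_REQUEST_TIB - allocated_tib) / TIB_PER_PIB


def used_fraction(allocated_tib: float, remaining_gib: float) -> float:
    """Fraction of a previous tranche already spent, given its remaining DataCap."""
    return 1 - remaining_gib / (allocated_tib * GIB_PER_TIB)


print(f"first tranche 100TiB = {pct_of_weekly(100):.0f}% of weekly rate")
print(f"400TiB request = {pct_of_weekly(400):.0f}% of weekly rate")
print(f"800TiB request = {pct_of_weekly(800):.0f}% of weekly rate")
print(f"remaining after 100TiB: {remaining_total_pib(100):.2f}PiB")
print(f"previous 100TiB tranche used: {used_fraction(100, 128):.3%}")
```

Under these assumptions the previous tranche is about 99.875% spent, which is consistent with the bot opening a new 400TiB request.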

Beijing Jincheng Mingyuan Culture Media Co., Ltd. (北京觅拍文化传媒有限公司) f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

1Alex118011psh0691

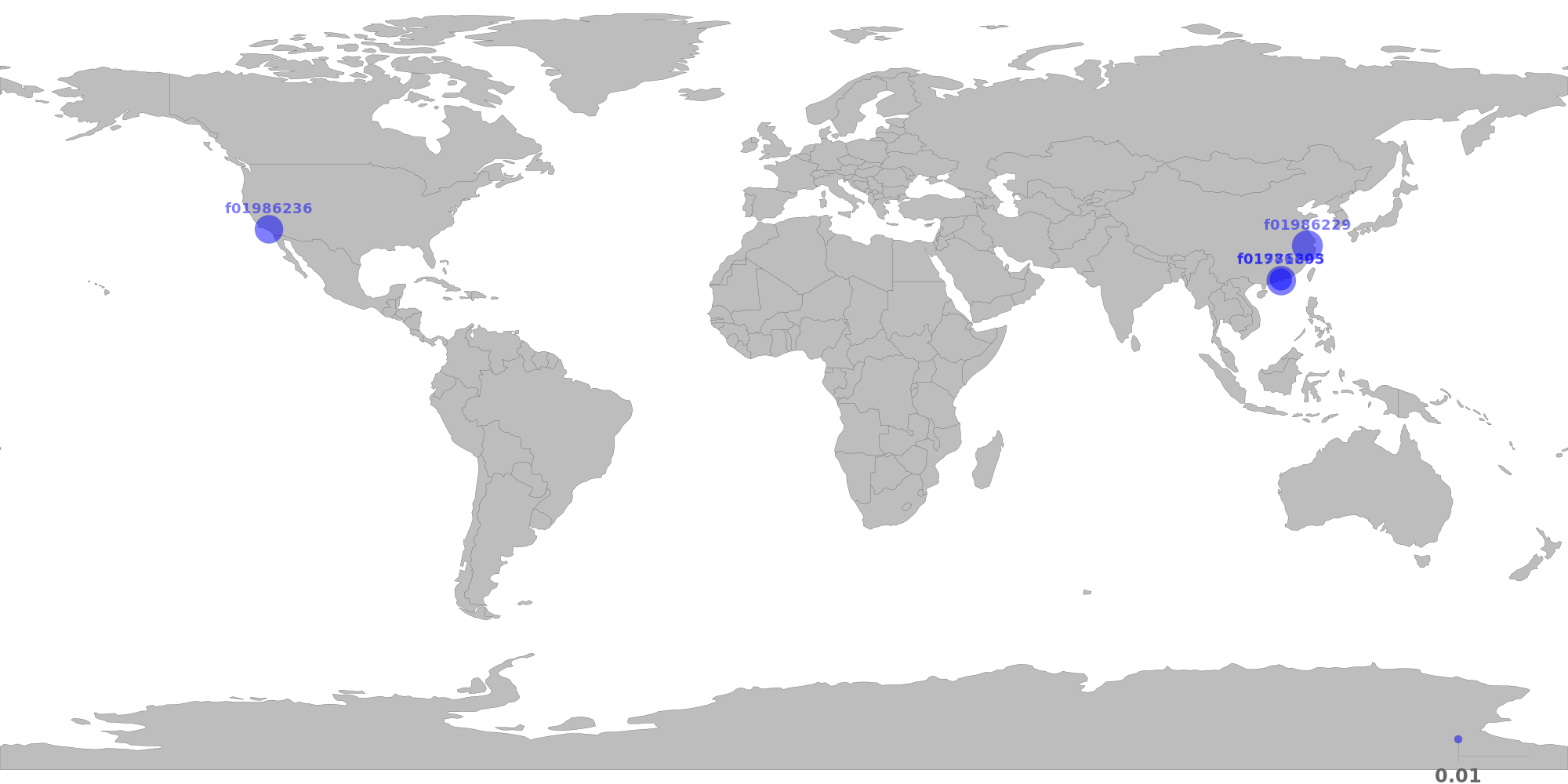

The table below shows the distribution of storage providers that have stored data for this client.

If this is the first time a provider has taken a verified deal, it is marked as new.

For most DataCap applications, the following restrictions should apply.

Since this is the 4th allocation, the following restrictions have been relaxed:

⚠️ 20.26% of the total deals sealed by f01986229 are duplicate data.

| Provider | Location | Total Deals Sealed | Percentage | Unique Data | Duplicate Deals |
|---|---|---|---|---|---|
| f01986229 (new) | Hangzhou, Zhejiang, CN (CHINANET-BACKBONE) | 39.19 TiB | 41.58% | 31.25 TiB | 20.26% |
| f01986203 | Shenzhen, Guangdong, CN (CHINANET-BACKBONE) | 5.63 TiB | 5.97% | 5.63 TiB | 0.00% |
| f01986236 | Los Angeles, California, US (Cogent Communications) | 21.84 TiB | 23.18% | 21.84 TiB | 0.00% |
| f01771695 | Hong Kong, Central and Western, HK (HONG KONG BRIDGE INFO-TECH LIMITED) | 27.59 TiB | 29.28% | 27.59 TiB | 0.00% |

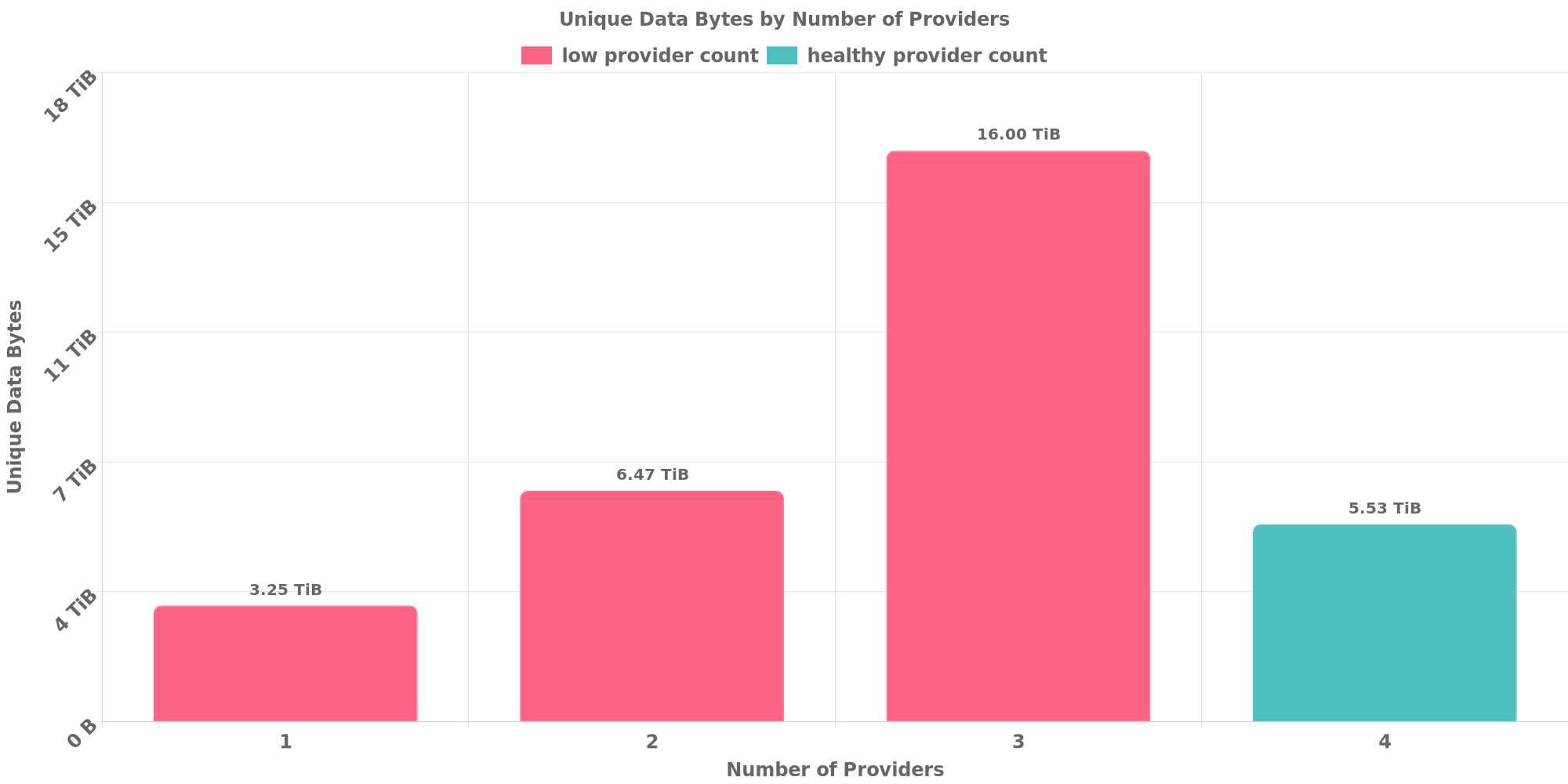

The table below shows how many storage providers each piece of unique data is replicated across.

Since this is the 4th allocation, the following restrictions have been relaxed:

⚠️ 74.87% of deals are for data replicated across fewer than 4 storage providers.

| Unique Data Size | Total Deals Made | Number of Providers | Deal Percentage |
|---|---|---|---|
| 3.25 TiB | 3.91 TiB | 1 | 4.14% |
| 6.47 TiB | 13.50 TiB | 2 | 14.32% |
| 16.00 TiB | 53.16 TiB | 3 | 56.40% |
| 5.53 TiB | 23.69 TiB | 4 | 25.13% |
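The 74.87% warning for this allocation can be reproduced from the replication table if the percentage is the share of total deal volume (Total Deals Made) whose data is held by fewer than 4 providers. The sketch assumes that definition, which is inferred from the numbers rather than from the checker bot's code.

```python
# (unique TiB, total deals TiB, number of providers), copied from the table above.
rows = [
    (3.25, 3.91, 1),
    (6.47, 13.50, 2),
    (16.00, 53.16, 3),
    (5.53, 23.69, 4),
]

total_deals = sum(total for _, total, _ in rows)


def deal_pct(providers: int) -> float:
    """Assumed Deal Percentage column for the row with this provider count."""
    return sum(100 * total / total_deals for _, total, n in rows if n == providers)


def pct_below(min_providers: int) -> float:
    """Share of deal volume whose data sits on fewer than `min_providers` SPs."""
    below = sum(total for _, total, n in rows if n < min_providers)
    return 100 * below / total_deals


print(f"replicated on fewer than 4 SPs: {pct_below(4):.2f}% of deals")
for _, _, n in rows:
    print(f"{n} SP(s): {deal_pct(n):.2f}% of deals")
```

Summing the 1-, 2- and 3-provider rows (4.14% + 14.32% + 56.40%) gives the same figure to within rounding.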

The table below shows how much unique data is shared with other clients. Usually, different applications own different data, which should not resolve to the same CID.

However, this is possible if all the clients below used the same software to prepare the exact same dataset, or if they belong to a series of LDN applications for the same dataset.[^3]

✔️ No CID sharing has been observed.


[^2]: Deals from those addresses are combined into this report as they are specified with checker:manualTrigger

[^3]: To manually trigger this report with deals from other related addresses, add a comment with text checker:manualTrigger <other_address_1> <other_address_2> ...

@lvschouwen It's merged. I have tested it, and it works in my local environment.

~$ lotus state get-deal 23280035

{

"Proposal": {

"PieceCID": {

"/": "baga6ea4seaqfnd72sdhsmehuklq3x3ghcwcgowd5ndkoa2jlamsrfpmeo3n2kki"

},

"PieceSize": 34359738368,

"VerifiedDeal": true,

"Client": "f01966506",

"Provider": "f01986229",

"Label": "uAXASIC2DpTY-GUyrEX1D7NeUD2tn0ayxZbLyrz4Ho3JoDUXz",

"StartEpoch": 2562660,

"EndEpoch": 4106031,

"StoragePricePerEpoch": "0",

"ProviderCollateral": "10026613118896620",

"ClientCollateral": "0"

},

"State": {

"SectorStartEpoch": 2553881,

"LastUpdatedEpoch": 2601635,

"SlashEpoch": -1,

"VerifiedClaim": 5757867

}

}
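The deal record above can also be read programmatically. This sketch parses a trimmed copy of the `lotus state get-deal` output with Python's `json` module and derives the piece size in GiB and the deal duration, assuming Filecoin's 30-second epoch. Note that the `Label` field is the same payload CID passed to `lotus client retrieve` in the next command. Treating `SlashEpoch == -1` as "not slashed" follows the usual convention for this field, but it is an assumption worth checking against the Lotus documentation for your version.

```python
import json

EPOCH_SECONDS = 30  # Filecoin mainnet epoch length

# Trimmed copy of the get-deal output above.
DEAL_JSON = """
{
  "Proposal": {
    "PieceCID": {"/": "baga6ea4seaqfnd72sdhsmehuklq3x3ghcwcgowd5ndkoa2jlamsrfpmeo3n2kki"},
    "PieceSize": 34359738368,
    "VerifiedDeal": true,
    "Client": "f01966506",
    "Provider": "f01986229",
    "Label": "uAXASIC2DpTY-GUyrEX1D7NeUD2tn0ayxZbLyrz4Ho3JoDUXz",
    "StartEpoch": 2562660,
    "EndEpoch": 4106031
  },
  "State": {"SectorStartEpoch": 2553881, "SlashEpoch": -1}
}
"""


def summarize_deal(raw: str) -> dict:
    """Extract the fields a reviewer usually checks before testing retrieval."""
    deal = json.loads(raw)
    prop, state = deal["Proposal"], deal["State"]
    duration_epochs = prop["EndEpoch"] - prop["StartEpoch"]
    return {
        "provider": prop["Provider"],
        "payload_cid": prop["Label"],  # argument used for `lotus client retrieve`
        "verified": prop["VerifiedDeal"],
        "piece_gib": prop["PieceSize"] / 2**30,
        "duration_epochs": duration_epochs,
        "duration_days": duration_epochs * EPOCH_SECONDS / 86400,
        "not_slashed": state["SlashEpoch"] == -1,  # assumed -1 sentinel
    }


print(summarize_deal(DEAL_JSON))
```

For this deal the piece is exactly 32 GiB (34359738368 bytes) and the deal spans 1,543,371 epochs, roughly 536 days.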

~$ lotus client retrieve --provider f01986229 uAXASIC2DpTY-GUyrEX1D7NeUD2tn0ayxZbLyrz4Ho3JoDUXz tmp

Recv 0 B, Paid 0 FIL, Open (New), 0s

Recv 0 B, Paid 0 FIL, DealProposed (WaitForAcceptance), 3ms

Recv 0 B, Paid 0 FIL, DealAccepted (Accepted), 45ms

Recv 0 B, Paid 0 FIL, PaymentChannelSkip (Ongoing), 45ms

Recv 5.172 KiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.854s

Recv 6.793 KiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.855s

Recv 1.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.871s

Recv 2.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.884s

Recv 3.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.897s

Recv 4.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.908s

Recv 5.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.917s

Recv 6.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.925s

Recv 7.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.936s

Recv 8.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.947s

Recv 9.007 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.957s

Recv 10.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.964s

Recv 11.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.974s

Recv 12.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.982s

Recv 13.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m31.993s

Recv 14.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m32.002s

Recv 15.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m32.011s

Recv 16.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m32.021s

Recv 17.01 MiB, Paid 0 FIL, BlocksReceived (Ongoing), 1m32.027s

⚠️ 20.26% of the total deals sealed by f01986229 are duplicate data.

⚠️ 74.87% of deals are for data replicated across fewer than 4 storage providers.

What is your plan to bring these two high percentages down, please? @huahuabuer

@GaryGJG

I noticed that there are several applications from the same company. Could you explain why you need such a large volume of DataCap? Or can you prove that you have enough valid data to onboard?

@Alex11801 This is mainly due to the nature of our business. We are a media company, and our works are mainly high-definition video. This type of data is large and keeps growing. For example, one of our media platforms is 新片场 (Xinpianchang), https://www.xinpianchang.com/. As you can see there, the data volume is very large and consists mostly of high-quality, high-definition works.

Could you please support us in this round too? Thanks!

Greetings!

I was able to retrieve data from you.

Having done a quick scan of your data and watched some of your videos, it looks like you are storing what you said you would store. Therefore, I will propose.

Fellow notaries from the Asia region, if you want to check what is here, please look here.

If there is anything out of the ordinary please contact me.

Your Datacap Allocation Request has been proposed by the Notary

bafy2bzacealtuu24k6p33zfhp6neqpr2wvrn4hznsm3myqe7jvryevjoyzksw

Address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Datacap Allocated

400.00TiB

Signer Address

f1krmypm4uoxxf3g7okrwtrahlmpcph3y7rbqqgfa

Id

28a19aeb-90bd-4f8a-b8d9-971ae5c97d26

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacealtuu24k6p33zfhp6neqpr2wvrn4hznsm3myqe7jvryevjoyzksw

Your Datacap Allocation Request has been approved by the Notary

bafy2bzacebtz4pbmqiw6lfnieeqc5ou7p66y4v4yyl2tgepiorntmk5abwreq

Address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Datacap Allocated

400.00TiB

Signer Address

f1hhippi64yiyhpjdtbidfyzma6irc2nuav7mrwmi

Id

28a19aeb-90bd-4f8a-b8d9-971ae5c97d26

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacebtz4pbmqiw6lfnieeqc5ou7p66y4v4yyl2tgepiorntmk5abwreq

f01858410

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

800TiB

85060b08-9c39-455f-83bd-da87f3d9ac61

f01858410

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Alex11801 & cryptowhizzard

400% of weekly dc amount requested

800TiB

100TiB

4.90PiB

| Number of deals | Number of storage providers | Previous DC Allocated | Top provider | Remaining DC |
|---|---|---|---|---|
| 3332 | 4 | 400TiB | 41.3 | 128GiB |

⚠️ 1 storage provider sealed more than 30% of total DataCap - f01986229: 39.24%

⚠️ 1 storage provider sealed too much duplicate data - f01986229: 20.26%

⚠️ 76.28% of deals are for data replicated across fewer than 4 storage providers.

✔️ No CID sharing has been observed.


Click here to view the full report.
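The first warning in this report is a concentration rule: flag any storage provider holding more than 30% of the client's total sealed data. Below is a minimal sketch of that check, assuming the share is computed over total sealed TiB per provider. The input is the 4th-allocation provider table earlier in this thread, so f01986229 comes out at about 41.58% rather than the 39.24% in this report, which presumably reflects newer deal data.

```python
CONCENTRATION_LIMIT_PCT = 30.0  # "sealed more than 30% of total datacap"

# Total Deals Sealed (TiB) from the 4th-allocation provider table in this thread.
sealed_tib = {
    "f01986229": 39.19,
    "f01986203": 5.63,
    "f01986236": 21.84,
    "f01771695": 27.59,
}


def over_limit(sealed: dict, limit_pct: float = CONCENTRATION_LIMIT_PCT) -> dict:
    """Return {provider: share %} for providers whose share exceeds the limit."""
    total = sum(sealed.values())
    flagged = {}
    for sp, tib in sealed.items():
        share = 100 * tib / total
        if share > limit_pct:
            flagged[sp] = round(share, 2)
    return flagged


for sp, share in over_limit(sealed_tib).items():
    print(f"{sp} holds {share}% of sealed data (limit {CONCENTRATION_LIMIT_PCT}%)")
```

Adding entries for new providers to `sealed_tib` shows how onboarding more SPs, as the client plans, would dilute a single provider's share below the limit.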

checker:manualTrigger

⚠️ 1 storage provider sealed more than 30% of total DataCap - f01986236: 30.71%

⚠️ 1 storage provider sealed too much duplicate data - f01986229: 20.26%

⚠️ 81.10% of deals are for data replicated across fewer than 4 storage providers.

✔️ No CID sharing has been observed.


Click here to view the full report.

checker:manualTrigger

⚠️ 1 storage provider sealed more than 30% of total DataCap - f01986236: 30.53%

⚠️ 1 storage provider sealed too much duplicate data - f01986229: 20.26%

⚠️ 80.81% of deals are for data replicated across fewer than 4 storage providers.

✔️ No CID sharing has been observed.


Click here to view the full report.


Notice: 1 storage provider sealed more than 30% of total DataCap - f01986236: 30.53%

Everything else looks good. Can you address this issue going forward? What is the plan?

@NDLABS-OFFICE Yes, I've been paying attention to these issues. We are still sealing, and once this round of sealing is complete, these issues will be resolved. As other SPs are about to join, the share held by f01986236 will drop.

@huahuabuer Thank you for your reply; I hope to see the corrected results. In addition, I ran a sample retrieval check, and the results were normal. I am willing to support this round, and I hope you can make the adjustments and complete the next round.

Your Datacap Allocation Request has been proposed by the Notary

bafy2bzaceaas4g2g6vpgre4xagrklplmdeihuwzupdywwh66u37dserb6iygc

Address

f1qreowipcbjnfjrpjxbyqnskhgxwbady7nx72o6q

Datacap Allocated

800.00TiB

Signer Address

f1yayfsv6whu3rheviucvventj3y6t542xfpb47ei

Id

85060b08-9c39-455f-83bd-da87f3d9ac61

You can check the status of the message here: https://filfox.info/en/message/bafy2bzaceaas4g2g6vpgre4xagrklplmdeihuwzupdywwh66u37dserb6iygc

checker:manualTrigger

Large Dataset Notary Application

To apply for DataCap to onboard your dataset to Filecoin, please fill out the following.

Core Information

Please respond to the questions below by replacing the text saying "Please answer here". Include as much detail as you can in your answer.

Project details

Share a brief history of your project and organization.

What is the primary source of funding for this project?

What other projects/ecosystem stakeholders is this project associated with?

Use-case details

Describe the data being stored onto Filecoin

Where was the data in this dataset sourced from?

Can you share a sample of the data? A link to a file, an image, a table, etc., are good ways to do this.

Confirm that this is a public dataset that can be retrieved by anyone on the Network (i.e., no specific permissions or access rights are required to view the data).

What is the expected retrieval frequency for this data?

For how long do you plan to keep this dataset stored on Filecoin?

DataCap allocation plan

In which geographies (countries, regions) do you plan on making storage deals?

How will you be distributing your data to storage providers? Is there an offline data transfer process?

How do you plan on choosing the storage providers with whom you will be making deals? This should include a plan to ensure the data is retrievable in the future both by you and others.

How will you be distributing deals across storage providers?

Do you have the resources/funding to start making deals as soon as you receive DataCap? What support from the community would help you onboard onto Filecoin?