About customer data: First, we contacted official members of the Fil+ team and a large number of notaries, who have carried out detailed due diligence on our application. Second, our sample data and the exact amount of data were publicly disclosed during the notaries' earlier due diligence and can be viewed in this application. Finally, we chose Filecoin for storage mainly because I see the future in distributed storage and hope to take part in the Web3 world.

About files and processing: We mainly distribute data offline by mailing storage servers. The configuration of the server used as the transfer carrier is: X10DRL-I motherboard, E5-2680v4 ×2, 480G SSD ×1, DDR4 32G ×2, dual-port 10GbE network card + 10G optical module, LSI 9311-8I ×2, CRPS1600W ×1.

To protect the data in transit, the storage system uses a ZFS RAID-Z2 storage pool, so no data is lost as long as no more than two disks fail. We use the Singularity program to split and package the data, and we have customized and optimized it to improve efficiency. To ensure that data encapsulation is compliant, we have set up a management program that monitors the entire life cycle of each CAR file.
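To make the RAID-Z2 point concrete, here is a minimal sketch of the fault-tolerance and capacity arithmetic. It assumes a pool made of a single RAID-Z2 vdev and ignores ZFS metadata and padding overhead. The function names and the 8-disk, 16 TB example are hypothetical and do not describe the actual servers.

```python
RAIDZ2_PARITY = 2  # RAID-Z2 keeps two disks' worth of parity per vdev


def survives(failed_disks: int) -> bool:
    """Return True if a single RAID-Z2 vdev still holds all data."""
    return 0 <= failed_disks <= RAIDZ2_PARITY


def usable_tb(disk_count: int, disk_size_tb: float) -> float:
    """Approximate usable capacity, ignoring metadata and padding overhead."""
    if disk_count <= RAIDZ2_PARITY:
        raise ValueError("a RAID-Z2 vdev needs more disks than parity devices")
    return (disk_count - RAIDZ2_PARITY) * disk_size_tb


# Hypothetical example: 8 disks of 16 TB each (not the real configuration).
print(survives(2))         # two failed disks: data still intact
print(survives(3))         # three failed disks: the vdev is lost
print(usable_tb(8, 16.0))  # roughly six disks' worth of space
```

The trade-off is that two disks' worth of space goes to parity in exchange for surviving any two simultaneous disk failures while the servers are in transit.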

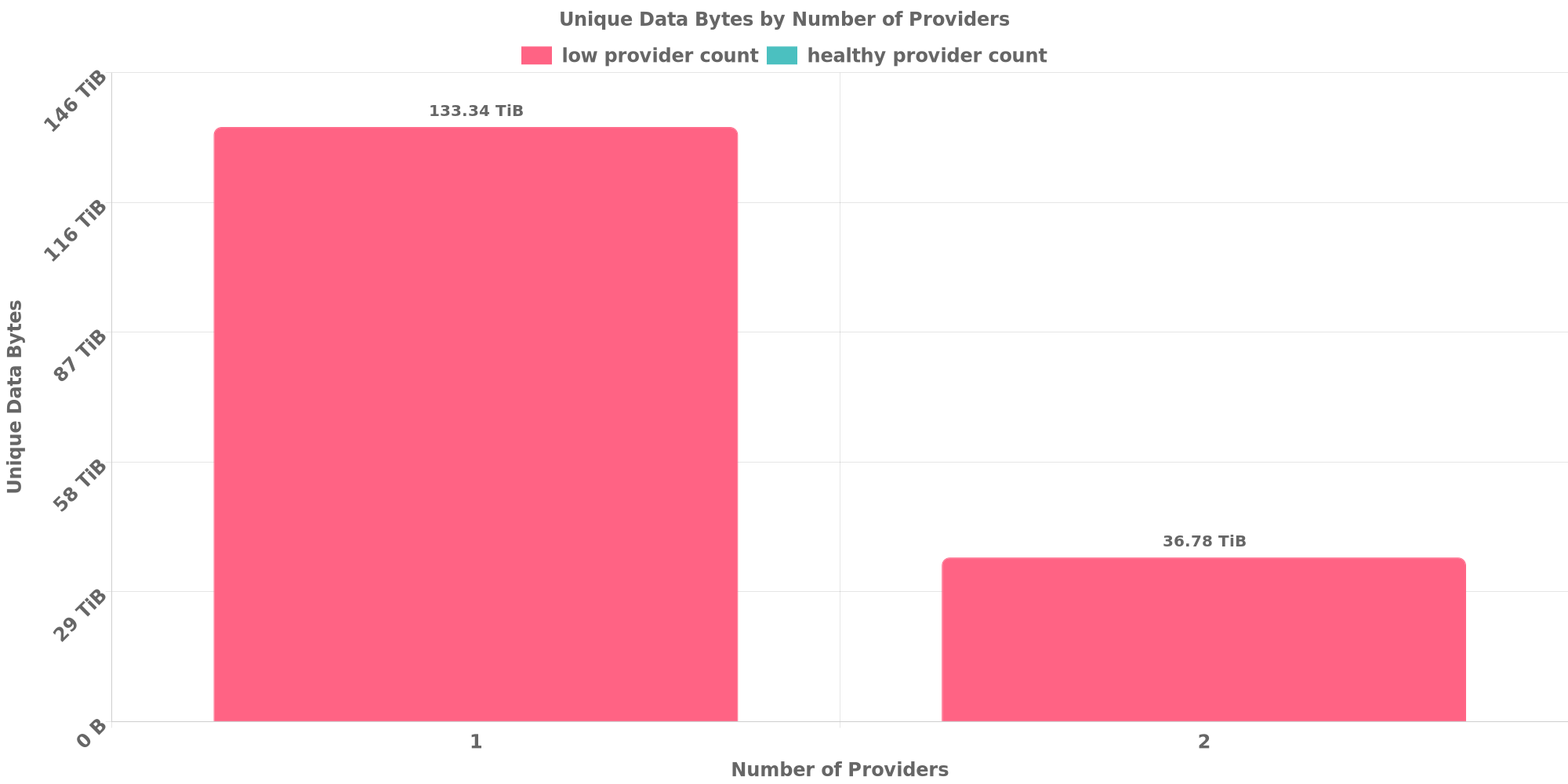

According to the node connectivity check shown by the check bot, all nodes except the newly added one are connected normally. However, the nodes are all in the same region.

@cryptowhizzard I have already submitted the form; please check it.

Maybe you need to confirm whether the data download is abnormal. In addition, the dispersion is becoming better and better.

Large Dataset Notary Application

To apply for DataCap to onboard your dataset to Filecoin, please fill out the following.

Core Information

Please respond to the questions below by replacing the text saying "Please answer here". Include as much detail as you can in your answer.

Project details

Share a brief history of your project and organization.

What is the primary source of funding for this project?

What other projects/ecosystem stakeholders is this project associated with?

Use-case details

Describe the data being stored onto Filecoin

Where was the data in this dataset sourced from?

Can you share a sample of the data? A link to a file, an image, a table, etc., are good ways to do this.

Confirm that this is a public dataset that can be retrieved by anyone on the Network (i.e., no specific permissions or access rights are required to view the data).

What is the expected retrieval frequency for this data?

For how long do you plan to keep this dataset stored on Filecoin?

DataCap allocation plan

In which geographies (countries, regions) do you plan on making storage deals?

How will you be distributing your data to storage providers? Is there an offline data transfer process?

How do you plan on choosing the storage providers with whom you will be making deals? This should include a plan to ensure the data is retrievable in the future both by you and others.

How will you be distributing deals across storage providers?

Do you have the resources/funding to start making deals as soon as you receive DataCap? What support from the community would help you onboard onto Filecoin?