Pull request please :)

On Thu, Nov 21, 2019, 09:47 Diganta Misra notifications@github.com wrote:

Mish is a new activation function proposed in this paper https://arxiv.org/abs/1908.08681. It has shown promising results so far and has been adopted in several packages, including:

- TensorFlow-Addons https://github.com/tensorflow/addons/tree/master/tensorflow_addons/activations

- SpaCy (Tok2Vec Layer) https://github.com/explosion/spaCy

- Thinc - SpaCy's official NLP-based ML library https://github.com/explosion/thinc/releases/tag/v7.3.0

- Echo AI https://github.com/digantamisra98/Echo

- Eclipse's deeplearning4j https://github.com/eclipse/deeplearning4j/issues/8417

- Hasktorch https://github.com/hasktorch/hasktorch/blob/e5d4fb1b11663de3e032941f78b9d25c5da06f6d/hasktorch/src/Torch/Typed/Native.hs#L314

- CNTKX - Extension of Microsoft's CNTK https://github.com/delzac/cntkx

- FastAI-Dev https://github.com/fastai/fastai_dev/blob/0f613ba3205990c83de9dba0c8798a9eec5452ce/dev/local/layers.py#L441

- Darknet https://github.com/AlexeyAB/darknet/commit/bf8ea4183dc265ac17f7c9d939dc815269f0a213

- Yolov3 https://github.com/ultralytics/yolov3/commit/444a9f7099d4ff1aef12783704e3df9a8c3aa4b3

- BeeDNN - Library in C++ https://github.com/edeforas/BeeDNN

- Gen-EfficientNet-PyTorch https://github.com/rwightman/gen-efficientnet-pytorch

- dnet https://github.com/umangjpatel/dnet/blob/master/nn/activations.py

All benchmarks, analyses, and links to official package implementations can be found in this repository: https://github.com/digantamisra98/Mish

It would be nice to have Mish as an option within the activation function group.
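For reference, Mish is defined as `x * tanh(softplus(x))`, where `softplus(x) = ln(1 + e^x)`. The snippet below is only a minimal scalar sketch of that formula in plain Python, not DeepCL code; the function names `softplus` and `mish` are illustrative, and a real integration would presumably need a forward and backward kernel in the library's own activation code.

```python
import math


def softplus(x: float) -> float:
    """Softplus, ln(1 + e^x), written to avoid overflow for large |x|."""
    # max(x, 0) + ln(1 + e^(-|x|)) is algebraically equal to ln(1 + e^x).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x: float) -> float:
    """Mish activation: x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


if __name__ == "__main__":
    # Print a few sample points to show the shape of the function.
    for value in (-5.0, -1.0, 0.0, 1.0, 5.0):
        print(f"mish({value:+.1f}) = {mish(value):+.6f}")
```

For large positive inputs the function approaches the identity, and for large negative inputs it approaches zero from below, which is the smooth, non-monotonic shape described in the paper.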

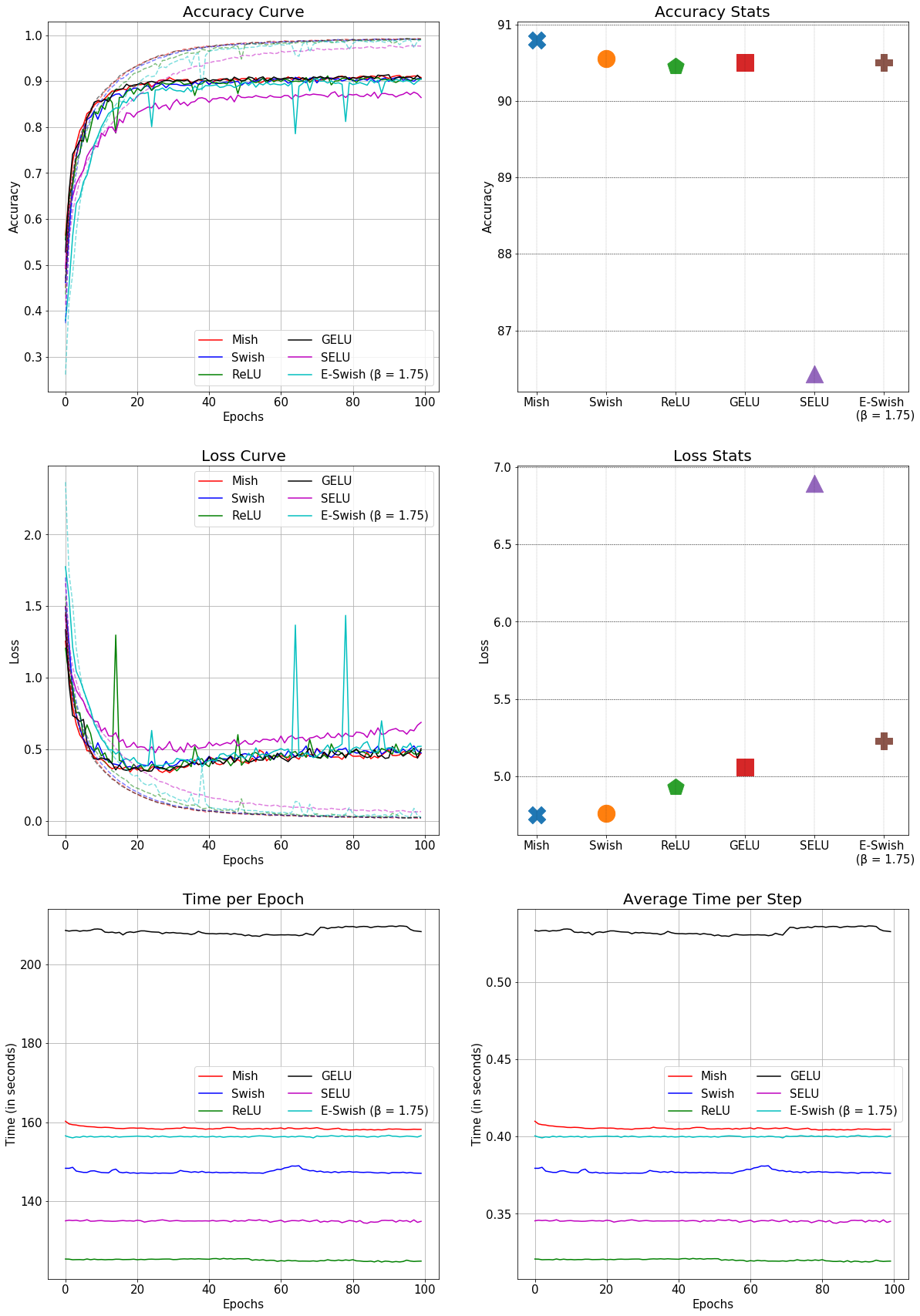

This is a comparison of Mish with other conventional activation functions in a SEResNet-50 on CIFAR-10 (Mish gives better accuracy and is faster than GELU): [image: se50_1] https://user-images.githubusercontent.com/34192716/69002745-0de37980-091b-11ea-87da-ac8d17c79e07.png
