This is pretty crude, but these are the files I used in my demo:

https://github.com/jimpick/signed-exchange-test

Probably the only thing really re-usable in that is the service worker (sw-ipfs.js) which intercepts requests and loads the associated .sxg file from the ipfs.io gateway.
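As a rough illustration of the lookup step, and not the actual code from sw-ipfs.js, the mapping from a requested page to its .sxg file on the gateway might look like the sketch below. The function name `sxgUrlFor`, the `EXAMPLE_CID` placeholder, and the "append .sxg to the path" layout are all assumptions made for this example.

```javascript
// Hypothetical helper: map a page URL to the matching .sxg file on the ipfs.io gateway.
// Assumption: the IPFS directory mirrors the site's paths, with ".sxg" appended to each file.
function sxgUrlFor(requestUrl, ipfsRoot) {
  const { pathname } = new URL(requestUrl);
  // Treat a trailing slash as a request for index.html
  const path = pathname.endsWith('/') ? pathname + 'index.html' : pathname;
  return 'https://ipfs.io' + ipfsRoot + path + '.sxg';
}

// EXAMPLE_CID is a placeholder, not a real content identifier.
console.log(sxgUrlFor('https://example.com/about.html', '/ipfs/EXAMPLE_CID'));
```

A service worker's fetch handler could call a helper like this with `event.request.url` to decide which file to request from the gateway.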

Background

Google is championing work on "Web Packaging" to solve the MITM issue (aka the "misattribution problem") of the AMP Project. Signed HTTP Exchanges (SXG) decouple the origin of the content from who distributes it: content can be published on the web without relying on a specific server, connection, or hosting service. This is highly relevant for IPFS, which is great at distributing immutable bundles of data.

2018: Signed HTTP Exchanges

A longer overview can be found at developers.google.com: Signed HTTP Exchanges.

The Google Chrome team is working towards making this an IETF spec and has a prototype built for Chrome, with an origin trial starting in Chrome 71.

It is worth noting that this is still very much a proof-of-concept spec: the current version of SXG is considered harmful by Mozilla, and the spec needs further work.

2019 Q3: Bundled HTTP Exchanges, AKA Web Bundles

Web Bundles, more formally known as Bundled HTTP Exchanges, are part of the Web Packaging proposal.

To be precise, a Web Bundle is a CBOR file with a .wbn extension (by convention) which packages HTTP resources into a binary format, and is served with the application/webbundle MIME type.

More at https://web.dev/web-bundles/
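On the serving side, that convention boils down to a file-extension to MIME-type mapping. A minimal sketch follows; the function name `headersFor` and the generic fallback type are assumptions for illustration, and only the .wbn mapping comes from the description above.

```javascript
// Hypothetical helper: choose response headers for a file path.
function headersFor(filePath) {
  if (filePath.toLowerCase().endsWith('.wbn')) {
    // Web Bundles are served with the application/webbundle MIME type
    return { 'Content-Type': 'application/webbundle' };
  }
  // Generic binary fallback for everything else (an assumption for this sketch)
  return { 'Content-Type': 'application/octet-stream' };
}

console.log(headersFor('snapshot.wbn'));
```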

Potential IPFS Use Cases

How does this fit in with P2P distribution? Is the future of web publishing signed+versioned bundles over IPFS?

IPFS as transport for SXG / Web Bundles

Signed and Bundled Exchanges provide a means to separate the URL authority from the delivery mechanism of the document. This allows IPFS to deliver documents on behalf of a third party that signed the exchange bundle, while playing nicely with legacy PKI.

In simpler words: a bundle with an entire website (or parts of it) can be loaded over IPFS, and a browser supporting signed exchanges will validate the signatures and render the content with the original domain and a green lock in the location bar. Click below to watch a 1-minute demo:

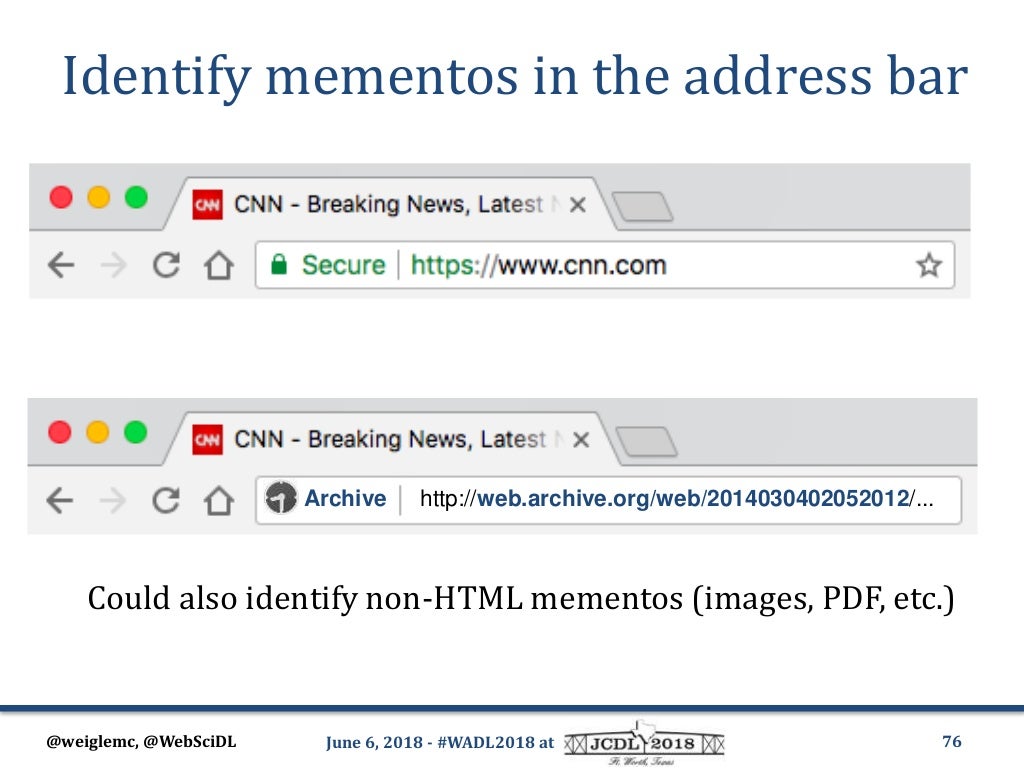

This means one could set up a DNSLink pointing at a Signed HTTP Exchange, and users of IPFS Companion would load cached websites over IPFS while keeping the "original" URLs in the location bar.
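For context, a DNSLink is a DNS TXT record whose value looks like `dnslink=/ipfs/<cid>`, optionally followed by a path. The sketch below parses such a value into its parts. The function name `parseDnslink` and the idea of pointing the path directly at an `index.html.sxg` file are assumptions for illustration, not an established convention.

```javascript
// Hypothetical helper: split a DNSLink TXT value into namespace, root, and path.
// Returns null when the TXT value is not a DNSLink entry.
function parseDnslink(txtValue) {
  const match = /^dnslink=\/(ipfs|ipns)\/([^/]+)(\/.*)?$/.exec(txtValue.trim());
  if (!match) return null;
  return { namespace: match[1], root: match[2], path: match[3] || '' };
}

// EXAMPLE_CID is a placeholder, not a real content identifier.
console.log(parseDnslink('dnslink=/ipfs/EXAMPLE_CID/index.html.sxg'));
```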

Digression: right now Chrome "lies" to the user and displays "https://" as the protocol, which raises valid concerns. I suspect it will end up being "wpack://" or something like that.

An alternative way to use this would be to create a Service Worker orchestration that loads a website from an SXG snapshot fetched from IPFS, as a means of failover or workaround in DDoS or censorship scenarios. (See initial experimentation in https://github.com/ipfs/in-web-browsers/issues/121#issuecomment-444769959)
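The failover idea can be sketched as "try the origin first, fall back to the snapshot". This is only an outline under stated assumptions: the function name `fetchWithSxgFallback` is hypothetical, the fetch function is injected so the logic can be exercised outside a browser, and how a browser handles an SXG response returned this way is not covered here.

```javascript
// Hypothetical failover helper. In a service worker, fetchFn would be the global fetch.
async function fetchWithSxgFallback(url, sxgUrl, fetchFn) {
  try {
    const response = await fetchFn(url);
    // Origin reachable and healthy: use the live response
    if (response.ok) return response;
  } catch (err) {
    // Network failure (origin down, blocked, or unreachable): fall through to the snapshot
  }
  // Design choice in this sketch: non-2xx responses also trigger the fallback
  return fetchFn(sxgUrl);
}

// Usage with a fake fetch that simulates an unreachable origin
const fakeFetch = (u) =>
  u.startsWith('https://ipfs.io/')
    ? Promise.resolve({ ok: true, source: 'ipfs' })
    : Promise.reject(new Error('origin unreachable'));

fetchWithSxgFallback(
  'https://example.com/',
  'https://ipfs.io/ipfs/EXAMPLE_CID/index.html.sxg', // placeholder CID
  fakeFetch
).then((res) => console.log(res.source));
```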

Archival Use Cases (Web Bundles)

The Bundled Exchange file format could provide a standardized means of creating future-proof website snapshots.

? (add more ipfs-specific uses in comments below!)

Learning Materials

WebPackage 101

Fixing AMP URLs with Web Packaging (20min primer on Web Packaging)

Web Packaging Format Explainer

Use Cases and Requirements for Web Packages

Known Problems and Concerns

References

Web Packaging Primer

Additional Resources

cc @jimpick @mikeal