Without knowing more about your setup, there is not much I can do to help. Are you running behind a reverse proxy? If so, did you make sure the routes listed here are properly set up for WebSockets? https://jitsi.github.io/handbook/docs/devops-guide/devops-guide-docker#running-behind-a-reverse-proxy
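If you want a quick way to see whether your proxy passes WebSocket upgrades at all, here is a rough Python sketch. It sends a bare HTTP/1.1 upgrade request and checks for a `101` status. The host `meet.example.com` and the function names are placeholders I made up, and the path `/xmpp-websocket` is my assumption of one of the routes the linked guide lists, so please check it against the guide. A non-`101` answer does not prove the proxy is wrong (the backend may reject a bare request for other reasons), but a `101` does show the upgrade headers reach the backend.

```python
import base64
import os
import socket
import ssl


def build_upgrade_request(host: str, path: str) -> bytes:
    """Build a minimal HTTP/1.1 WebSocket upgrade request (RFC 6455)."""
    # The key is 16 random bytes, base64-encoded, as the RFC requires.
    key = base64.b64encode(os.urandom(16)).decode("ascii")
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",
        "Upgrade: websocket",
        "Connection: Upgrade",
        f"Sec-WebSocket-Key: {key}",
        "Sec-WebSocket-Version: 13",
        "",  # blank line terminates the header block
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def is_upgrade_response(raw: bytes) -> bool:
    """Return True if the status line reports 101 Switching Protocols."""
    status_line = raw.split(b"\r\n", 1)[0]
    parts = status_line.split()
    return len(parts) >= 2 and parts[1] == b"101"


def probe(host: str, path: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Open a TLS connection through the proxy and attempt the upgrade."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            tls.sendall(build_upgrade_request(host, path))
            return is_upgrade_response(tls.recv(4096))


if __name__ == "__main__":
    # Placeholder host and assumed path; replace with your own values.
    print(probe("meet.example.com", "/xmpp-websocket"))
```

If this prints `False` while the same request made directly against the backend container succeeds, the proxy is most likely dropping the `Upgrade` and `Connection` headers.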

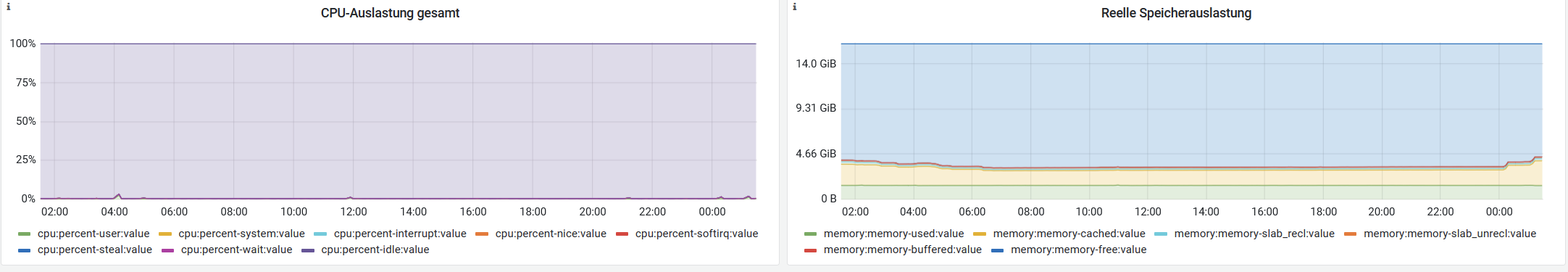

My Jitsi Meet instance has video performance problems, and none of the workarounds I found through Google worked. My server has plenty of resources left, so I don't think the server is the cause.

Maybe you could update the self-hosting guide to explain how to set this up correctly with Docker.