Matrix-Vector Library Designed for Neural Network Construction: CUDA (GPU) support, OpenMP (multithreaded CPU) support, partial BLAS support, an expression-template-based implementation whose generated PTX code is identical to hand-written kernels, and support for automatic differentiation.

Mish is a novel activation function proposed in this paper. It has shown promising results so far and has been adopted in several packages.

All benchmarks, analyses, and links to the official package implementations can be found in this repository.

It would be nice to have Mish as an option within the activation function group.

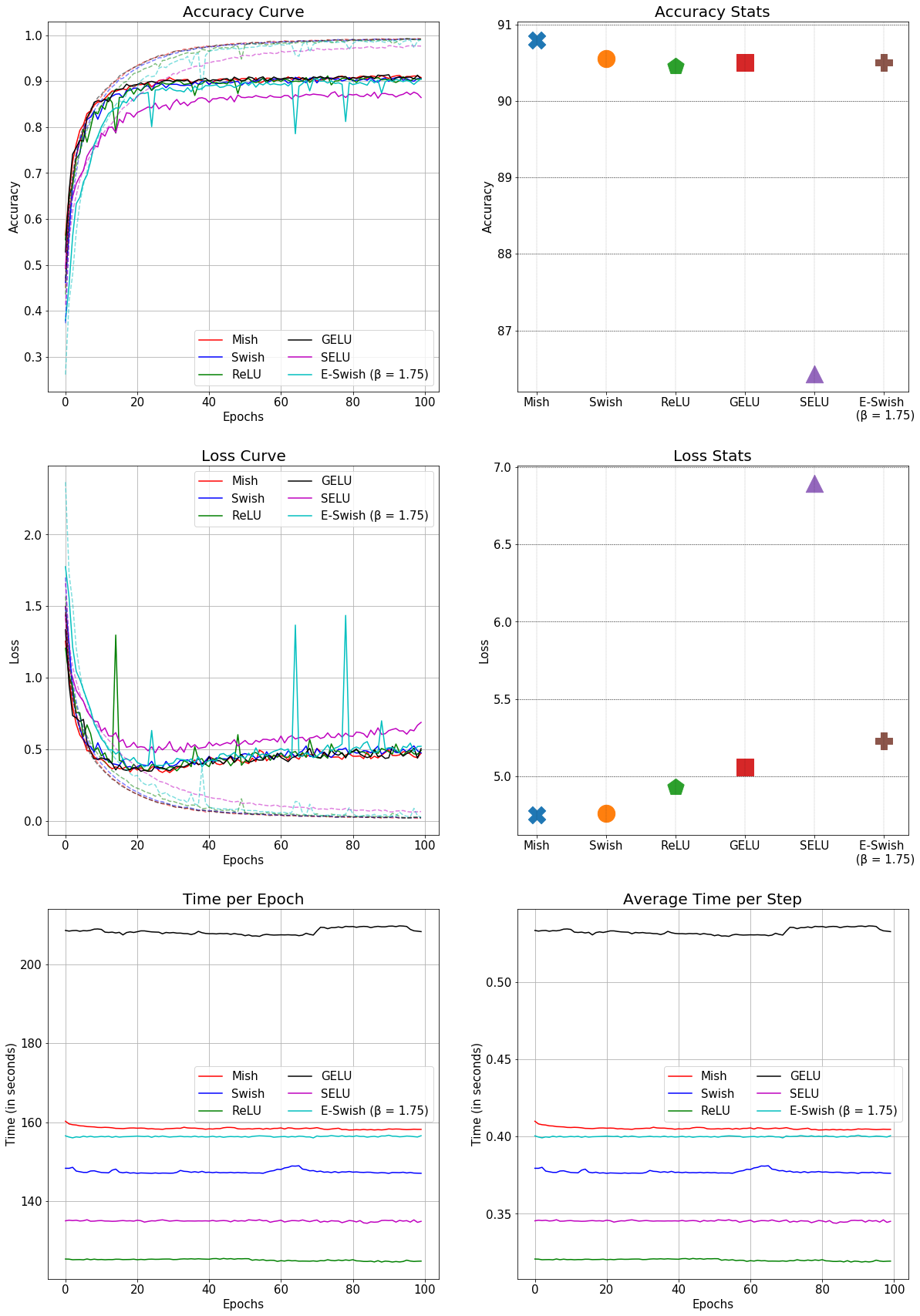

Comparison of Mish with other conventional activation functions in an SE-ResNet-50 on CIFAR-10 (Mish gives better accuracy than GELU and is faster).
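For reference, Mish is defined as f(x) = x · tanh(softplus(x)), where softplus(x) = ln(1 + eˣ). Since the library supports auto-differentiation, an implementation would also need the derivative. Below is a minimal standalone scalar sketch of both. It does not use the library's API: the function names `softplus`, `sigmoid`, `mish`, and `mish_derivative` are hypothetical, and wiring Mish into the existing activation functors and expression templates would have to follow the library's own conventions, which are not shown here.

```cpp
#include <cmath>

// Numerically stable softplus: ln(1 + e^x) = max(x, 0) + ln(1 + e^{-|x|}).
// Avoids overflow of exp(x) for large positive x.
inline double softplus(double x) {
    return std::fmax(x, 0.0) + std::log1p(std::exp(-std::fabs(x)));
}

// Logistic sigmoid, branching on sign so exp() never overflows.
inline double sigmoid(double x) {
    if (x >= 0.0) {
        return 1.0 / (1.0 + std::exp(-x));
    }
    const double e = std::exp(x);
    return e / (1.0 + e);
}

// Mish forward pass: f(x) = x * tanh(softplus(x)).
inline double mish(double x) {
    return x * std::tanh(softplus(x));
}

// Mish derivative for backpropagation:
// f'(x) = tanh(sp(x)) + x * sech^2(sp(x)) * sigmoid(x),
// using d/dx softplus(x) = sigmoid(x) and sech^2 = 1 - tanh^2.
inline double mish_derivative(double x) {
    const double t = std::tanh(softplus(x));
    return t + x * (1.0 - t * t) * sigmoid(x);
}
```

For example, `mish(0.0)` is 0 and `mish_derivative(0.0)` is tanh(ln 2) = 0.6. Reusing tanh(softplus(x)) in the derivative means a backward pass could cache it from the forward pass rather than recompute it.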