You launched far too many processes, and you probably ran out of VRAM as a result. Start with one.

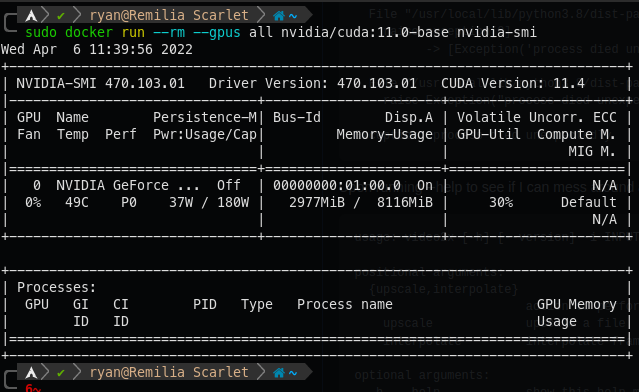

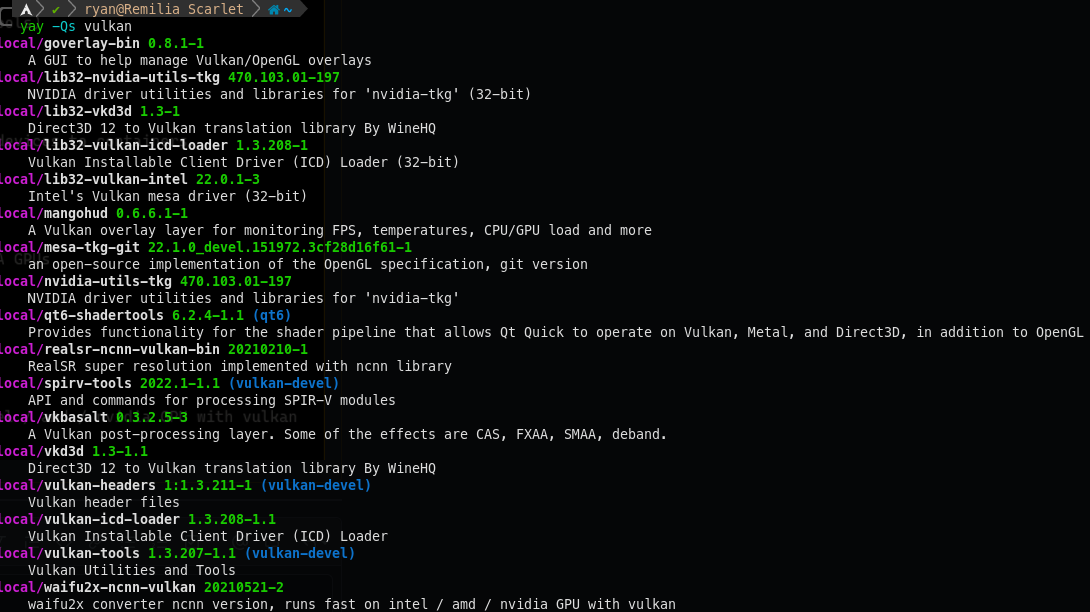

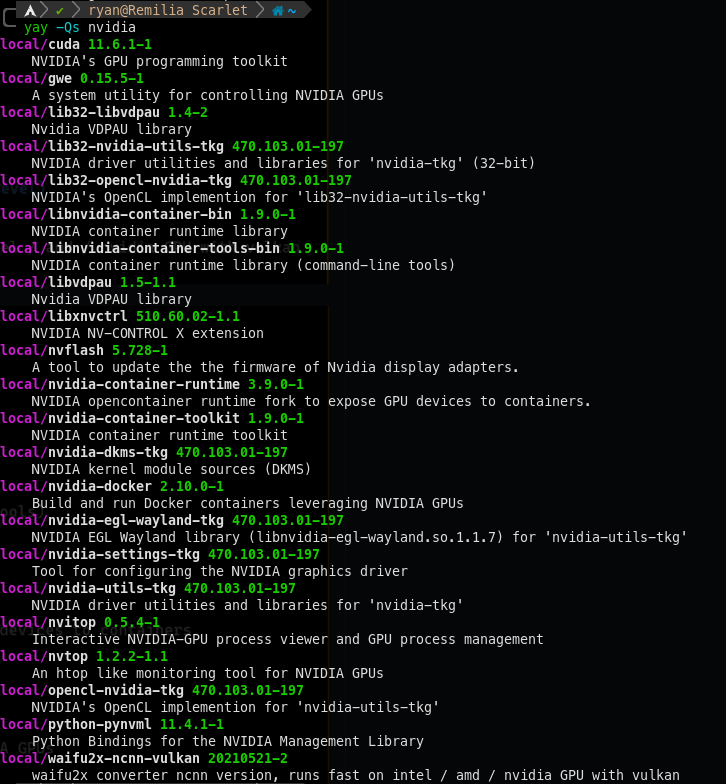

You do need to lower your process count, but I guess that's not the main problem, since I missed the vkEnumeratePhysicalDevices message earlier. I think it's more likely that you're missing the nvidia-docker2 package. What system and GPU are you using?
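If it helps narrow things down, here is a rough sketch of how one might check whether the relevant tools are present on the host. The `check_tool` helper is hypothetical (it is not part of video2x), and the commented-out `docker run` line assumes the NVIDIA container runtime is installed, which is exactly what is in question here.

```shell
#!/bin/sh
# Hypothetical helper: report whether a command is available on PATH.
check_tool() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "$1: found"
    else
        echo "$1: missing"
    fi
}

# Tools that are typically needed for GPU passthrough and Vulkan diagnostics.
check_tool docker
check_tool nvidia-smi
check_tool vulkaninfo

# Assumption: with the NVIDIA container runtime set up, this should print
# the same GPU table that nvidia-smi prints on the host. Run it manually:
# docker run --rm --gpus all ubuntu nvidia-smi
```

If `nvidia-smi` works on the host but the containerized run does not, the container runtime setup is the more likely culprit than the process count.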

I cannot get the Docker image to work. The syntax given is

`docker run -it --rm -v $PWD:/host ghcr.io/k4yt3x/video2x:5.0.0-beta5 -i input.mp4 -o output.mp4 -p6 upscale -h 720 -a waifu2x -n3`

but this fails.

When I ran `--help` to see if I could mess around and get things to work, I found that almost none of the flags given in the documentation actually exist, and the ones that do exist behave differently than documented. For example, `-h` prints the help message; it does not set the height (as it does in 4.3.1).