Cool, direct access to pods from the control plane. It could work together with `karmadactl get`.

For example:

`karmadactl logs <pod-name> -n <namespace> -c <cluster-name>`

`karmadactl exec pod <pod-name> -n <namespace> -c <cluster-name>`

> Cool, direct access to pods from the control plane. It could work together with `karmadactl get`.
>
> For example:
>
> `karmadactl logs <pod-name> -n <namespace> -c <cluster-name>`
>
> `karmadactl exec pod <pod-name> -n <namespace> -c <cluster-name>`

I am still interested in this feature, but I don't know the actual needs of customers. I look forward to more discussion in this issue.

@prodanlabs I'm not sure I understand you correctly, can you give an example or more details about the use case?

> I am still interested in this feature, but I don't know the actual needs of customers. I look forward to more discussion in this issue.

I also think it's a nice feature, as it was discussed on kubeedge and a lot of people love it.

> @prodanlabs I'm not sure I understand you correctly, can you give an example or more details about the use case?

Now the karmada control plane is unable to get the pod logs of the member cluster (pull mode) like

karmadactl logs <pod-name> -n <namespace> -c <cluster-name>or pods that cannot be entered into a member cluster (pull mode) via on the karmada control plane

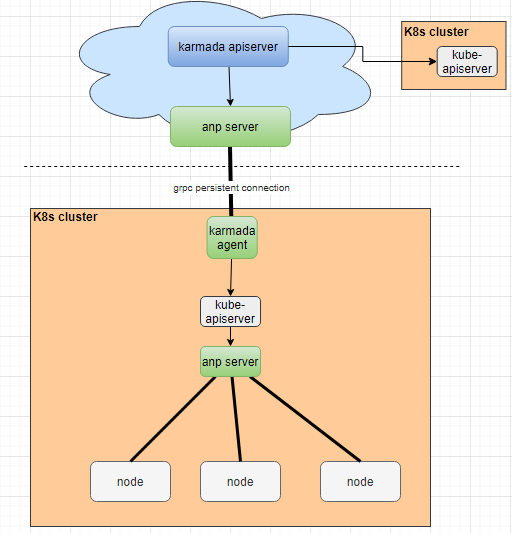

karmadactl exec pod <pod-name> -n <namespace> -c <cluster-name> My understand that for pull mode cluster, it is like Node that is One-sided communicate with control plane. For kubeedge, it seemingly erect a tunnel to access nodes.

Like in the cloud kubedge can use kubectl exec -it <pod_name> to enter the pod of the edge node

> My understanding is that a pull-mode cluster is like a `Node`: it communicates with the control plane one-way. kubeedge seems to set up a tunnel to access nodes.

Yes, that's right

But for now, we also need third-party networking tools like ANP to access the apiserver of a member cluster. Similarly, we would need to set up an extra tunnel to access pods.

Yes, I like the feature.

kubeedge does a lot of work on the cloud-edge network link, so this functionality is relatively easy for kubeedge. I currently have no good idea how karmada could implement it elegantly.

I think we need a network plugin that supports direct access to nodes from the control plane. The plugin could be installed at the user's choice.

I am interested in researching this feature.

> But for now, we also need third-party networking tools like ANP to access the apiserver of a member cluster. Similarly, we would need to set up an extra tunnel to access pods.

Once the APIServer is reachable, the pod can be accessed. Am I misunderstanding something?

If the network problem is caused inside the cluster, it should be solved in the cluster rather than in karmada.

> Once the APIServer is reachable, the pod can be accessed. Am I misunderstanding something?

There seems to be another port for accessing a pod's containers for the logs and exec commands, rather than going through the apiserver.

See https://kubernetes.io/docs/concepts/cluster-administration/logging/

We can run `logs` and `exec` through `clusters/proxy`, so why would another port be needed to access a pod's containers?

> We can run `logs` and `exec` through `clusters/proxy`, so why would another port be needed to access a pod's containers?

I don't know the exact mechanism yet. I am going to research it in the meantime; perhaps we can discuss it at the next community meeting.

Thanks @lonelyCZ . /assign @lonelyCZ

> If the network problem is caused inside the cluster, it should be solved in the cluster rather than in karmada.

I agree with it!

I found that getting container logs in kubernetes only requires accessing an API endpoint (like `api/v1/namespaces/default/pods/nginx-6799fc88d8-9mpxn/log?container=nginx`), which internally implements a set of methods to get the logs.

kubeedge cuts some of the functionality of K8S, so it needs to implement log collection by itself. It redirects the interface for getting logs to its own implementation by modifying iptables.

So if logs are normally accessible in a member cluster, they can be accessed at a multi-cloud level using 'cluster-proxy'.
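To make this concrete, here is a minimal Python sketch of how the pod log endpoint could be combined with the cluster proxy. It only builds the URL; authentication and the actual HTTP request are left out. The proxy path prefix (`/apis/cluster.karmada.io/v1alpha1/clusters/<name>/proxy`) and the helper name `pod_log_url` are assumptions for illustration and are not taken from the demo PR.

```python
from urllib.parse import quote, urlencode

# Assumed path of the karmada aggregated cluster proxy endpoint.
PROXY_TEMPLATE = "/apis/cluster.karmada.io/v1alpha1/clusters/{cluster}/proxy"


def pod_log_url(karmada_server, cluster, namespace, pod, container=None, tail_lines=None):
    """Build the URL that reads a pod's logs in a member cluster via the proxy."""
    # Prefix: karmada apiserver address plus the per-cluster proxy path.
    base = karmada_server.rstrip("/") + PROXY_TEMPLATE.format(cluster=quote(cluster, safe=""))
    # Suffix: the ordinary kubernetes pod log path, as seen by the member apiserver.
    path = "/api/v1/namespaces/{}/pods/{}/log".format(
        quote(namespace, safe=""), quote(pod, safe="")
    )
    params = {}
    if container:
        params["container"] = container
    if tail_lines is not None:
        params["tailLines"] = str(tail_lines)
    query = "?" + urlencode(params) if params else ""
    return base + path + query
```

For instance, `pod_log_url("https://x.x.x.x:5443", "member1", "default", "nginx-6799fc88d8-9mpxn", container="nginx")` yields the log path quoted above, prefixed with the proxy path for `member1`.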

I implemented a demo in #1597.

I think we should only introduce the `get`, `logs`, `exec`, and `describe` commands to karmadactl, which make it easy to view the status of member clusters. We don't need to change the status of member clusters from the karmada control plane, so there is no need to introduce other commands.

The commands are also optional for users: if you don't use karmadactl, you only need to configure kubectl to support cluster-proxy.

What do you think? @GitHubxsy

@lonelyCZ Can we directly reuse kubectl?

1. Call the kubectl binary (given the kubectl binary path).

2. Reference the kubectl code (can we?).

In multi-cluster scenarios, you can run the kubectl command multiple times.

karmada enables kubectl, so it supports all the original kubectl commands. karmadactl then supports kubectl as a multi-cluster command tool.
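As a rough illustration of option 1 (calling the kubectl binary once per cluster), the Python sketch below only builds the command lines and does not run them. The helper name `kubectl_commands` is hypothetical, and it assumes a kubeconfig that has one context per member cluster; `--kubeconfig` and `--context` are standard kubectl flags.

```python
def kubectl_commands(clusters, kubectl_args, kubeconfig, kubectl="kubectl"):
    """Return one kubectl argv per member cluster, selecting it by context name."""
    return [
        # Same user-supplied arguments for every cluster; only the context changes.
        [kubectl, "--kubeconfig", kubeconfig, "--context", cluster, *kubectl_args]
        for cluster in clusters
    ]
```

Each returned list could then be passed to something like `subprocess.run`, and the outputs merged or labeled per cluster.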

Maybe we can reference gorunner's idea.

kubeconfig Format:

    apiVersion: v1
    clusters:
    - cluster:
        insecure-skip-tls-verify: true
        server: https://x.x.x.x:5443/apis/cluster.karmada.io/v1alpha1/clusters/member1/proxy
      name: member1
    - cluster:
        insecure-skip-tls-verify: true
        server: https://x.x.x.x:5443/apis/cluster.karmada.io/v1alpha1/clusters/member2/proxy
      name: member2
    contexts:
    - context:
        cluster: member1
        user: karmada-admin
      name: member1
    - context:
        cluster: member2
        user: karmada-admin
      name: member2
    current-context: ""
    kind: Config
    preferences: {}
    users:
    - name: karmada-admin
      user:
        client-certificate: fake-cert-file
        client-key: fake-key-file

Hi @GitHubxsy, the scheme is cool, but I don't think we need to support all `kubectl` commands in `karmadactl`.

We only need to introduce the most frequently used commands to `karmadactl` for viewing the status of member clusters; we don't need to change their status.

Using `karmadactl` to modify resources in member clusters is not recommended; it is meant more for controlling resources in the karmada control plane, like `taint cluster`.

> karmada enables kubectl, so it supports all the original kubectl commands. karmadactl then supports kubectl as a multi-cluster command tool.

When we add a new cluster, we still need to modify the Kubeconfig file, so I think this is better left to the user to configure and use kubectl to operate each cluster.

> Hi @GitHubxsy, the scheme is cool, but I don't think we need to support all `kubectl` commands in `karmadactl`.
>
> We only need to introduce the most frequently used commands to `karmadactl` for viewing the status of member clusters; we don't need to change their status.
>
> Using `karmadactl` to modify resources in member clusters is not recommended; it is meant more for controlling resources in the karmada control plane, like `taint cluster`.
>
> > karmada enables kubectl, so it supports all the original kubectl commands. karmadactl then supports kubectl as a multi-cluster command tool.
>
> When we add a new cluster, we still need to modify the kubeconfig file, so I think this is better left to the user to configure and use `kubectl` to operate each cluster.

The kubeconfig file is generated by karmadactl and is stored in the temporary directory.
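A minimal Python sketch of that idea, assuming the kubeconfig layout proposed earlier in the thread: one cluster and one context per member, all pointing at the cluster proxy. The function names, the `karmada-members-` file prefix, and the proxy path are assumptions for illustration; this is not how karmadactl is actually implemented. The file is written as JSON, which is a subset of YAML, so it should still load as a kubeconfig.

```python
import json
import os
import tempfile

# Assumed path of the karmada aggregated cluster proxy endpoint.
PROXY_PATH = "/apis/cluster.karmada.io/v1alpha1/clusters/{name}/proxy"


def build_kubeconfig(server, clusters, user="karmada-admin",
                     cert="fake-cert-file", key="fake-key-file"):
    """Build a kubeconfig dict with one proxied cluster/context per member."""
    base = server.rstrip("/")
    return {
        "apiVersion": "v1",
        "kind": "Config",
        "preferences": {},
        "current-context": "",
        "clusters": [
            {"name": n,
             "cluster": {"insecure-skip-tls-verify": True,
                         "server": base + PROXY_PATH.format(name=n)}}
            for n in clusters
        ],
        "contexts": [{"name": n, "context": {"cluster": n, "user": user}}
                     for n in clusters],
        "users": [{"name": user,
                   "user": {"client-certificate": cert, "client-key": key}}],
    }


def write_temp_kubeconfig(config):
    """Write the config to a new file in the temporary directory; return its path."""
    fd, path = tempfile.mkstemp(prefix="karmada-members-", suffix=".config")
    with os.fdopen(fd, "w") as f:
        json.dump(config, f, indent=2)
    return path
```

Regenerating the file on each invocation from the current list of member clusters would avoid the manual kubeconfig edit when a new cluster is added.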

I personally think there are many similarities between pull mode and the kubeedge edge. Can karmada learn from kubeedge to enhance pull mode, e.g. `get pod logs`, `exec pod`? What do you think :)