We can request as many certs as we need, and the box is programmed to be able to do it (in case the box is serving so many domains that they won't fit in a single cert), but requesting just one is a bit nicer internally: fewer moving parts, fewer opportunities for something to go wrong.

We could automatically group by zone, maybe. A zone is the highest-level domain the box knows about (so each domain and its subdomains would get a single cert).
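A rough sketch of what that grouping could look like, purely as an illustration. `group_by_zone` is a hypothetical helper, not something that exists in the box's code, and it assumes a "zone" is simply the shortest name in the box's own domain list that is a suffix of a given domain:

```python
from collections import defaultdict


def group_by_zone(domains):
    """Group domains under the highest-level domain the box knows about.

    Hypothetical helper: each returned group would get its own cert.
    """
    known = {d.lower().rstrip(".") for d in domains}
    groups = defaultdict(list)
    for domain in sorted(known):
        labels = domain.split(".")
        # Try suffixes from shortest ("com") to longest (the domain itself).
        # The first one that is a known domain is the zone; the domain itself
        # is always known, so the loop always finds a match.
        for i in range(len(labels) - 1, -1, -1):
            candidate = ".".join(labels[i:])
            if candidate in known:
                groups[candidate].append(domain)
                break
    return dict(groups)


if __name__ == "__main__":
    example = [
        "example.com",
        "www.example.com",
        "box.example.com",
        "hobby.org",
        "mail.hobby.org",
        "blog.other.net",
    ]
    for zone, names in group_by_zone(example).items():
        print(zone, "->", names)
```

A subdomain whose parent is not hosted on the box (`blog.other.net` here) ends up as its own zone, so it would get its own cert.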

But this is a nice-to-have, imo, not a crucial thing, so I don't really want to play around with it right now.

When enumerating a server for potential attack vectors, one of the initial data-gathering phases is an attempt to discover all the domains hosted on the server.

Up until this point it has been an inexact science. The best you could do was Google data mining, crawling the web for domain references, or using one of the "who is hosted on" services, which try to learn about the world's DNS (and tend to do it not very well, charge you, and/or never last long).

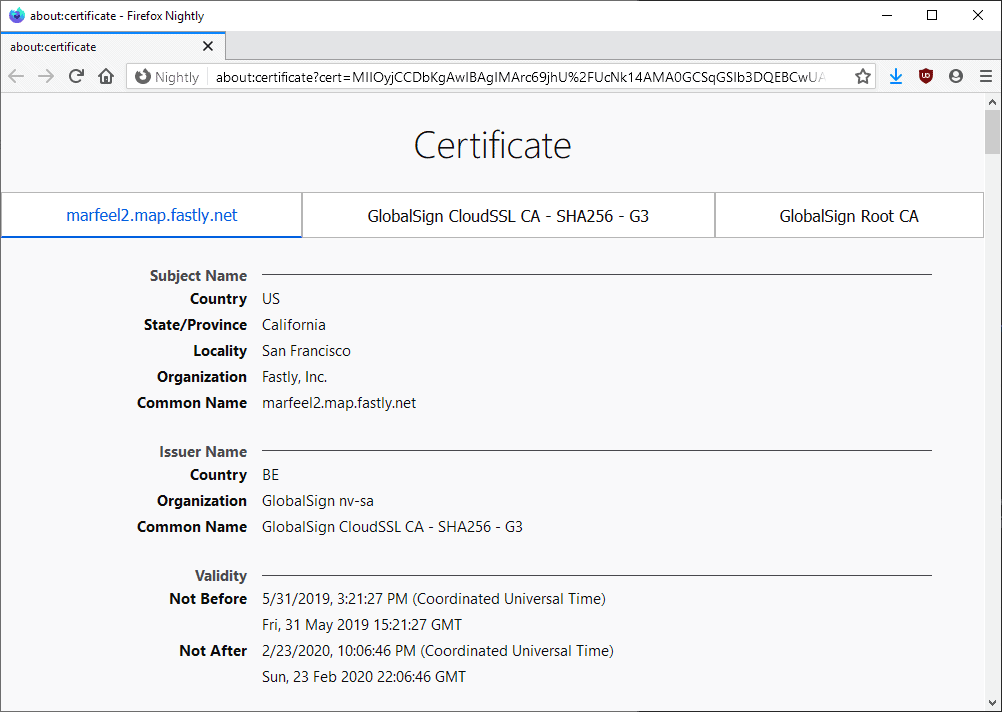

With the way Let's Encrypt works in MIAB by default, all you need to do now is open the SSL cert and you get a complete, server-wide domain list.
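To check what your own box exposes, something like the following would do. This is a minimal sketch using only Python's standard `ssl` and `socket` modules; the function names and the hostname in the usage note are placeholders of mine, not part of MIAB:

```python
import socket
import ssl


def dns_names_from_cert(cert):
    """Return the DNS names from a cert dict as produced by getpeercert()."""
    return sorted(
        value for kind, value in cert.get("subjectAltName", ()) if kind == "DNS"
    )


def fetch_peer_cert(host, port=443, timeout=5.0):
    """Connect over TLS and return the server's validated certificate as a dict."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()
```

Calling `dns_names_from_cert(fetch_peer_cert("box.example.com"))` (placeholder hostname) lists every name on the cert the server presents for that host.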

Equally, from a human standpoint, you often don't want to make it easily known what personal "hobby" sites you run on the same server as your "small business/CV/Code" sites.

I don't want to go messing about with this without throwing it in here for comment. Ideally every site would have its own SSL cert, or perhaps "groups of sites" is a better fit.

Is there any way to achieve this right now without hacking MIAB or breaching the Let's Encrypt AUP?