Hi,

I didn't check everything, but one thought: it may be easier to make sure the MPTCP checksum is enabled and then modify the data instead. The risk with modifying the MPTCP option is that the packet simply gets rejected.
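Here is a minimal sketch of the "modify the data" approach. It assumes IPv4, a packet handed over as raw bytes (for example from NetfilterQueue's `get_payload()`), and a kernel where the DSS checksum has been enabled. On the out-of-tree multipath-tcp.org kernel this is assumed to be the `net.mptcp.mptcp_checksum` sysctl. The code flips one payload byte without changing the packet length, so TCP sequence numbers stay consistent. It then recomputes the TCP checksum so that plain TCP accepts the segment and only the MPTCP DSS checksum can notice the change. `corrupt_payload` and its helpers are hypothetical names, not part of any library.

```python
import struct


def ones_complement_sum(data: bytes) -> int:
    """16-bit one's complement sum used by the Internet checksum (RFC 1071)."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)  # fold the carry back in
    return total


def tcp_checksum(src: bytes, dst: bytes, segment: bytes) -> int:
    """TCP checksum over the IPv4 pseudo-header plus the segment.

    The checksum field inside `segment` must be zero when this is called.
    """
    pseudo = src + dst + struct.pack("!BBH", 0, 6, len(segment))  # 6 = TCP
    return ~ones_complement_sum(pseudo + segment) & 0xFFFF


def corrupt_payload(packet: bytes, offset: int = 0) -> bytes:
    """Flip one payload byte of an IPv4/TCP packet and repair the TCP checksum.

    The packet length is unchanged, so sequence numbers stay valid; only the
    MPTCP DSS checksum (if negotiated) should detect the modification.
    """
    pkt = bytearray(packet)
    ihl = (pkt[0] & 0x0F) * 4                        # IPv4 header length
    total_len = struct.unpack_from("!H", pkt, 2)[0]  # IPv4 total length
    data_off = (pkt[ihl + 12] >> 4) * 4              # TCP header length
    payload_start = ihl + data_off
    if payload_start + offset >= total_len:
        return bytes(packet)  # no payload byte to modify (e.g. a pure ACK)
    pkt[payload_start + offset] ^= 0xFF
    struct.pack_into("!H", pkt, ihl + 16, 0)         # zero the old checksum
    csum = tcp_checksum(bytes(pkt[12:16]), bytes(pkt[16:20]),
                        bytes(pkt[ihl:total_len]))
    struct.pack_into("!H", pkt, ihl + 16, csum)
    return bytes(pkt)
```

In a NetfilterQueue callback this would look like `pkt.set_payload(corrupt_payload(pkt.get_payload()))` followed by `pkt.accept()`. Corrupting every packet is probably too aggressive. Touching a single data segment is closer to the mid-connection corruption that the checksum is meant to catch.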

If you don't see anything, the best approach is to look at the SNMP counters (`nstat`) and at `/proc/net/mptcp_net/snmp`.
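To see which counters moved during a test run, one option is to take a snapshot of `/proc/net/mptcp_net/snmp` (and of `nstat -az` output) before and after starting the router script, then diff the two. The sketch below assumes each line holds a counter name followed by an integer value, which is how I understand the layout. Lines that don't match, such as nstat's `#kernel` header, are skipped, and nstat's extra rate column is ignored. The function names are hypothetical.

```python
from typing import Dict


def parse_counters(text: str) -> Dict[str, int]:
    """Map counter name -> value for lines shaped like 'Name  Value [...]'.

    Assumed layout: one counter per line. nstat adds a third 'rate' column,
    which is ignored; header lines such as '#kernel' are skipped.
    """
    counters: Dict[str, int] = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) >= 2 and parts[1].isdigit():
            counters[parts[0]] = int(parts[1])
    return counters


def changed_counters(before: str, after: str) -> Dict[str, int]:
    """Return name -> delta for every counter whose value changed."""
    old, new = parse_counters(before), parse_counters(after)
    return {name: value - old.get(name, 0)
            for name, value in new.items()
            if value != old.get(name, 0)}
```

Usage: read the file once before running the router script (`before = open("/proc/net/mptcp_net/snmp").read()`), read it again afterwards, and print `changed_counters(before, after)`. Fallback- or checksum-related counters that increase would point at what the stack actually detected. The exact counter names depend on the kernel version.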

Hi,

I have been testing MPTCP v0.95 for various corner cases, one of which is TCP fallback. I have tested the TCP fallback that happens at the initial stage (due to a middlebox or an unsupported peer), and I was able to get that information via getsockopt(). Now I would like to simulate the rarer scenario of a TCP fallback that happens in the middle of the client<->server communication.

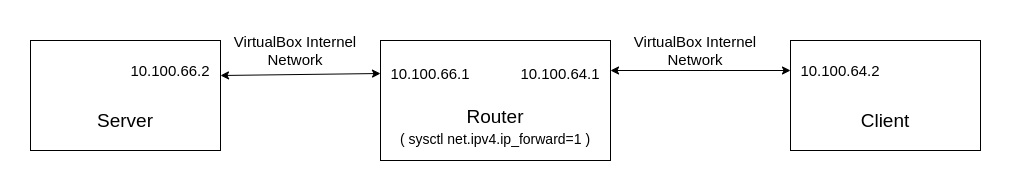

My test setup:

Both the server and the client have MPTCP enabled. The router does not have MPTCP enabled; it is only used for manipulating and forwarding packets.

On the router side, I tried to manipulate the packets using scapy and NetfilterQueue.

Test steps:

Python script used at the router:

Modified packet:

In the above test, the client and the server successfully created an MPTCP connection at the beginning. However, once I ran my Python script on the router, the server and the client could no longer talk to each other and started doing a lot of retransmissions. My expectation was that either the server or the client would recognise the MPTCP option manipulation by the middlebox and fall back to TCP.

Please check out the Wireshark captures from the 3 VMs to understand it better (sorry for the .zip format; GitHub doesn't let me attach .pcapng files).

In the above Python script I change the MPTCP option value field, but I also tried dropping the whole MPTCP option and got the same result.
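One thing that may be worth double-checking applies to both experiments. Suppose the option is changed through scapy fields and the packet is rebuilt while the old `chksum` value is still set. The TCP checksum will then be stale, and the receiving host drops the segment before MPTCP ever looks at it, which would also show up as endless retransmissions. With scapy, `del pkt[TCP].chksum` before `bytes(pkt)` forces a recompute. Removing the option outright also changes `len` and `dataofs`, so those would need recomputing too. As an alternative, the sketch below overwrites every MPTCP option (TCP option kind 30) with NOP bytes. This keeps the data offset and sequence numbers unchanged, and afterwards the code recomputes the TCP checksum. It assumes an IPv4 packet as raw bytes; `strip_mptcp_options` is a hypothetical helper, not a scapy or NetfilterQueue API.

```python
import struct

MPTCP_KIND = 30  # TCP option kind assigned to MPTCP (RFC 6824)
NOP = 1          # TCP No-Operation option, one byte long


def ones_complement_sum(data: bytes) -> int:
    """16-bit one's complement sum used by the Internet checksum (RFC 1071)."""
    if len(data) % 2:
        data += b"\x00"
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)
    return total


def strip_mptcp_options(packet: bytes) -> bytes:
    """Overwrite every MPTCP option in an IPv4/TCP packet with NOPs.

    Lengths are unchanged, so only the TCP checksum needs recomputing.
    """
    pkt = bytearray(packet)
    ihl = (pkt[0] & 0x0F) * 4
    total_len = struct.unpack_from("!H", pkt, 2)[0]
    opt_end = ihl + (pkt[ihl + 12] >> 4) * 4   # end of the TCP header
    i, changed = ihl + 20, False               # options follow the fixed 20 bytes
    while i < opt_end:
        kind = pkt[i]
        if kind == 0:                  # End of Option List
            break
        if kind == NOP:
            i += 1
            continue
        if i + 1 >= opt_end:
            break                      # truncated option: leave the rest alone
        length = pkt[i + 1]
        if length < 2 or i + length > opt_end:
            break                      # malformed length: stop parsing
        if kind == MPTCP_KIND:
            pkt[i:i + length] = bytes([NOP]) * length
            changed = True
        i += length
    if not changed:
        return bytes(packet)
    struct.pack_into("!H", pkt, ihl + 16, 0)   # zero the checksum field first
    segment = bytes(pkt[ihl:total_len])
    pseudo = bytes(pkt[12:20]) + struct.pack("!BBH", 0, 6, len(segment))
    struct.pack_into("!H", pkt, ihl + 16, ~ones_complement_sum(pseudo + segment) & 0xFFFF)
    return bytes(pkt)
```

How the peers react to data segments that suddenly lack DSS mappings is up to the MPTCP stack. The point of the sketch is only to make sure the packet is not discarded at the plain TCP level first.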

Just to verify the Python script, I forwarded the packets without changing anything, and that doesn't interrupt the client <-> server communication. So I must be doing something wrong in the packet-modification step. If someone has knowledge of this, please point me in the right direction.

wireshark_cap.zip