- Is it possible to replace Caffe (the slowest on the Python platform) with PyTorch (fastest overall) or MXNet (can beat PyTorch on parallel GPUs)?

- Is it possible to replace VGG7 with Inception or ResNet, which outperform VGG7?

Open DonaldTsang opened 6 years ago

An idea: categorize images in the database into "pure", "single-JPG", "double-JPG", "multi-JPG" (JPG as in JPG compression).

Use that as a measure of how "noisy" an image is, and then apply just the right amount of denoising so as not to overshoot.

Only the "pure" images should be used as the base dataset for testing compression and the reversal of compression.

Reference: https://www.politesi.polimi.it/bitstream/10589/132721/1/2017_04_Chen.pdf

> Is it possible to replace Caffe (the slowest on the Python platform) with PyTorch (fastest overall) or MXNet (can beat PyTorch on parallel GPUs)?

waifu2x is implemented in LuaJIT/Torch, not Caffe. Torch already seems outdated, so it would be good to switch to PyTorch, but for now I don't have the resources to do it. tsurumeso has released a Chainer version: https://github.com/tsurumeso/waifu2x-chainer

> Is it possible to replace VGG7 with Inception or ResNet, which outperform VGG7?

A ResNet model is already available in the dev branch. Benchmark: https://github.com/nagadomi/waifu2x/blob/dev/appendix/benchmark.md Unfortunately it is much slower than the current model, so it cannot be used in web services.

> An idea: categorize images in the database into "pure", "single-JPG", "double-JPG", "multi-JPG" (JPG as in JPG compression).

This has already been implemented. waifu2x can specify JPEG quality and the number of compression passes for real-time data augmentation during training. The dataset was built from images that are not JPEG compressed.
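As a rough illustration of that kind of augmentation, here is a minimal Python sketch. It is not waifu2x's actual training code (which is Lua/Torch); the function names, the quality range, and the maximum number of passes are all hypothetical placeholders, and the re-encoding step assumes Pillow is installed.

```python
import io
import random


def sample_jpeg_passes(rng, quality_range=(50, 95), max_passes=2):
    """Pick how many times to compress and a quality for each pass.

    The defaults are illustrative placeholders, not waifu2x's settings.
    """
    n_passes = rng.randint(0, max_passes)  # 0 keeps the clean image
    low, high = quality_range
    return [rng.randint(low, high) for _ in range(n_passes)]


def apply_jpeg_passes(image, qualities):
    """Re-encode a Pillow image once per quality value (requires Pillow)."""
    from PIL import Image  # imported lazily so sampling works without Pillow

    for quality in qualities:
        buffer = io.BytesIO()
        image.convert("RGB").save(buffer, format="JPEG", quality=quality)
        buffer.seek(0)
        image = Image.open(buffer)
        image.load()  # decode now, while the buffer is still available
    return image


rng = random.Random(0)
schedules = [sample_jpeg_passes(rng) for _ in range(5)]
print(schedules)  # one list of per-pass qualities for each training sample
```

Each training sample would get its own schedule, so the model sees clean, single-compressed, and multi-compressed versions of the same uncompressed source image.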

@nagadomi

> Unfortunately it is much slower than the current model

Maybe reduce the size of the ResNet by using fewer modules? And compare that with VGG5/7/9/16/19 to create a graph of epoch training speed compared to total training time and accuracy?

> waifu2x can specify JPEG quality

What about auto-detection of JPEG quality? Could that be implemented as well?

> Maybe reduce the size of the ResNet by using fewer modules? And compare that with VGG5/7/9/16/19 to create a graph of epoch training speed compared to total training time and accuracy?

With a shallow network, accuracy degrades. I think this is related to the receptive field size (in a fully convolutional network, it depends on the number of layers and the filter size). I think it may be solved with dilated convolution or a progressive approach.
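To make the receptive field argument concrete, here is a small Python sketch for stride-1 convolution stacks. The three layer lists are hypothetical examples, not actual waifu2x models: a stack of seven 3x3 layers (the shape the VGG7 name suggests), a shallower four-layer stack, and a four-layer stack with assumed dilation rates.

```python
def receptive_field(layers):
    """Receptive field (in input pixels) of stacked stride-1 convolutions.

    Each layer is a (kernel_size, dilation) pair. With stride 1, a layer
    widens the field by (kernel_size - 1) * dilation pixels.
    """
    field = 1
    for kernel_size, dilation in layers:
        field += (kernel_size - 1) * dilation
    return field


plain_7 = [(3, 1)] * 7                        # seven ordinary 3x3 layers
plain_4 = [(3, 1)] * 4                        # shallower network
dilated_4 = [(3, 1), (3, 2), (3, 4), (3, 2)]  # assumed dilation rates

print(receptive_field(plain_7))    # 15
print(receptive_field(plain_4))    # 9
print(receptive_field(dilated_4))  # 19
```

Under these assumptions, cutting the depth from seven layers to four shrinks the field from 15 to 9 pixels, while dilation recovers a field of 19 pixels at the same depth.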

> What about auto-detection of JPEG quality? Could that be implemented as well?

I have already implemented it, but it is not an open-source project. The JPEG noise level can be predicted as a classification task over sets of image patches.
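The implementation mentioned above is not public, so the following is only one conceivable way to turn per-patch class predictions into an image-level noise level: a majority vote. The integer noise levels, the function name, and the tie-breaking rule are assumptions made for illustration, and the patch classifier itself is left out.

```python
from collections import Counter


def vote_noise_level(patch_levels):
    """Combine per-patch noise-level predictions by majority vote.

    patch_levels: non-empty list of integer class labels, one per patch.
    Ties go to the lower noise level, to avoid over-denoising.
    """
    if not patch_levels:
        raise ValueError("need at least one patch prediction")
    counts = Counter(patch_levels)
    # Sort key: more votes first, then the lower level on ties.
    level, _ = max(counts.items(), key=lambda item: (item[1], -item[0]))
    return level


print(vote_noise_level([0, 1, 1, 2, 1, 0]))  # 1
print(vote_noise_level([0, 0, 2, 2]))        # 0 (tie goes to the lower level)
```

Averaging per-patch class probabilities instead of hard votes would be an equally plausible choice.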

@nagadomi what about using expert systems for JPEG noise level detection?

Looks like the ResNet version is 2.3 times slower than the upconv version, but it gets better quality than upconv with TTA (8 times slower). That means it is faster than upconv with TTA and also better in quality, so it makes sense for it to replace the normal TTA option. BTW, is there any plan to train a ResNet art model?
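The arithmetic behind that comparison, as a quick Python check. It assumes both slowdown factors are measured against the same baseline (plain upconv without TTA), which is how the figures read here.

```python
# Relative cost per image, with plain upconv as the baseline.
upconv_cost = 1.0
resnet_cost = 2.3 * upconv_cost      # "2.3 times slower than upconv"
upconv_tta_cost = 8.0 * upconv_cost  # "upconv with TTA (8 times slower)"

# How much faster ResNet is than upconv with TTA.
speedup = upconv_tta_cost / resnet_cost
print(round(speedup, 2))  # 3.48
```

So under that reading, the ResNet model would be roughly 3.5 times faster than upconv with TTA.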

@2ji3150 @nagadomi New idea: NASNet

It looks like NASNet can outperform most other neural network architectures with LESS computation.

As a reference: https://github.com/nagadomi/waifu2x/issues/216

(BTW thanks @Yolkis for suggesting that)

We should consider training speed and model generation speed.

Generally, pooling layers cannot be used in super-resolution tasks. In network architectures for classification, the resolution decreases as the number of layers increases, but in super-resolution it does not.
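The difference in resolution can be traced layer by layer. The sketch below uses two hypothetical toy stacks, not real models: a classification-style stack that alternates padded 3x3 convolutions with 2x2 pooling, and a super-resolution-style stack of padded 3x3 convolutions only.

```python
def trace_resolution(size, layers):
    """Return the spatial size after each layer, starting with the input.

    Each layer is a (kernel, stride, padding) triple; the usual formula
    out = (in + 2 * padding - kernel) // stride + 1 is applied per layer.
    """
    sizes = [size]
    for kernel, stride, padding in layers:
        size = (size + 2 * padding - kernel) // stride + 1
        sizes.append(size)
    return sizes


conv3x3 = (3, 1, 1)  # padded 3x3 convolution, keeps the size
pool2x2 = (2, 2, 0)  # 2x2 pooling with stride 2, halves the size

classifier_like = [conv3x3, pool2x2, conv3x3, pool2x2]
super_res_like = [conv3x3] * 4

print(trace_resolution(64, classifier_like))  # [64, 64, 32, 32, 16]
print(trace_resolution(64, super_res_like))   # [64, 64, 64, 64, 64]
```

Every pooling step discards spatial detail that a super-resolution output would need to reproduce, which is why the second stack keeps the full resolution throughout.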

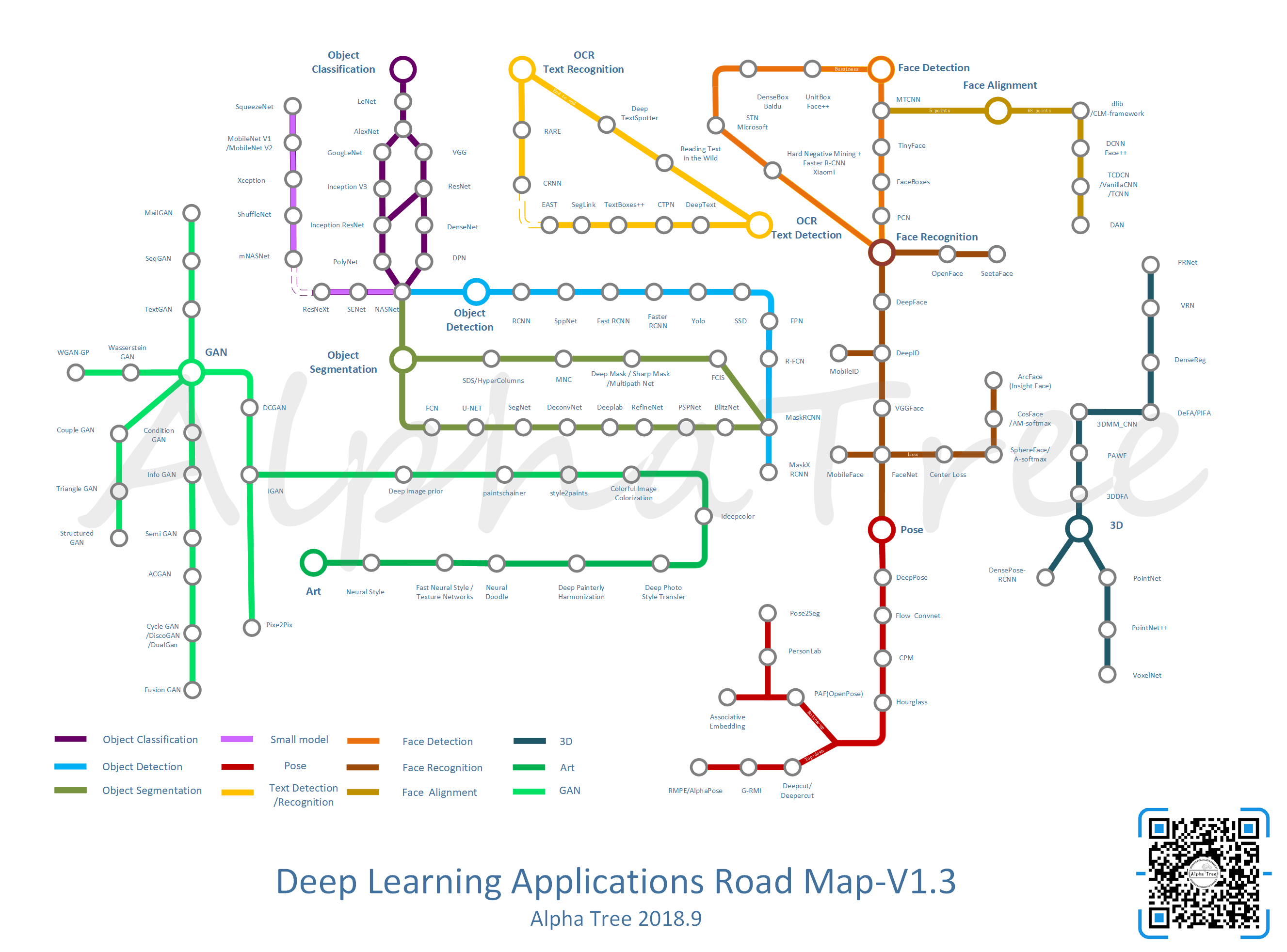

@nagadomi is it possible to look at this graph (the purple parts) and see if there are alternatives for waifu2x?

@DonaldTsang I added a new model last week. Benchmark: https://github.com/nagadomi/waifu2x/blob/master/appendix/benchmark.md#art (cunet/art). It is two cascaded U-Nets extended with SEBlock (Squeeze-and-Excitation Networks).

Edit: In the above figure, RefineNet (Stack-U-Net) is a similar model.

@nagadomi I come from this issue. Thanks for sharing the new model. Have you tried atrous convolutions on image up-scaling?

There is a paper that uses atrous convolutions to segment small objects in satellite images. The model increases the atrous rates and then decreases them. I coded a similar model for my manga text segmentation project and found a clear improvement in accuracy. I am rewriting and testing a similar model for image upscaling. The preliminary results seem acceptable, and I plan to train it thoroughly on a server.

@yu45020 I have tried dilated/atrous convolution. It is better than an ordinary FCN, but the improvement is not dramatic. Currently, I think that a Residual U-Net (Concat replaced with Add) has better speed and accuracy than fully dilated convolution networks.

I also develop an OCR engine for manga. It is a closed-source product so I cannot describe the details, but there are results from p. 59 onward in this slide deck (Japanese).
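One way to see why replacing Concat with Add can help speed: concatenation doubles the channel count going into the next convolution, while addition keeps it unchanged. The sketch below is a toy Python illustration with made-up helper names and an assumed width of 64 channels. It is not the actual model code.

```python
def merge_skip(decoder, encoder, mode):
    """Merge a skip connection; each input is a list of channels.

    "concat" stacks the channels, "add" sums them element by element.
    """
    if mode == "concat":
        return decoder + encoder
    if mode == "add":
        return [[d + e for d, e in zip(dec_ch, enc_ch)]
                for dec_ch, enc_ch in zip(decoder, encoder)]
    raise ValueError(f"unknown mode: {mode}")


def conv3x3_weights(in_channels, out_channels):
    """Weight count of a 3x3 convolution (bias ignored)."""
    return in_channels * out_channels * 3 * 3


decoder = [[1.0, 2.0], [3.0, 4.0]]  # 2 channels, 2 pixels each
encoder = [[0.5, 0.5], [1.0, 1.0]]

print(len(merge_skip(decoder, encoder, "concat")))  # 4 channels
print(len(merge_skip(decoder, encoder, "add")))     # 2 channels

# With 64 channels per branch, the convolution after the merge needs:
print(conv3x3_weights(128, 64))  # 73728 weights after concat
print(conv3x3_weights(64, 64))   # 36864 weights after add
```

The convolution after an additive skip needs half the weights and multiply-adds of the one after a concatenating skip, for the same output width.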

@nagadomi Thanks for the advice! I will also check a U-Net like model before training.

Your project seems to accomplish what I want. It is very interesting and seems comparable to ABBYY's engine. My project's in-sample predictions achieve similar results, but my goal is only to segment all text pixels. Back to your product: I notice the slides come from a seminar. Do you plan to publish a technical report?

@nagadomi @yu45020 any news? If yes, we can write something up in https://github.com/nagadomi/waifu2x/issues/251