Hi @lantzemil,

Thanks for reporting.

I tried to reproduce this issue in a native environment (not in an Ingress controller), but I could not.

If the database file isn't there, then I get an error message and Nginx won't start:

Mar 07 19:59:36 vm-debiansid nginx[42452]: 2024/03/07 19:59:36 [emerg] 42452#42452: "modsecurity_rules_file" directive Rules error. File: /etc/nginx/modsecurity.conf. Line: 273. Column: 19. Failed to load locate the GeoDB file>

Mar 07 19:59:36 vm-debiansid nginx[42452]: nginx: configuration file /etc/nginx/nginx.conf test failed

How do you control your Nginx process? I mean, do you start it through a systemd service file? (Sorry for the question, but I don't know Ingress.) Maybe that forces the reload again and again?

Describe the bug

If the directive SecGeoLookupDb /etc/nginx/geoip/GeoLite2-City.mmdb is present in the configuration and the .mmdb file is missing, broken, or unreadable for some reason, the ingress-controller fails to start and ends up in a restart loop.

To Reproduce

Using nginx, ingress-controller and modsecurity WAF in Kubernetes.

Setup can be done using minikube and this guide: https://thelinuxnotes.com/index.php/how-to-install-and-configure-modsecurity-waf-in-kubernetes/

Add SecGeoLookupDb /etc/nginx/geoip/GeoLite2-City.mmdb and use-geoip2: "true" in your ConfigMap and apply it. Restart.
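For reference, the ConfigMap change described above might look roughly like the sketch below. It assumes the ConfigMap is named ingress-nginx-controller (this depends on how the controller was installed), that the directive is passed through the modsecurity-snippet key, and that the manifest file name is arbitrary. The kubectl commands are left as comments because the Deployment name is also an assumption.

```shell
# Write a minimal ConfigMap manifest to a local file (file name is arbitrary).
cat > modsecurity-geoip-configmap.yaml <<'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: ingress-nginx-controller   # assumed name, check your installation
  namespace: ingress-nginx
data:
  enable-modsecurity: "true"
  use-geoip2: "true"
  modsecurity-snippet: |
    SecGeoLookupDb /etc/nginx/geoip/GeoLite2-City.mmdb
EOF

# Then apply it and restart the controller, for example:
# kubectl apply -f modsecurity-geoip-configmap.yaml
# kubectl rollout restart deployment ingress-nginx-controller --namespace ingress-nginx
```

If the .mmdb file is absent inside the controller pod at this point, the restart should trigger the behavior described in this report.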

Logs and dumps

Run

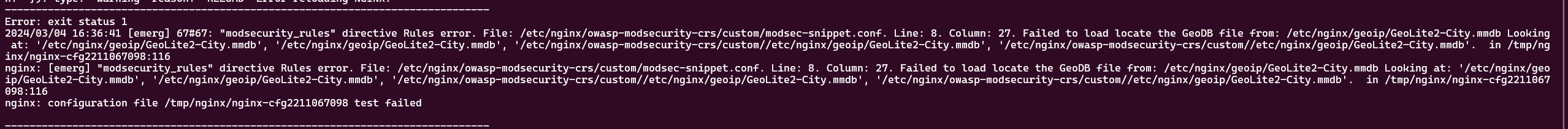

kubectl logs -f --namespace ingress-nginx --selector=app.kubernetes.io/component=controller

The following error can be seen

kubectl get pods -A

This will show that the pod is not ready and that the ingress-controller is down.

Expected behavior

There should be some form of built-in failsafe in this. If the .mmdb file is not found, log an error and disable this functionality, but don't bring down the entire ingress-controller.
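To illustrate the kind of failsafe I mean, here is a rough shell sketch. It is not how ingress-nginx or ModSecurity work today; geodb_usable and render_snippet are hypothetical helper names made up for this example. It only checks that the file exists, is readable, and is non-empty, so it would not catch a corrupted database.

```shell
#!/bin/sh
# Hypothetical pre-start guard, NOT part of ingress-nginx or ModSecurity.

# Returns 0 if the GeoIP database looks usable, non-zero otherwise.
geodb_usable() {
    db_path="$1"
    # -f: regular file, -r: readable, -s: size greater than zero
    [ -f "$db_path" ] && [ -r "$db_path" ] && [ -s "$db_path" ]
}

# Prints the SecGeoLookupDb directive only when the database is usable.
# Otherwise it logs a warning to stderr and prints nothing, so the
# generated configuration simply has GeoIP lookups disabled.
render_snippet() {
    db_path="$1"
    if geodb_usable "$db_path"; then
        printf 'SecGeoLookupDb %s\n' "$db_path"
    else
        printf 'warning: %s is missing or unreadable, GeoIP lookups disabled\n' "$db_path" >&2
    fi
}
```

Calling render_snippet /etc/nginx/geoip/GeoLite2-City.mmdb would then emit either the directive or just a warning, and Nginx could start in both cases.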