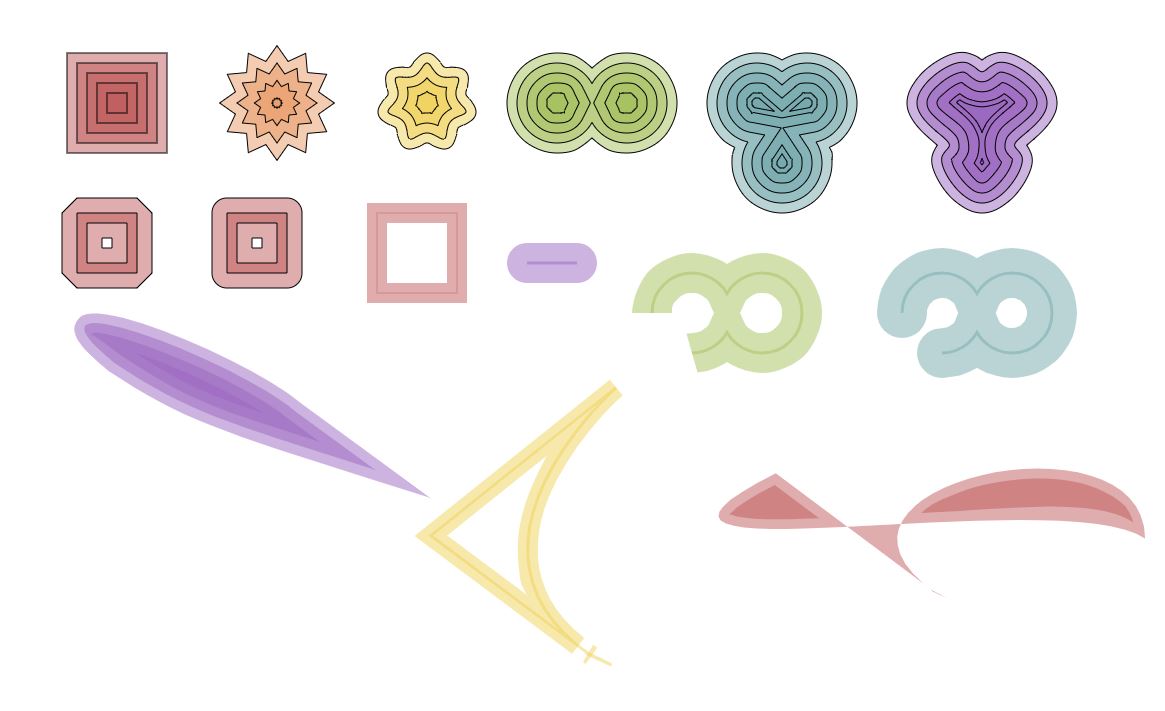

The variable-stroke-expansion that @microbians shows here is slick, to say the least.

Is this stroke expansion done via geometric path offsetting, or is it just a stroke-rendering trick provided by Flash itself?

Do the approaches you guys outlined above cover this kind of functionality (variable stroke expansion)? It could work wonders for pencil tools and sketch-like drawings.

I've been following the library for some time and have started a spare-time project based on it. I'm very interested in the ability to expand strokes into paths, particularly for Bézier curves (where I know the problem is mathematically complex). Has a strategy or timeline for implementation been discussed? I may be able to contribute if new contributors are welcome.

I wasn't sure whether the "outline" feature is separate from what I would call "offset" in the CAD world. Is there some way to get equations for the position of the stroke edge that is less complex than a full Bézier-offset solution? See http://pomax.github.io/bezierinfo/#offsetting for a very detailed explanation of the math involved.
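To make the question concrete, here is a rough sketch of the simplest thing I can think of: evaluating the stroke edge pointwise as B(t) + d(t)·n(t), where n(t) is the unit normal of the curve. This is not the library's API and not an exact offset curve (the true offset of a Bézier is generally not a Bézier); all function names below are hypothetical, and `half_width` is an assumed callable that makes the width vary along the curve.

```python
import math
from typing import Callable, List, Tuple

Point = Tuple[float, float]

def cubic_point(p0: Point, p1: Point, p2: Point, p3: Point, t: float) -> Point:
    """Evaluate a cubic Bezier at parameter t in [0, 1]."""
    u = 1.0 - t
    b0, b1, b2, b3 = u * u * u, 3 * u * u * t, 3 * u * t * t, t * t * t
    return (b0 * p0[0] + b1 * p1[0] + b2 * p2[0] + b3 * p3[0],
            b0 * p0[1] + b1 * p1[1] + b2 * p2[1] + b3 * p3[1])

def cubic_tangent(p0: Point, p1: Point, p2: Point, p3: Point, t: float) -> Point:
    """First derivative of the cubic Bezier at t (not normalized)."""
    u = 1.0 - t
    c0, c1, c2 = 3 * u * u, 6 * u * t, 3 * t * t
    return (c0 * (p1[0] - p0[0]) + c1 * (p2[0] - p1[0]) + c2 * (p3[0] - p2[0]),
            c0 * (p1[1] - p0[1]) + c1 * (p2[1] - p1[1]) + c2 * (p3[1] - p2[1]))

def offset_point(p0: Point, p1: Point, p2: Point, p3: Point,
                 t: float, d: float) -> Point:
    """Point at signed distance d from the curve along its normal at t."""
    x, y = cubic_point(p0, p1, p2, p3, t)
    dx, dy = cubic_tangent(p0, p1, p2, p3, t)
    length = math.hypot(dx, dy)
    if length == 0.0:
        raise ValueError("tangent vanishes at t; normal is undefined")
    # Rotate the unit tangent by 90 degrees counter-clockwise (y-up convention).
    nx, ny = -dy / length, dx / length
    return (x + d * nx, y + d * ny)

def stroke_edges(p0: Point, p1: Point, p2: Point, p3: Point,
                 half_width: Callable[[float], float],
                 samples: int = 16) -> Tuple[List[Point], List[Point]]:
    """Sample both stroke edges as polylines; half_width(t) may vary with t."""
    left, right = [], []
    for i in range(samples + 1):
        t = i / samples
        d = half_width(t)
        left.append(offset_point(p0, p1, p2, p3, t, d))
        right.append(offset_point(p0, p1, p2, p3, t, -d))
    return left, right
```

Calling `stroke_edges(p0, p1, p2, p3, lambda t: 1 + t)` would give two polylines for a stroke that widens toward the end of the curve. The obvious limitations: the result is only a polyline approximation, the edge folds back on itself wherever the offset distance exceeds the local radius of curvature, and joins and caps are not handled at all.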

Thanks!