The Rust backend is not in a position to generate phis or code that uses SSA values directly in general.

Note that the optimized IR for the Rust code is also SSA and is also exploiting phis. When comparing optimized IR there is ultimately just one difference between the eq0 case and the Clang IR – Rust code has a bb2 block that acts as a phi springboard. That's what LLVM is ultimately unable to see through, it seems.

tl;dr: The IR currently generated for `&&` chains is too hard for LLVM to optimize, and it always compiles to a chain of jumps.

I started investigating this after this Reddit thread about `PartialEq` implementations not using SIMD instructions.

Current Rust PartialEq

I assumed that the `PartialEq` implementation generates code like:

handwritten eq

```rust
pub struct Blueprint {
    pub fuel_tank_size: u32,
    pub payload: u32,
    pub wheel_diameter: u32,
    pub wheel_width: u32,
    pub storage: u32,
}

impl PartialEq for Blueprint {
    fn eq(&self, other: &Self) -> bool {
        (self.fuel_tank_size == other.fuel_tank_size)
            && (self.payload == other.payload)
            && (self.wheel_diameter == other.wheel_diameter)
            && (self.wheel_width == other.wheel_width)
            && (self.storage == other.storage)
    }
}
```

and it produces this asm:

```asm
<example::Blueprint as core::cmp::PartialEq>::eq:
        mov     eax, dword ptr [rdi]
        cmp     eax, dword ptr [rsi]
        jne     .LBB0_1
        mov     eax, dword ptr [rdi + 4]
        cmp     eax, dword ptr [rsi + 4]
        jne     .LBB0_1
        mov     eax, dword ptr [rdi + 8]
        cmp     eax, dword ptr [rsi + 8]
        jne     .LBB0_1
        mov     eax, dword ptr [rdi + 12]
        cmp     eax, dword ptr [rsi + 12]
        jne     .LBB0_1
        mov     ecx, dword ptr [rdi + 16]
        mov     al, 1
        cmp     ecx, dword ptr [rsi + 16]
        jne     .LBB0_1
        ret
.LBB0_1:
        xor     eax, eax
        ret
```

godbolt link for handwritten Eq (rustc 1.51.0, `-C opt-level=3 -C target-cpu=haswell`)

This is quite inefficient because it has 5 branches, which could probably be replaced by a few SIMD instructions.

State on Clang land

So I decided to look at how Clang compiles similar code, to find out whether this is an LLVM issue. I wrote this code:

clang code and asm

```cpp
#include <cstdint>

struct Blueprint {
    uint32_t fuel_tank_size;
    uint32_t payload;
    uint32_t wheel_diameter;
    uint32_t wheel_width;
    uint32_t storage;
};

bool operator==(const Blueprint& th, const Blueprint& arg) noexcept {
    return th.fuel_tank_size == arg.fuel_tank_size
        && th.payload == arg.payload
        && th.wheel_diameter == arg.wheel_diameter
        && th.wheel_width == arg.wheel_width
        && th.storage == arg.storage;
}
```

And asm

```asm
operator==(Blueprint const&, Blueprint const&): # @operator==(Blueprint const&, Blueprint const&)
        movdqu  xmm0, xmmword ptr [rdi]
        movdqu  xmm1, xmmword ptr [rsi]
        pcmpeqb xmm1, xmm0
        movd    xmm0, dword ptr [rdi + 16]      # xmm0 = mem[0],zero,zero,zero
        movd    xmm2, dword ptr [rsi + 16]      # xmm2 = mem[0],zero,zero,zero
        pcmpeqb xmm2, xmm0
        pand    xmm2, xmm1
        pmovmskb eax, xmm2
        cmp     eax, 65535
        sete    al
        ret
```

Also, godbolt with Clang code (x86-64 clang 11.0.1, `-O3`).

As you can see, Clang successfully optimizes the code to use SIMD instructions and never generates branches.

Rust variants of good asm generation

I checked other code variants in Rust.

Rust variants and ASM

```rust
pub struct Blueprint {
    pub fuel_tank_size: u32,
    pub payload: u32,
    pub wheel_diameter: u32,
    pub wheel_width: u32,
    pub storage: u32,
}

// Equivalent of #[derive(PartialEq)]
pub fn eq0(a: &Blueprint, b: &Blueprint) -> bool {
    (a.fuel_tank_size == b.fuel_tank_size)
        && (a.payload == b.payload)
        && (a.wheel_diameter == b.wheel_diameter)
        && (a.wheel_width == b.wheel_width)
        && (a.storage == b.storage)
}

// Optimizes well but changes semantics
pub fn eq1(a: &Blueprint, b: &Blueprint) -> bool {
    (a.fuel_tank_size == b.fuel_tank_size)
        & (a.payload == b.payload)
        & (a.wheel_diameter == b.wheel_diameter)
        & (a.wheel_width == b.wheel_width)
        & (a.storage == b.storage)
}

// Optimizes well and has the same semantics as PartialEq
pub fn eq2(a: &Blueprint, b: &Blueprint) -> bool {
    if a.fuel_tank_size != b.fuel_tank_size {
        return false;
    }
    if a.payload != b.payload {
        return false;
    }
    if a.wheel_diameter != b.wheel_diameter {
        return false;
    }
    if a.wheel_width != b.wheel_width {
        return false;
    }
    if a.storage != b.storage {
        return false;
    }
    true
}
```

```asm
example::eq0:
        mov     eax, dword ptr [rdi]
        cmp     eax, dword ptr [rsi]
        jne     .LBB0_1
        mov     eax, dword ptr [rdi + 4]
        cmp     eax, dword ptr [rsi + 4]
        jne     .LBB0_1
        mov     eax, dword ptr [rdi + 8]
        cmp     eax, dword ptr [rsi + 8]
        jne     .LBB0_1
        mov     eax, dword ptr [rdi + 12]
        cmp     eax, dword ptr [rsi + 12]
        jne     .LBB0_1
        mov     ecx, dword ptr [rdi + 16]
        mov     al, 1
        cmp     ecx, dword ptr [rsi + 16]
        jne     .LBB0_1
        ret
.LBB0_1:
        xor     eax, eax
        ret

example::eq1:
        mov     eax, dword ptr [rdi + 16]
        cmp     eax, dword ptr [rsi + 16]
        vmovdqu xmm0, xmmword ptr [rdi]
        sete    cl
        vpcmpeqd xmm0, xmm0, xmmword ptr [rsi]
        vmovmskps eax, xmm0
        cmp     al, 15
        sete    al
        and     al, cl
        ret

example::eq2:
        vmovdqu xmm0, xmmword ptr [rdi]
        vmovd   xmm1, dword ptr [rdi + 16]
        vmovd   xmm2, dword ptr [rsi + 16]
        vpxor   xmm1, xmm1, xmm2
        vpxor   xmm0, xmm0, xmmword ptr [rsi]
        vpor    xmm0, xmm0, xmm1
        vptest  xmm0, xmm0
        sete    al
        ret
```

And godbolt link with variants
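The semantic difference between `&&` and `&` mentioned in the code comments can be made visible with a small sketch. The `check` helper and the call counters are hypothetical and exist only for illustration. For plain integer fields both operators return the same boolean; what differs is how many operands get evaluated.

```rust
use std::cell::Cell;

/// Hypothetical helper: records that it was called, then returns `value`.
fn check(calls: &Cell<u32>, value: bool) -> bool {
    calls.set(calls.get() + 1);
    value
}

/// Returns how many operands were evaluated by a `&&` chain and by a `&` chain
/// when the first operand is already `false`.
fn count_evaluations() -> (u32, u32) {
    // `&&` short-circuits: evaluation stops at the first `false`.
    let lazy = Cell::new(0);
    let _ = check(&lazy, false) && check(&lazy, true) && check(&lazy, true);

    // `&` is eager: every operand is evaluated, whatever the earlier results were.
    let eager = Cell::new(0);
    let _ = check(&eager, false) & check(&eager, true) & check(&eager, true);

    (lazy.get(), eager.get())
}

fn main() {
    let (lazy, eager) = count_evaluations();
    println!("&& evaluated {lazy} operand(s), & evaluated {eager}");
}
```

With `&`, all five field loads in `eq1` always happen, which is why the compiler is free to merge them into wide loads without first proving that the skipped loads would have been safe.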

Function `eq0` uses `&&`, `eq1` uses `&` so it has different semantics, and `eq2` has similar semantics but is optimized better. `eq1` is a very simple case (rustc generates a single block, which is easily optimized) and has different semantics, so we skip it. We will use `eq0` and `eq2` further.

Investigation of LLVM IR

The Clang and `eq2` cases prove that LLVM is capable of optimizing `&&`, so I started to investigate the generated LLVM IR and the optimized LLVM IR. I decided to check the differences between the IR for Clang, `eq0`, and `eq2`.

For each case I will first show the generated LLVM IR, its diagram, and the optimized LLVM IR. Also, the code in my files differs from the godbolt code, so function names don't match the case names.

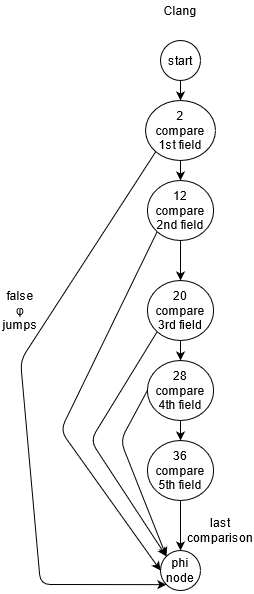

Clang IR

I compiled the code to LLVM IR using `clang++ is_sorted.cpp -O0 -S -emit-llvm`, removed the `optnone` attribute manually, then looked at the optimizations using `opt -O3 -print-before-all -print-after-all 2>passes.ll`.

Code and diagrams

real code

```cpp
#include <cstdint>

struct Blueprint {
    uint32_t fuel_tank_size;
    uint32_t payload;
    uint32_t wheel_diameter;
    uint32_t wheel_width;
    uint32_t storage;
};

bool operator==(const Blueprint& th, const Blueprint& arg) noexcept {
    return th.fuel_tank_size == arg.fuel_tank_size
        && th.payload == arg.payload
        && th.wheel_diameter == arg.wheel_diameter
        && th.wheel_width == arg.wheel_width
        && th.storage == arg.storage;
}
```

As you can see, the IR Clang originally generated doesn't change much during optimization; only the copying from the source structs to temporary locals is removed. The original control flow is very clear, and the last block uses a single phi node with many inputs to produce the result value.

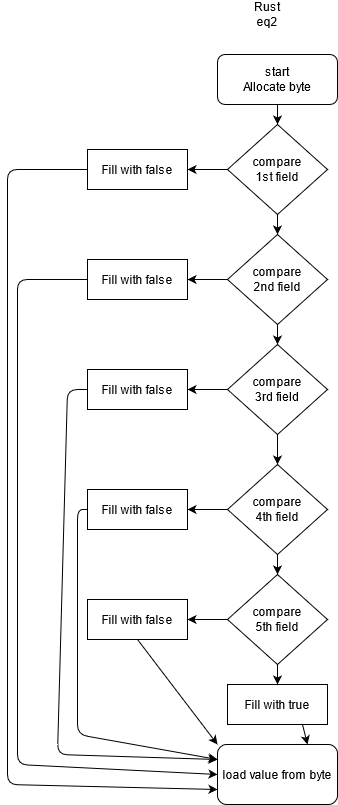

Rust eq2 case

I generated the LLVM IR using this command:

`rustc +nightly cmp.rs --emit=llvm-ir -C opt-level=3 -C codegen-units=1 --crate-type=rlib -C 'llvm-args=-print-after-all -print-before-all' 2>passes.ll`

Rust eq2 case IR and graphs

real code

```rust
pub struct Blueprint {
    pub fuel_tank_size: u32,
    pub payload: u32,
    pub wheel_diameter: u32,
    pub wheel_width: u32,
    pub storage: u32,
}

impl PartialEq for Blueprint {
    fn eq(&self, other: &Self) -> bool {
        if self.fuel_tank_size != other.fuel_tank_size {
            return false;
        }
        if self.payload != other.payload {
            return false;
        }
        if self.wheel_diameter != other.wheel_diameter {
            return false;
        }
        if self.wheel_width != other.wheel_width {
            return false;
        }
        if self.storage != other.storage {
            return false;
        }
        true
    }
}
```

In general, the algorithm can be described as

… `false` into the byte and jump to end.

This indirect usage of the byte is optimized in the mem2reg phase into pretty SSA form, and the control flow remains forward only.

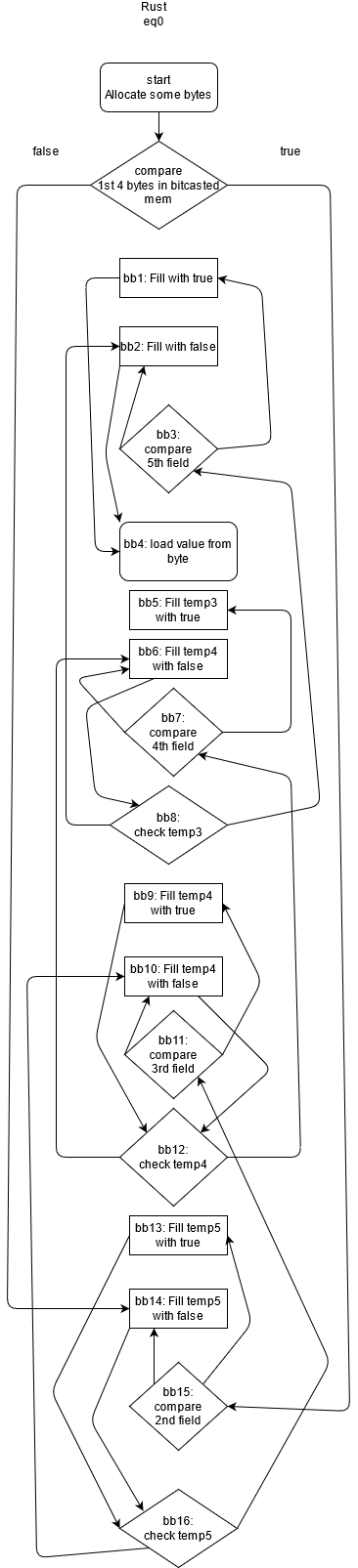

Rust eq0 case (which is used very often in practice and optimizes badly)

IR code and control flow diagrams

Real code

```rust
pub struct Blueprint {
    pub fuel_tank_size: u32,
    pub payload: u32,
    pub wheel_diameter: u32,
    pub wheel_width: u32,
    pub storage: u32,
}

impl PartialEq for Blueprint {
    fn eq(&self, other: &Self) -> bool {
        (self.fuel_tank_size == other.fuel_tank_size)
            && (self.payload == other.payload)
            && (self.wheel_diameter == other.wheel_diameter)
            && (self.wheel_width == other.wheel_width)
            && (self.storage == other.storage)
    }
}
```

Well, it is really hard to tell what's going on in the generated code. The control-flow operations are placed basically in reversed order (the condition checked first is put in the last position, then it jumps back in both cases, then jumps again after the condition). This layout doesn't change during the optimization passes, so the final generated asm ends up with a lot of jumps and no SIMD usage. It looks like LLVM fails to reorganize these blocks into a more natural order, and probably fails to see through the many temporary allocas.

Conclusions of LLVM IR research

Let's look at the control flow diagrams one last time.

Clang:

Rust eq2 (with manual early returns)

Rust eq0 with usage of the `&&` operator.

Finally, I have 2 ideas for new algorithms which could be generated by proper codegen for `&&` chains:

First approach

We need to exploit φ nodes with lots of inputs, as in the Clang approach. Pseudocode:

This is probably the best solution, because LLVM tends to handle the Clang approach better and this code is already in SSA form, which the optimization passes handle well.
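Rust source cannot spell a φ node directly, so the following is only a source-level analogue of the shape described above, not the IR itself and not what rustc is known to emit. The function name `eq_phi_style` is hypothetical. Every `break 'end false` is one edge into the single merge point, and the value carried by the `break` plays the role of one φ input. It assumes a toolchain with labeled block `break` values (stable since Rust 1.65).

```rust
pub struct Blueprint {
    pub fuel_tank_size: u32,
    pub payload: u32,
    pub wheel_diameter: u32,
    pub wheel_width: u32,
    pub storage: u32,
}

/// Hypothetical illustration: one merge point ('end) with many incoming values.
pub fn eq_phi_style(a: &Blueprint, b: &Blueprint) -> bool {
    'end: {
        // Each early exit jumps forward to 'end and contributes the constant `false`.
        if a.fuel_tank_size != b.fuel_tank_size {
            break 'end false;
        }
        if a.payload != b.payload {
            break 'end false;
        }
        if a.wheel_diameter != b.wheel_diameter {
            break 'end false;
        }
        if a.wheel_width != b.wheel_width {
            break 'end false;
        }
        // The fall-through edge contributes the result of the last comparison.
        a.storage == b.storage
    }
}
```

Control flow here is forward only, and the result is never stored to memory on the way to the merge point.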

Second approach

Pseudocode

This version is less friendly to the optimizer because we use a pointer here, but it would be converted to SSA form in the mem2reg phase of optimization.
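A source-level analogue of this second shape, again only a sketch with a hypothetical name (`eq_slot_style`): the local `result` stands in for the stack byte, every failing comparison writes `false` into it and jumps forward to the end, and the value is read back once at the end. Whether rustc would emit exactly this IR is an assumption, not something verified here.

```rust
pub struct Blueprint {
    pub fuel_tank_size: u32,
    pub payload: u32,
    pub wheel_diameter: u32,
    pub wheel_width: u32,
    pub storage: u32,
}

/// Hypothetical illustration: a result slot that is written on every path.
pub fn eq_slot_style(a: &Blueprint, b: &Blueprint) -> bool {
    // Stands in for the byte allocated on the stack; it is assigned exactly once per path.
    let result: bool;
    'end: {
        if a.fuel_tank_size != b.fuel_tank_size {
            result = false;
            break 'end;
        }
        if a.payload != b.payload {
            result = false;
            break 'end;
        }
        if a.wheel_diameter != b.wheel_diameter {
            result = false;
            break 'end;
        }
        if a.wheel_width != b.wheel_width {
            result = false;
            break 'end;
        }
        // Last block: store the final comparison into the slot.
        result = a.storage == b.storage;
    }
    // Single read of the slot at the end.
    result
}
```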

Implementing such algorithms would probably require handling chains of `&&` operators as one prefix operator with many arguments, e.g. `&&(arg0, arg1, ..., argN)`.

I don't know which part of the pipeline needs to be changed to fix this, or which of my suggested code shapes is easier to produce.
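As a rough sketch of what treating a chain as one n-ary operator could mean, the toy expression type below flattens nested binary `&&` nodes into an ordered operand list. `Expr`, `flatten_and`, and `and_operands` are hypothetical names invented for this illustration; they are not rustc data structures or APIs.

```rust
/// Toy expression tree: a named leaf condition or a binary `&&` node.
#[derive(Debug)]
enum Expr {
    Cond(&'static str),
    And(Box<Expr>, Box<Expr>),
}

/// Collects the leaf conditions of a `&&` chain, left to right.
fn flatten_and(expr: &Expr, out: &mut Vec<&'static str>) {
    match expr {
        Expr::Cond(name) => out.push(*name),
        Expr::And(lhs, rhs) => {
            // In-order traversal keeps the short-circuit evaluation order.
            flatten_and(lhs, out);
            flatten_and(rhs, out);
        }
    }
}

/// Returns the operands of `&&(arg0, arg1, ..., argN)` for a nested chain.
fn and_operands(expr: &Expr) -> Vec<&'static str> {
    let mut out = Vec::new();
    flatten_and(expr, &mut out);
    out
}

fn main() {
    // `c0 && c1 && c2` parses as `(c0 && c1) && c2`.
    let expr = Expr::And(
        Box::new(Expr::And(
            Box::new(Expr::Cond("c0")),
            Box::new(Expr::Cond("c1")),
        )),
        Box::new(Expr::Cond("c2")),
    );
    println!("{:?}", and_operands(&expr));
}
```

Once the operands are available as a flat list, a code generator could emit one check per operand with all failure edges pointing at a single merge block, instead of nesting one merge per binary node.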

Also, I very much expect the same problem with the `||` operator implementation.

Importance and final thoughts

This bug effectively prevents SIMD optimizations in most `#[derive(PartialEq)]` implementations and in some other places too, so fixing it could lead to big performance gains. I hope my investigation of this bug helps to fix it.

Also, sorry for any weird wording and grammar mistakes; English isn't my native language.

And finally, the rustc and clang versions I used: