I'm thinking maybe an extra featureStatistics method on FeatureExtractor, so this is done post-transformation. We can build one Algebird Moments per column easily.
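
Rough sketch of what I mean by per-column stats, in plain Scala so it runs without dependencies. `ColumnStats` is a hypothetical stand-in for a mergeable moments summary (in practice Algebird's Moments would play this role), and the `featureStatistics` signature and the `Iterable[Array[Double]]` row type are assumptions for illustration, not the existing FeatureExtractor API.

```scala
// Hypothetical stand-in for a mergeable per-column summary: count, mean and
// M2 (sum of squared deviations from the mean).
final case class ColumnStats(count: Long, mean: Double, m2: Double) {
  // Merge two partial summaries using the pairwise (parallel) variance update.
  def +(that: ColumnStats): ColumnStats =
    if (count == 0L) that
    else if (that.count == 0L) this
    else {
      val n = count + that.count
      val delta = that.mean - mean
      ColumnStats(
        n,
        mean + delta * that.count / n,
        m2 + that.m2 + delta * delta * count * that.count / n
      )
    }

  // Population variance; NaN when no values were seen.
  def variance: Double = if (count == 0L) Double.NaN else m2 / count
}

object ColumnStats {
  // Summary of a single observed value.
  def of(x: Double): ColumnStats = ColumnStats(1L, x, 0.0)
}

object FeatureStatistics {
  // One summary per output column, computed over already-transformed rows.
  // Assumes every row has the same number of columns.
  def featureStatistics(rows: Iterable[Array[Double]]): Array[ColumnStats] =
    rows
      .map(_.map(ColumnStats.of))
      .reduceOption((a, b) => a.zip(b).map { case (x, y) => x + y })
      .getOrElse(Array.empty[ColumnStats])
}
```

Since the summaries merge associatively, the same reduce should carry over to a distributed collection, which is why doing this on the transformed output looks cheap.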

Optionally we can also let users opt in to a subset of transformers, but that's extra complexity.

OTOH I'm not sure we can compute stats pre-transformation, since that doesn't make sense for all input types, e.g. strings or vectors.

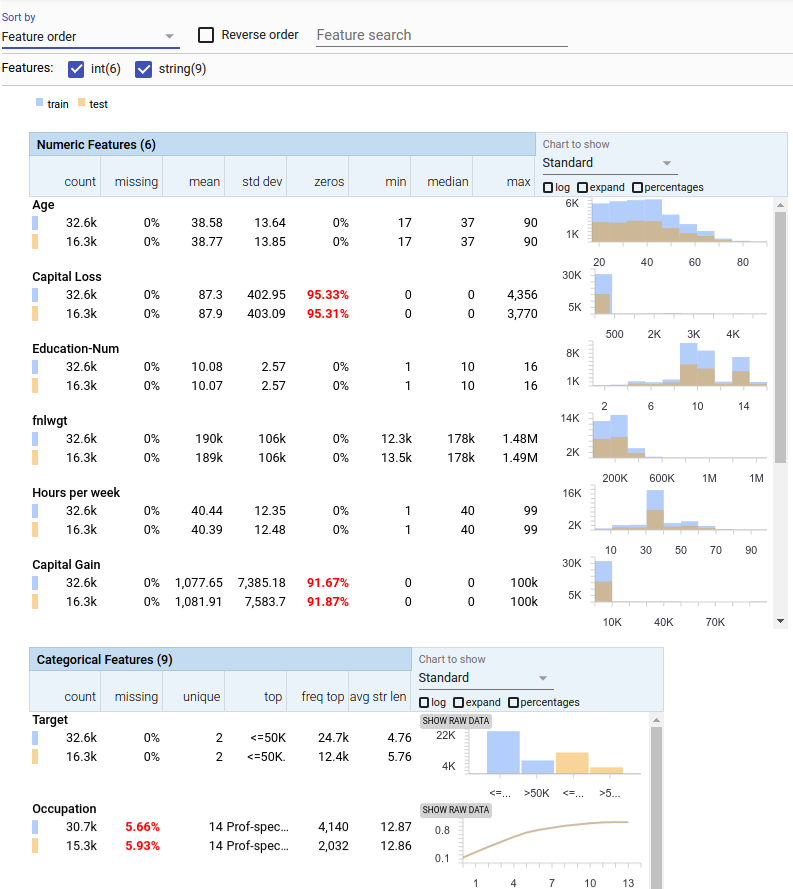

Could be useful for debugging. A couple of thoughts