I used a my_training.txt file that includes a list of training images instead of a path to the folder of images and annotations, but it always returns AssertionError: No images found in /path_to_my_txt_file/my_training.txt. Could anyone kindly give some pointers to where it went wrong? Thanks

📚 This guide explains how to train your own custom dataset with YOLOv5 🚀. See YOLOv5 Docs for additional details. UPDATED 13 April 2023.

Before You Start

Clone repo and install requirements.txt in a Python>=3.7.0 environment, including PyTorch>=1.7. Models and datasets download automatically from the latest YOLOv5 release.

Train On Custom Data

Creating a custom model to detect your objects is an iterative process of collecting and organizing images, labeling your objects of interest, training a model, deploying it into the wild to make predictions, and then using that deployed model to collect examples of edge cases to repeat and improve.

1. Create Dataset

YOLOv5 models must be trained on labelled data in order to learn classes of objects in that data. There are two options for creating your dataset before you start training:

Use Roboflow to create your dataset in YOLO format ⭐

### 1.1 Collect Images

Your model will learn by example. Training on images similar to the ones it will see in the wild is of the utmost importance. Ideally, you will collect a wide variety of images from the same configuration (camera, angle, lighting, etc.) as you will ultimately deploy your project. If this is not possible, you can start from [a public dataset](https://universe.roboflow.com/?ref=ultralytics) to train your initial model and then [sample images from the wild during inference](https://blog.roboflow.com/computer-vision-active-learning-tips/?ref=ultralytics) to improve your dataset and model iteratively.

### 1.2 Create Labels

Once you have collected images, you will need to annotate the objects of interest to create a ground truth for your model to learn from.

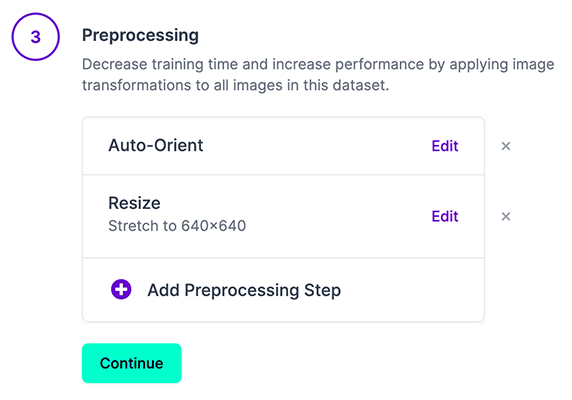

[Roboflow Annotate](https://roboflow.com/annotate?ref=ultralytics) is a simple web-based tool for managing and labeling your images with your team and exporting them in [YOLOv5's annotation format](https://roboflow.com/formats/yolov5-pytorch-txt?ref=ultralytics).

### 1.3 Prepare Dataset for YOLOv5

Whether you [label your images with Roboflow](https://roboflow.com/annotate?ref=ultralytics) or not, you can use it to convert your dataset into YOLO format, create a YOLOv5 YAML configuration file, and host it for importing into your training script.

[Create a free Roboflow account](https://app.roboflow.com/?model=yolov5&ref=ultralytics) and upload your dataset to a `Public` workspace, label any unannotated images, then generate and export a version of your dataset in `YOLOv5 Pytorch` format.

Note: YOLOv5 does online augmentation during training, so we do not recommend applying any augmentation steps in Roboflow for training with YOLOv5. But we recommend applying the following preprocessing steps:

* **Auto-Orient** - to strip EXIF orientation from your images.
* **Resize (Stretch)** - to the square input size of your model (640x640 is the YOLOv5 default).

Generating a version will give you a point-in-time snapshot of your dataset so you can always go back and compare your future model training runs against it, even if you add more images or change its configuration later.

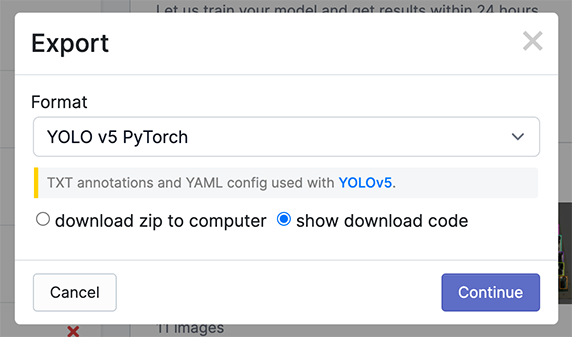

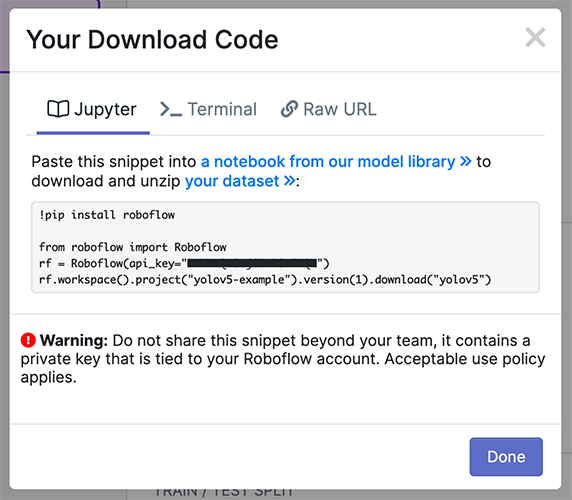

Export in `YOLOv5 Pytorch` format, then copy the snippet into your training script or notebook to download your dataset.

Now continue with `2. Select a Model`.

Or manually prepare your dataset

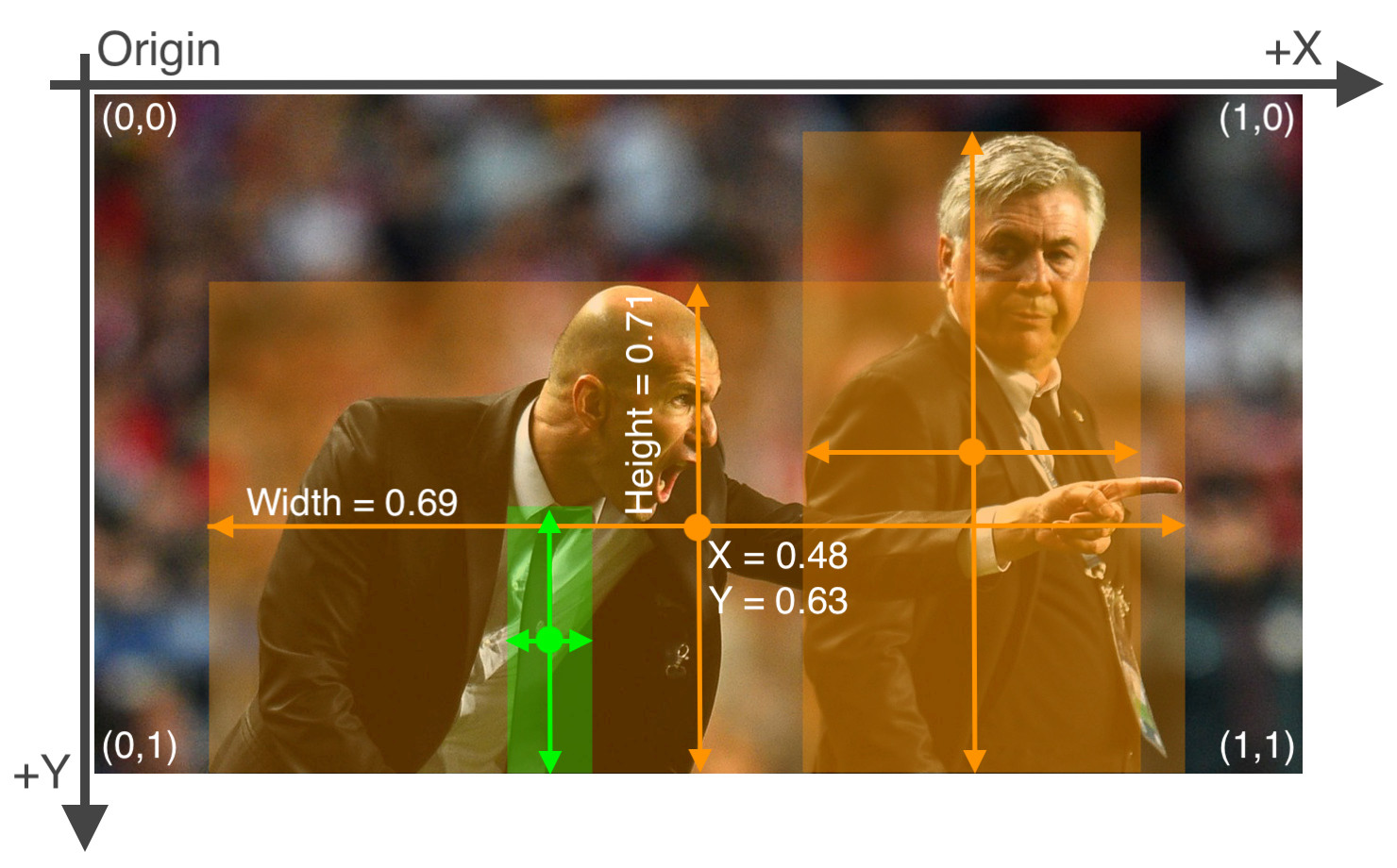

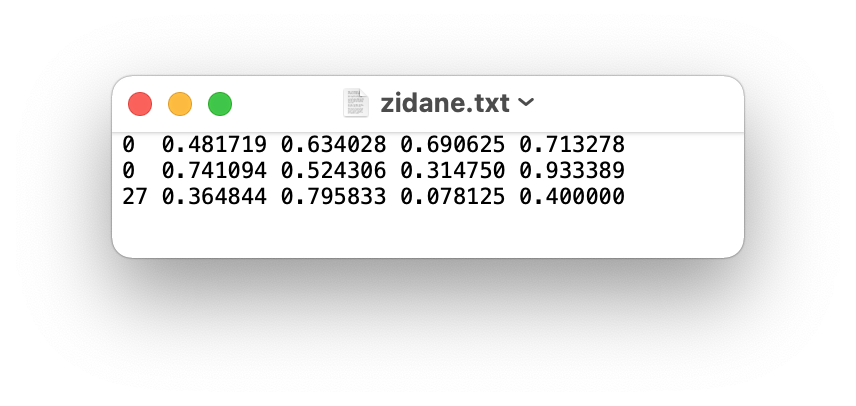

### 1.1 Create dataset.yaml

[COCO128](https://www.kaggle.com/ultralytics/coco128) is an example small tutorial dataset composed of the first 128 images in [COCO](http://cocodataset.org/#home) train2017. These same 128 images are used for both training and validation to verify our training pipeline is capable of overfitting. [data/coco128.yaml](https://github.com/ultralytics/yolov5/blob/master/data/coco128.yaml), shown below, is the dataset config file that defines 1) the dataset root directory `path` and relative paths to `train` / `val` / `test` image directories (or `*.txt` files with image paths) and 2) a class `names` dictionary:

```yaml
# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
path: ../datasets/coco128  # dataset root dir
train: images/train2017  # train images (relative to 'path') 128 images
val: images/train2017  # val images (relative to 'path') 128 images
test:  # test images (optional)

# Classes (80 COCO classes)
names:
  0: person
  1: bicycle
  2: car
  ...
  77: teddy bear
  78: hair drier
  79: toothbrush
```

### 1.2 Create Labels

After using an annotation tool to label your images, export your labels to **YOLO format**, with one `*.txt` file per image (if no objects in image, no `*.txt` file is required). The `*.txt` file specifications are:

- One row per object.
- Each row is in `class x_center y_center width height` format.
- Box coordinates must be in **normalized xywh** format (from 0 - 1). If your boxes are in pixels, divide `x_center` and `width` by image width, and `y_center` and `height` by image height.
- Class numbers are zero-indexed (start from 0).
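The pixel-to-normalized conversion described in the list above can be sketched as follows. This is a minimal illustration, not code from the YOLOv5 repository: the helper name `to_yolo_row` is hypothetical, and it assumes your annotation tool gives you corner coordinates (`x_min`, `y_min`, `x_max`, `y_max`) in pixels, which is an assumption about your input format.

```python
def to_yolo_row(class_id, x_min, y_min, x_max, y_max, img_w, img_h):
    """Convert a pixel-space corner box into one YOLO label row.

    Hypothetical helper: assumes corner coordinates in pixels as input.
    """
    # Box center and size in pixels, then divide by the image dimensions
    # so every value lands in the 0-1 range.
    x_center = (x_min + x_max) / 2 / img_w
    y_center = (y_min + y_max) / 2 / img_h
    width = (x_max - x_min) / img_w
    height = (y_max - y_min) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# A 200x200 px box with top-left corner (100, 200) in a 640x480 image, class 0.
print(to_yolo_row(0, 100, 200, 300, 400, 640, 480))
# prints: 0 0.312500 0.625000 0.312500 0.416667
```

Writing one such row per object into a `*.txt` file named after the image gives a label file in the format listed above.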

The label file corresponding to the above image contains 2 persons (class `0`) and a tie (class `27`):
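As an illustration, a label file of that shape (two class `0` rows and one class `27` row) could be parsed and sanity-checked as in the sketch below. The coordinates are made up for this example and are not the values from the original label file, and `parse_label_text` is a hypothetical helper, not a YOLOv5 function.

```python
# Hypothetical label contents: two persons (class 0) and one tie (class 27).
# The coordinates are invented for illustration only.
EXAMPLE_LABEL = """\
0 0.48 0.63 0.69 0.71
0 0.74 0.52 0.31 0.93
27 0.36 0.79 0.07 0.40
"""


def parse_label_text(text):
    """Parse YOLO label rows into (class_id, x_center, y_center, width, height) tuples."""
    boxes = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # ignore blank lines
        parts = line.split()
        if len(parts) != 5:
            raise ValueError(f"line {line_no}: expected 5 values, got {len(parts)}")
        class_id = int(parts[0])
        coords = tuple(float(p) for p in parts[1:])
        if class_id < 0:
            raise ValueError(f"line {line_no}: class numbers start from 0")
        if not all(0.0 <= c <= 1.0 for c in coords):
            raise ValueError(f"line {line_no}: coordinates must be normalized to 0-1")
        boxes.append((class_id, *coords))
    return boxes


boxes = parse_label_text(EXAMPLE_LABEL)
print([box[0] for box in boxes])  # class id of each row
# prints: [0, 0, 27]
```

A check like this catches the most common formatting mistakes (pixel coordinates left unnormalized, missing columns) before training starts.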

### 1.3 Organize Directories

Organize your train and val images and labels according to the example below. YOLOv5 assumes `/coco128` is inside a `/datasets` directory **next to** the `/yolov5` directory. **YOLOv5 locates labels automatically for each image** by replacing the last instance of `/images/` in each image path with `/labels/`. For example:

```bash
../datasets/coco128/images/im0.jpg  # image
../datasets/coco128/labels/im0.txt  # label
```

2. Select a Model

Select a pretrained model to start training from. Here we select YOLOv5s, the second-smallest and fastest model available. See our README table for a full comparison of all models.

3. Train

Train a YOLOv5s model on COCO128 by specifying dataset, batch-size, image size and either pretrained `--weights yolov5s.pt` (recommended), or randomly initialized `--weights '' --cfg yolov5s.yaml` (not recommended). Pretrained weights are auto-downloaded from the latest YOLOv5 release.

💡 ProTip: Add `--cache ram` or `--cache disk` to speed up training (requires significant RAM/disk resources).

💡 ProTip: Always train from a local dataset. Mounted or network drives like Google Drive will be very slow.
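To make the two weight options concrete, here is a small sketch that assembles the `train.py` command line from Python. The helper names `build_train_command` and `format_command` are hypothetical and not part of YOLOv5, and the batch size of 16 and 3 epochs are placeholder values chosen for illustration; the flags themselves are the ones named in the text above plus `--img`, `--batch-size`, `--epochs` and `--data`.

```python
import shlex


def build_train_command(data="coco128.yaml", img=640, batch_size=16, epochs=3,
                        weights="yolov5s.pt", cfg=None, cache=None):
    """Assemble a `python train.py ...` argument list (illustrative helper only)."""
    cmd = ["python", "train.py",
           "--img", str(img),
           "--batch-size", str(batch_size),
           "--epochs", str(epochs),
           "--data", data,
           "--weights", weights]
    if cfg:
        cmd += ["--cfg", cfg]  # needed when starting from random weights
    if cache:
        if cache not in ("ram", "disk"):
            raise ValueError("cache must be 'ram' or 'disk'")
        cmd += ["--cache", cache]
    return cmd


def format_command(cmd):
    """Quote each argument so the command can be pasted into a shell."""
    return " ".join(shlex.quote(arg) for arg in cmd)


# Pretrained start (recommended).
print(format_command(build_train_command()))
# Randomly initialized start (not recommended), with RAM caching.
print(format_command(build_train_command(weights="", cfg="yolov5s.yaml", cache="ram")))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` from inside the cloned `yolov5` directory, or the printed string can be run directly in a terminal.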

All training results are saved to `runs/train/` with incrementing run directories, i.e. `runs/train/exp2`, `runs/train/exp3` etc. For more details see the Training section of our tutorial notebook.

4. Visualize

Comet Logging and Visualization 🌟 NEW

Comet is now fully integrated with YOLOv5. Track and visualize model metrics in real time, save your hyperparameters, datasets, and model checkpoints, and visualize your model predictions with Comet Custom Panels! Comet makes sure you never lose track of your work and makes it easy to share results and collaborate across teams of all sizes!

Getting started is easy:

To learn more about all of the supported Comet features for this integration, check out the Comet Tutorial. If you'd like to learn more about Comet, head over to our documentation. Get started by trying out the Comet Colab Notebook:

ClearML Logging and Automation 🌟 NEW

ClearML is completely integrated into YOLOv5 to track your experimentation, manage dataset versions and even remotely execute training runs. To enable ClearML:

- `pip install clearml`
- run `clearml-init` to connect to a ClearML server (deploy your own open-source server here, or use our free hosted server here)

You'll get all the great expected features from an experiment manager: live updates, model upload, experiment comparison etc., but ClearML also tracks uncommitted changes and installed packages, for example. Thanks to that, ClearML Tasks (which is what we call experiments) are also reproducible on different machines! With only 1 extra line, we can schedule a YOLOv5 training task on a queue to be executed by any number of ClearML Agents (workers).

You can use ClearML Data to version your dataset and then pass it to YOLOv5 simply using its unique ID. This will help you keep track of your data without adding extra hassle. Explore the ClearML Tutorial for details!

Local Logging

Training results are automatically logged with TensorBoard and CSV loggers to `runs/train`, with a new experiment directory created for each new training run as `runs/train/exp2`, `runs/train/exp3`, etc.

This directory contains train and val statistics, mosaics, labels, predictions and augmented mosaics, as well as metrics and charts including precision-recall (PR) curves and confusion matrices.
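Because each run gets its own numbered directory, it is handy to locate the newest one programmatically. The sketch below is a hypothetical helper (`latest_run_dir` is not a YOLOv5 function) and assumes the first run is named `exp` with later runs named `exp2`, `exp3`, and so on.

```python
import re
from pathlib import Path


def latest_run_dir(root="runs/train"):
    """Return the exp* directory with the highest numeric suffix, or None."""
    root = Path(root)
    if not root.is_dir():
        return None
    best, best_n = None, -1
    for d in root.iterdir():
        match = re.fullmatch(r"exp(\d*)", d.name)
        if d.is_dir() and match:
            # Assumption: a plain "exp" directory counts as run number 1.
            n = int(match.group(1) or 1)
            if n > best_n:
                best, best_n = d, n
    return best


run = latest_run_dir()
if run is None:
    print("no training runs found")
else:
    # List the logged files (statistics, mosaics, charts, ...) of the newest run.
    for path in sorted(run.iterdir()):
        print(path.name)
```

Comparing the numeric suffix rather than sorting names alphabetically keeps `exp10` ahead of `exp2`.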

Results file

`results.csv` is updated after each epoch, and then plotted as `results.png` (below) after training completes. You can also plot any `results.csv` file manually:

Next Steps

Once your model is trained you can use your best checkpoint `best.pt` to:

Environments

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

Status

If this badge is green, all YOLOv5 GitHub Actions Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 training, validation, inference, export and benchmarks on MacOS, Windows, and Ubuntu every 24 hours and on every commit.