@mikecr96 hey buddy, there's actually a better solution that creates a YOLOv5 TF2/Keras model from scratch, transfers over the PyTorch weights, and then exports to TFLite in PR https://github.com/ultralytics/yolov5/pull/1127

Closed mikecr96 closed 3 years ago


Thank you very much @glenn-jocher I could convert my pt model into tflite. Now I just have to test how much faster my model has become (running on arm architecture).

I just have two questions:

Which one is better (faster) on a Raspberry Pi, fp16 or int8, and why?

How exactly should I convert my model? It detects whether people are wearing a face mask or not, and the dataset has around 1500 images. Say I trained it with the yolov5s.yaml model (300 epochs in Colab Pro), so my weights are at /content/drive/MyDrive/yolov5/runs/train/exp6/weights/best.pt and my dataset images are in /content/drive/MyDrive/ModeloCubrebocas/images. I assume the command to convert my weights to an int8 TFLite model should be:

!PYTHONPATH=. python models/tf.py --weight /content/drive/MyDrive/yolov5/runs/train/exp6/weights/best.pt --cfg models/yolov5s.yaml --img 320 --tfl-int8 --source /content/drive/MyDrive/ModeloCubrebocas/images --ncalib 100

I guess this is not correct, because when I run

!python detect.py --weight /content/drive/MyDrive/yolov5/runs/train/exp6/weights/best-int8.tflite --img 320 --tfl-int8

the output is:

image 1/2 /content/drive/MyDrive/yolov5tf/yolov5/data/images/bus.jpg: 320x320 1 bicycles, Done. (7.365s)

image 2/2 /content/drive/MyDrive/yolov5tf/yolov5/data/images/zidane.jpg: 320x320 1 bicycles, Done. (7.258s)

So it is not detecting my objects but the yolov5s.pt objects.

Thank you in advance.
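For context on the --tfl-int8 and --ncalib options in the command above, here is a minimal sketch of affine int8 quantization, the general scheme TFLite documents for int8 models (real value = (q - zero_point) * scale). It is not the code from PR #1127; the helper names and the sample values are made up for illustration. It shows why calibration data matters: the value range seen during calibration fixes the scale, and anything outside that range is clipped.

```python
def calibrate(samples):
    """Derive (scale, zero_point) from calibration samples (hypothetical helper)."""
    lo = min(min(samples), 0.0)  # the range must include 0 so 0.0 maps exactly
    hi = max(max(samples), 0.0)
    scale = (hi - lo) / 255.0 if hi > lo else 1.0
    zero_point = round(-128 - lo / scale)
    return scale, zero_point


def quantize(x, scale, zero_point):
    """Map a float to int8, clipping to [-128, 127]."""
    return max(-128, min(127, round(x / scale) + zero_point))


def dequantize(q, scale, zero_point):
    """Map an int8 value back to an approximate float."""
    return (q - zero_point) * scale


# Made-up "activation" values standing in for what calibration images would produce.
samples = [-1.0, 0.0, 0.5, 2.0]
scale, zero_point = calibrate(samples)

for x in samples + [5.0]:  # 5.0 lies outside the calibrated range and gets clipped
    q = quantize(x, scale, zero_point)
    print(x, "->", q, "->", round(dequantize(q, scale, zero_point), 4))
```

Values inside the calibrated range come back with an error of at most about half a quantization step, while the out-of-range value is clipped to the largest representable one. A calibration set that does not resemble the deployment images can therefore cost accuracy.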

@mikecr96 I think before you consider any export you should first evaluate the results of your PyTorch model and adjust your training to achieve the results you want. Once you are seeing acceptable performance in PyTorch, only then should you start investigating your export options. A full guide is below.

👋 Hello! Thanks for asking about improving training results. Most of the time good results can be obtained with no changes to the models or training settings, provided your dataset is sufficiently large and well labelled. If at first you don't get good results, there are steps you might be able to take to improve, but we always recommend users first train with all default settings before considering any changes. This helps establish a performance baseline and spot areas for improvement.

If you have questions about your training results we recommend you provide the maximum amount of information possible if you expect a helpful response, including results plots (train losses, val losses, P, R, mAP), PR curve, confusion matrix, training mosaics, test results and dataset statistics images such as labels.png. All of these are located in your project/name directory, typically yolov5/runs/train/exp.

We've put together a full guide for users looking to get the best results on their YOLOv5 trainings below.

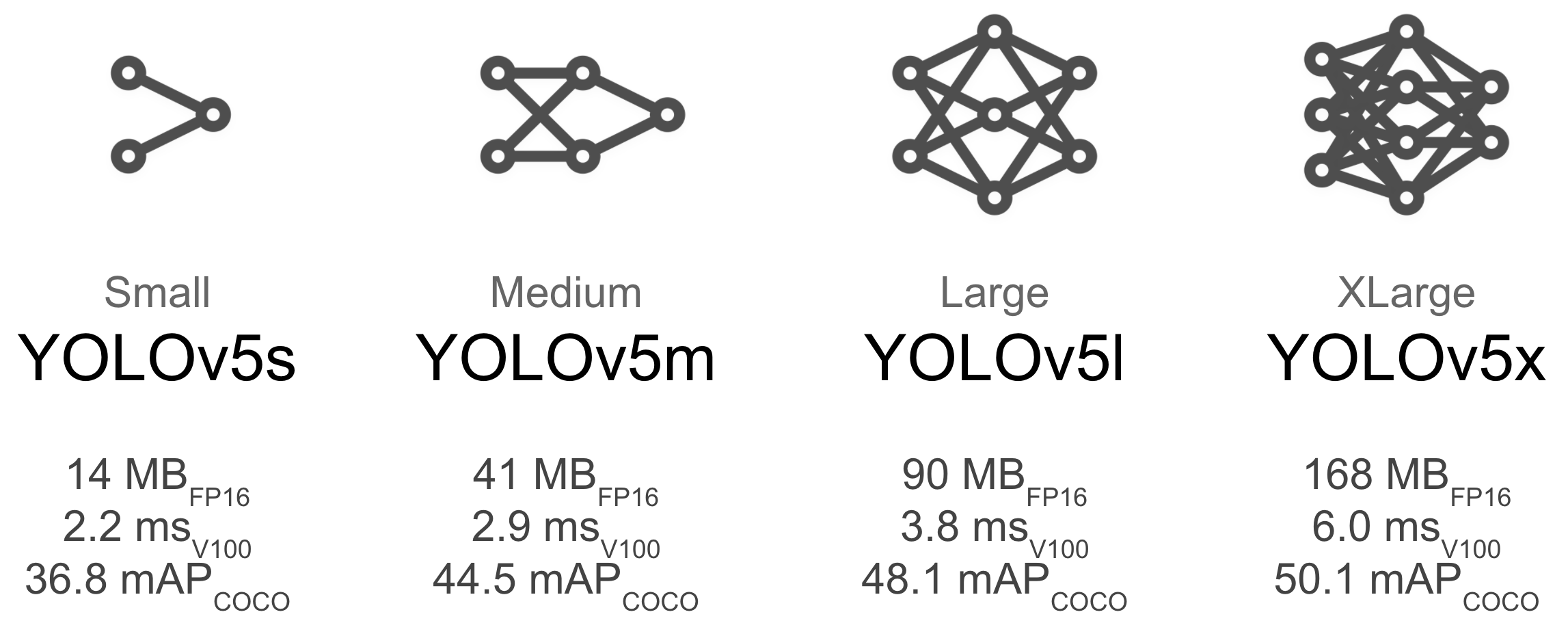

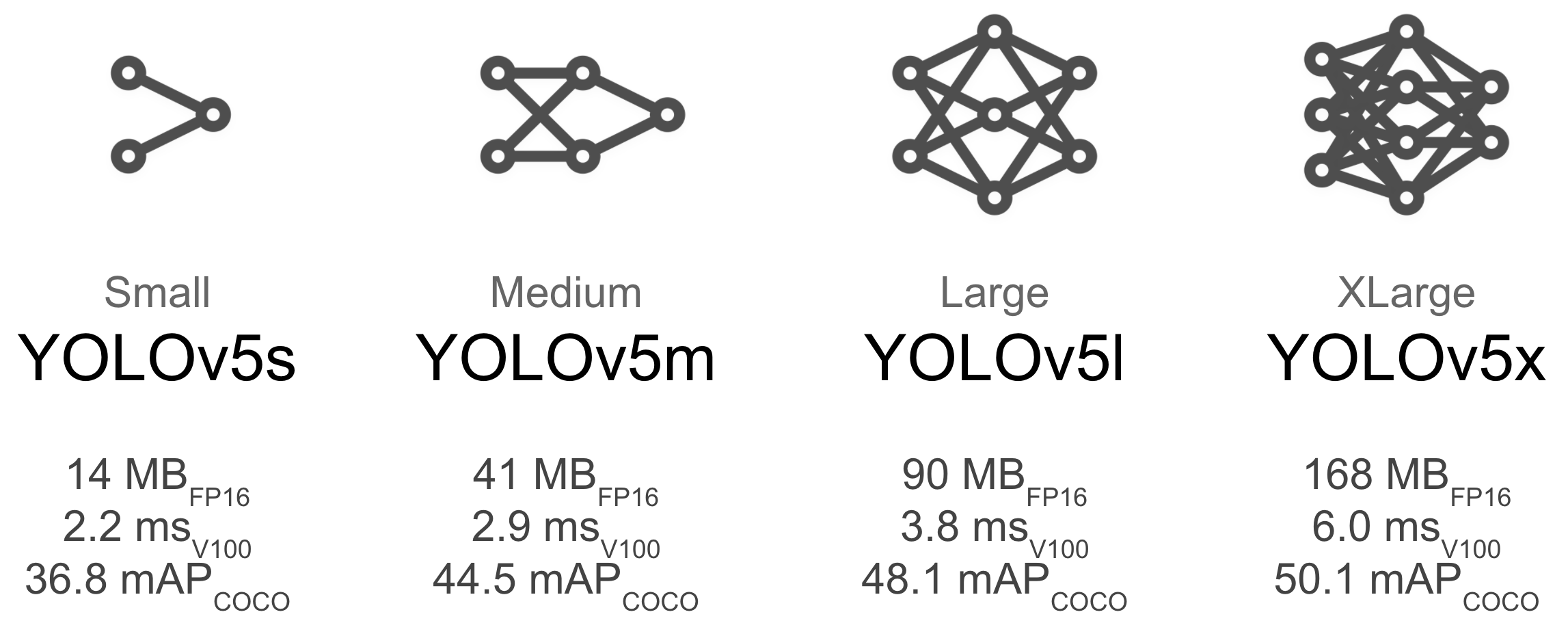

Larger models like YOLOv5x will produce better results in nearly all cases, but have more parameters and are slower to run. For mobile applications we recommend YOLOv5s/m, for cloud or desktop applications we recommend YOLOv5l/x. See our README table for a full comparison of all models.

To start training from pretrained weights simply pass the name of the model to the --weights argument. Models download automatically from the latest YOLOv5 release.

python train.py --data custom.yaml --weights yolov5s.pt

yolov5m.pt

yolov5l.pt

yolov5x.pt

Before modifying anything, first train with default settings to establish a performance baseline. A full list of train.py settings can be found in the train.py argparser.

--img 640, though due to the high amount of small objects in the dataset it can benefit from training at higher resolutions such as --img 1280. If there are many small objects then custom datasets will benefit from training at native or higher resolution. Best inference results are obtained at the same --img as the training was run at, i.e. if you train at --img 1280 you should also test and detect at --img 1280.--batch-size that your hardware allows for. Small batch sizes produce poor batchnorm statistics and should be avoided.hyp['obj'] will help reduce overfitting in those specific loss components. For an automated method of optimizing these hyperparameters, see our Hyperparameter Evolution Tutorial.If you'd like to know more a good place to start is Karpathy's 'Recipe for Training Neural Networks', which has great ideas for training that apply broadly across all ML domains: http://karpathy.github.io/2019/04/25/recipe/

@mikecr96 I think before you consider any export you should first evaluate the results of your PyTorch model and adjust your training to achieve the results you want. Once you are seeing acceptable performance in PyTorch, only then should you start investigating your export options. A full guide is below.

👋 Hello! Thanks for asking about improving training results. Most of the time good results can be obtained with no changes to the models or training settings, provided your dataset is sufficiently large and well labelled. If at first you don't get good results, there are steps you might be able to take to improve, but we always recommend users first train with all default settings before considering any changes. This helps establish a performance baseline and spot areas for improvement.

If you have questions about your training results we recommend you provide the maximum amount of information possible if you expect a helpful response, including results plots (train losses, val losses, P, R, mAP), PR curve, confusion matrix, training mosaics, test results and dataset statistics images such as labels.png. All of these are located in your project/name directory, typically yolov5/runs/train/exp.

We've put together a full guide for users looking to get the best results on their YOLOv5 trainings below.

Dataset

- Images per class. ≥1.5k images per class

- Instances per class. ≥10k instances (labeled objects) per class total

- Image variety. Must be representative of deployed environment. For real-world use cases we recommend images from different times of day, different seasons, different weather, different lighting, different angles, different sources (scraped online, collected locally, different cameras) etc.

- Label consistency. All instances of all classes in all images must be labelled. Partial labelling will not work.

- Label accuracy. Labels must closely enclose each object. No space should exist between an object and its bounding box. No objects should be missing a label.

- Background images. Background images are images with no objects that are added to a dataset to reduce False Positives (FP). We recommend about 0-10% background images to help reduce FPs (COCO has 1000 background images for reference, 1% of the total).
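The dataset recommendations above can be checked with a short script. This is a minimal sketch, assuming labels are in the usual YOLO text format (one line per object: class x_center y_center width height) and that an empty label means a background image. The function name and the example data are hypothetical, and the labels are passed in as strings so the example runs without any files.

```python
from collections import Counter


def dataset_stats(labels_by_image):
    """Summarize a dataset given {image name: text of its YOLO label file}.

    An empty string means the image contains no objects (a background image).
    """
    images_per_class = Counter()
    instances_per_class = Counter()
    background = 0
    for text in labels_by_image.values():
        class_ids = [int(line.split()[0]) for line in text.splitlines() if line.strip()]
        if not class_ids:
            background += 1
            continue
        instances_per_class.update(class_ids)    # every labeled object counts
        images_per_class.update(set(class_ids))  # each image counts once per class
    total = len(labels_by_image)
    return {
        "images_per_class": dict(images_per_class),
        "instances_per_class": dict(instances_per_class),
        "background_fraction": background / total if total else 0.0,
    }


# Tiny made-up example: four images, three classes, one background image.
example = {
    "a.jpg": "0 0.5 0.5 0.2 0.3\n0 0.2 0.2 0.1 0.1\n1 0.7 0.7 0.1 0.2\n",
    "b.jpg": "1 0.4 0.4 0.3 0.3\n",
    "c.jpg": "",
    "d.jpg": "2 0.5 0.5 0.5 0.5\n",
}
stats = dataset_stats(example)
print(stats)
```

Comparing these counts against the ≥1.5k images and ≥10k instances per class suggested above, and the 0-10% background share, gives a quick view of where a dataset is thin.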

Model Selection

Larger models like YOLOv5x will produce better results in nearly all cases, but have more parameters and are slower to run. For mobile applications we recommend YOLOv5s/m, for cloud or desktop applications we recommend YOLOv5l/x. See our README table for a full comparison of all models.

To start training from pretrained weights simply pass the name of the model to the --weights argument. Models download automatically from the latest YOLOv5 release.

python train.py --data custom.yaml --weights yolov5s.pt

(alternatives for --weights: yolov5m.pt, yolov5l.pt, yolov5x.pt)

Training Settings

Before modifying anything, first train with default settings to establish a performance baseline. A full list of train.py settings can be found in the train.py argparser.

- Epochs. Start with 300 epochs. If this overfits early then you can reduce epochs. If overfitting does not occur after 300 epochs, train longer, i.e. 600, 1200 etc epochs.

- Image size. COCO trains at native resolution of --img 640, though due to the high amount of small objects in the dataset it can benefit from training at higher resolutions such as --img 1280. If there are many small objects then custom datasets will benefit from training at native or higher resolution. Best inference results are obtained at the same --img as the training was run at, i.e. if you train at --img 1280 you should also test and detect at --img 1280.

- Batch size. Use the largest --batch-size that your hardware allows for. Small batch sizes produce poor batchnorm statistics and should be avoided.

- Hyperparameters. Default hyperparameters are in hyp.scratch.yaml. We recommend you train with default hyperparameters first before thinking of modifying any. In general, increasing augmentation hyperparameters will reduce and delay overfitting, allowing for longer trainings and higher final mAP. Reduction in loss component gain hyperparameters like hyp['obj'] will help reduce overfitting in those specific loss components. For an automated method of optimizing these hyperparameters, see our Hyperparameter Evolution Tutorial.

Further Reading

If you'd like to know more a good place to start is Karpathy's 'Recipe for Training Neural Networks', which has great ideas for training that apply broadly across all ML domains: http://karpathy.github.io/2019/04/25/recipe/
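The epoch guidance in Training Settings ("if this overfits early then you can reduce epochs") can be made concrete with a small check on the validation loss curve. This is only a heuristic sketch: the function name, the patience threshold of 3 epochs, and the loss values are assumptions for illustration, not something YOLOv5 computes for you.

```python
def overfit_report(val_losses, patience=3):
    """Report where validation loss bottomed out and whether it has risen since.

    val_losses: one validation loss value per epoch (epoch 0 first).
    patience: epochs without improvement before we call it overfitting (assumed).
    """
    if not val_losses:
        raise ValueError("val_losses must not be empty")
    best_epoch = min(range(len(val_losses)), key=val_losses.__getitem__)
    epochs_since_best = len(val_losses) - 1 - best_epoch
    overfitting = (
        epochs_since_best >= patience and val_losses[-1] > val_losses[best_epoch]
    )
    return {
        "best_epoch": best_epoch,
        "epochs_since_best": epochs_since_best,
        "overfitting": overfitting,
    }


# Made-up curves: one that turns upward after epoch 3, one that is still falling.
rising = overfit_report([0.90, 0.70, 0.60, 0.55, 0.56, 0.58, 0.61])
falling = overfit_report([0.90, 0.80, 0.72, 0.66, 0.61])
print(rising)
print(falling)
```

In the first case fewer epochs (or more augmentation) would be worth trying. In the second the loss is still improving at the last epoch, which matches the advice to train longer.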

Hey buddy, thanks a lot for your reply. I've already evaluated my results, since I tried different models such as yolov5s, 5s6, 5m and 3tiny. All of them were trained for 300 epochs with batch size 64 (I have Colab Pro, so I always try to use all the available resources).

I have tested all my weights on both the Raspberry Pi and Colab. The fastest one on the Raspberry Pi so far is 3tiny, which runs inference over 141 images (--img 640) in around 75 seconds (with no Ubuntu GUI and with the CPU overclocked to 1.75GHz), but it is also the least accurate. 5s is much better since it's more accurate, but it is too slow on the Raspberry Pi (around 200 seconds under the same conditions as 3tiny).
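To put the totals above on a per-image footing, here is a small worked calculation using only the figures quoted in this comment (141 images, about 75 s for 3tiny and about 200 s for 5s). The helper name is hypothetical.

```python
def per_image_seconds(total_seconds, n_images):
    """Average wall-clock time per image."""
    return total_seconds / n_images


N_IMAGES = 141
tiny_s = per_image_seconds(75, N_IMAGES)   # 3tiny on the Raspberry Pi
v5s_s = per_image_seconds(200, N_IMAGES)   # 5s on the Raspberry Pi

print(f"3tiny: {tiny_s:.2f} s/image ({1 / tiny_s:.2f} images/s)")
print(f"5s:    {v5s_s:.2f} s/image ({1 / v5s_s:.2f} images/s)")
print(f"5s takes {v5s_s / tiny_s:.2f}x as long as 3tiny")
```

That works out to roughly 0.53 s per image for 3tiny and 1.42 s per image for 5s, so 5s takes about 2.7 times as long at --img 640.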

Here's my 5s' labels.jpg and results.jpg

So as you can see, I've already done some tests, and that's why I am looking for better results (both speed and accuracy are important). I'd choose 5s.pt if I were going to run it on a PC or Colab, but since I have to run this on a Raspberry Pi 4 due to my project requirements, I'd love to make 5s faster on the Pi. That's why I'm trying to export my PyTorch model to a TFLite model, so I can have the same accuracy but faster (or that's what I'm expecting).

Thank you again. Regards.

@mikecr96 the important thing to remember is that at similar mAP YOLOv5s will be much faster than YOLOv3-tiny, which is the real comparison you want to make, so in practice there is no comparison. For R Pi I would recommend simply running inference at reduced --img-size for faster results.
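A rough sketch of why a reduced --img-size helps: the number of input pixels falls with the square of the side length, so 320 has a quarter of the pixels of 640. Treating cost as proportional to pixel count is an assumption (a rule of thumb for convolutional models, not a measurement). The second part uses the timings and mAP from the two test.py runs quoted in this reply; the function and variable names are made up.

```python
def pixel_ratio(size_a, size_b):
    """How many times more pixels a square size_a image has than a size_b one."""
    return (size_a / size_b) ** 2


# Figures taken from the two runs quoted in this reply (CPU, batch size 32).
runs = {
    "yolov5s @ 320": {"inference_ms": 78.4, "map": 0.385},
    "yolov3-tiny @ 640": {"inference_ms": 188.7, "map": 0.228},
}

fewer_pixels = pixel_ratio(640, 320)
speedup = runs["yolov3-tiny @ 640"]["inference_ms"] / runs["yolov5s @ 320"]["inference_ms"]
map_gain = runs["yolov5s @ 320"]["map"] - runs["yolov3-tiny @ 640"]["map"]

print(f"640 -> 320 means {fewer_pixels:.0f}x fewer pixels per image")
print(f"yolov5s @ 320 is {speedup:.2f}x faster per image in these runs")
print(f"and scores {map_gain:.3f} higher mAP@.5:.95")
```

In these two runs YOLOv5s at 320 is about 2.4 times faster per image than YOLOv3-tiny at 640 while scoring higher mAP, which is the comparison the reply is making.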

78ms per image and 38.5 mAP

(venv) (base) glennjocher@Glenns-iMac yolov5 % python test.py

Namespace(augment=False, batch_size=32, conf_thres=0.001, data='data/coco128.yaml', device='', exist_ok=False, img_size=320, iou_thres=0.6, name='exp', project='runs/test', save_conf=False, save_hybrid=False, save_json=False, save_txt=False, single_cls=False, task='val', verbose=False, weights='yolov5s.pt')

YOLOv5 🚀 v5.0-47-g0824388 torch 1.8.1 CPU

Fusing layers...

Model Summary: 224 layers, 7266973 parameters, 0 gradients, 17.0 GFLOPS

val: Scanning '../coco128/labels/train2017.cache' images and labels... 128 found, 0 missing, 2 empty, 0 corrupted: 100%|█████████████████████████████████████████████████████████████████| 128/128 [00:00<?, ?it/s]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100%|██████████████████████████████████████████████████████████████████████████████████| 4/4 [00:14<00:00, 3.63s/it]

all 128 929 0.624 0.532 0.582 0.385

Speed: 78.4/3.4/81.8 ms inference/NMS/total per 320x320 image at batch-size 32

Results saved to runs/test/exp

188ms per image and 22.8 mAP

(venv) (base) glennjocher@Glenns-iMac yolov3 % python test.py

Namespace(augment=False, batch_size=32, conf_thres=0.001, data='data/coco128.yaml', device='', exist_ok=False, img_size=640, iou_thres=0.6, name='exp', project='runs/test', save_conf=False, save_hybrid=False, save_json=False, save_txt=False, single_cls=False, task='val', verbose=False, weights='yolov3-tiny.pt')

YOLOv3 🚀 v9.5.0-3-g331df67 torch 1.8.1 CPU

Fusing layers...

Model Summary: 48 layers, 8849182 parameters, 0 gradients, 13.2 GFLOPS

val: Scanning '../coco128/labels/train2017.cache' images and labels... 128 found, 0 missing, 2 empty, 0 corrupted: 100%|██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 128/128 [00:07<?, ?it/s]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 4/4 [00:27<00:00, 6.96s/it]

all 128 929 0.523 0.396 0.441 0.228

Speed: 188.7/1.8/190.5 ms inference/NMS/total per 640x640 image at batch-size 32

Results saved to runs/test/exp2

Hey buddy, it's me (again). I have new results and want to share them with you (but I also need to ask you something).

Here are my results. All of them using --img 320

Running my model using 5s for training:

Running my model using 5s for training but converted to tflite using PR #1127 (FP16):

Running my model using 5s for training but converted to tflite using PR #1127 (int8):

So as you can see, TFLite is much faster than PyTorch (at least on the RPi4). But I keep getting the OpenBLAS warning I described in #2698 when I run inference with your yolov5 version. This warning doesn't show up when I run yolov5 from @zldrobit.

So finally, my question is: if I converted my model from 5s to TFLite, why doesn't the TFLite model have the correct labels? When I run inference using my 5s.pt on your yolov5, the results show the correct labels (which must be 'Uso', 'NO uso' and 'Incorrecto'), but running the TFLite model shows 'bicycle', 'car' and 'person', which are the labels used by your pretrained 5s.pt model, even though the detections themselves are correct (the objects are well detected but wrongly labeled).

I hope you can understand me, since English isn't my first language and it is difficult for me to explain myself. Thank you again.

Best regards.

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.

@mikecr96 You could change the names in https://github.com/zldrobit/yolov5/blob/tf-only-export/detect.py#L52-L53 with your own class names
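As a minimal sketch of what that change amounts to: the model only outputs class indices, and the names list decides what text is shown for each index. The custom names below are the ones given earlier in this thread, but their order is an assumption and must match the class order used in training. The helper name is hypothetical, and only the first three COCO names are listed.

```python
# First three of the COCO class names that the pretrained models use.
COCO_NAMES_HEAD = ["person", "bicycle", "car"]

# Custom classes from this thread; the order here is assumed and must match
# the class order of the training dataset.
CUSTOM_NAMES = ["Uso", "NO uso", "Incorrecto"]


def label_for(class_id, names):
    """Translate a predicted class index into display text."""
    if 0 <= class_id < len(names):
        return names[class_id]
    return f"class{class_id}"  # fallback when the list is too short


predicted_ids = [1, 0, 2]  # made-up detections
print([label_for(i, COCO_NAMES_HEAD) for i in predicted_ids])
print([label_for(i, CUSTOM_NAMES) for i in predicted_ids])
```

The same indices print as 'bicycle', 'person', 'car' with the COCO list and as 'NO uso', 'Uso', 'Incorrecto' with the custom one, which is why the detections were correct but wrongly labeled.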


Thank you, dude. Fortunately I was following your GitHub repo, where someone had already raised this issue and someone else had replied, so I already knew the solution. Anyway, thank you very much.

🚀 Feature

models/export.py currently exports pt models to ONNX and other formats. Since converting from ONNX to TFLite is possible, I guess it should be easy to implement ONNX to TFLite conversion and/or conversion to a Keras model.

Motivation

I'm trying yolov5 on a Raspberry Pi 4 Model B (4GB RAM). It works fine but slowly, so I want to try to make YOLO work with TFLite in order to make inference a little bit faster on ARM processors.

Pitch

Either just export to a TFLite model, or add official support so that YOLO works with TFLite models (I guess this would be harder).