Closed: alicera closed this issue 2 years ago

@alicera single-class datasets do not incur classification loss. This is correct.

But the formula "# conf = obj_conf * cls_conf" uses cls_conf.

@alicera yes that's correct. Everything is as intended. cls_conf=1.0 if nc=1.

So when the network has only one class, the output of the cls head is ignored.

It is always set to 1.

For example, conf = obj_conf * cls_conf

where cls_conf=1

Only obj_conf affects conf, right?

@alicera yes that's correct. The cls output heads are initialized with sufficient pre-sigmoid bias that the classification confidences will always = 1.0, so they are effectively not used when nc=1.
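As a rough numerical check of the explanation above, here is a minimal sketch. It assumes the class bias is initialized as `log(0.6 / (nc - 0.999999))`; that form and its constant are an assumption to verify against the bias initialization in `models/yolo.py`, and the helper names are hypothetical. It looks at the bias alone and ignores any contribution from the convolution weights.

```python
import math


def sigmoid(x: float) -> float:
    """Plain logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def cls_bias(nc: int, eps: float = 0.999999) -> float:
    # ASSUMPTION: illustrative form of the class-bias initialization,
    # not copied from the repository.
    return math.log(0.6 / (nc - eps))


nc = 1
cls_conf = sigmoid(cls_bias(nc))  # saturates very close to 1.0 when nc == 1
obj_conf = 0.83                   # arbitrary example objectness score
conf = obj_conf * cls_conf        # conf = obj_conf * cls_conf

print(f"cls_conf={cls_conf:.6f}  conf={conf:.6f}  obj_conf={obj_conf:.6f}")
```

Under that assumption the sigmoid saturates, so conf is practically equal to obj_conf even though the cls head never receives a classification loss.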

@glenn-jocher if we have some images with objects and some images with no objects, what should nc be in that case, 1 or 2?

@jaideep11061982 👋 Hello! Thanks for asking about YOLOv5 🚀 dataset formatting. To train correctly your data must be in YOLOv5 format. Please see our Train Custom Data tutorial for full documentation on dataset setup and all steps required to start training your first model. A few excerpts from the tutorial:

COCO128 is an example small tutorial dataset composed of the first 128 images in COCO train2017. These same 128 images are used for both training and validation to verify our training pipeline is capable of overfitting. data/coco128.yaml, shown below, is the dataset config file that defines 1) the dataset root directory path and relative paths to train / val / test image directories (or *.txt files with image paths), 2) the number of classes nc and 3) a list of class names:

# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]

path: ../datasets/coco128 # dataset root dir

train: images/train2017 # train images (relative to 'path') 128 images

val: images/train2017 # val images (relative to 'path') 128 images

test: # test images (optional)

# Classes

nc: 80 # number of classes

names: [ 'person', 'bicycle', 'car', 'motorcycle', 'airplane', 'bus', 'train', 'truck', 'boat', 'traffic light',

'fire hydrant', 'stop sign', 'parking meter', 'bench', 'bird', 'cat', 'dog', 'horse', 'sheep', 'cow',

'elephant', 'bear', 'zebra', 'giraffe', 'backpack', 'umbrella', 'handbag', 'tie', 'suitcase', 'frisbee',

'skis', 'snowboard', 'sports ball', 'kite', 'baseball bat', 'baseball glove', 'skateboard', 'surfboard',

'tennis racket', 'bottle', 'wine glass', 'cup', 'fork', 'knife', 'spoon', 'bowl', 'banana', 'apple',

'sandwich', 'orange', 'broccoli', 'carrot', 'hot dog', 'pizza', 'donut', 'cake', 'chair', 'couch',

'potted plant', 'bed', 'dining table', 'toilet', 'tv', 'laptop', 'mouse', 'remote', 'keyboard', 'cell phone',

'microwave', 'oven', 'toaster', 'sink', 'refrigerator', 'book', 'clock', 'vase', 'scissors', 'teddy bear',

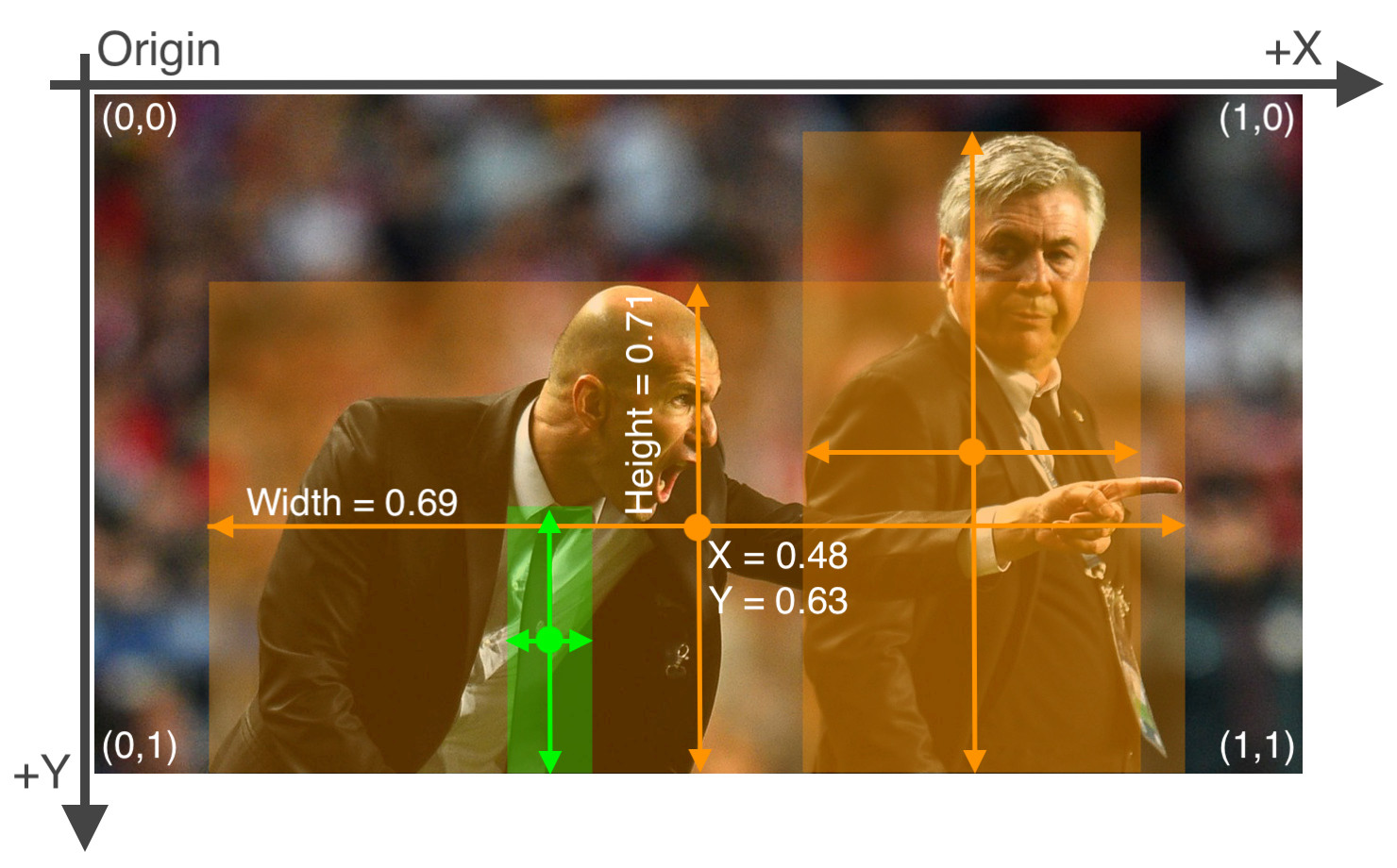

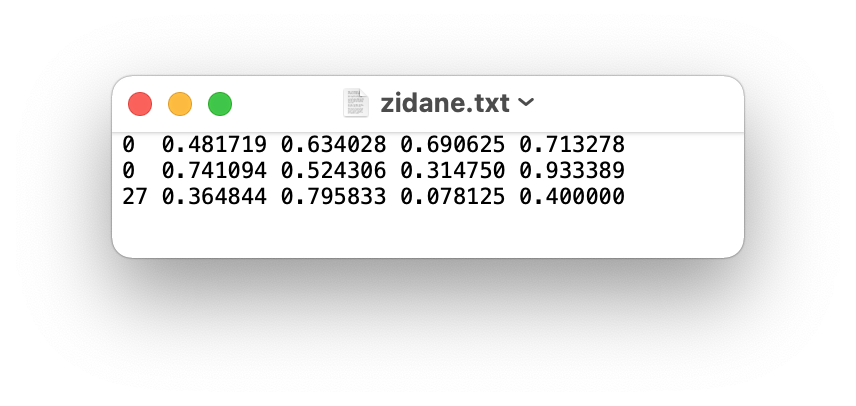

'hair drier', 'toothbrush' ] # class namesAfter using a tool like Roboflow Annotate to label your images, export your labels to YOLO format, with one *.txt file per image (if no objects in image, no *.txt file is required). The *.txt file specifications are:

class x_center y_center width height format.x_center and width by image width, and y_center and height by image height.

The label file corresponding to the above image contains 2 persons (class 0) and a tie (class 27):

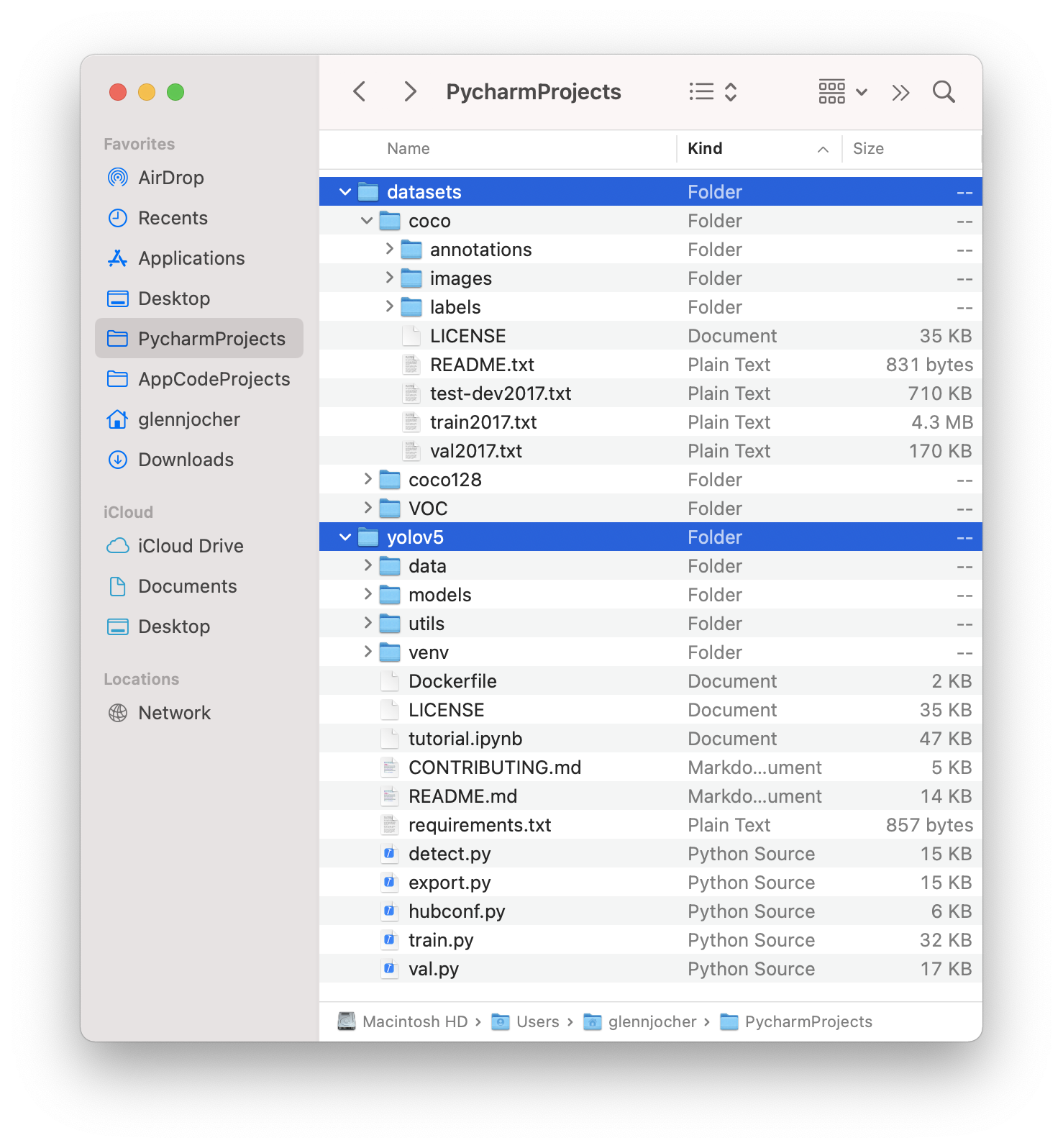
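The label format described above can be sketched as a small conversion helper. This is only an illustration: the function name `to_yolo_row` and the corner-style pixel input (x_min, y_min, x_max, y_max) are assumptions, not part of YOLOv5.

```python
def to_yolo_row(cls_id: int, x_min: float, y_min: float,
                x_max: float, y_max: float,
                img_w: int, img_h: int) -> str:
    """Convert one pixel-space corner box into a normalized YOLO label row."""
    # Center and size in pixels, then normalized by the image dimensions
    x_center = (x_min + x_max) / 2 / img_w
    y_center = (y_min + y_max) / 2 / img_h
    width = (x_max - x_min) / img_w
    height = (y_max - y_min) / img_h
    return f"{cls_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Hypothetical box for a class-0 object in a 1000x800 image
row = to_yolo_row(0, 100, 200, 300, 600, img_w=1000, img_h=800)
print(row)  # 0 0.200000 0.500000 0.200000 0.500000
```

Each object in an image would contribute one such row to that image's *.txt file.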

Organize your train and val images and labels according to the example below. YOLOv5 assumes /coco128 is inside a /datasets directory next to the /yolov5 directory. YOLOv5 locates labels automatically for each image by replacing the last instance of /images/ in each image path with /labels/. For example:

../datasets/coco128/images/im0.jpg # image

../datasets/coco128/labels/im0.txt # label
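The path rule above (replace the last /images/ with /labels/ and use a .txt extension) can be sketched as follows. The function name `img_to_label_path` is hypothetical and this is not YOLOv5's own implementation; it also assumes forward-slash paths.

```python
def img_to_label_path(img_path: str) -> str:
    """Map an image path to its label path via the LAST '/images/' segment."""
    head, sep, tail = img_path.rpartition("/images/")
    if not sep:
        raise ValueError(f"no '/images/' directory in path: {img_path}")
    stem = tail.rsplit(".", 1)[0]  # drop the image file extension
    return f"{head}/labels/{stem}.txt"


label_path = img_to_label_path("../datasets/coco128/images/im0.jpg")
print(label_path)  # ../datasets/coco128/labels/im0.txt
```

Using `rpartition` means only the last /images/ in the path is swapped, so an earlier directory that happens to be named images is left alone.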

Good luck 🍀 and let us know if you have any other questions!

Question

If I have only one class, the "ps[:, 5:]" will not be used. The lcls loss is always 0. https://github.com/ultralytics/yolov5/blob/47fac9ff73aceedd267db1e734a98de122fc9430/utils/loss.py#L149

But inference uses "x[:, 5:] *= x[:, 4:5] # conf = obj_conf * cls_conf". https://github.com/ultralytics/yolov5/blob/47fac9ff73aceedd267db1e734a98de122fc9430/utils/general.py#L684

How can I make sure that cls_conf is actually learned through the loss?
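The training-side behavior the question describes can be sketched like this. It is a simplified stand-in, assuming the classification term is guarded by a check like `nc > 1` as in the linked loss.py line; the function names are hypothetical and label smoothing is ignored.

```python
import math


def bce_with_logits(logit: float, target: float) -> float:
    """Numerically stable binary cross-entropy on a raw logit."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


def cls_loss(cls_logits: list, target_class: int, nc: int) -> float:
    # ASSUMPTION: the classification loss is only computed for multi-class
    # datasets, so with a single class the cls logits get no gradient.
    if nc <= 1:
        return 0.0
    targets = [1.0 if i == target_class else 0.0 for i in range(nc)]
    return sum(bce_with_logits(l, t) for l, t in zip(cls_logits, targets)) / nc


single = cls_loss([0.3], target_class=0, nc=1)        # skipped entirely
multi = cls_loss([0.3, -1.2], target_class=0, nc=2)   # regular BCE term
print(single, multi)
```

With nc=1 the term is skipped, which matches the observation that lcls stays at 0.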
