@purvang3 👋 Hello! Thanks for asking about handling inference results. YOLOv5 🚀 PyTorch Hub models allow for simple model loading and inference in a pure Python environment without using `detect.py`.

Simple Inference Example

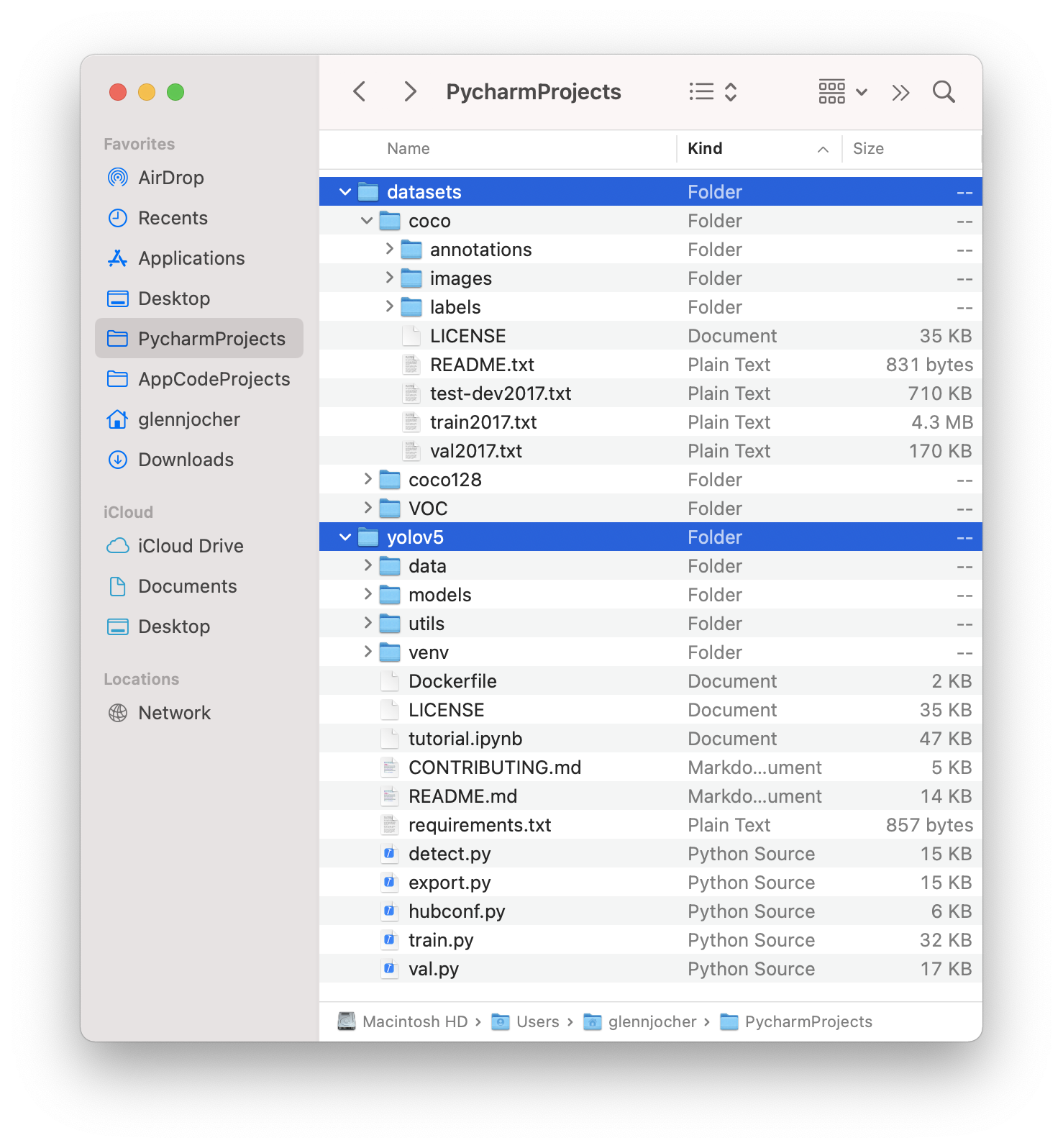

This example loads a pretrained YOLOv5s model from PyTorch Hub as `model` and passes an image for inference. `'yolov5s'` is the YOLOv5 'small' model. For details on all available models please see the README. Custom models can also be loaded, including custom trained PyTorch models and their exported variants, e.g. ONNX, TensorRT, TensorFlow, and OpenVINO YOLOv5 models.

    import torch

    # Model
    model = torch.hub.load('ultralytics/yolov5', 'yolov5s')  # or yolov5m, yolov5l, yolov5x, etc.
    # model = torch.hub.load('ultralytics/yolov5', 'custom', 'path/to/best.pt')  # custom trained model

    # Images
    im = 'https://ultralytics.com/images/zidane.jpg'  # or file, Path, URL, PIL, OpenCV, numpy, list

    # Inference
    results = model(im)

    # Results
    results.print()  # or .show(), .save(), .crop(), .pandas(), etc.

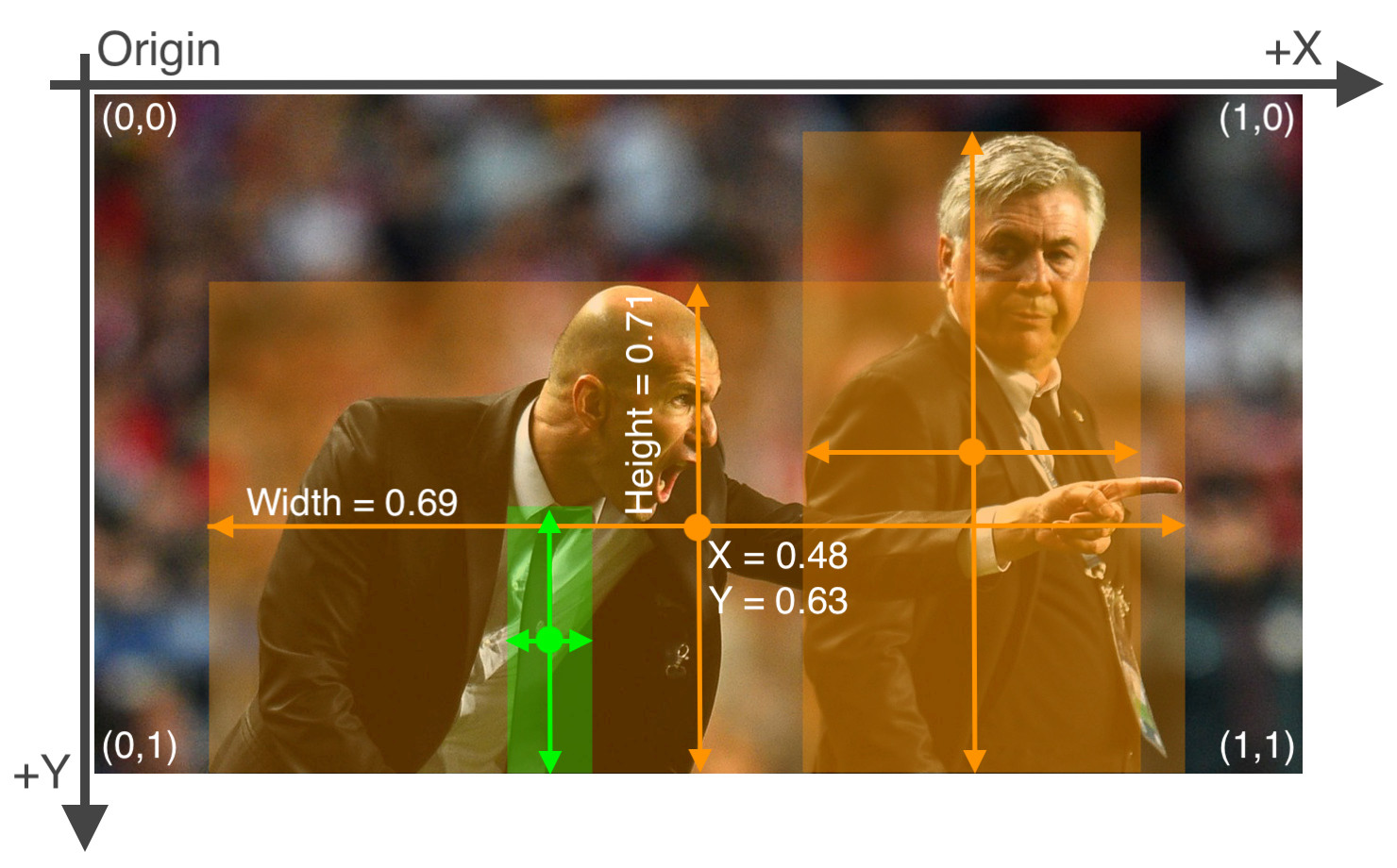

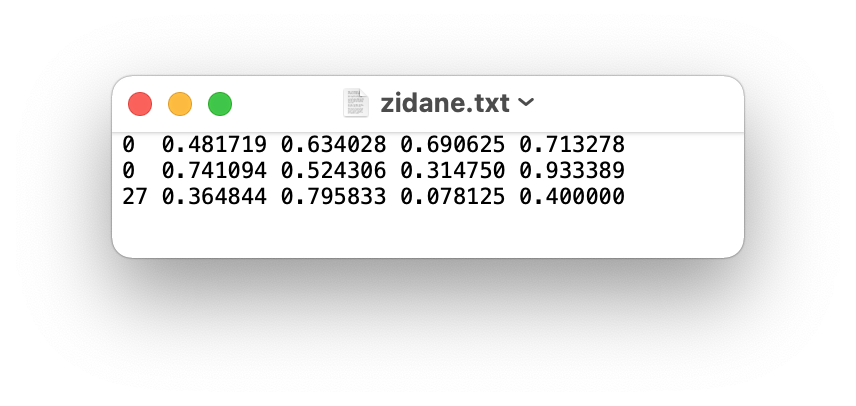

    results.xyxy[0]  # im predictions (tensor)

    results.pandas().xyxy[0]  # im predictions (pandas)
    #      xmin    ymin    xmax   ymax  confidence  class    name
    # 0  749.50   43.50  1148.0  704.5    0.874023      0  person
    # 2  114.75  195.75  1095.0  708.0    0.624512      0  person
    # 3  986.00  304.00  1028.0  420.0    0.286865     27     tie
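If you want to work with the detections in plain Python rather than printing them, a minimal sketch is below. It assumes each row has the layout shown above (`xmin, ymin, xmax, ymax, confidence, class`). The helper name `detections_from_rows` and the `conf_threshold` parameter are hypothetical, not part of YOLOv5. With a loaded model the rows would typically come from `results.xyxy[0].tolist()` and the class names from `model.names`; here they are copied from the example output so the snippet runs without downloading anything.

```python
def detections_from_rows(rows, names, conf_threshold=0.5):
    """Convert [xmin, ymin, xmax, ymax, confidence, class] rows to dicts.

    Hypothetical helper for illustration; rows below conf_threshold are dropped.
    """
    detections = []
    for xmin, ymin, xmax, ymax, conf, cls in rows:
        if conf < conf_threshold:
            continue
        detections.append({
            "box": (xmin, ymin, xmax, ymax),
            "confidence": conf,
            "name": names[int(cls)],  # class index arrives as a float
        })
    return detections


# Rows copied from the example output; with a real model they would come
# from results.xyxy[0].tolist() (assumption: same column order).
rows = [
    [749.50, 43.50, 1148.0, 704.5, 0.874023, 0.0],
    [114.75, 195.75, 1095.0, 708.0, 0.624512, 0.0],
    [986.00, 304.00, 1028.0, 420.0, 0.286865, 27.0],
]
names = {0: "person", 27: "tie"}  # subset of the class names, for the example

confident = detections_from_rows(rows, names, conf_threshold=0.5)
for det in confident:
    print(det["name"], det["confidence"], det["box"])
```

With a threshold of 0.5 only the two `person` rows are kept; lowering it would also include the `tie` detection.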

See the YOLOv5 PyTorch Hub Tutorial for details.

Good luck 🍀 and let us know if you have any other questions!

While analyzing validation images, and also after converting the trained model to ONNX and visualizing its predictions, I see that the bounding boxes are off from the ground-truth boxes by quite a margin. During training, the loss landscape and mAP metric appear to behave as expected, with the following COCO metric result.

Below is the code snippet I am using for predictions.

""" self.model = converted onnx model.

"""

Is there a problem with my visualization? What would you recommend to improve localization? Let me know if you need more information.

Thank you
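
One thing that may be worth ruling out when boxes from an exported model look shifted: if the image is letterboxed (resized with its aspect ratio preserved and padded to a fixed input size) before going into the ONNX model, the predicted boxes are in the padded input's pixel coordinates and have to be mapped back to the original image before drawing. The prediction code isn't shown above, so this is only an assumption about the pipeline. The sketch below uses a hypothetical helper, `unletterbox_box`, and assumes the padding is split evenly on both sides.

```python
def unletterbox_box(box, input_size, original_size):
    """Map one (x1, y1, x2, y2) box from letterboxed input pixels to original image pixels.

    Hypothetical helper for illustration. input_size and original_size are
    (width, height) tuples; padding is assumed to be centered.
    """
    in_w, in_h = input_size
    orig_w, orig_h = original_size

    # Scale factor that was applied to the original image to fit the input
    gain = min(in_w / orig_w, in_h / orig_h)
    # Padding added on the left and on the top after resizing
    pad_x = (in_w - orig_w * gain) / 2
    pad_y = (in_h - orig_h * gain) / 2

    x1, y1, x2, y2 = box
    # Remove the padding, undo the scaling, then clip to the image bounds
    x1 = min(max((x1 - pad_x) / gain, 0.0), float(orig_w))
    y1 = min(max((y1 - pad_y) / gain, 0.0), float(orig_h))
    x2 = min(max((x2 - pad_x) / gain, 0.0), float(orig_w))
    y2 = min(max((y2 - pad_y) / gain, 0.0), float(orig_h))
    return (x1, y1, x2, y2)


# Example: a 1280x720 image letterboxed into a 640x640 input
restored = unletterbox_box(
    box=(100.0, 190.0, 300.0, 390.0),
    input_size=(640, 640),
    original_size=(1280, 720),
)
print(restored)
```

If the boxes line up after this kind of mapping, the model's localization is probably fine and the offset comes from the visualization step; if they are still off, the preprocessing used for the ONNX model (normalization, channel order, input size) would be the next thing to compare against the PyTorch pipeline.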