👋 Hello @Carolinejone, thank you for your interest in YOLOv5 🚀! Please visit our ⭐️ Tutorials to get started, where you can find quickstart guides for simple tasks like Custom Data Training all the way to advanced concepts like Hyperparameter Evolution.

If this is a 🐛 Bug Report, please provide screenshots and minimum viable code to reproduce your issue, otherwise we cannot help you.

If this is a custom training ❓ Question, please provide as much information as possible, including dataset images, training logs, screenshots, and a public link to online W&B logging if available.

For business inquiries or professional support requests please visit https://ultralytics.com or email support@ultralytics.com.

Requirements

Python>=3.7.0 with all requirements.txt installed including PyTorch>=1.7. To get started:

git clone https://github.com/ultralytics/yolov5 # clone

cd yolov5

pip install -r requirements.txt # install

Environments

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

- Google Colab and Kaggle notebooks with free GPU

- Google Cloud Deep Learning VM. See GCP Quickstart Guide

- Amazon Deep Learning AMI. See AWS Quickstart Guide

- Docker Image. See Docker Quickstart Guide

Status

If this badge is green, all YOLOv5 GitHub Actions Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 training (train.py), validation (val.py), inference (detect.py) and export (export.py) on macOS, Windows, and Ubuntu every 24 hours and on every commit.

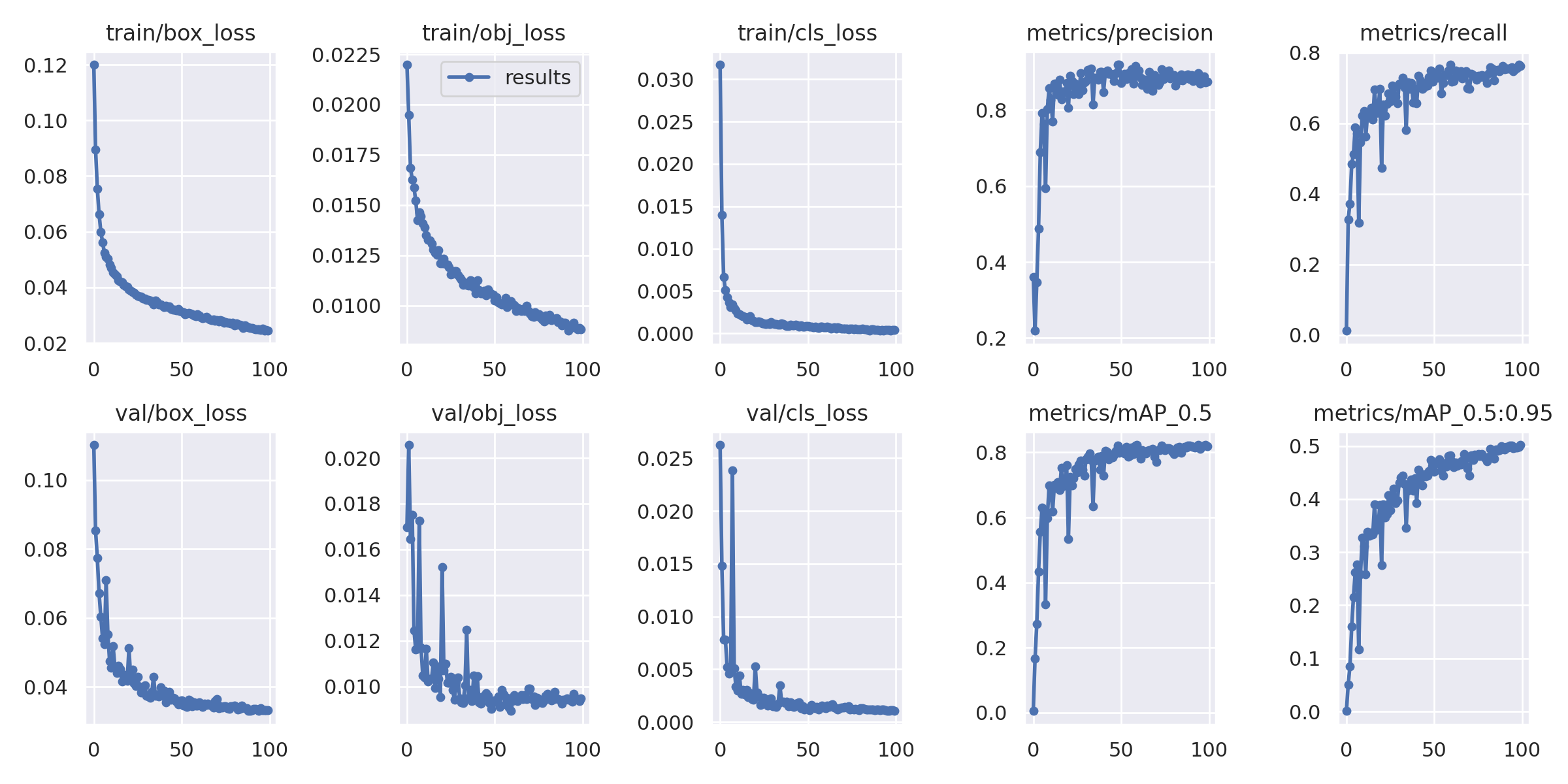

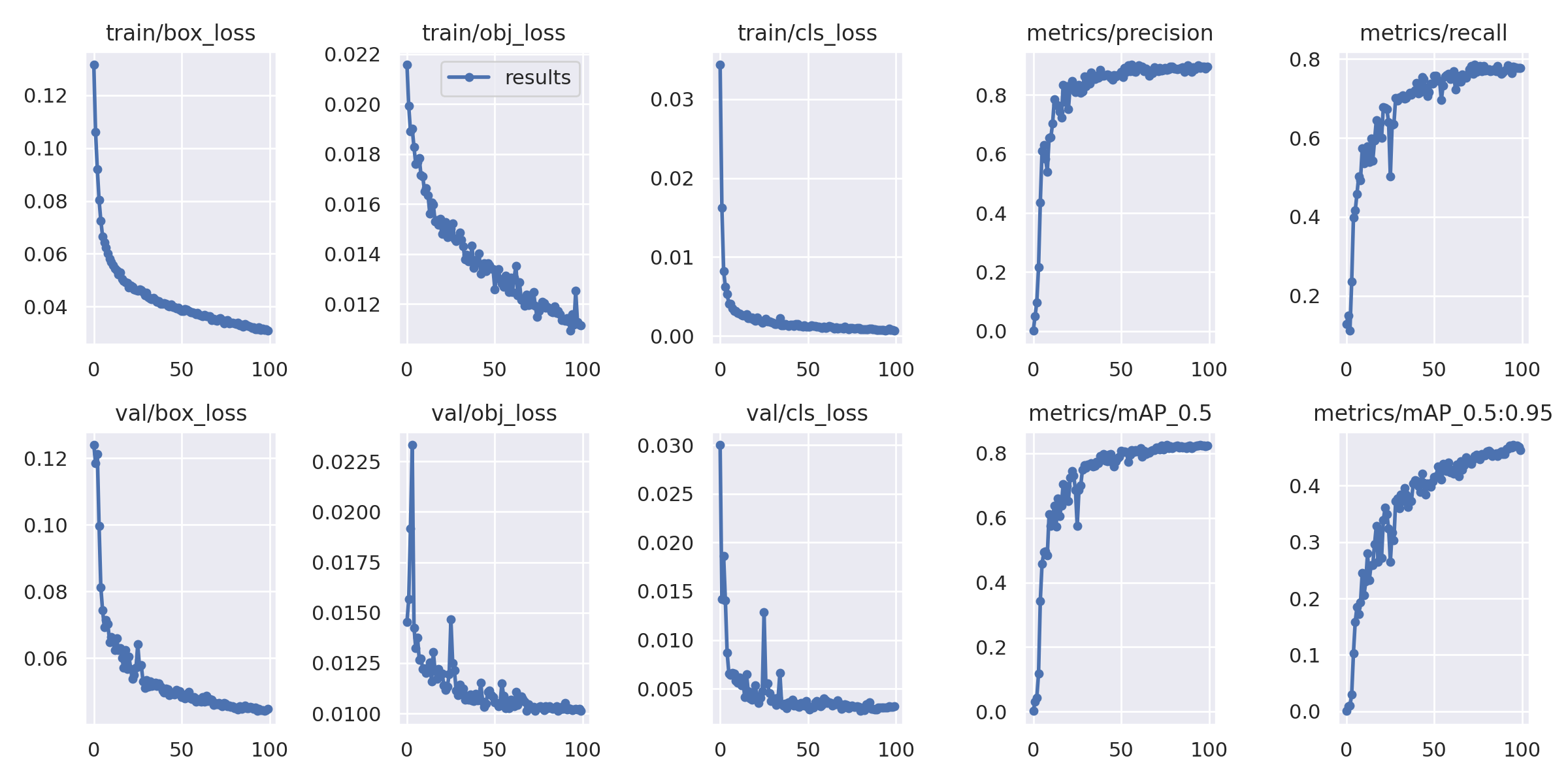

Hello, I'm new to AI and would like to ask for some guidance on my research. I'm doing my master's thesis with YOLOv5, trying to detect anomalies in the stay cables of cable-type bridges.

I used two datasets:

- Dataset-1 has 2049 source images and 4159 images in total after adding augmented images to the training set.

- Dataset-2 has 1823 source images and 3673 images in total after adding augmented images to the training set.

The train : validation : test split is as follows:

- Dataset-1: 3.2k : 614 : 380

- Dataset-2: 2.9k : 552 : 299

I followed the custom YOLOv5 training tutorial from Roboflow and annotated the images with the Roboflow annotator using rectangular bounding boxes. For both datasets I trained on Colab Pro+ with batch size 64, 100 epochs, and the pre-trained YOLOv5s6 weights; everything else is the same as in the custom YOLOv5 training tutorial.

This is the result for Dataset-1

This is the result for Dataset-2

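As a side note on the split sizes mentioned above, the proportions they imply can be checked with a short sketch like the one below. The training counts are the rounded figures from this post (3.2k and 2.9k), so the percentages are only approximate, and the helper name `split_fractions` is made up for illustration.

```python
# Approximate split counts as stated in the post.
# The training counts are rounded (3.2k, 2.9k), so results are approximate.
splits = {
    "Dataset-1": {"train": 3200, "valid": 614, "test": 380},
    "Dataset-2": {"train": 2900, "valid": 552, "test": 299},
}


def split_fractions(counts):
    """Return each split's share of the total image count (hypothetical helper)."""
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}


for dataset, counts in splits.items():
    fractions = split_fractions(counts)
    summary = ", ".join(f"{name}: {share:.1%}" for name, share in fractions.items())
    print(f"{dataset} -> {summary}")
```

With these rounded counts, about three quarters of the images in each dataset go to training, and the rest is divided between validation and test.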

My question is,