Yes, there are multiple issues with that:

Pre-knowledge:

webpack tries to treat WASM like ESM. We try to apply all rules/assumptions that apply to ESM to WASM too. We assume that in the future the WASM JS API may be superseded by an integration of WASM into the ESM module graph.

This means the imports section in the WASM file is resolved like import statements in ESM and the export section is treated like export in ESM.

WASM modules also have a start section, which is executed when the WASM is instantiated.

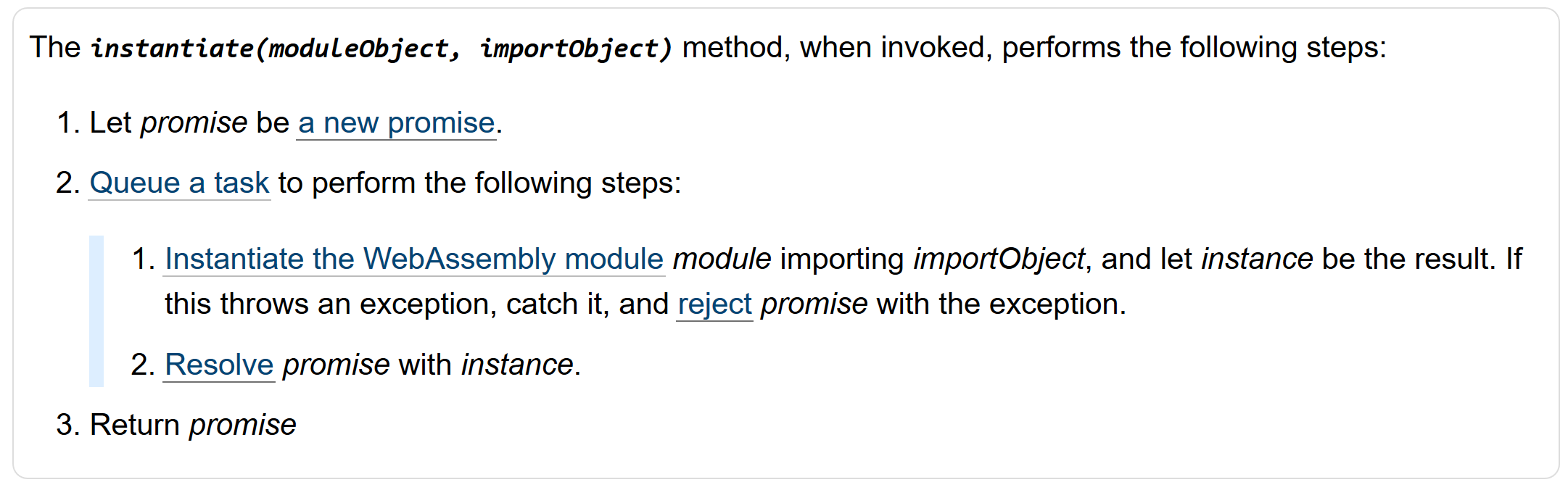

In the WASM JS API imports are passed via importsObject to the instantiate method.
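To make the two points above concrete, here is a minimal sketch. The module is hand-assembled for illustration only (it is not something webpack generates): it imports a hypothetical `env.log` function and its start section calls it with 42. The names `wasmBytes`, `seen` and `env.log` are my own assumptions.

```javascript
// Hand-assembled binary for this illustrative module:
//   (module
//     (import "env" "log" (func $log (param i32)))
//     (func $start (call $log (i32.const 42)))
//     (start $start))
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic + version
  0x01, 0x08, 0x02, 0x60, 0x01, 0x7f, 0x00, 0x60, 0x00, 0x00, // types: (i32)->(), ()->()
  0x02, 0x0b, 0x01, 0x03, 0x65, 0x6e, 0x76, 0x03, 0x6c, 0x6f, 0x67, 0x00, 0x00, // import env.log
  0x03, 0x02, 0x01, 0x01, // one local function of type 1
  0x08, 0x01, 0x01, // start section: function index 1
  0x0a, 0x08, 0x01, 0x06, 0x00, 0x41, 0x2a, 0x10, 0x00, 0x0b, // body: i32.const 42; call 0
]);

const seen = [];

// The importsObject mirrors the import section: module name -> field name -> value.
const importsObject = { env: { log: (value) => seen.push(value) } };

const wasmModule = new WebAssembly.Module(wasmBytes);

// Instantiation links the imports and then runs the start section.
const instance = new WebAssembly.Instance(wasmModule, importsObject);
```

After the last line `seen` already contains the value pushed by the start function, so the import has to be fully usable at the moment of instantiation.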

The ESM spec specifies multiple phases. One phase is the ModuleEvaluation. In this phase all modules are evaluated in a well-defined order. This phase is synchronous. All modules evaluate in the same "tick".

When WASM modules are in the module graph, this means:

- the `start` section is executed in the same "tick"
- all dependencies of the WASM are executed in the same "tick"
- the ESM importing the WASM is executed in the same "tick"

This behavior is not possible when using the Promise version of instantiate. A Promise always delays its fulfillment into another "tick".

It's only possible when using a sync version of instantiate (WebAssembly.Instance).
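A rough sketch of the difference, using a hand-assembled module whose start section calls a hypothetical `env.log` import. The labels and the `order` array are only there to record what runs when; none of this is webpack's actual runtime code.

```javascript
// Illustrative module: imports env.log and calls it with 42 from its start section.
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic + version
  0x01, 0x08, 0x02, 0x60, 0x01, 0x7f, 0x00, 0x60, 0x00, 0x00, // types: (i32)->(), ()->()
  0x02, 0x0b, 0x01, 0x03, 0x65, 0x6e, 0x76, 0x03, 0x6c, 0x6f, 0x67, 0x00, 0x00, // import env.log
  0x03, 0x02, 0x01, 0x01, // one local function of type 1
  0x08, 0x01, 0x01, // start section: function index 1
  0x0a, 0x08, 0x01, 0x06, 0x00, 0x41, 0x2a, 0x10, 0x00, 0x0b, // body: i32.const 42; call 0
]);

const order = [];

// Builds an importsObject whose log import records when the start section ran.
const importsFor = (label) => ({ env: { log: () => order.push("start:" + label) } });

// Sync version: the start section runs before the next statement of the importer.
new WebAssembly.Instance(new WebAssembly.Module(wasmBytes), importsFor("sync"));
order.push("after:sync");

// Promise version: the importer continues first, the start section runs later.
const pending = WebAssembly.instantiate(wasmBytes, importsFor("async")).then(() => {
  order.push("fulfilled:async");
});
order.push("after:async");

// Snapshot of everything that happened within the current "tick".
const sameTick = order.slice();
```

`sameTick` contains the sync start call but not the async one, which is why the Promise version can't satisfy the "same tick" requirement of ModuleEvaluation.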

Note: Technically there could be a WASM without start section and without dependencies. In this case this doesn't apply. But we can't assume this is always the case.

webpack wants to download and compile the WASM in parallel to downloading the JS code. Using instantiateStreaming won't allow this (when the WASM has dependencies), because instantiation requires passing an importsObject. Creating the importsObject requires evaluating all dependencies/imports of the WASM, so these dependencies need to be downloaded before starting to download the WASM.

When using compileStreaming + new WebAssembly.Instance, parallel download and compile is possible, because compileStreaming doesn't require an importsObject. The importsObject can be created once both the WASM and the JS downloads have finished.
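Roughly, the pattern looks like the sketch below. This is not webpack's actual runtime code. To keep it runnable without a server, `WebAssembly.compile(wasmBytes)` stands in for `WebAssembly.compileStreaming(fetch(url))`, and the hypothetical `loadDependencies` helper stands in for downloading and evaluating the JS chunks; `loadWasm` and `startCalls` are also names I made up.

```javascript
// Illustrative module: imports env.log and calls it with 42 from its start section.
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic + version
  0x01, 0x08, 0x02, 0x60, 0x01, 0x7f, 0x00, 0x60, 0x00, 0x00, // types: (i32)->(), ()->()
  0x02, 0x0b, 0x01, 0x03, 0x65, 0x6e, 0x76, 0x03, 0x6c, 0x6f, 0x67, 0x00, 0x00, // import env.log
  0x03, 0x02, 0x01, 0x01, // one local function of type 1
  0x08, 0x01, 0x01, // start section: function index 1
  0x0a, 0x08, 0x01, 0x06, 0x00, 0x41, 0x2a, 0x10, 0x00, 0x0b, // body: i32.const 42; call 0
]);

// Hypothetical stand-in for loading the JS dependencies of the WASM.
// It resolves with the importsObject after a short delay.
function loadDependencies(log) {
  return new Promise((resolve) => {
    setTimeout(() => resolve({ env: { log: (value) => log.push(value) } }), 10);
  });
}

// Compilation needs no importsObject, so both promises can be in flight at once.
// Only when both are done is the module instantiated, synchronously.
async function loadWasm(modulePromise, importsPromise) {
  const [wasmModule, importsObject] = await Promise.all([modulePromise, importsPromise]);
  return new WebAssembly.Instance(wasmModule, importsObject);
}

const startCalls = [];
const ready = loadWasm(
  WebAssembly.compile(wasmBytes), // in a browser: WebAssembly.compileStreaming(fetch(url))
  loadDependencies(startCalls)
);
```

With instantiateStreaming the second argument would have to exist before the WASM request could even start, so the two steps would run one after the other.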

I also want to quote the WebAssembly spec. It states that compilation happens in a background thread in compile and instantiation happens on the main thread.

It's not clearly stated, but in my opinion JSC's behavior is not spec-conformant.

Other notes:

WASM also lacks the ability to use live bindings of imported identifiers. Instead the importsObject is spec'ed to be copied. This could cause odd problems with circular dependencies involving WASM.
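A small sketch of the copy behavior, with a hand-assembled module that imports a hypothetical immutable global `env.value` and exports a `get` function returning it (both names are mine, chosen for illustration):

```javascript
// Hand-assembled binary for this illustrative module:
//   (module
//     (import "env" "value" (global $v i32))
//     (func (export "get") (result i32) (global.get $v)))
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic + version
  0x01, 0x05, 0x01, 0x60, 0x00, 0x01, 0x7f, // type: ()->(i32)
  0x02, 0x0e, 0x01, 0x03, 0x65, 0x6e, 0x76, 0x05, 0x76, 0x61, 0x6c, 0x75, 0x65,
  0x03, 0x7f, 0x00, // import env.value as immutable i32 global
  0x03, 0x02, 0x01, 0x00, // one local function of type 0
  0x07, 0x07, 0x01, 0x03, 0x67, 0x65, 0x74, 0x00, 0x00, // export "get" = function 0
  0x0a, 0x06, 0x01, 0x04, 0x00, 0x23, 0x00, 0x0b, // body: global.get 0
]);

const importsObject = { env: { value: 1 } };
const instance = new WebAssembly.Instance(new WebAssembly.Module(wasmBytes), importsObject);

// The value was read once during instantiation. Later changes on the JS side
// are not visible to the WASM, unlike a live binding between two ES modules.
importsObject.env.value = 2;
const valueSeenByWasm = instance.exports.get();
```

Here `valueSeenByWasm` is still 1. In a cycle where the JS side has not yet initialized its export when the WASM is instantiated, the WASM would be stuck with whatever was there at that moment.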

It would be great to support getters in the importsObject and to be able to get the exports before the start section is executed.

Unfortunately there are performance issues with `WebAssembly.compileStreaming` on iOS. On iOS devices, there is a limited amount of faster memory. Because of this, the engine doesn't know which kind of memory to compile for until `instantiate` is called.

If I understand correctly, for the JSC engine `compileStreaming` will just do a memcopy of the .wasm. It's only when `instantiate` is called that the Memory object is created. Then the engine knows whether it will be using the fast memory, or just a normal malloc'd Memory that needs bounds checks. At this point, it can generate the code appropriately.

Because of this, we plan to recommend that all bundlers use `instantiateStreaming` instead. Does this cause any issues for webpack?