I'm not sure we actually want to do this after all:

- I had to specifically tell it to avoid 'big5' and 'big5hkscs', otherwise it would detect pretty much everything encoded in Windows-1251 as Big5. Seeing as Windows-1251 is very relevant to us (it's what the Russian version of the game uses), and Big5 isn't relevant at all, that's a problem.

-

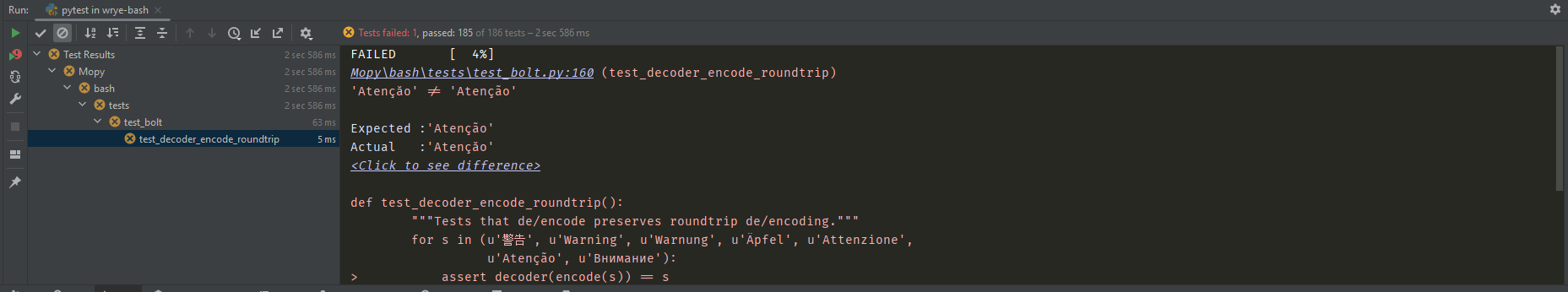

Most of the unit test failures resulting from this are easily fixed and not a concern at all - down to it just doing a better job of detecting 'weird' encodings like Windows-932 - but this one is:

That demonstrates exactly the concern I had in the 'Disadvantages' section. For `decoder`, this library probably works pretty well, but for `encode` this doesn't really work at all, since it'll happily write out nonsense like 'Russian encoded in Windows-932', then correctly detect the weird encoding, which leads `encode` to think this is definitely the right encoding for the job.
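To make the round-trip concern concrete, here is a minimal sketch using only Python's built-in codecs. No detection library is involved, and the sample string is made up for illustration rather than taken from any plugin:

```python
# Hypothetical sample string, not taken from any real plugin.
text = 'Привет, мир'

# cp932 contains Cyrillic letters, so encoding Russian text with it succeeds.
as_cp932 = text.encode('cp932')
# This is the encoding the Russian version of the game actually uses.
as_cp1251 = text.encode('cp1251')

# Decoding with the same 'weird' encoding gives the text back, so a
# write-then-detect check sees nothing wrong with cp932.
round_trip_ok = as_cp932.decode('cp932') == text

# A reader that assumes Windows-1251 gets garbage from the cp932 bytes.
misread = as_cp932.decode('cp1251', errors='replace')

print(round_trip_ok)
print(as_cp932 != as_cp1251)
print(misread != text)
```

So a successful round trip only shows that the encoding *can* represent the text, not that it is the one the game expects.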

I'll just shelve this for now. Maybe once we've completely rethought our encoding handling for plugins - see #42 and #46 - we can take another look at this.

Ideally we'd have per-plugin encodings, with a command that explicitly performs the autodetection once and stores the result as the per-plugin encoding. That command could then read every encoded string in the plugin with an unknown encoding, join them all with newlines into one long bytestring, then call our charset detection library on that one long string. That would give the library much more data to work with for determining the encoding - which must be the same across all those plugin strings.
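A rough sketch of that idea, not a worked-out design: `detect_plugin_encoding` and `_default_detect` are hypothetical names, and the default detector assumes charset-normalizer's `from_bytes` with its `cp_exclusion` parameter to skip the two Big5 codecs mentioned above. The detector is passed in as a callable so a different library could be swapped in.

```python
from typing import Callable, Iterable, Optional


def _default_detect(data: bytes) -> Optional[str]:
    """Assumed detector: charset-normalizer with the Big5 codecs excluded."""
    # Imported lazily so the sketch loads without the third-party package.
    from charset_normalizer import from_bytes
    best = from_bytes(data, cp_exclusion=['big5', 'big5hkscs']).best()
    return best.encoding if best is not None else None


def detect_plugin_encoding(
        raw_strings: Iterable[bytes],
        detect: Callable[[bytes], Optional[str]] = _default_detect,
) -> Optional[str]:
    """Guess one encoding for all still-undecoded strings of a plugin.

    The strings are joined with newlines into one long bytestring so the
    detector sees as much data as possible in a single call.
    """
    sample = b'\n'.join(s for s in raw_strings if s)
    if not sample:
        return None  # nothing to go on, let the caller pick a fallback
    return detect(sample)
```

The returned name would then be stored as the per-plugin encoding and reused for both reading and writing, so detection never runs on text we just wrote ourselves.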

charset-normalizer claims to be more accurate and faster than chardet. It's used by some notable big projects already (e.g. requests).

Advantages:

Disadvantages:

Quote from the readme:

That might not gel well with our use case, especially when we then use the found encoding to write with afterwards. Would need to do some tests to see for sure.