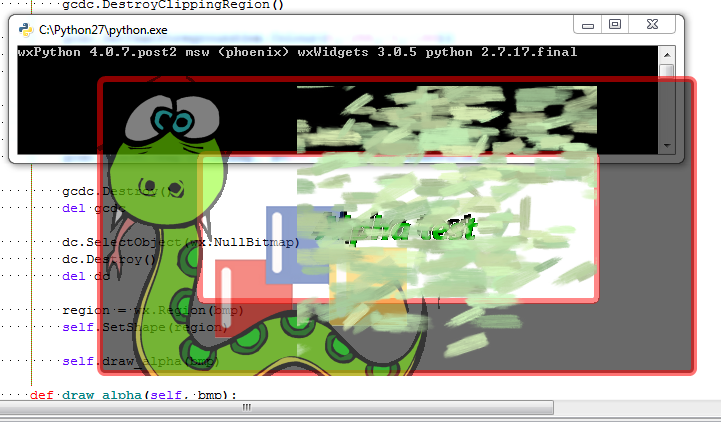

Hmmm well whatdayaknow... it sorta works. Still has the BLACK issue on wxPy4.1 tho.... I'm beginning to think that whatever changed in wxWidgets is what may be causing the performance issues on wxPy4.1 also, but am not sure...

brushwithalpha.png


```python
#!/usr/bin/env python
# -*- coding: utf-8 -*-

""""""

# Imports.--------------------------------------------------------------------
# -Python Imports.
import os
import sys
import ctypes
from ctypes.wintypes import LONG, HWND, INT, HDC, HGDIOBJ, BOOL, DWORD

# -wxPython Imports.
import wx

wxVER = 'wxPython %s' % wx.version()
pyVER = 'python %d.%d.%d.%s' % sys.version_info[0:4]
versionInfos = '%s %s' % (wxVER, pyVER)

UBYTE = ctypes.c_ubyte

GWL_EXSTYLE = -20
WS_EX_LAYERED = 0x00080000
ULW_ALPHA = 0x00000002
AC_SRC_OVER = 0x00000000
AC_SRC_ALPHA = 0x00000001


class POINT(ctypes.Structure):
    _fields_ = [
        ('x', LONG),
        ('y', LONG)
    ]


class SIZE(ctypes.Structure):
    _fields_ = [
        ('cx', LONG),
        ('cy', LONG)
    ]


class BLENDFUNCTION(ctypes.Structure):
    _fields_ = [
        ('BlendOp', UBYTE),
        ('BlendFlags', UBYTE),
        ('SourceConstantAlpha', UBYTE),
        ('AlphaFormat', UBYTE)
    ]


user32 = ctypes.windll.User32
gdi32 = ctypes.windll.Gdi32

# LONG GetWindowLongW(
#   HWND hWnd,
#   int  nIndex
# );
GetWindowLongW = user32.GetWindowLongW
GetWindowLongW.restype = LONG

# LONG SetWindowLongW(
#   HWND hWnd,
#   int  nIndex,
#   LONG dwNewLong
# );
SetWindowLongW = user32.SetWindowLongW
SetWindowLongW.restype = LONG

# HDC GetDC(
#   HWND hWnd
# );
GetDC = user32.GetDC
GetDC.restype = HDC

# HWND GetDesktopWindow();
GetDesktopWindow = user32.GetDesktopWindow
GetDesktopWindow.restype = HWND

# HDC CreateCompatibleDC(
#   HDC hdc
# );
CreateCompatibleDC = gdi32.CreateCompatibleDC
CreateCompatibleDC.restype = HDC

# HGDIOBJ SelectObject(
#   HDC     hdc,
#   HGDIOBJ h
# );
SelectObject = gdi32.SelectObject
SelectObject.restype = HGDIOBJ

# BOOL UpdateLayeredWindow(
#   HWND          hWnd,
#   HDC           hdcDst,
#   POINT         *pptDst,
#   SIZE          *psize,
#   HDC           hdcSrc,
#   POINT         *pptSrc,
#   COLORREF      crKey,
#   BLENDFUNCTION *pblend,
#   DWORD         dwFlags
# );
UpdateLayeredWindow = user32.UpdateLayeredWindow
UpdateLayeredWindow.restype = BOOL

COLORREF = DWORD


def RGB(r, g, b):
    return COLORREF(r | (g << 8) | (b << 16))


class Frame(wx.Frame):

    def __init__(self, parent, id=wx.ID_ANY, title=wx.EmptyString,
                 pos=wx.DefaultPosition, size=wx.DefaultSize,
                 style=wx.FRAME_SHAPED |
                       wx.NO_BORDER |
                       wx.FRAME_NO_TASKBAR |
                       wx.STAY_ON_TOP,
                 name='frame'):

        wx.Frame.__init__(self, parent, id, title, pos, size, style, name)

        self.delta = (0, 0)

        self.Bind(wx.EVT_LEFT_DOWN, self.OnLeftDown)
        self.Bind(wx.EVT_LEFT_UP, self.OnLeftUp)
        self.Bind(wx.EVT_MOTION, self.OnMouseMove)
        self.Bind(wx.EVT_RIGHT_UP, self.OnExit)
        self.Bind(wx.EVT_ERASE_BACKGROUND, lambda x: None)
        self.Bind(wx.EVT_PAINT, self.OnPaint)

    def OnExit(self, event):
        self.Close()

    def OnLeftDown(self, event):
        self.CaptureMouse()
        x, y = self.ClientToScreen(event.GetPosition())
        originx, originy = self.GetPosition()
        dx = x - originx
        dy = y - originy
        self.delta = ((dx, dy))

    def OnLeftUp(self, event):
        if self.HasCapture():
            self.ReleaseMouse()

    def OnMouseMove(self, event):
        if event.Dragging() and event.LeftIsDown():
            x, y = self.ClientToScreen(event.GetPosition())
            fp = (x - self.delta[0], y - self.delta[1])
            self.Move(fp)

    def OnPaint(self, event):
        width, height = self.GetClientSize()
        text = 'Alpha test'

        font = self.GetFont()
        font.SetPointSize(font.GetPointSize() + 16)
        font = font.MakeBold()
        font = font.MakeItalic()

        bmp = wx.Bitmap(width, height)
        bmp2 = wx.Bitmap(width, height)
        bmp3 = wx.Bitmap(width, height)

        dc = wx.MemoryDC()
        dc.SelectObject(bmp3)
        dc.SetTextForeground(wx.Colour(255, 255, 255, 255))
        dc.SetFont(font)

        text_width, text_height = dc.GetFullTextExtent(text)[:2]
        text_x = (width / 2) - (text_width / 2)
        text_y = (height / 2) - (text_height / 2)
        dc.DrawText(text, text_x, text_y)

        dc.SelectObject(bmp2)
        dc.SetPen(wx.Pen(wx.Colour(255, 255, 255, 255), 6))
        dc.SetBrush(wx.Brush(wx.Colour(255, 255, 255, 255)))
        dc.DrawRoundedRectangle(103, 78, width - 200 - 3, height - 150 - 3, 5)

        dc.SelectObject(bmp)
        gc = wx.GraphicsContext.Create(dc)
        gcdc = wx.GCDC(gc)

        region1 = wx.Region(bmp)
        region2 = wx.Region(bmp2, wx.Colour(0, 0, 0, 0))
        region1.Subtract(region2)
        gcdc.SetDeviceClippingRegion(region1)

        gc.SetPen(wx.Pen(wx.Colour(255, 0, 0, 140), 6))
        gc.SetBrush(wx.Brush(wx.Colour(0, 0, 0, 150)))
        gc.DrawRoundedRectangle(3, 3, width-6, height-6, 5)
        gcdc.DestroyClippingRegion()

        gc.SetPen(wx.Pen(wx.Colour(255, 0, 0, 120), 6))
        gc.SetBrush(wx.Brush(wx.Colour(0, 0, 0, 0)))
        gc.DrawRoundedRectangle(103, 78, width - 200 - 3, height - 150 - 3, 5)

        region1 = wx.Region(bmp)
        region2 = wx.Region(bmp3, wx.Colour(0, 0, 0, 0))
        region1.Subtract(region2)
        gcdc.SetDeviceClippingRegion(region1)

        gcdc.SetTextForeground(wx.Colour(0, 0, 0, 130))
        gcdc.SetTextBackground(wx.TransparentColour)
        gcdc.SetFont(font)
        gc.DrawText(text, text_x - 4, text_y - 4)
        gcdc.DestroyClippingRegion()

        gcdc.SetTextForeground(wx.Colour(0, 255, 0, 150))
        gcdc.SetTextBackground(wx.TransparentColour)
        gc.DrawText(text, text_x, text_y)

##        vippibmp = wx.Bitmap('Vippi50.png', wx.BITMAP_TYPE_PNG)
##        gcdc.DrawBitmap(vippibmp, x=0, y=0, useMask=False)
        brushbmp = wx.Bitmap('brushwithalpha.png', wx.BITMAP_TYPE_PNG)
        gcdc.DrawBitmap(brushbmp, x=200, y=10, useMask=False)

        gcdc.Destroy()
        del gcdc

        dc.SelectObject(wx.NullBitmap)
        dc.Destroy()
        del dc

        region = wx.Region(bmp)
        self.SetShape(region)
        self.draw_alpha(bmp)

    def draw_alpha(self, bmp):
        hndl = self.GetHandle()
        style = GetWindowLongW(HWND(hndl), INT(GWL_EXSTYLE))
        SetWindowLongW(HWND(hndl), INT(GWL_EXSTYLE), LONG(style | WS_EX_LAYERED))

        hdcDst = GetDC(GetDesktopWindow())
        hdcSrc = CreateCompatibleDC(HDC(hdcDst))

        pptDst = POINT(*self.GetPosition())
        psize = SIZE(*self.GetClientSize())
        pptSrc = POINT(0, 0)
        crKey = RGB(0, 0, 0)

        pblend = BLENDFUNCTION(AC_SRC_OVER, 0, 255, AC_SRC_ALPHA)

        SelectObject(HDC(hdcSrc), HGDIOBJ(bmp.GetHandle()))
        UpdateLayeredWindow(
            HWND(hndl),
            HDC(hdcDst),
            ctypes.byref(pptDst),
            ctypes.byref(psize),
            HDC(hdcSrc),
            ctypes.byref(pptSrc),
            crKey,
            ctypes.byref(pblend),
            DWORD(ULW_ALPHA)
        )


if __name__ == '__main__':
    print(versionInfos)
    app = wx.App()
    frame = Frame(None, size=(600, 300), pos=(400, 400))
    frame.Show()
    app.MainLoop()
```

I'm guessing you ripped the constants out of win32 to make it purepython. Not sure what you would need to do that with linux or mac...

With the stacked frames approach I made I eventually got to a point where I rage quit about 10 times because flattening out the font into a png made the stroke look worse and worse as I went.

Then I found that the left edge wasn't rendering the first pixels on each line, for who knows what reason. Hardcoding -1 didn't work either... I figured I could paint the logo into a walrus sitting on a couch, combine it with my animated python powered apng, and rerender it so it might be able to be ripped into a throbber for an animated splash screen. Needless to say, I kept getting frustrated by the little problems.

Part of the issue is that the font isn't free, so that's why I was rendering it as a png.

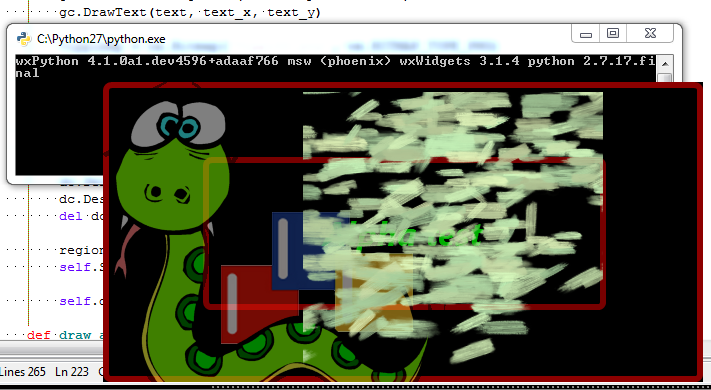

I think with your approach combined with 2 stacked frames it will be possible to do a fancy animated splash with alpha. I even spent the time to write a custom fade in timer for it that looks nice. ... But yea, I might have to add +1 or +2 to the frame size to work around the edge issue. That will likely uglify the code a bit by scattering extra variables everywhere, and most folks will then ask "Why did you add this hardcoded +2?"
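For anyone following along, here is a rough sketch of what one fade-in step could look like. This is not the timer mentioned above, just an illustration. `fade_alpha` is a made-up helper name, and the linear ramp is an assumption. The idea would be to call it from a `wx.Timer` handler and pass the result as the `SourceConstantAlpha` field of the `BLENDFUNCTION` used in `draw_alpha`, instead of the fixed 255.

```python
def fade_alpha(elapsed_ms, duration_ms, max_alpha=255):
    """Return a constant alpha in 0..max_alpha for a linear fade-in.

    Hypothetical helper: elapsed_ms is the time since the fade started,
    duration_ms is how long the whole fade should take.
    """
    if duration_ms <= 0:
        # No duration means no fade: show the window fully opaque.
        return max_alpha
    # Clamp the progress to the 0..1 range so late timer ticks stay valid.
    fraction = min(max(elapsed_ms / duration_ms, 0.0), 1.0)
    return int(max_alpha * fraction)


if __name__ == '__main__':
    # Alpha values a timer firing every 125 ms would produce over 500 ms.
    print([fade_alpha(t, 500) for t in range(0, 501, 125)])
```

Clamping means the handler can keep firing after the fade has finished without producing values outside the range a `c_ubyte` field can hold.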

Also, with your approach, live painting directly from krita onto a frame will now support an alpha background, which is nice.

This one renders slowly in browsers, since it is a gif.


If you can get the edges to look proper on the 2 with the transparency, then I think everything else looks fine with the python powered one with this iteration.

Operating system:

wxPython version & source:

Python version & source:

Description of the problem: There are a few issues I am running into. I have attached an image to show the problems that are taking place.

I am not able to draw any text that has an alpha channel. Well let me correct that. It draws the text, however the alpha channel is not used. You can see this in the attached image. Every color used in that image has an alpha channel. I did a screen capture with the frame placed over a white background so you would be able to see the problems. The white is not part of the example code below.

I have tried with GCDC.DrawText and also GraphicsContext.DrawText and neither of them support an alpha channel.

I am drawing a simple drop shadow using wx.Region to subtract the actual text from the bitmap where the shadow is drawn. The appearance starts out fine, but further along the text there end up being areas that get improperly clipped, and the problem gets worse the longer the text runs. You can see this in items 1, 2 and 3 of the attached image.

I have studied this fairly closely, and if you look at the text you will see it being antialiased to black. I am not sure why this is taking place; I have set the text background colour to `wx.TransparentColour`.

I may have mentioned this before, I do not remember if I have or not. This problem only becomes evident when using an alpha channel. When rendering a rectangle, the filled in area of the rectangle can be seen under the outline. This can be seen in item 5 of the image; you may need to zoom into the image to see it better.

If you look at item 4 you do not see this issue because the filled in area has an alpha channel of 0.

There needs to be some kind of check to see whether the pen's alpha level satisfies 0 < alpha < 255, and if that is the case then the filled in area needs to be adjusted to be smaller. I am sure this same issue also exists with the other drawing functions as well. There is also another issue regarding the use of these types of functions. If you take this example

The resulting rectangle is NOT 150, 150. It ends up being 154, 154. I do know this problem has existed for a very long time, and correcting it would cause a lot of problems for projects already using wxPython. Perhaps a global flag could be added that tells wxPython to render to exact sizes.
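The two geometry points above can be sketched as plain arithmetic. This is only an illustration under stated assumptions: that the outline is centred on the rectangle's edge, so half the pen width lands outside and half inside, and that the pen was 4 wide, which the post does not state. `stroked_extent` and `inset_fill_rect` are hypothetical helper names, not wxPython API.

```python
def stroked_extent(size, pen_width):
    """Outer size of a shape whose outline is centred on its edge.

    Half the pen width sticks out on each side, so the total grows by
    one full pen width (assumption: centred stroke).
    """
    return size + pen_width


def inset_fill_rect(x, y, width, height, pen_width):
    """Shrink a fill rectangle so it stops at the outline's inner edge.

    With a translucent pen (0 < alpha < 255) the fill would otherwise
    show through the inner half of the outline.
    """
    half = pen_width / 2.0
    return (x + half, y + half, width - pen_width, height - pen_width)


if __name__ == '__main__':
    # Assumed pen width of 4: a 150 wide rectangle spans 154 on screen.
    print(stroked_extent(150, 4))
    # Fill area that no longer overlaps the outline.
    print(inset_fill_rect(0, 0, 150, 150, 4))
```

Under the same assumption, passing `size - pen_width` as the requested size would be one way to end up with an exact on-screen size without any change to wxPython itself.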

I may be doing something incorrect, tho I do not think that I am.

Here is the frame as seen without the white behind it.

This example script will ONLY run on Windows.

@Metallicow Here is an example of how to overcome the alpha issue with shaped frames. This example only works on Windows. I would think that there are similar API functions for OSX and Linux that can be used to achieve the same results.