-

## Checklist

- [x] I'm reporting a bug in Sherlock's functionality

- [ ] The bug I'm reporting is not a false positive or a false negative

- [x] I've verified that I'm running the latest …

-

When I use Bolt quantization to run inference with the file ppo_cnn_int8_q.bolt, I hit this problem.

[ERROR] thread 24754 file /home/xys/bolt-master/inference/engine/include/factory.hpp line 242: this librar…

-

### Description

The TF version is 2.10.

The int8 tflite model has 4 Conv2DTranspose layers.

The int8 tflite model has uint8 input and output.

The edgetpu compiler version is 16.x.

When I edgetpu compile the tflite mo…

-

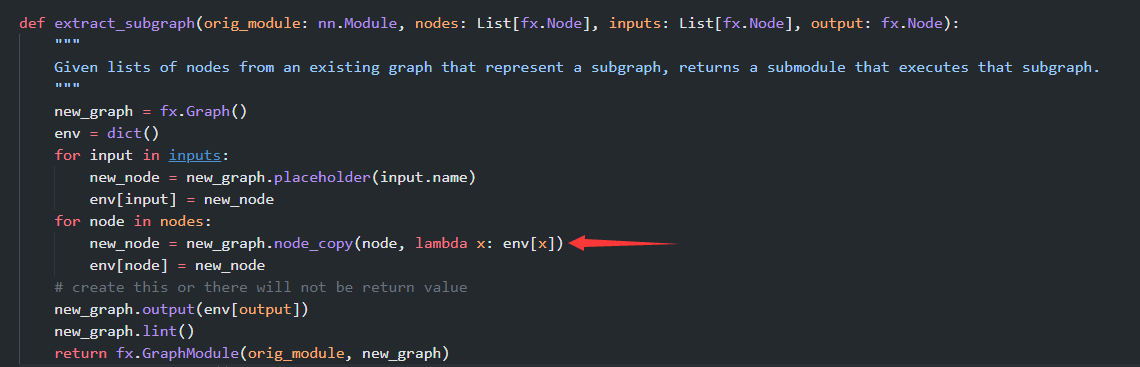

Hi, when I use advanced_ptq from mqbench to quantize my model, I get a keyvalue error at this point.

The adaround optimization operati…

-

Can you please let me know what the cause of this issue is?

-

### Search before asking

- [X] I have searched the Yolov5_StrongSORT_OSNet [issues](https://github.com/mikel-brostrom/Yolov5_StrongSORT_OSNet/issues) and [discussions](https://github.com/mikel-bros…

-

Hello team,

I am trying to quantize all the parameters of my model in a `per_tensor` way.

However, I found that the final quantized output model still contains `per_channel` layers.
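For context, the difference between the two granularities can be sketched in plain Python. This is an illustration only, not the toolkit's implementation: the function names, the symmetric int8 range, and the weight values are all hypothetical.

```python
# Illustrative sketch: symmetric int8 scales, per tensor vs. per channel.
# Not tied to any particular quantization toolkit's API.

def per_tensor_scale(weights, qmax=127):
    """One scale shared by every output channel."""
    max_abs = max(abs(w) for row in weights for w in row)
    return max_abs / qmax

def per_channel_scales(weights, qmax=127):
    """One scale per output channel (one per row here)."""
    return [max(abs(w) for w in row) / qmax for row in weights]

def quantize(weights, scales, qmax=127):
    """Round each weight with its row's scale, clamped to [-qmax, qmax]."""
    out = []
    for row, s in zip(weights, scales):
        out.append([max(-qmax, min(qmax, round(w / s))) for w in row])
    return out

# Hypothetical 2-channel weight matrix with very different ranges per channel.
weights = [[0.5, -1.27], [0.01, -0.04]]

shared = per_tensor_scale(weights)
per_ch = per_channel_scales(weights)

# per_tensor: the same scale is reused for every row.
q_tensor = quantize(weights, [shared] * len(weights))
# per_channel: each row gets its own scale.
q_channel = quantize(weights, per_ch)
```

With a shared scale, the small-range second channel uses only a few integer levels, while per-channel scales let each row use the full range. A model exported as `per_tensor` should therefore carry a single scale per weight tensor rather than one per channel.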

the `yaml` file is…

-

### Description

We have a tested, working industrial safety monitoring solution on an RPi and now need to port our TFLite model and inference solution to an industrial IMX8 gateway. By chasing the variou…

-

### 🐛 Describe the bug

I followed the guidelines in https://pytorch.org/blog/quantization-in-practice/#post-training-static-quantization-ptq and ran the code snippet:

```

# Static quantization of …

-

## Description

Using the `pytorch-quantization` toolkit of TensorRT to do PTQ on a PyTorch model gives scale values of 0 for some nodes, which causes an ONNX parse error when trying to load it into Tens…