Holmes 🔎 is benchmark dedicated to asses the linguistic competence of language models and features:

- Comprehensive analysis of 66 linguistic phenomena covering morphology, syntax, semantics, reasoning, and discourse abilities based on over 200 datasets.

- Benchmark evaluations of 59 language models. Dive into the insights via Holmes Leaderboard 🚀 and Holmes Explorer 🔎.

- Source code for evaluating new language models using the comprehensive Holmes 🔎 or the streamlined FlashHolmes ⚡.

Missing a specific language model? Either email us or evaluate it yourself. 👇

🔎 How does it work?

🔎️ Setting up the environment

To evaluate your chosen language model using the Holmes 🔎 or FlashHolmes ⚡ benchmarks, please ensure your setup meets the following requirements:

- Python version 3.10.

- Install all necessary packages using pip install -r requirements.txt`.

- If you want to load language models with

four_bit,installbitsandbytes.If you have trouble installing it, use the version0.42.0and verify the installation withpython3 -m bitsandbytes.

🔎 Getting the data

Don't worry about parsing linguistic corpora and composing probing datasets: we already did that. Find the download instructions for Holmes 🔎 (here) and for FlashHolmes ⚡ (here).

🔎 Investigate your language model using one command

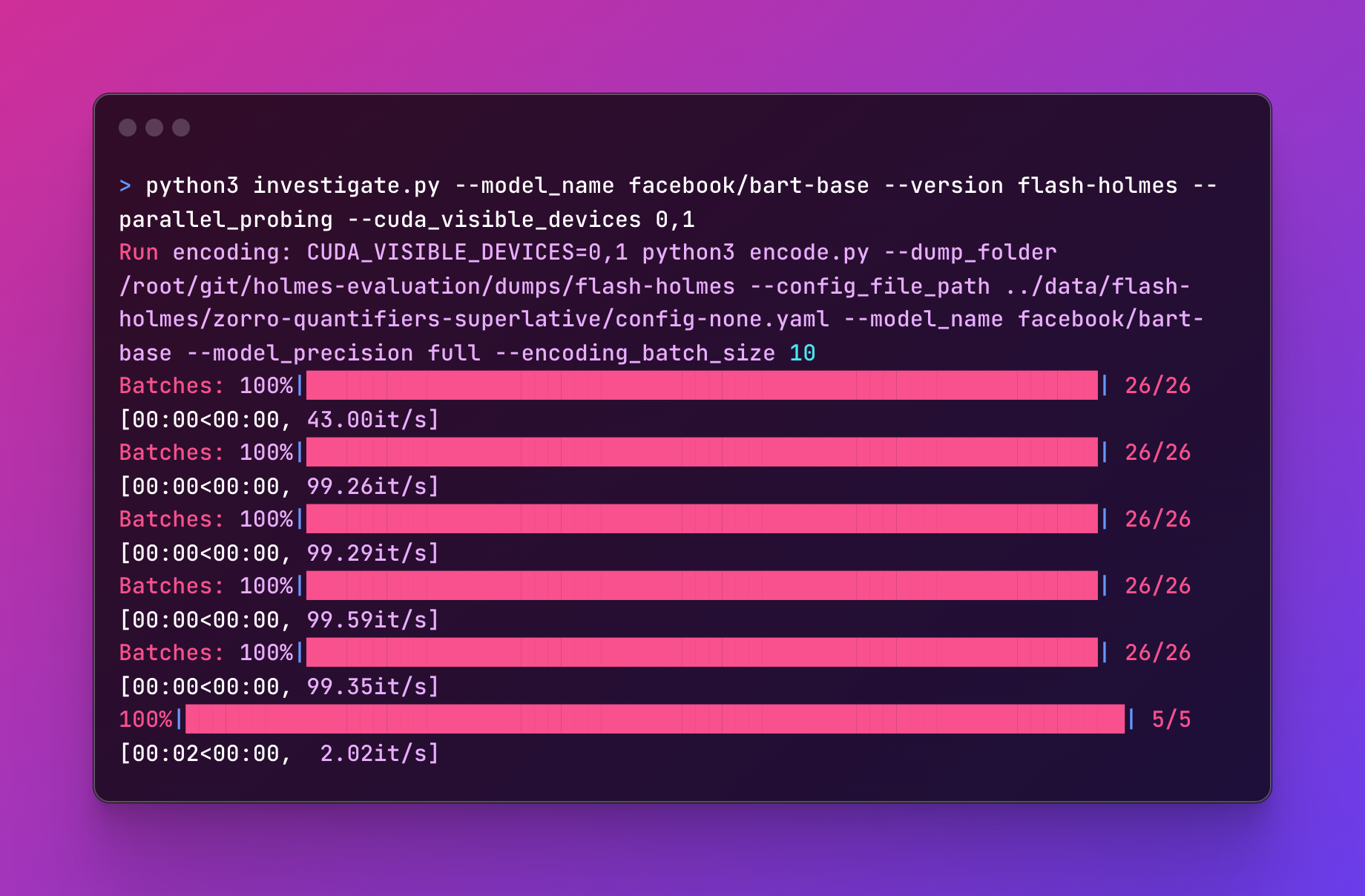

For ease of use, you can evaluate a language model with no more than on command like:

The investigation script (src/investigate.py) requires the following essential commands:

-

--model_nameis the huggingface tag of the model to investigate, for examplefacebook/bart-base. -

--versionis the specific benchmark version on which to evaluate. This corresponds to the data folder, either (holmes) for Holmes 🔎 or (flash-holmes) for FlashHolmes ⚡. -

--parallel_probingadd this flag parameter if you are in a hurry and want to parallelize stuff. -

--cuda_visible_devicesspecifies the GPU devices to use, for example, with0,1the evaluation will us GPU 0 and 1.Additional parameters you may need.

-

--dump_folder(default./dumps) is the folder used to save the encoded probing datasets. -

--force_encodingadd this flag parameter if you want to replace the dumped encodings of the probing dataset. Otherwise, we skip probing datasets when they are already encoded. -

--model_precision(defaultfull) specifies the precision to use when loading the language model, eitherfull,half, orfour_bit. Make sure to installbitsandbyteswhen you want to usefour_bit. -

--encoding_batch_size(default10) is the batch size when we encode the probing datasets. Lower this if you encounter out-of-memory errors on the GPU. -

--in_filter(default`) defines a string filter to only consider probing datasets matching this filter. For example, when setting torst, we only consider probing datasets likerst-edu-depth`. -

--control_task_types(defaultnone) whether to apply specific control tasks (Hewitt et al., 2019:noneno control task is applied,perminput words will be shuffled randomly,rand-weightsrun the probes with random language model weights, andrandomizationrun the probes with randomized labels. -

--run_probe(defaultTrue) run the default linear probe. -

--run_mdl_probe(defaultFalse) run the probe including minimal description length as in Voita and Titov, 2020 -

--num_hidden_layers(default0) hidden layers to consider within the probe. For example, with0,1, we evaluate the probes once with none (linear model) and once with one intermediate layer (MLP). -

--seeds(default0,1,2,3,4) seeds to consider when probing. With0,1,2,3,4, we run every probe five-time using these seeds. -

--results_folder(default./results) is the folder to save the probing results. -

--force_probingadd this flag parameter if you want to re-probe and replace already evaluated probing datasets. Otherwise, we skip already probed datasets. -

--dump_predsuse this flag parameter when you want to dump instance-level predictions for every probe for all probing datasets.

After running all probes an evaluations, you will find the aggregated results in the results folder. Either in results_holmes.csv for Holmes 🔎 or results_flash-holmes.csv for FlashHolmes ⚡.

🔎Disclaimer

We provide datasets in a specific format without endorsing their quality, fairness, or confirming your licensing rights. Users must verify their permissions under the dataset's license and properly credit the dataset owner.

If you own a dataset and want to update or remove it from our library, please contact us. Additionally, if you wish to include your dataset or model for evaluation, feel free contact as well!

References

Hewitt, J., & Liang, P. (2019, November). Designing and Interpreting Probes with Control Tasks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP) (pp. 2733-2743).

Voita, E., & Titov, I. (2020). Information-theoretic probing with minimum description length. In EMNLP 2020-2020 Conference on Empirical Methods in Natural Language Processing, Proceedings of the Conference (pp. 183-196).