Alpaca

Alpaca is an Ollama client that lets you manage and chat with multiple models. It provides an easy, beginner-friendly way of interacting with local AI. Everything is open source and powered by Ollama.

[!WARNING] This project is not affiliated with Ollama in any way. I'm not responsible for any damage to your device or software caused by running code given by any AI model.

[!IMPORTANT] Before interacting with this repository, please be aware that the GNOME Code of Conduct applies to Alpaca.

Features!

- Talk to multiple models in the same conversation

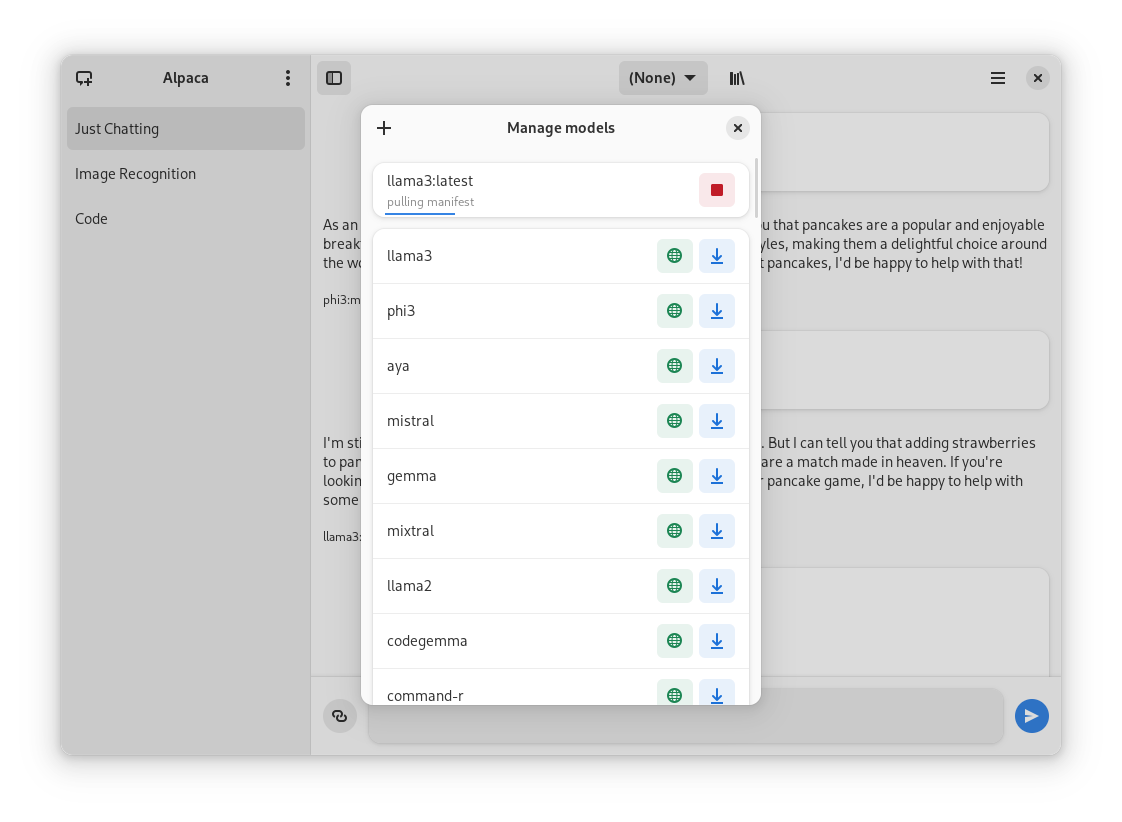

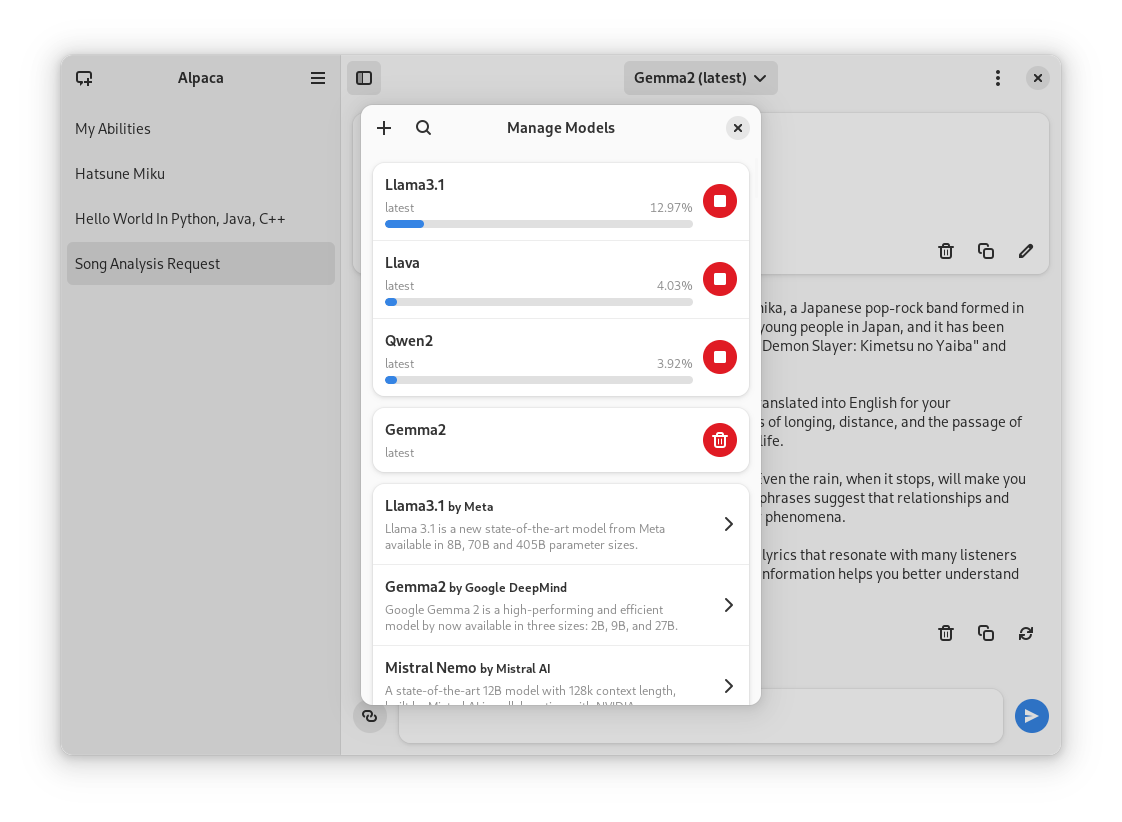

- Pull and delete models from the app

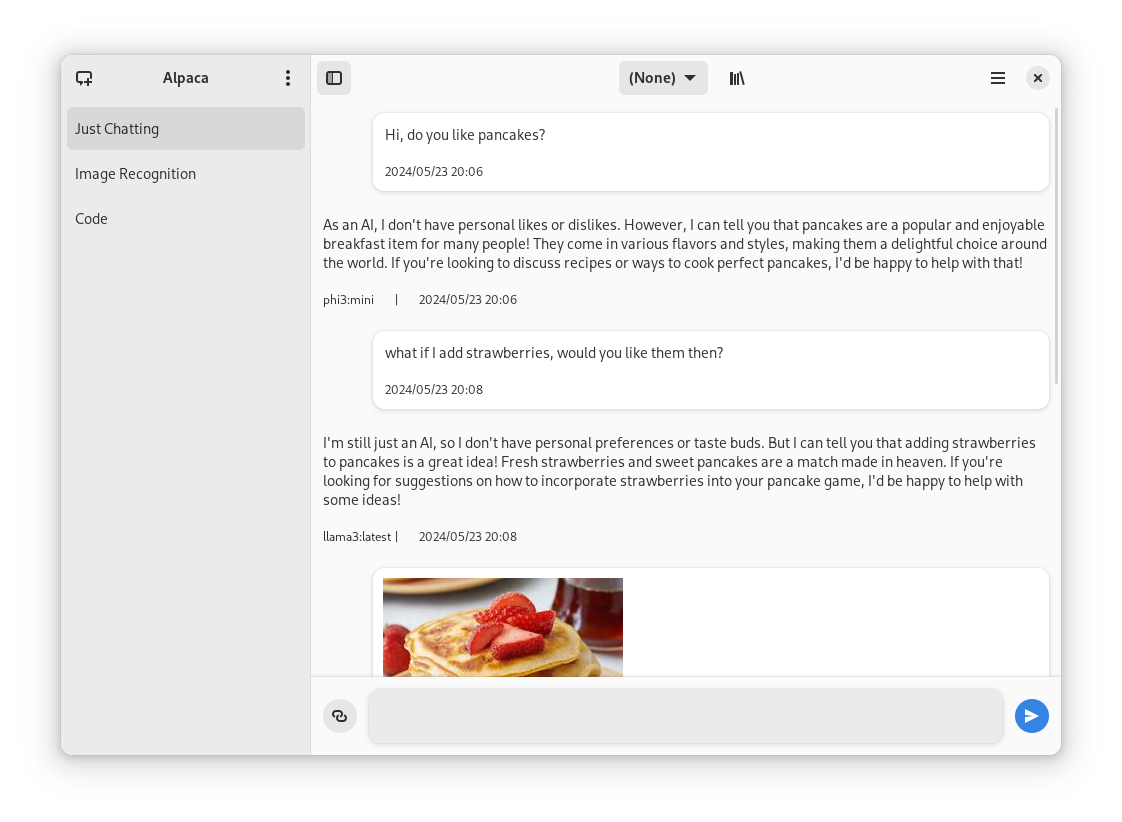

- Image recognition

- Document recognition (plain text files)

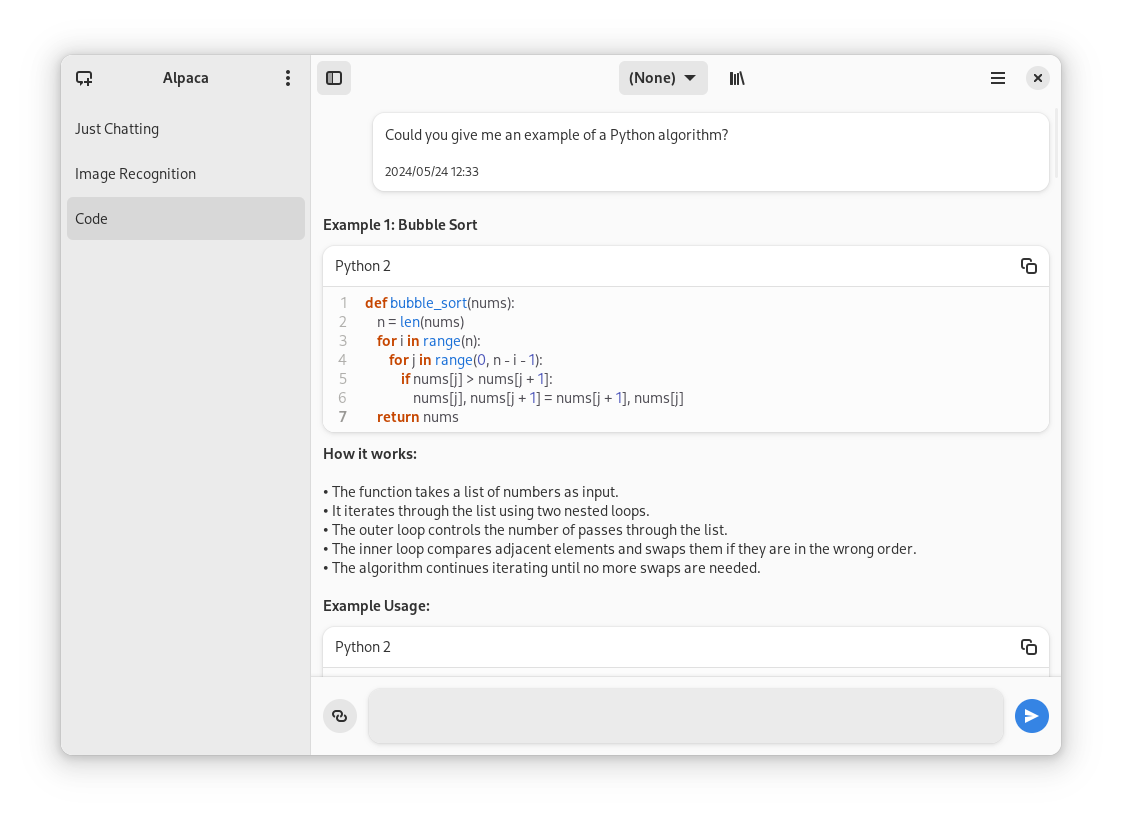

- Code highlighting

- Multiple conversations

- Notifications

- Import / Export chats

- Delete / Edit messages

- Regenerate messages

- YouTube recognition (Ask questions about a YouTube video using the transcript)

- Website recognition (Ask questions about a certain website by parsing the URL)

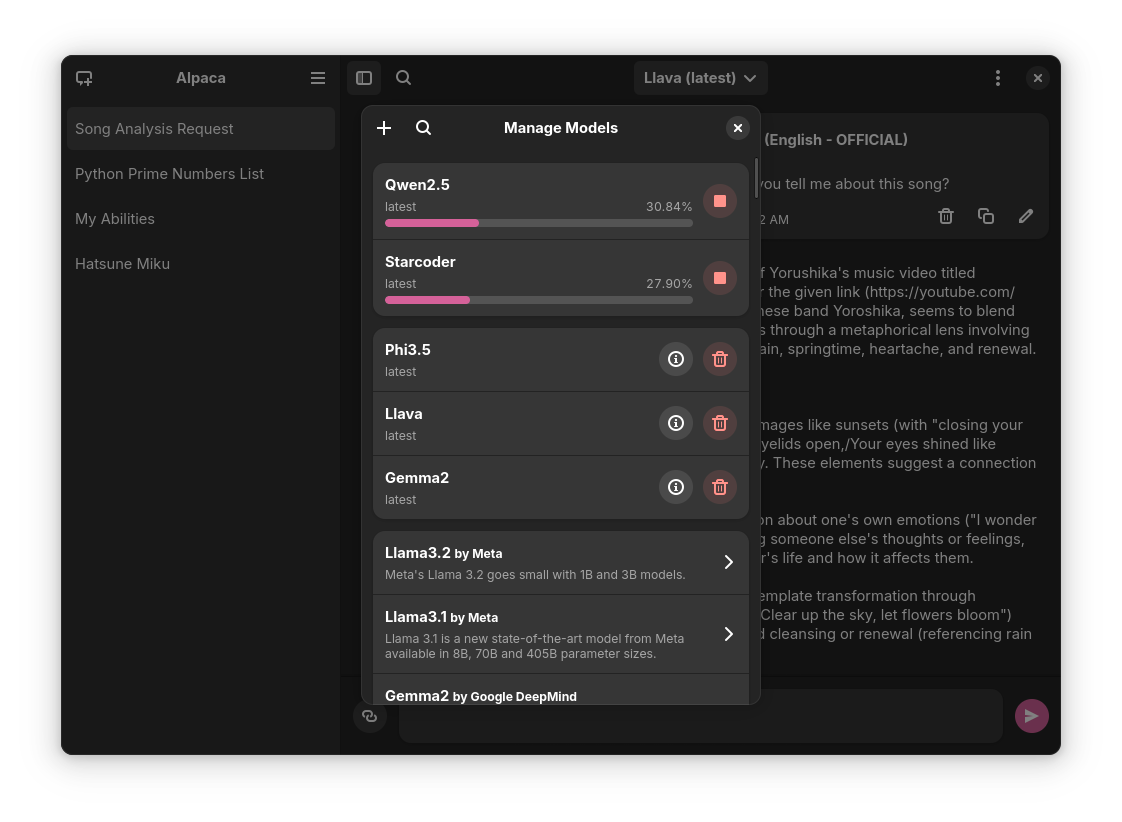

Screenies

| Normal conversation | Image recognition | Code highlighting | YouTube transcription | Model management |
|---|---|---|---|---|

Installation

Flathub

You can find the latest stable version of the app on Flathub.
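As a rough sketch, a command-line install from Flathub could look like the following. The application ID `com.jeffser.Alpaca` is an assumption that this README does not state, so confirm it on the app's Flathub page. The helper function names are hypothetical.

```shell
#!/bin/sh
# Assumption: application ID as listed on Flathub; verify it before use.
APP_ID="com.jeffser.Alpaca"

# Hypothetical helper: add the Flathub remote if it is missing, then install the app.
install_from_flathub() {
    flatpak remote-add --if-not-exists flathub https://dl.flathub.org/repo/flathub.flatpakrepo &&
    flatpak install flathub "$APP_ID"
}

# Hypothetical helper: launch the installed app.
run_alpaca() {
    flatpak run "$APP_ID"
}
```

Calling `install_from_flathub` followed by `run_alpaca` installs and starts the app. Nothing runs until the functions are called.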

Flatpak Package

Every time a new version is published, its Flatpak package becomes available on the releases page of the repository.

Snap Package

You can also find the Snap package on the releases page. To install it, run this command:

`sudo snap install ./{package name} --dangerous`

The `--dangerous` flag is needed because the package is installed without any involvement of the Snap Store. I'm working on getting the app there, but for now you can test the app this way.
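The Snap install step can be wrapped in a small guard so that a mistyped file name fails early instead of reaching `sudo`. This is only a sketch: the function name `install_local_snap` is hypothetical, and the file path is whatever you downloaded from the releases page.

```shell
#!/bin/sh
# Hypothetical helper: install a snap file downloaded from the releases page.
# Usage: install_local_snap ./<package name>
install_local_snap() {
    pkg="$1"
    # Refuse to continue if the given path is not an existing regular file.
    if [ ! -f "$pkg" ]; then
        echo "snap file not found: $pkg" >&2
        return 1
    fi
    # --dangerous allows installing a local file that did not come from the Snap Store.
    sudo snap install "$pkg" --dangerous
}
```

The check runs before `sudo`, so no password prompt appears when the path is wrong.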

Nix

Alpaca is also available in Nixpkgs. See package info for installation instructions.

Notes:

- The package is not maintained by the author but by @Aleksanaa, so any issue that may or may not be related to packaging should be reported to Nixpkgs issues.

- Alpaca is automatically updated in Nixpkgs, but with a delay, and new updates will only be available after testing.

- Alpaca in Nixpkgs does not come with sandboxing by default. If you directly execute scripts generated by Ollama (without even reviewing them first), there is no isolation to prevent you from shooting yourself in the foot. You can still use Nixpak or Firejail for convenient sandboxing.
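To try the Nixpkgs package without installing it permanently, something like the sketch below may work. It assumes that both the Nixpkgs attribute and the executable are named `alpaca`, which this README does not confirm, so check the package info first. The helper name is hypothetical, and no sandboxing is applied here.

```shell
#!/bin/sh
# Assumption: the Nixpkgs attribute and the executable are both named "alpaca".
NIX_ATTR="alpaca"

# Hypothetical helper: start Alpaca from a temporary nix-shell environment.
# This does NOT sandbox the app; see the note above about Nixpak or Firejail.
try_alpaca_with_nix() {
    nix-shell -p "$NIX_ATTR" --run "$NIX_ATTR"
}
```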

Building Git Version

Note: This is not recommended since the prerelease versions of the app often present errors and general instability.

- Clone the project

- Open with GNOME Builder

- Press the run button (or export if you want to build a Flatpak package)
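The clone step above could be scripted roughly as follows. The repository URL is an assumption not given in this README, so substitute the URL of the repository you are viewing. The function name `clone_alpaca` is hypothetical.

```shell
#!/bin/sh
# Assumption: repository URL; replace it with the actual project URL if it differs.
REPO_URL="https://github.com/Jeffser/Alpaca.git"

# Hypothetical helper: clone the sources into a target directory (default: ./Alpaca).
clone_alpaca() {
    target="${1:-Alpaca}"
    # Avoid cloning over something that already exists.
    if [ -e "$target" ]; then
        echo "target already exists: $target" >&2
        return 1
    fi
    git clone "$REPO_URL" "$target"
}
```

After `clone_alpaca` finishes, open the cloned directory in GNOME Builder and press the run button as described in the steps.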

Translators

| Language | Contributors |
|---|---|
| 🇷🇺 Russian | Alex K |
| 🇪🇸 Spanish | Jeffry Samuel |
| 🇫🇷 French | Louis Chauvet-Villaret, Théo FORTIN |
| 🇧🇷 Brazilian Portuguese | Daimar Stein, Bruno Antunes |
| 🇳🇴 Norwegian | CounterFlow64 |
| 🇮🇳 Bengali | Aritra Saha |
| 🇨🇳 Simplified Chinese | Yuehao Sui, Aleksana |
| 🇮🇳 Hindi | Aritra Saha |
| 🇹🇷 Turkish | YusaBecerikli |
| 🇺🇦 Ukrainian | Simon |
| 🇩🇪 German | Marcel Margenberg |
| 🇮🇱 Hebrew | Yosef Or Boczko |
| 🇮🇳 Telugu | Aryan Karamtoth |
| 🇮🇹 Italian | Edoardo Brogiolo |

Want to add a language? Visit this discussion to get started!

Thanks

- not-a-dev-stein for their help with requesting a new icon and bug reports

- TylerLaBree for their requests and ideas

- Imbev for their reports and suggestions

- Nokse for their contributions to the UI and table rendering

- Louis Chauvet-Villaret for their suggestions

- Aleksana for her help with better handling of directories

- GNOME Builder Team for the awesome IDE I use to develop Alpaca

- Sponsors for giving me enough money to be able to take a ride to my campus every time I need to <3

- Everyone that has shared kind words of encouragement!