Thanks for your request!

Heads up, you’re requesting more than the typical weekly onboarding rate of DataCap!

Closed: NDLABS-Leo closed 1 year ago

Thanks for your request! Everything looks good. :ok_hand:

A Governance Team member will review the information provided and contact you back pretty soon.

Dear Applicant,

Due to the recent increase in erroneous Filecoin+ data, we feel compelled, on behalf of the entire community, to look more closely at DataCap requests. Our aim is to ensure that the overall value of the Filecoin network and the Filecoin+ program increases and that the program is not abused.

Please answer the questions below as comprehensively as possible.

Customer data

We expect that for the onboarding of customers with the scale of an LDN there would have been at least multiple email and perhaps several chat conversations preceding it. A single email with an agreement does not qualify here.

Did the customer specify the amount of data involved in this relevant correspondence?

Why does the customer in question want to use the Filecoin+ program?

Should this be solely for acquiring DataCap, it is of course out of the question. The customer must have a legitimate reason for wanting to use the Filecoin+ program, which is intended to store useful and public datasets on the network.

(As an intermediate solution Filecoin offers the FIL-E program or the glif.io website for business datasets that do not meet the requirements for a Filecoin+ dataset)

Files and Processing

Hopefully you understand the caution the overall community has for onboarding the wrong data. We understand the increased need for Filecoin+, however, we must not allow the program to be misused. Everything depends on a valuable and useful network, let's do our best to make this happen. Together.

Hello @NDLABS-OFFICE

Thank you for this application. Great to see that you want to store real data of value for humanity.

How do you plan to prepare the dataset: IPFS, lotus

If you answered "other/custom tool" in the previous question, enter the details here: No response

Can you give us some more detail here? Is your plan to redistribute datasets that you have already downloaded from other data preparers? I ask because most of these sets have already been put on the network numerous times and are sitting with 50+ SPs, I think.

If not, what software do you intend to use to start creating these datasets for distribution?

@cryptowhizzard @herrehesse Thank you for your questions and I hope that my answers explain clearly what we are doing now. We also want to fully inform the community about the following information. If there are any other questions, please let me know. We are in favour of full transparency and openness.

Client Information. ND LABS has been continuously involved in the construction and growth of the Fil+ since its V2 notary. We have invited many web2 and web3 clients to store their real open data on the IPFS network, with datasets containing media videos, web3 NFT-related project data, e-commerce data, think tanks, and other areas. Companies are also invited by us through E-Fil to store data that cannot be disclosed. All of the above work is progressing steadily, and there is still a surplus of infrastructure built by ND, so we hope to store data that is open and valuable to the public in the IPFS network in the next work. ND hopes to contribute to the development of the Filecoin ecosystem in the wave of decentralized storage. With the recent virus outbreak, we are taking "life science data" as our first step. As stated in my application form, it is fully public data and is valuable, and we plan to store this dataset on the network for about 10 backups. Because the data is of public origin, it is not possible to contact specific clients.

Documentation and Processing. ND has separate server rooms in Singapore, Hong Kong, and the US, and currently has at least another 40P+ of storage capacity, as well as multiple machines for building CAR files and web servers to provide download services. At all three of these separate server rooms (with approximately 100Mbps of bandwidth allocated to the Fil+ project) we can download, package, and send the above datasets. Moreover, during the time ND has been active in the community, we have contacted many high-quality SPs in the industry, and we will distribute data to these high-quality SPs for storage. In addition, the storage middleware developed by ND will check the data for duplication to ensure that the data is not duplicated or misused. In addition, the nodes provided by ND and high-quality SPs in the industry will support data retrieval, and we will also provide data storage CIDs for network-wide retrieval if needed.

We will do our best to do a good job of storage. We welcome the supervision of the community and hope that notaries can support our project. Thanks.

It sounds good.

I will be frank and honest with you, like I am with everyone. Your reputation is questionable. I won't link the Slack threads here with Ipollo, 1475notary, etc., but you get the point.

For God's sake, please do this right once and for all and make the difference. Stand out to the community and make sure this goes 100% okay. Use Boost to make the deals so they are retrievable, make sure the SPs stay online, and be transparent, so we can stop looking at the past and start looking at a brighter future where there is real data stored on this network and in this program that is valuable to humanity.

I will support this first round of DataCap so you have the means to prove yourselves.

Good luck!

5PiB

500TiB

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

f01858410

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

250TiB

0b82e640-da6e-4294-a19d-476b95adf32e

There is no previous allocation for this issue.

[^1]: To manually trigger this report, add a comment with text checker:manualTrigger

Hi, @cryptowhizzard

Firstly, thanks for your understanding and support.

In addition, I want to clarify that when we joined the Fil+ community, our reputation was affected by a misunderstanding of the disclosure rules. We were trying to encourage more valuable businesses to join the community. However, the results were not satisfying for everyone.

We also continue to actively contact the governance team, and rectify and disclose that information.

What's more, I also want to point out that we are firm believers in Filecoin, and the good development of Fil+ is also the basis for our development.

So, again, we will do our best to do a perfect job of storage (especially data that is really valuable to the public). The supervision of the community is also welcome, and we will keep pace with the community.

Thanks again, peace & love!

Your Datacap Allocation Request has been proposed by the Notary

bafy2bzacecruocjgo66rw7qnnyxingzxvrvcpgnpkkmwg4yswnyeyvsjaxzd4

Address

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

Datacap Allocated

250.00TiB

Signer Address

f1krmypm4uoxxf3g7okrwtrahlmpcph3y7rbqqgfa

Id

0b82e640-da6e-4294-a19d-476b95adf32e

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacecruocjgo66rw7qnnyxingzxvrvcpgnpkkmwg4yswnyeyvsjaxzd4

Your Datacap Allocation Request has been approved by the Notary

bafy2bzacecexo3di3atjikecq2lxercqq7vhu6aw4wycbz5eifpy4vpnfyl2i

Address

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

Datacap Allocated

250.00TiB

Signer Address

f1a2lia2cwwekeubwo4nppt4v4vebxs2frozarz3q

Id

0b82e640-da6e-4294-a19d-476b95adf32e

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacecexo3di3atjikecq2lxercqq7vhu6aw4wycbz5eifpy4vpnfyl2i

I am willing to approve the first round of this series.

checker:manualTrigger

No client address found for this issue.

[^1]: To manually trigger this report, add a comment with text checker:manualTrigger

f01858410

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

500TiB

9c77146e-a487-4005-9e54-abf36e6a7c01

f01858410

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

xingjitansuo & cryptowhizzard

100% of weekly dc amount requested

500TiB

250TiB

4.75PiB

| Number of deals | Number of storage providers | Previous DC Allocated | Top provider | Remaining DC |
|---|---|---|---|---|
| 5736 | 10 | 250TiB | 16.35 | 58.93TiB |

It appears that the checker bot is not working, so we will disclose the CIDs of some of the stored files and ask community members to verify them for our LDN. If more information is required, please contact us on Slack (ND LABS - OFFICE). All of our nodes support file retrieval.

f01740934: piece_cid:baga6ea4seaql3nwjb4kexvk53rup45b3c76yrbuxgrlltvlvdnrhewcmmps46ci payload_cid:bafykbzacedcx3eitxl2ydwvpsdabzcdwhp35gwxgiy7e76psynayv2xscdf5q

f01834253: piece_cid:baga6ea4seaqn26rt5zcpe7533do2va2ia46nhkjr37gkwxhwg5p656xr7nn7udi payload_cid:bafykbzacedqyizguncvlqny3a7fipvytjfry2yv3taj3ur322xalux4sastoq

f01853104: piece_cid:baga6ea4seaqfq2qqn3gocmuunvuhr7ubylg44kprntknb7fkab2j3nvscv4nkca payload_cid:bafykbzaceacykgjk3esbmitkwiw67glesn53zqbrlbmqmvili2kcp3z75wons

f01854080: piece_cid:baga6ea4seaqk4wrzjlbg6p3gibcaorxnzjbvfem32vhwlta6ethlt5c5pn3xuky payload_cid:bafykbzacecdykwdsz3xfszxnv2cfuoej236bsa277x66ntgggpoclwjldykaw

f01890456: piece_cid:baga6ea4seaqd4hkfd3p6n6iwfkglpxxoxxdidfszz3rywopzizbfupcysngn4iq payload_cid:bafykbzacedf33i2e5ntvv66ikvsdjgbycexrwogq2yc4j7iqtlynp34tgf6v2

f01985611: piece_cid:baga6ea4seaqikwl765shan4y4k7z3aaliilpodrcnuo3mlpwh3purppm7a46kia payload_cid:bafykbzaceb2iz3bnmk4m34kpravkemje2caewlgiigz4evqb6i2xuko4vqowy
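For anyone who wants to check the samples above programmatically, here is a minimal sketch that splits each disclosed line into its provider ID, piece CID, and payload CID. The `DealSample` type and `parse_sample` helper are hypothetical names used only for illustration; the sketch only parses the text format used in this comment and does not contact any storage provider.

```python
import re
from typing import NamedTuple


class DealSample(NamedTuple):
    """One disclosed sample: provider ID plus its piece and payload CIDs."""
    provider: str
    piece_cid: str
    payload_cid: str


# Matches lines shaped like "f0123: piece_cid:<cid> payload_cid:<cid>".
LINE_RE = re.compile(r"^(f0\d+):\s*piece_cid:(\S+)\s+payload_cid:(\S+)$")


def parse_sample(line: str) -> DealSample:
    """Parse one disclosed line; raise ValueError if the shape is unexpected."""
    match = LINE_RE.match(line.strip())
    if match is None:
        raise ValueError(f"unrecognised sample line: {line!r}")
    return DealSample(*match.groups())


if __name__ == "__main__":
    sample = parse_sample(
        "f01740934: piece_cid:baga6ea4seaql3nwjb4kexvk53rup45b3c76yrbuxgrlltvlvdnrhewcmmps46ci "
        "payload_cid:bafykbzacedcx3eitxl2ydwvpsdabzcdwhp35gwxgiy7e76psynayv2xscdf5q"
    )
    print(sample.provider, sample.piece_cid, sample.payload_cid)
```

The parsed fields can then be passed to whatever retrieval tooling a verifier already uses.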

I successfully retrieved a whole sector and verified the content is from S3.

aws s3 ls --no-sign-request s3://sra-pub-src-2/ERR4632019/

2021-05-08 11:26:57 258229844846 URT1_KO_1.tar.gz.2
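To compare a listing like the one above against retrieved data, the output line can be parsed into its timestamp, size, and object name. The following is a minimal sketch; `S3Entry` and `parse_s3_ls_line` are hypothetical helper names, and the sketch assumes the usual `date time size key` layout of `aws s3 ls` output for a single object.

```python
from datetime import datetime
from typing import NamedTuple


class S3Entry(NamedTuple):
    """One object line from an `aws s3 ls` listing."""
    modified: datetime
    size_bytes: int
    key: str


def parse_s3_ls_line(line: str) -> S3Entry:
    """Split 'YYYY-MM-DD HH:MM:SS <size> <key>' into typed fields."""
    date, time, size, key = line.split(None, 3)
    modified = datetime.strptime(f"{date} {time}", "%Y-%m-%d %H:%M:%S")
    return S3Entry(modified, int(size), key)


def size_gib(entry: S3Entry) -> float:
    """Object size in GiB (2**30 bytes)."""
    return entry.size_bytes / 2**30


if __name__ == "__main__":
    entry = parse_s3_ls_line("2021-05-08 11:26:57 258229844846 URT1_KO_1.tar.gz.2")
    print(entry.key, entry.size_bytes, f"{size_gib(entry):.2f} GiB")
```

The object size can then be checked against the total size of the pieces retrieved from the storage providers.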

Your Datacap Allocation Request has been proposed by the Notary

bafy2bzacebrnttm45vmgs7wt32rbfvdkg7i7ppjb4bbuccksl274e6kfa2otc

Address

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

Datacap Allocated

500.00TiB

Signer Address

f1yjhnsoga2ccnepb7t3p3ov5fzom3syhsuinxexa

Id

9c77146e-a487-4005-9e54-abf36e6a7c01

You can check the status of the message here: https://filfox.info/en/message/bafy2bzacebrnttm45vmgs7wt32rbfvdkg7i7ppjb4bbuccksl274e6kfa2otc

There was an error signing the transaction. The message cid: bafy2bzacebrnttm45vmgs7wt32rbfvdkg7i7ppjb4bbuccksl274e6kfa2otc

Please contact the governance team.

I tried connecting to the SPs below and everything looks fine. I hope you continue to abide by the Fil+ rules.

manually unblocking this, waiting to fix the issue

@fabriziogianni7 Thanks for your work, this helps us a lot.

Everything looks fine. Hope you continue to abide by the fil+ rules.

Your Datacap Allocation Request has been approved by the Notary

bafy2bzaceddflwoawpma373ljmt5owuqhob7lbi4rymv2ruke66caec3muvxs

Address

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

Datacap Allocated

500.00TiB

Signer Address

f1mdk7s2vntzm6hu35yuo6vjubtrpfnb2awhgvrri

Id

9c77146e-a487-4005-9e54-abf36e6a7c01

You can check the status of the message here: https://filfox.info/en/message/bafy2bzaceddflwoawpma373ljmt5owuqhob7lbi4rymv2ruke66caec3muvxs

@YuanHeHK @1ane-1 Thank you. We fully understand the Fil+ rules, we have made adjustments and optimizations at the technical level, and we continue to cooperate with many excellent SPs for storage. Thanks again for your support.

checker:manualTrigger

NDLABS f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

1 1ane-1, 1 cryptowhizzard, 1 kernelogic, 1 xingjitansuo

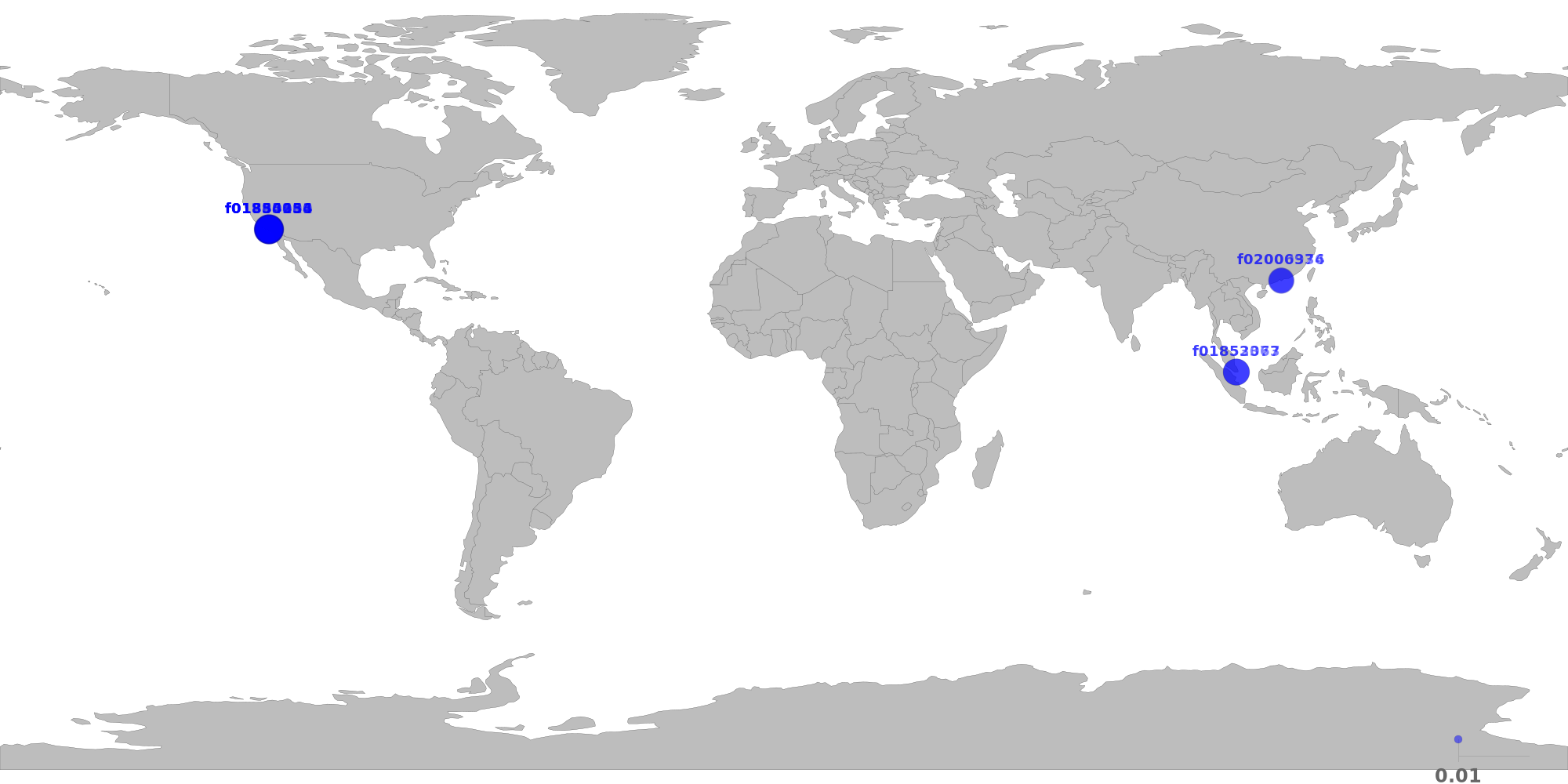

The below table shows the distribution of storage providers that have stored data for this client.

If this is the first time a provider takes verified deal, it will be marked as new.

For most of the datacap application, below restrictions should apply.

Since this is the 3rd allocation, the following restrictions have been relaxed:

✔️ Storage provider distribution looks healthy.

| Provider | Location | Total Deals Sealed | Percentage | Unique Data | Duplicate Deals |
|---|---|---|---|---|---|
| f02000936 | Hong Kong, Central and Western, HK<br/>7Road International HK Limited | 31.66 TiB | 7.20% | 31.66 TiB | 0.00% |
| f02006374 | Hong Kong, Central and Western, HK<br/>7Road International HK Limited | 25.00 TiB | 5.69% | 25.00 TiB | 0.00% |
| f01854080 | Los Angeles, California, US<br/>Zenlayer Inc | 66.31 TiB | 15.09% | 66.31 TiB | 0.00% |
| f01740934 | Los Angeles, California, US<br/>Zenlayer Inc | 55.63 TiB | 12.66% | 55.63 TiB | 0.00% |
| f01853104 | Los Angeles, California, US<br/>Zenlayer Inc | 54.75 TiB | 12.46% | 54.75 TiB | 0.00% |
| f01834253 | Los Angeles, California, US<br/>Zenlayer Inc | 53.63 TiB | 12.20% | 53.63 TiB | 0.00% |
| f01985611 | Los Angeles, California, US<br/>Zenlayer Inc | 44.44 TiB | 10.11% | 44.44 TiB | 0.00% |
| f01890456 | Los Angeles, California, US<br/>Zenlayer Inc | 38.81 TiB | 8.83% | 38.81 TiB | 0.00% |
| f01853077 | Singapore, Singapore, SG<br/>Zenlayer Inc | 35.72 TiB | 8.13% | 35.72 TiB | 0.00% |
| f01852363 | Singapore, Singapore, SG<br/>Zenlayer Inc | 33.44 TiB | 7.61% | 33.44 TiB | 0.00% |
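The Percentage column above can be approximately reproduced from the Total Deals Sealed column. This is a minimal sketch, assuming each percentage is the provider's sealed TiB divided by the sum over all ten providers; because the table shows rounded TiB values, a recomputed share can differ from the reported one by about 0.01. The `provider_shares` helper is a hypothetical name.

```python
# Total Deals Sealed (TiB) per provider, copied from the table above.
SEALED_TIB = {
    "f02000936": 31.66,
    "f02006374": 25.00,
    "f01854080": 66.31,
    "f01740934": 55.63,
    "f01853104": 54.75,
    "f01834253": 53.63,
    "f01985611": 44.44,
    "f01890456": 38.81,
    "f01853077": 35.72,
    "f01852363": 33.44,
}


def provider_shares(sealed_tib: dict[str, float]) -> dict[str, float]:
    """Return each provider's share of all sealed deals, in percent."""
    total = sum(sealed_tib.values())
    return {p: 100.0 * tib / total for p, tib in sealed_tib.items()}


if __name__ == "__main__":
    shares = provider_shares(SEALED_TIB)
    for provider, share in sorted(shares.items(), key=lambda kv: -kv[1]):
        print(f"{provider}: {share:.2f}%")
```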

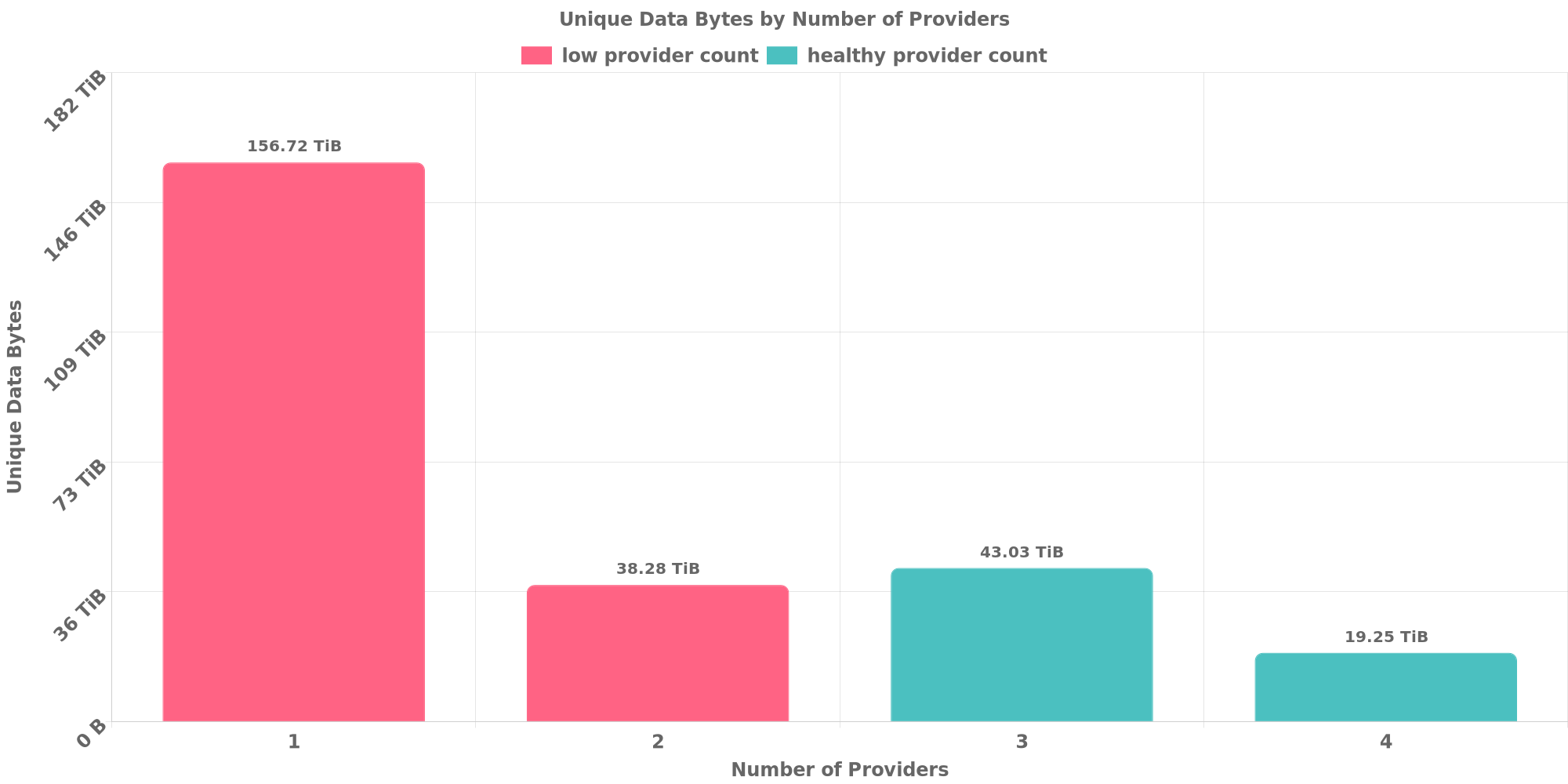

The table below shows how much unique data is replicated across storage providers.

Since this is the 3rd allocation, the following restrictions have been relaxed:

✔️ Data replication looks healthy.

| Unique Data Size | Total Deals Made | Number of Providers | Deal Percentage |
|---|---|---|---|
| 156.72 TiB | 156.72 TiB | 1 | 35.67% |
| 38.28 TiB | 76.56 TiB | 2 | 17.43% |
| 43.03 TiB | 129.09 TiB | 3 | 29.38% |
| 19.25 TiB | 77.00 TiB | 4 | 17.52% |

The table below shows how much unique data is shared with other clients. Different applications usually own different data, which should not resolve to the same CID.

However, this can happen if all of the clients below use the same software to prepare the exact same dataset, or if they belong to a series of LDN applications for the same dataset.

⚠️ CID sharing has been observed.

| Other Client | Application | Total Deals Affected | Unique CIDs | Approvers |
|---|---|---|---|---|
| f17nerghj2kmg7b4e6asft3xexga5qbzbqe3hi4gy | Unknown | 5.44 TiB | 48 | Unknown |

[^1]: To manually trigger this report, add a comment with text checker:manualTrigger

Hi Community, FYI: the ND open data project <Life Sciences Dataset 1-4> is one project, and its data is uniformly distributed by our maintenance colleagues. Some CID sharing between these 4 LDNs is therefore to be expected. f17nerghj2kmg7b4e6asft3xexga5qbzbqe3hi4gy is the address of Life Sciences Dataset 3.

f01858410

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

1000.0TiB

fb23b6f6-0776-4834-b2ea-a1659bbb4c57

f01858410

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

1ane-1 & kernelogic

200% of weekly dc amount requested

1000.0TiB

750TiB

4.26PiB

| Number of deals | Number of storage providers | Previous DC Allocated | Top provider | Remaining DC |
|---|---|---|---|---|
| 18729 | 10 | 500TiB | 14.03 | 117.90TiB |
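The unlabeled figures in the two allocation requests are consistent with simple tranche arithmetic. The sketch below is an interpretation, not the bot's actual code: it assumes that 5PiB is the total requested, 500TiB is the weekly rate, the "% of weekly dc amount" line sets the tranche size, and the last PiB figure is the total minus everything granted so far, truncated to two decimals. The function names are hypothetical.

```python
import math

TIB_PER_PIB = 1024


def tranche_tib(weekly_tib: float, percent_of_weekly: float) -> float:
    """Tranche size when a request is a percentage of the weekly rate."""
    return weekly_tib * percent_of_weekly / 100.0


def remaining_pib(total_pib: float, granted_tib: float) -> float:
    """Total request minus DataCap already granted, truncated to 2 decimals."""
    remaining_tib = total_pib * TIB_PER_PIB - granted_tib
    return math.floor(remaining_tib / TIB_PER_PIB * 100) / 100


if __name__ == "__main__":
    # Second request: 100% of 500TiB, with 250TiB granted before it.
    print(tranche_tib(500, 100), remaining_pib(5, 250))
    # Third request: 200% of 500TiB, with 250TiB + 500TiB granted before it.
    print(tranche_tib(500, 200), remaining_pib(5, 750))
```

Under these assumptions the results match the 4.75PiB and 4.26PiB values shown in the two requests.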

NDLABS f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

1 1ane-1, 1 cryptowhizzard, 1 kernelogic, 1 xingjitansuo

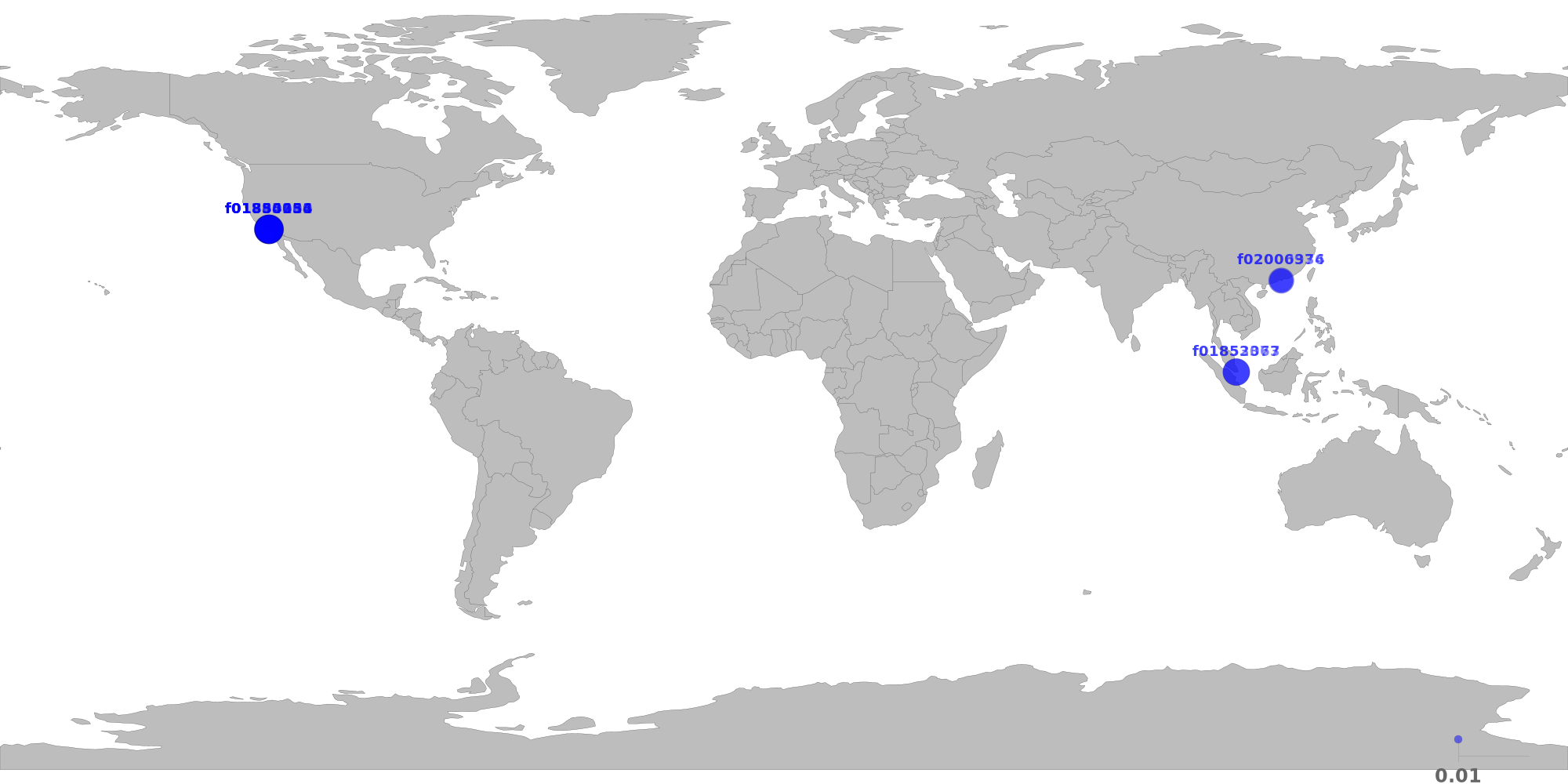

The below table shows the distribution of storage providers that have stored data for this client.

If this is the first time a provider takes verified deal, it will be marked as new.

For most of the datacap application, below restrictions should apply.

Since this is the 4th allocation, the following restrictions have been relaxed:

✔️ Storage provider distribution looks healthy.

| Provider | Location | Total Deals Sealed | Percentage | Unique Data | Duplicate Deals |
|---|---|---|---|---|---|
| f02000936 | Hong Kong, Central and Western, HK<br/>7Road International HK Limited | 34.78 TiB | 6.89% | 34.78 TiB | 0.00% |
| f02006374 | Hong Kong, Central and Western, HK<br/>7Road International HK Limited | 25.00 TiB | 4.95% | 25.00 TiB | 0.00% |
| f01854080 | Los Angeles, California, US<br/>Zenlayer Inc | 68.16 TiB | 13.49% | 68.16 TiB | 0.00% |
| f01740934 | Los Angeles, California, US<br/>Zenlayer Inc | 67.13 TiB | 13.29% | 67.13 TiB | 0.00% |
| f01834253 | Los Angeles, California, US<br/>Zenlayer Inc | 58.88 TiB | 11.65% | 58.88 TiB | 0.00% |
| f01853104 | Los Angeles, California, US<br/>Zenlayer Inc | 57.19 TiB | 11.32% | 57.19 TiB | 0.00% |
| f01985611 | Los Angeles, California, US<br/>Zenlayer Inc | 56.56 TiB | 11.20% | 56.56 TiB | 0.00% |
| f01890456 | Los Angeles, California, US<br/>Zenlayer Inc | 50.72 TiB | 10.04% | 50.72 TiB | 0.00% |
| f01853077 | Singapore, Singapore, SG<br/>Zenlayer Inc | 45.06 TiB | 8.92% | 45.06 TiB | 0.00% |
| f01852363 | Singapore, Singapore, SG<br/>Zenlayer Inc | 41.69 TiB | 8.25% | 41.69 TiB | 0.00% |

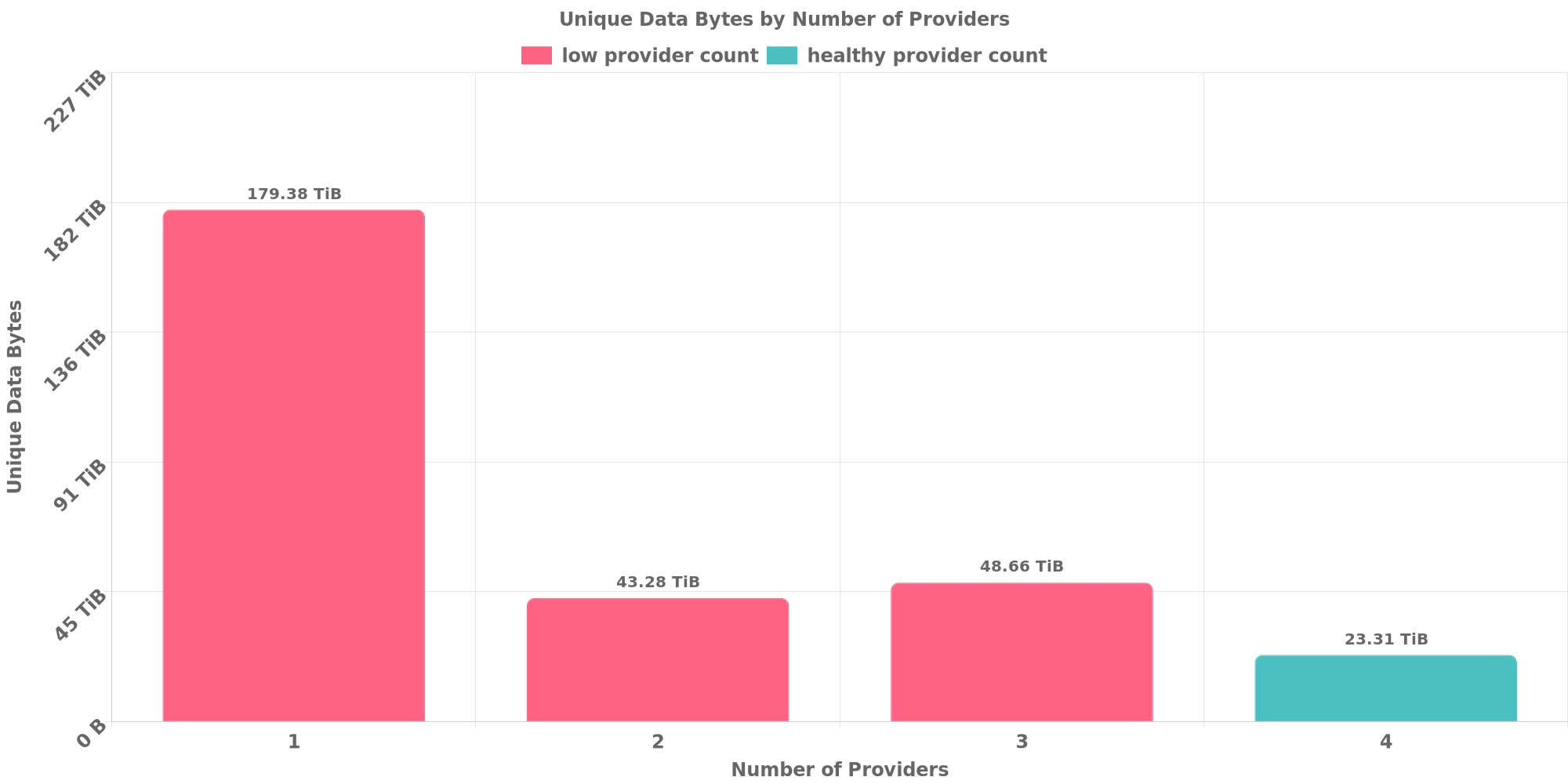

The table below shows how much unique data is replicated across storage providers.

Since this is the 4th allocation, the following restrictions have been relaxed:

⚠️ 81.54% of deals are for data replicated across less than 4 storage providers.

| Unique Data Size | Total Deals Made | Number of Providers | Deal Percentage |
|---|---|---|---|
| 179.38 TiB | 179.38 TiB | 1 | 35.51% |
| 43.28 TiB | 86.56 TiB | 2 | 17.14% |
| 48.66 TiB | 145.97 TiB | 3 | 28.90% |
| 23.31 TiB | 93.25 TiB | 4 | 18.46% |
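The 81.54% warning can be reproduced from the table. This is a minimal sketch, assuming the checker sums Total Deals Made over every row with fewer than 4 providers and divides by the total over all rows; `share_below` is a hypothetical helper, not the checker's own code.

```python
# (Total Deals Made in TiB, Number of Providers), copied from the table above.
REPLICATION_ROWS = [
    (179.38, 1),
    (86.56, 2),
    (145.97, 3),
    (93.25, 4),
]


def share_below(rows: list[tuple[float, int]], min_providers: int) -> float:
    """Percent of deal volume whose data sits with fewer than min_providers SPs."""
    total = sum(tib for tib, _ in rows)
    below = sum(tib for tib, providers in rows if providers < min_providers)
    return 100.0 * below / total


if __name__ == "__main__":
    print(f"{share_below(REPLICATION_ROWS, 4):.2f}% of deals are below 4 replicas")
```

Note that Total Deals Made is roughly Unique Data Size multiplied by Number of Providers (for example, 43.28 TiB × 2 = 86.56 TiB), with small differences from rounding in the displayed values.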

The table below shows how much unique data is shared with other clients. Different applications usually own different data, which should not resolve to the same CID.

However, this can happen if all of the clients below use the same software to prepare the exact same dataset, or if they belong to a series of LDN applications for the same dataset.

⚠️ CID sharing has been observed.

| Other Client | Application | Total Deals Affected | Unique CIDs | Approvers |
|---|---|---|---|---|
| f17nerghj2kmg7b4e6asft3xexga5qbzbqe3hi4gy | Unknown | 5.47 TiB | 49 | Unknown |

[^1]: To manually trigger this report, add a comment with text checker:manualTrigger

Data Owner Name

NDLABS

Data Owner Country/Region

Singapore

Data Owner Industry

Life Science / Healthcare

Website

https://www.ndlabs.io/#/

Social Media

Total amount of DataCap being requested

5PiB

Weekly allocation of DataCap requested

500TiB

On-chain address for first allocation

f1q6rat3ob7c56ea4hghcj7fkgmjvmqo2lammjxvy

Custom multisig

Identifier

No response

Share a brief history of your project and organization

Is this project associated with other projects/ecosystem stakeholders?

Yes

If answered yes, what are the other projects/ecosystem stakeholders

Describe the data being stored onto Filecoin

Where was the data currently stored in this dataset sourced from

My Own Storage Infra

If you answered "Other" in the previous question, enter the details here

No response

How do you plan to prepare the dataset

IPFS, lotus

If you answered "other/custom tool" in the previous question, enter the details here

No response

Please share a sample of the data

Confirm that this is a public dataset that can be retrieved by anyone on the Network

If you chose not to confirm, what was the reason

No response

What is the expected retrieval frequency for this data

years

For how long do you plan to keep this dataset stored on Filecoin

1 to 1.5 years

In which geographies do you plan on making storage deals

Greater China, Asia other than Greater China, North America

How will you be distributing your data to storage providers

Cloud storage (i.e. S3), HTTP or FTP server, IPFS, Shipping hard drives, Lotus built-in data transfer

How do you plan to choose storage providers

Slack, Filmine, Partners

If you answered "Others" in the previous question, what is the tool or platform you plan to use

No response

If you already have a list of storage providers to work with, fill out their names and provider IDs below

How do you plan to make deals to your storage providers

Lotus client

If you answered "Others/custom tool" in the previous question, enter the details here

No response

Can you confirm that you will follow the Fil+ guideline

Yes