neural-style

This is a Torch implementation of the paper "A Neural Algorithm of Artistic Style" by Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge.

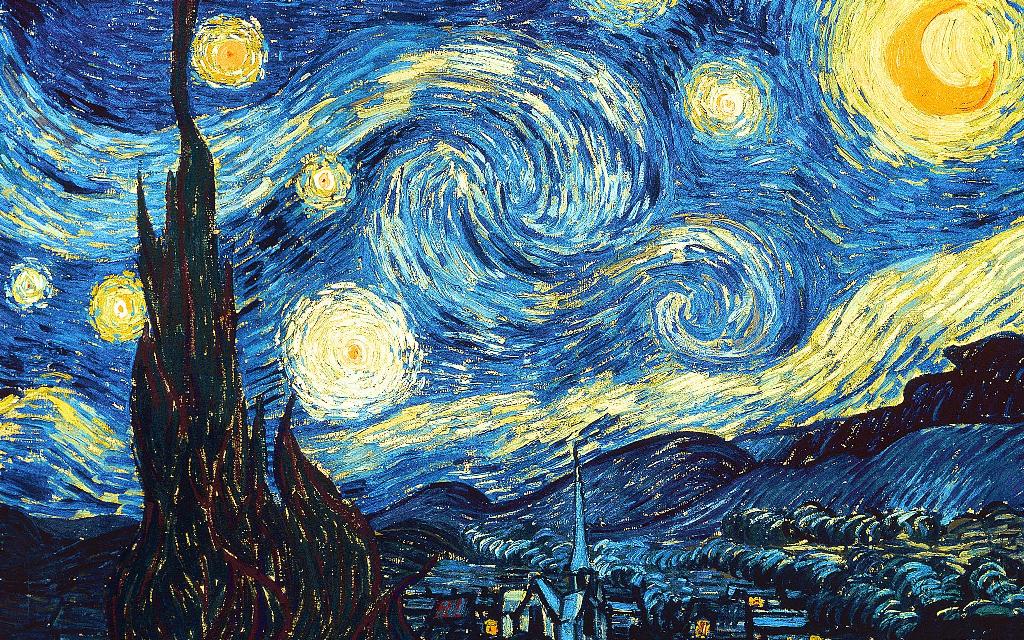

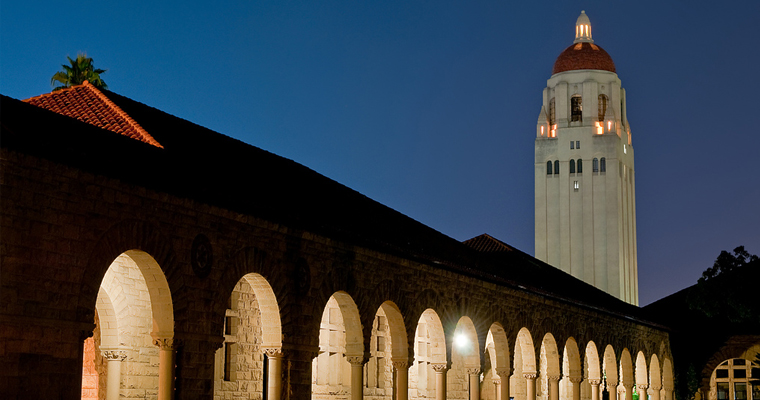

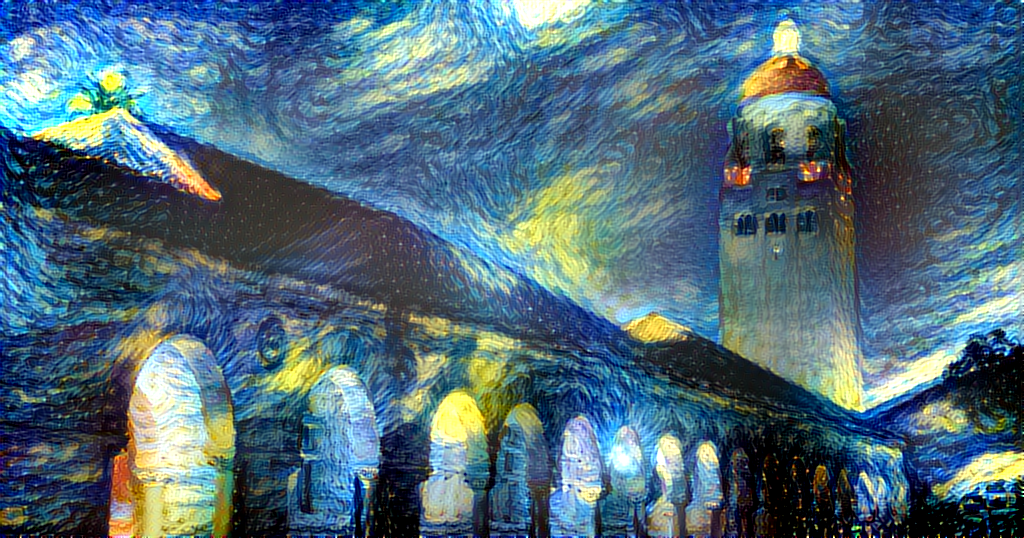

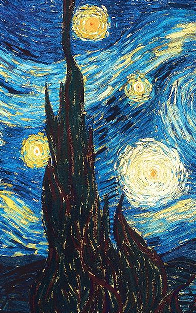

The paper presents an algorithm for combining the content of one image with the style of another image using convolutional neural networks. Here's an example that maps the artistic style of The Starry Night onto a night-time photograph of the Stanford campus:

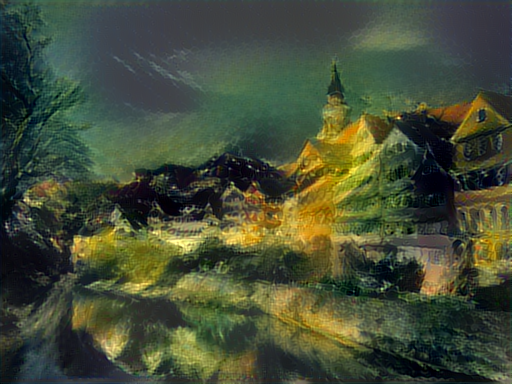

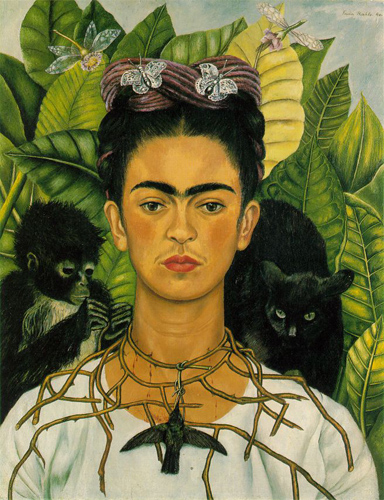

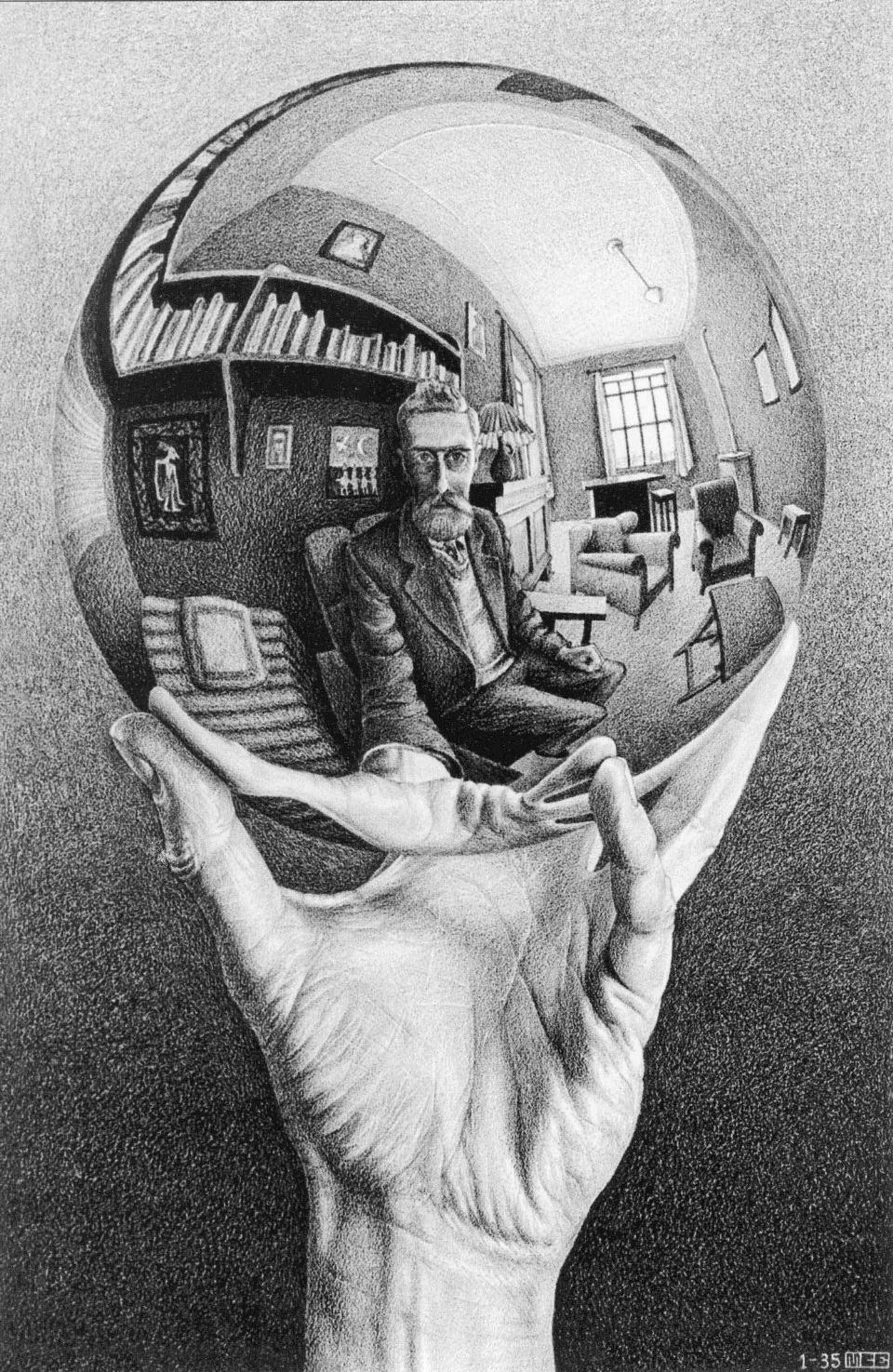

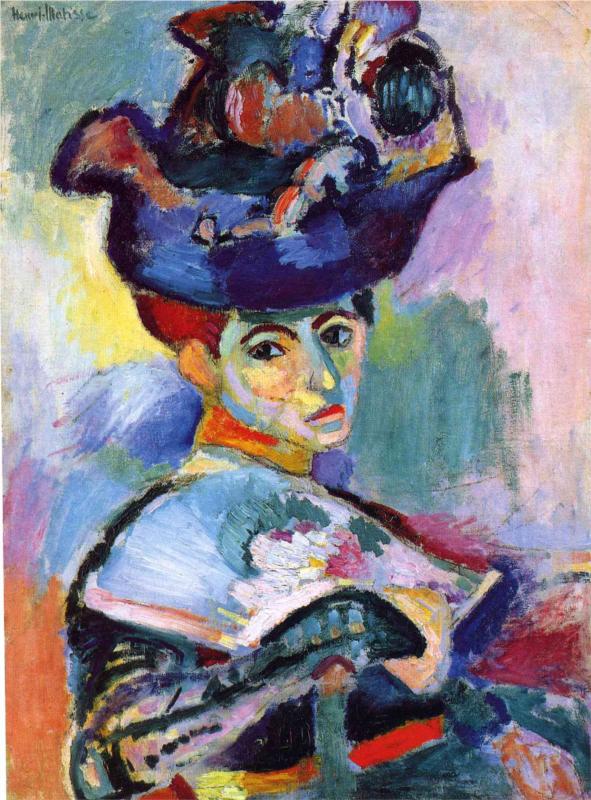

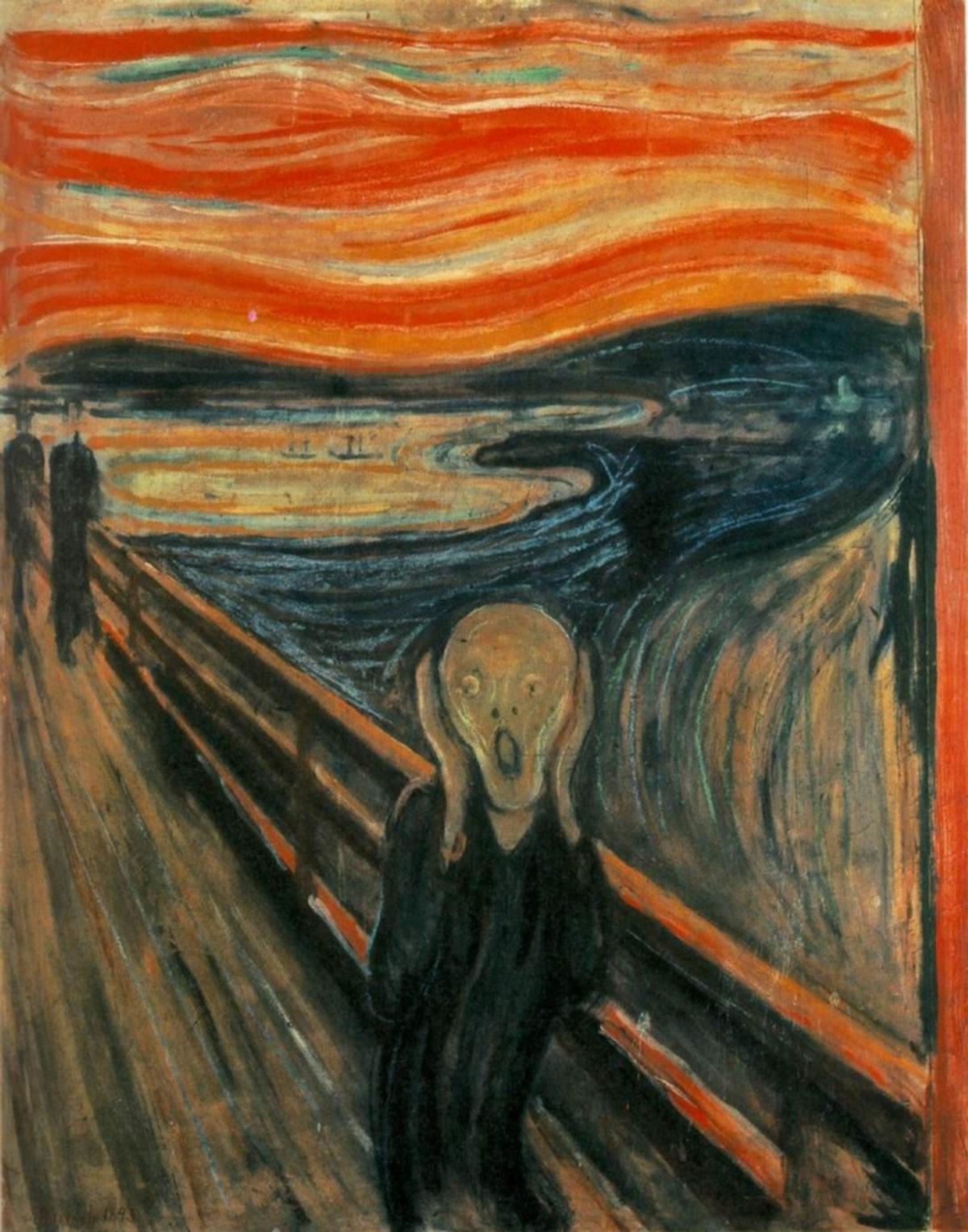

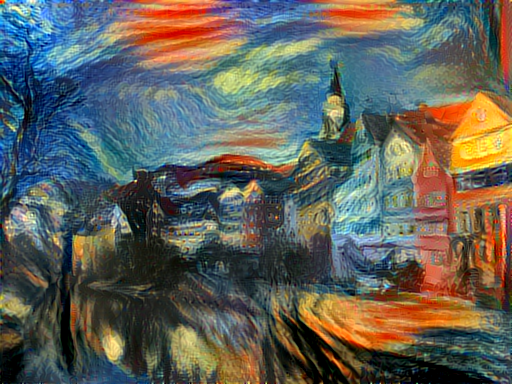

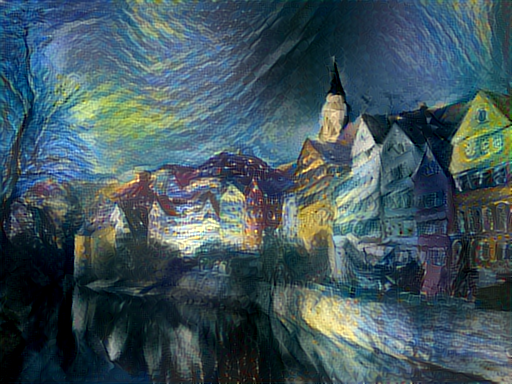

Applying the style of different images to the same content image gives interesting results. Here we reproduce Figure 2 from the paper, which renders a photograph of Tübingen, Germany, in a variety of styles:

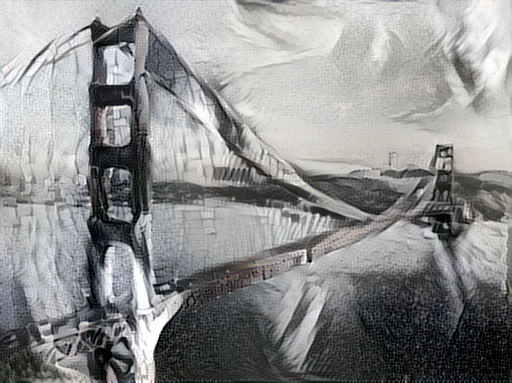

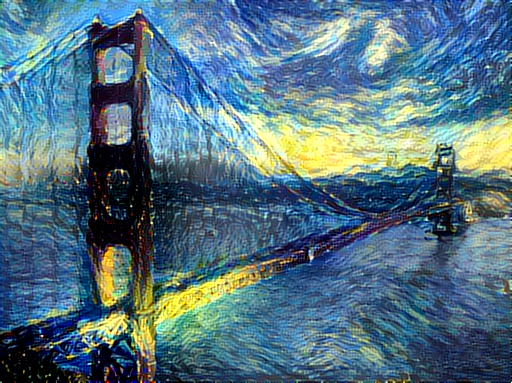

Here are the results of applying the style of various pieces of artwork to this photograph of the Golden Gate Bridge:

Content / Style Tradeoff

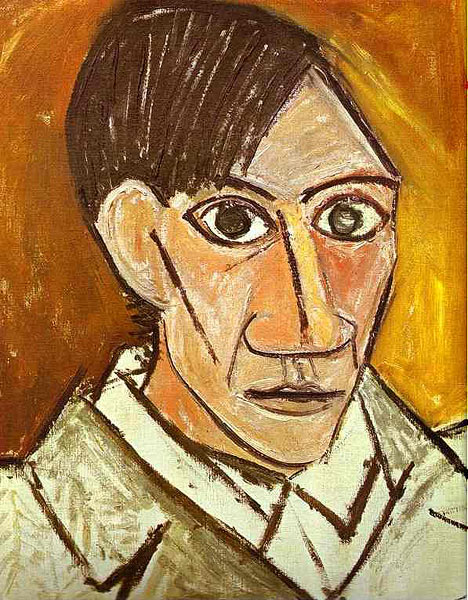

The algorithm allows the user to trade off the relative weight of the style and content reconstruction terms, as shown in this example where we port the style of Picasso's 1907 self-portrait onto Brad Pitt:

Style Scale

By resizing the style image before extracting style features, we can control the types of artistic features that are transferred from the style image; you can control this behavior with the -style_scale flag. Below we see three examples of rendering the Golden Gate Bridge in the style of The Starry Night. From left to right, -style_scale is 2.0, 1.0, and 0.5.

<img src="https://raw.githubusercontent.com/jcjohnson/neural-style/master/examples/outputs/golden_gate_starry_scale2.png" height="175px"> <img src="https://raw.githubusercontent.com/jcjohnson/neural-style/master/examples/outputs/golden_gate_starry_scale1.png" height="175px"> <img src="https://raw.githubusercontent.com/jcjohnson/neural-style/master/examples/outputs/golden_gate_starry_scale05.png" height="175px">
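To make the effect of -style_scale concrete, here is a minimal Python sketch (not the Lua code in this repository). It assumes the style image is resized so that its longest side is -style_scale times -image_size, rounded up; that rule, the function names, and the 1000x500 style image are illustrative assumptions.

```python
import math


def style_target_side(image_size, style_scale):
    """Assumed rule: longest side of the resized style image, in pixels."""
    return math.ceil(style_scale * image_size)


def fit_max_side(width, height, max_side):
    """Resize (width, height) so the longer side equals max_side, keeping the aspect ratio."""
    scale = max_side / max(width, height)
    return max(1, round(width * scale)), max(1, round(height * scale))


# Hypothetical 1000x500 style image, the default -image_size of 512,
# and the three -style_scale values used in the example above.
for style_scale in (2.0, 1.0, 0.5):
    side = style_target_side(512, style_scale)
    print(style_scale, side, fit_max_side(1000, 500, side))
```

Under this assumption, a larger scale means the network sees the style image at a higher resolution, so a fixed-size receptive field covers finer brushwork; a smaller scale shifts the transferred features toward coarser structure.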

Multiple Style Images

You can use more than one style image to blend multiple artistic styles.

Clockwise from upper left: "The Starry Night" + "The Scream", "The Scream" + "Composition VII", "Seated Nude" + "Composition VII", and "Seated Nude" + "The Starry Night"

Style Interpolation

When using multiple style images, you can control the degree to which they are blended:

Setup:

Dependencies:

Optional dependencies:

- CUDA 6.5+

- cudnn.torch

After installing dependencies, you'll need to run the following script to download the VGG model:

sh models/download_models.sh

This will download the original VGG-19 model. Leon Gatys has graciously provided the modified version of the VGG-19 model that was used in their paper; this will also be downloaded. By default the original VGG-19 model is used.

You can find detailed installation instructions for Ubuntu in the installation guide.

Usage

Basic usage:

th neural_style.lua -style_image <image.jpg> -content_image <image.jpg>

To use multiple style images, pass a comma-separated list like this: -style_image starry_night.jpg,the_scream.jpg

Options:

- -image_size: Maximum side length (in pixels) of the generated image. Default is 512.
- -style_blend_weights: The weights for blending the styles of multiple style images, as a comma-separated list, such as -style_blend_weights 3,7. By default all style images are equally weighted.
- -gpu: Zero-indexed ID of the GPU to use; for CPU mode set -gpu to -1.
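As an illustration of how a -style_blend_weights list could be interpreted, here is a small Python sketch (not the Lua code in this repository). It assumes the weights are normalized to sum to 1, so that 3,7 means 30% of the first style and 70% of the second; the normalization and the function name parse_blend_weights are assumptions made for this example.

```python
def parse_blend_weights(arg, num_styles):
    """Parse a comma-separated weight list and normalize it to sum to 1.

    With arg=None every style image gets the same weight, matching the
    documented default of equal weighting.
    """
    if arg is None:
        weights = [1.0] * num_styles
    else:
        weights = [float(w) for w in arg.split(",")]
    if len(weights) != num_styles:
        raise ValueError("need exactly one weight per style image")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return [w / total for w in weights]


styles = "starry_night.jpg,the_scream.jpg".split(",")
print(parse_blend_weights("3,7", len(styles)))
print(parse_blend_weights(None, len(styles)))
```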

Optimization options:

- -content_weight: How much to weight the content reconstruction term. Default is 5e0.
- -style_weight: How much to weight the style reconstruction term. Default is 1e2.
- -tv_weight: Weight of total-variation (TV) regularization; this helps to smooth the image. Default is 1e-3. Set to 0 to disable TV regularization.
- -num_iterations: Number of iterations to run. Default is 1000.
- -init: Method for initializing the generated image; one of random or image. Default is random, which uses a noise initialization as in the paper; image initializes with the content image.
- -optimizer: The optimization algorithm to use; either lbfgs or adam; default is lbfgs. L-BFGS tends to give better results, but uses more memory. Switching to ADAM will reduce memory usage; when using ADAM you will probably need to play with other parameters to get good results, especially the style weight, content weight, and learning rate; you may also want to normalize gradients when using ADAM.
- -learning_rate: Learning rate to use with the ADAM optimizer. Default is 1e1.
- -normalize_gradients: If this flag is present, style and content gradients from each layer will be L1 normalized. Idea from andersbll/neural_artistic_style.
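The three weights above combine into a single objective. The Python sketch below (not the Lua code in this repository) shows one way to read them, using the documented defaults. The TV term is written as a sum of squared differences between neighboring pixels, which is one common form and an assumption here; the loss values passed in are made-up numbers.

```python
def tv_loss(img):
    """Sum of squared differences between horizontally and vertically adjacent pixels.

    img is a single-channel image given as a list of rows of floats.
    """
    h, w = len(img), len(img[0])
    total = 0.0
    for y in range(h):
        for x in range(w):
            if x + 1 < w:
                total += (img[y][x + 1] - img[y][x]) ** 2
            if y + 1 < h:
                total += (img[y + 1][x] - img[y][x]) ** 2
    return total


def total_loss(content_loss, style_loss, img,
               content_weight=5e0, style_weight=1e2, tv_weight=1e-3):
    """Weighted sum of the content, style, and TV terms (defaults from the option list)."""
    return (content_weight * content_loss
            + style_weight * style_loss
            + tv_weight * tv_loss(img))


flat = [[0.5, 0.5], [0.5, 0.5]]    # perfectly smooth image: no TV penalty
noisy = [[0.0, 1.0], [1.0, 0.0]]   # checkerboard: every neighbor pair differs
print(tv_loss(flat), tv_loss(noisy))
print(total_loss(0.2, 0.03, noisy))               # hypothetical loss values
print(total_loss(0.2, 0.03, noisy, tv_weight=0))  # TV regularization disabled
```

Raising -style_weight relative to -content_weight pushes the result toward the style image; raising -tv_weight penalizes pixel-to-pixel noise more heavily.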

Output options:

- -output_image: Name of the output image. Default is out.png.
- -print_iter: Print progress every print_iter iterations. Set to 0 to disable printing.
- -save_iter: Save the image every save_iter iterations. Set to 0 to disable saving intermediate results.

Layer options:

- -content_layers: Comma-separated list of layer names to use for content reconstruction. Default is relu4_2.
- -style_layers: Comma-separated list of layer names to use for style reconstruction. Default is relu1_1,relu2_1,relu3_1,relu4_1,relu5_1.

Other options:

- -style_scale: Scale at which to extract features from the style image. Default is 1.0.
- -proto_file: Path to the deploy.txt file for the VGG Caffe model.
- -model_file: Path to the .caffemodel file for the VGG Caffe model. Default is the original VGG-19 model; you can also try the normalized VGG-19 model used in the paper.
- -pooling: The type of pooling layers to use; one of max or avg. Default is max. The VGG-19 model uses max pooling layers, but the paper mentions that replacing these layers with average pooling layers can improve the results. I haven't been able to get good results using average pooling, but the option is here.
- -backend: nn or cudnn. Default is nn. cudnn requires cudnn.torch and may reduce memory usage.
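For readers unfamiliar with the difference behind the -pooling option, this toy Python sketch (not the Lua code in this repository) applies non-overlapping 2x2 pooling to a small single-channel image in both modes. The function name pool2x2 and the input values are made up for illustration.

```python
def pool2x2(img, mode="max"):
    """Non-overlapping 2x2 pooling over a single-channel image with even dimensions."""
    if mode not in ("max", "avg"):
        raise ValueError("mode must be 'max' or 'avg'")
    out = []
    for y in range(0, len(img) - 1, 2):
        row = []
        for x in range(0, len(img[0]) - 1, 2):
            window = [img[y][x], img[y][x + 1], img[y + 1][x], img[y + 1][x + 1]]
            row.append(max(window) if mode == "max" else sum(window) / 4.0)
        out.append(row)
    return out


activations = [[1.0, 2.0, 0.0, 0.0],
               [3.0, 6.0, 0.0, 8.0]]
print(pool2x2(activations, "max"))  # keeps only the strongest response per window
print(pool2x2(activations, "avg"))  # every input in the window contributes
```

With average pooling each input in a window influences the output, and therefore receives gradient, whereas max pooling passes gradient only to the largest value.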

Frequently Asked Questions

Problem: Generated image has saturation artifacts:

Solution: Update the image package to the latest version: luarocks install image

Problem: Running without a GPU gives an error message complaining about cutorch not found

Solution: Pass the flag -gpu -1 when running in CPU-only mode.

Problem: The program runs out of memory and dies

Solution: Try reducing the image size: -image_size 256 (or lower). Note that different image sizes will likely require non-default values for -style_weight and -content_weight for optimal results.

If you are running on a GPU, you can also try running with -backend cudnn to reduce memory usage.

Problem: Get the following error message:

models/VGG_ILSVRC_19_layers_deploy.prototxt.cpu.lua:7: attempt to call method 'ceil' (a nil value)

Solution: Update the nn package to the latest version: luarocks install nn

Problem: Get an error message complaining about paths.extname

Solution: Update the torch.paths package to the latest version: luarocks install paths

Memory Usage

By default, neural-style uses the nn backend for convolutions and L-BFGS for optimization. These give good results, but can both use a lot of memory. You can reduce memory usage with the following:

- Use cuDNN: Add the flag -backend cudnn to use the cuDNN backend. This will only work in GPU mode.
- Use ADAM: Add the flag -optimizer adam to use ADAM instead of L-BFGS. This should significantly reduce memory usage, but may require tuning of other parameters for good results; in particular you should play with the learning rate, content weight, style weight, and also consider using gradient normalization. This should work in both CPU and GPU modes.
- Reduce image size: If the above tricks are not enough, you can reduce the size of the generated image; pass the flag -image_size 256 to generate an image at half the default size.

With the default settings, neural-style uses about 3.5GB of GPU memory on my system; switching to ADAM and cuDNN reduces the GPU memory footprint to about 1GB.
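The gradient normalization suggested for ADAM can be pictured with the following Python sketch (not the Lua code in this repository). It assumes that L1 normalization means dividing a gradient by the sum of its absolute values, with a small epsilon to avoid division by zero; the function name, the epsilon, and the gradient values are illustrative assumptions.

```python
def l1_normalize(grad, eps=1e-8):
    """Rescale a gradient so the sum of its absolute values is (almost exactly) 1."""
    norm = sum(abs(g) for g in grad)
    return [g / (norm + eps) for g in grad]


# Hypothetical gradients from a content layer and a style layer that
# differ in magnitude by several orders.
content_grad = [0.002, -0.001, 0.001]
style_grad = [300.0, -500.0, 200.0]

print(l1_normalize(content_grad))
print(l1_normalize(style_grad))
```

After normalization both gradients have the same overall magnitude, so their relative influence is set by the content and style weights rather than by the raw scale of each layer's loss. That may make a single learning rate easier to tune.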

Speed

On a GTX Titan X, running 1000 iterations of gradient descent with -image_size 512 takes about 2 minutes. In CPU mode on an Intel Core i7-4790k, running the same takes around 40 minutes. Most of the examples shown here were run for 2000 iterations, but with a bit of parameter tuning most images will give good results within 1000 iterations.

Implementation details

Images are initialized with white noise and optimized using L-BFGS. We perform style reconstructions using the conv1_1, conv2_1, conv3_1, conv4_1, and conv5_1 layers and content reconstructions using the conv4_2 layer. As in the paper, the five style reconstruction losses have equal weights.
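In the paper, the style of a layer is summarized by the Gram matrix of its feature maps, that is, the inner products between channels. The Python sketch below (not the Lua code in this repository) shows that idea on toy data with equal per-layer weights. The mean-squared-error normalization, the function names, and the activation values are assumptions for illustration, and only two of the five layers are shown.

```python
def gram(features):
    """Gram matrix of a layer.

    features is a list of C channels, each a flat list of N activations
    (one per spatial position). Returns a C x C matrix of channel inner products.
    """
    return [[sum(a * b for a, b in zip(fi, fj)) for fj in features]
            for fi in features]


def style_layer_loss(generated, style):
    """Mean squared difference between the two Gram matrices (assumed normalization)."""
    g, s = gram(generated), gram(style)
    c = len(g)
    return sum((g[i][j] - s[i][j]) ** 2 for i in range(c) for j in range(c)) / (c * c)


def style_loss(generated_layers, style_layers):
    """Average the per-layer losses, giving every style layer the same weight."""
    names = list(style_layers)
    return sum(style_layer_loss(generated_layers[n], style_layers[n])
               for n in names) / len(names)


# Toy activations: 2 channels x 2 spatial positions per layer.
style_feats = {"conv1_1": [[1.0, 2.0], [3.0, 4.0]],
               "conv2_1": [[0.0, 1.0], [1.0, 0.0]]}
# Same values with the spatial positions swapped in every channel.
swapped = {"conv1_1": [[2.0, 1.0], [4.0, 3.0]],
           "conv2_1": [[1.0, 0.0], [0.0, 1.0]]}

print(gram(style_feats["conv1_1"]))
print(style_loss(swapped, style_feats))
```

Swapping spatial positions leaves every Gram matrix unchanged, so the style loss in this example is zero: the Gram matrix records which features occur together, not where they occur.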