Semantic Link Labs

Read the documentation on ReadTheDocs!

Semantic Link Labs is a Python library designed for use in Microsoft Fabric notebooks. This library extends the capabilities of Semantic Link, offering additional functionality that integrates with and works alongside it. The goal of Semantic Link Labs is to simplify technical processes, so that people can focus on higher-level activities while tasks better suited to machines are handled efficiently without human intervention.

If you encounter any issues, please raise a bug.

If you have ideas for new features/functions, please request a feature.

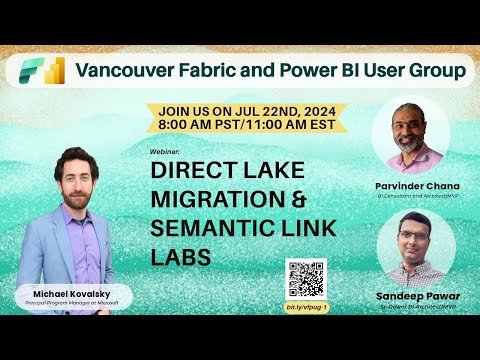

Check out the video below for an introduction to Semantic Link, Semantic Link Labs and demos of key features!

Featured Scenarios

- Semantic Models

- Migrating an import/DirectQuery semantic model to Direct Lake

- Model Best Practice Analyzer (BPA)

- Vertipaq Analyzer

- Tabular Object Model (TOM)

- Translate a semantic model's metadata

- Check Direct Lake Guardrails

- Refresh, clear cache, backup, restore, copy backup files, move/deploy across workspaces

- Run DAX queries which impersonate a user

- Manage Query Scale Out

- Auto-generate descriptions for any/all measures in bulk

- Warm the cache of a Direct Lake semantic model after a refresh (using columns currently in memory)

- Warm the cache of a Direct Lake semantic model (via perspective)

- Visualize a refresh

- Reports

- Capacities

- Lakehouses

- APIs

- Wrapper functions for Power BI, Fabric, and Azure (Fabric Capacity) APIs
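As a rough sketch of how a few of the semantic model scenarios above look in a notebook, the helper below runs the Best Practice Analyzer, the Vertipaq Analyzer, and a refresh in sequence. The function names `run_model_bpa`, `vertipaq_analyzer`, and `refresh_semantic_model`, along with their `dataset` and `workspace` parameters, are given as recalled from the library's API around the 0.8.x releases and should be checked against ReadTheDocs for your version. `review_model` and the model name are hypothetical.

```python
def review_model(dataset, workspace=None):
    """Analyze a semantic model, then refresh it (illustrative helper)."""
    # Imported lazily: sempy_labs is only available inside a Fabric notebook.
    import sempy_labs as labs

    # Model Best Practice Analyzer (BPA).
    labs.run_model_bpa(dataset=dataset, workspace=workspace)
    # Vertipaq Analyzer.
    labs.vertipaq_analyzer(dataset=dataset, workspace=workspace)
    # Refresh the semantic model.
    labs.refresh_semantic_model(dataset=dataset, workspace=workspace)


# In a Fabric notebook (hypothetical model name):
# review_model("Sales Model")
```

Passing `workspace=None` mirrors the `workspace_name = None` convention used elsewhere in this README.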

Helper Notebooks

Check out the helper notebooks for getting started! Run the code below to load all the helper notebooks to the workspace of your choice at once.

import sempy_labs as labs
import requests

workspace_name = None # Update this to the workspace in which you want to save the notebooks
api_url = "https://api.github.com/repos/microsoft/semantic-link-labs/contents/notebooks"
response = requests.get(api_url)
files = response.json()
notebook_files = {file['name'][:-6]: file['html_url'] for file in files if file['name'].endswith('.ipynb')}

for file_name, file_url in notebook_files.items():
    labs.import_notebook_from_web(notebook_name=file_name, url=file_url, workspace=workspace_name)

Install the library in a Fabric notebook

%pip install semantic-link-labs

Once installed, run this code to import the library into your notebook

import sempy_labs as labs
from sempy_labs import migration, directlake, admin
from sempy_labs import lakehouse as lake
from sempy_labs import report as rep
from sempy_labs.tom import connect_semantic_model
from sempy_labs.report import ReportWrapper
from sempy_labs import ConnectWarehouse
from sempy_labs import ConnectLakehouse

Load Semantic Link Labs into a custom Fabric environment

An even better way to ensure the semantic-link-labs library is available in your workspace/notebooks is to load it as a library in a custom Fabric environment. If you do this, you will not have to run the '%pip install' command above in every notebook session. Please follow the steps below.

Create a custom environment

- Navigate to your Fabric workspace

- Click 'New' -> 'More options'

- Within 'Data Science', click 'Environment'

- Name your environment, click 'Create'

Add semantic-link-labs as a library to the environment

- Within 'Public libraries', click 'Add from PyPI'

- Enter 'semantic-link-labs'.

- Click 'Save' at the top right of the screen

- Click 'Publish' at the top right of the screen

- Click 'Publish All'

Update your notebook to use the new environment (must wait for the environment to finish publishing)

- Navigate to your Notebook

- Select your newly created environment within the 'Environment' drop down in the navigation bar at the top of the notebook
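Once the notebook is attached to the environment, a quick check can confirm that the library is available without running '%pip install'. The sketch below uses only the Python standard library. `installed_version` is a hypothetical helper name, and the distribution name is assumed to match the PyPI name entered above.

```python
from importlib import metadata


def installed_version(distribution="semantic-link-labs"):
    """Return the installed version string, or None if it is not installed."""
    try:
        return metadata.version(distribution)
    except metadata.PackageNotFoundError:
        return None


version = installed_version()
if version is None:
    print("semantic-link-labs is not installed in this environment")
else:
    print(f"semantic-link-labs {version} is available")
```

If the check reports that the library is missing, the environment may still be publishing, or the notebook may not yet be using it.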

Version History

- 0.8.6 (November 14, 2024)

- 0.8.5 (November 13, 2024)

- 0.8.4 (October 30, 2024)

- 0.8.3 (October 14, 2024)

- 0.8.2 (October 2, 2024)

- 0.8.1 (October 2, 2024)

- 0.8.0 (September 25, 2024)

- 0.7.4 (September 16, 2024)

- 0.7.3 (September 11, 2024)

- 0.7.2 (August 30, 2024)

- 0.7.1 (August 29, 2024)

- 0.7.0 (August 26, 2024)

- 0.6.0 (July 22, 2024)

- 0.5.0 (July 2, 2024)

- 0.4.2 (June 18, 2024)

Direct Lake migration

The following process automates the migration of an import/DirectQuery model to a new Direct Lake model. The first step is specifically applicable to models which use Power Query to perform data transformations. If your model does not use Power Query, you must migrate the base tables used in your semantic model to a Fabric lakehouse.

Check out Nikola Ilic's terrific blog post on this topic!

Check out my blog post on this topic!

Prerequisites

- Make sure you enable XMLA Read/Write for your capacity

- Make sure you have a lakehouse in a Fabric workspace

- Enable the following setting: Workspace -> Workspace Settings -> General -> Data model settings -> Users can edit data models in the Power BI service

Instructions

- Download this notebook.

- Make sure you are in the 'Data Engineering' persona. Click the icon at the bottom left corner of your Workspace screen and select 'Data Engineering'

- In your workspace, select 'New -> Import notebook' and import the notebook you downloaded in the first step.

- Add your lakehouse to your Fabric notebook

- Follow the instructions within the notebook.

The migration process

[!NOTE] The first 4 steps are only necessary if you have logic in Power Query. Otherwise, you will need to migrate your semantic model source tables to lakehouse tables.

- The first step of the notebook creates a Power Query Template (.pqt) file which eases the migration of Power Query logic to Dataflows Gen2.

- After the .pqt file is created, sync files from your OneLake file explorer

- Navigate to your lakehouse (this is critical!). From your lakehouse, create a new Dataflow Gen2 and import the Power Query Template file. Doing this step from your lakehouse automatically sets the destination for all tables to this lakehouse (instead of having to map each one manually).

- Publish the Dataflow Gen2 and wait for it to finish creating the delta lake tables in your lakehouse.

- Back in the notebook, the next step will create your new Direct Lake semantic model with the name of your choice, taking all the relevant properties from the original semantic model and refreshing/framing your new semantic model.

[!NOTE] Calculated tables are also migrated to Direct Lake (as data tables with their DAX expression stored as model annotations in the new semantic model). Additionally, Field Parameters are migrated as they were in the original semantic model (as a calculated table). Auto date/time tables are not migrated. Auto date/time must be disabled in Power BI Desktop and proper date table(s) must be created prior to migration.

- Finally, you can easily rebind all reports which use the import/DQ semantic model to the new Direct Lake semantic model in one click.
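The notebook steps above can be sketched roughly as follows. This is not the notebook itself. The function names and keyword arguments are recalled from the `sempy_labs.migration` and `sempy_labs.report` modules around the 0.8.x releases and may differ in your version, so treat them as assumptions and defer to the downloaded notebook. `create_template`, `build_direct_lake_model`, and the model names are hypothetical.

```python
def create_template(dataset, workspace=None):
    """First step: create the Power Query Template (.pqt) file."""
    # Imported lazily: sempy_labs is only available inside a Fabric notebook.
    from sempy_labs import migration

    migration.create_pqt_file(dataset=dataset, workspace=workspace)


def build_direct_lake_model(dataset, new_dataset, workspace=None):
    """Later steps: run after the Dataflow Gen2 has created the delta tables."""
    import sempy_labs as labs
    from sempy_labs import migration
    from sempy_labs import report as rep

    names = dict(dataset=dataset, new_dataset=new_dataset, workspace=workspace)

    # Create the empty target model.
    labs.create_blank_semantic_model(dataset=new_dataset, workspace=workspace)
    # Calculated tables become delta tables in the lakehouse.
    migration.migrate_calc_tables_to_lakehouse(**names)
    # Copy tables, columns, and the remaining model objects.
    migration.migrate_tables_columns_to_semantic_model(**names)
    migration.migrate_calc_tables_to_semantic_model(**names)
    migration.migrate_model_objects_to_semantic_model(**names)
    migration.migrate_field_parameters(**names)
    # Refresh (frame) the new Direct Lake model.
    labs.refresh_semantic_model(dataset=new_dataset, workspace=workspace)
    # Rebind reports from the original model to the new one.
    rep.report_rebind_all(dataset=dataset, new_dataset=new_dataset)


# In a Fabric notebook (hypothetical model names):
# create_template("Sales Model")
# ...publish the Dataflow Gen2 and wait for the delta tables...
# build_direct_lake_model("Sales Model", "Sales Model DL")
```

Splitting the work into two functions reflects the manual Dataflow Gen2 step that sits between creating the template and building the new model.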

Completing these steps will do the following:

- Offload your Power Query logic to Dataflows Gen2 inside of Fabric (where it can be maintained and development can continue).

- Dataflows Gen2 will create delta tables in your Fabric lakehouse. These tables can then be used for your Direct Lake model.

- Create a new semantic model in Direct Lake mode containing all the standard tables and columns, calculation groups, measures, relationships, hierarchies, roles, row level security, perspectives, and translations from your original semantic model.

- Viable calculated tables are migrated to the new semantic model as data tables. Delta tables are dynamically generated in the lakehouse to support the Direct Lake model. The calculated table DAX logic is stored as model annotations in the new semantic model.

- Field parameters are migrated to the new semantic model as they were in the original semantic model (as calculated tables). Any calculated columns used in field parameters are automatically removed in the new semantic model's field parameter(s).

- Unsupported objects are not transferred (e.g., calculated columns and relationships using columns with unsupported data types).

- Reports that use your original semantic model will be rebound to your new semantic model.

Limitations

- Calculated columns are not migrated.

- Auto date/time tables are not migrated.

- References to calculated columns in Field Parameters are removed.

- References to calculated columns in measure expressions or other DAX expressions will break.

- Calculated tables are migrated where possible. The success of this migration depends on the interdependencies and complexity of the calculated table. This part of the migration is a workaround, because calculated tables are technically not supported in Direct Lake.

- See here for the rest of the limitations of Direct Lake.
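Because references to calculated columns break after migration, it can help to list the affected measures beforehand. The sketch below is a plain-Python illustration and not part of the library: `measures_referencing`, the sample measures, and the column names are hypothetical. It is a simple heuristic that matches only fully qualified `Table[Column]` or `'Table'[Column]` references and may over-match when one table name ends with another.

```python
import re


def measures_referencing(measures, calculated_columns):
    """Map each measure name to the calculated columns its DAX expression uses.

    measures: dict of measure name -> DAX expression
    calculated_columns: iterable of (table, column) pairs
    """
    hits = {}
    for name, expression in measures.items():
        found = []
        for table, column in calculated_columns:
            # Matches Table[Column] or 'Table'[Column], ignoring case.
            pattern = rf"(?<!\w)'?{re.escape(table)}'?\s*\[{re.escape(column)}\]"
            if re.search(pattern, expression, flags=re.IGNORECASE):
                found.append((table, column))
        if found:
            hits[name] = found
    return hits


# Hypothetical sample data: Sales[Margin] is a calculated column.
measures = {
    "Total Sales": "SUM(Sales[Amount])",
    "Margin %": "DIVIDE(SUM(Sales[Margin]), SUM(Sales[Amount]))",
}
calculated_columns = [("Sales", "Margin")]

print(measures_referencing(measures, calculated_columns))
# {'Margin %': [('Sales', 'Margin')]}
```

Measures flagged this way would need their logic moved upstream (for example, into the lakehouse tables) before the Direct Lake model can evaluate them.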

Contributing

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit https://cla.opensource.microsoft.com.

When you submit a pull request, a CLA bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., status check, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repos using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

Trademarks

This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow Microsoft's Trademark & Brand Guidelines. Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos is subject to those third parties' policies.