DeepMind : Teaching Machines to Read and Comprehend

This repository contains an implementation of the two models (the Deep LSTM and the Attentive Reader) described in Teaching Machines to Read and Comprehend by Karl Moritz Hermann and al., NIPS, 2015. This repository also contains an implementation of a Deep Bidirectional LSTM.

The three models implemented in this repository are:

deepmind_deep_lstmreproduces the experimental settings of the DeepMind paper for the LSTM readerdeepmind_attentive_readerreproduces the experimental settings of the DeepMind paper for the Attentive readerdeep_bidir_lstm_2x128implements a two-layer bidirectional LSTM reader

Our results

We trained the three models during 2 to 4 days on a Titan Black GPU. The following results were obtained:

| DeepMind | Us | |||

| CNN | CNN | |||

| Valid | Test | Valid | Test | |

| Attentive Reader | 61.6 | 63.0 | 59.37 | 61.07 |

| Deep Bidir LSTM | - | - | 59.76 | 61.62 |

| Deep LSTM Reader | 55.0 | 57.0 | 46 | 47 |

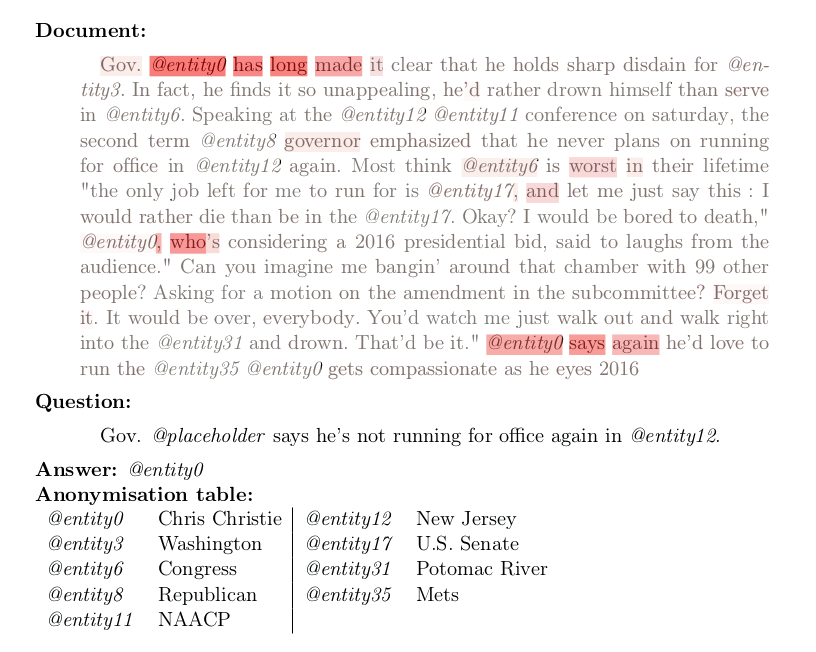

Here is an example of attention weights used by the attentive reader model on an example:

Requirements

Software dependencies:

Optional dependencies:

- Blocks Extras and a Bokeh server for the plot

We recommend using Anaconda 2 and installing them with the following commands (where pip refers to the pip command from Anaconda):

pip install git+git://github.com/Theano/Theano.git

pip install git+git://github.com/mila-udem/fuel.git

pip install git+git://github.com/mila-udem/blocks.git -r https://raw.githubusercontent.com/mila-udem/blocks/master/requirements.txtAnaconda also includes a Bokeh server, but you still need to install blocks-extras if you want to have the plot:

pip install git+git://github.com/mila-udem/blocks-extras.gitThe corresponding dataset is provided by DeepMind but if the script does not work (or you are tired of waiting) you can check this preprocessed version of the dataset by Kyunghyun Cho.

Running

Set the environment variable DATAPATH to the folder containing the DeepMind QA dataset. The training questions are expected to be in $DATAPATH/deepmind-qa/cnn/questions/training.

Run:

cp deepmind-qa/* $DATAPATH/deepmind-qa/This will copy our vocabulary list vocab.txt, which contains a subset of all the words appearing in the dataset.

To train a model (see list of models at the beginning of this file), run:

./train.py model_nameBe careful to set your THEANO_FLAGS correctly! For instance you might want to use THEANO_FLAGS=device=gpu0 if you have a GPU (highly recommended!)

Reference

Teaching Machines to Read and Comprehend, by Karl Moritz Hermann, Tomáš Kočiský, Edward Grefenstette, Lasse Espeholt, Will Kay, Mustafa Suleyman and Phil Blunsom, Neural Information Processing Systems, 2015.

Credits

Acknowledgments

We would like to thank the developers of Theano, Blocks and Fuel at MILA for their excellent work.

We thank Simon Lacoste-Julien from SIERRA team at INRIA, for providing us access to two Titan Black GPUs.